Abstract.

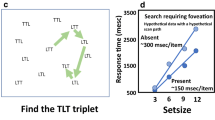

Many vision-based human-computer interaction systems rely on tracking user actions, for example gaze tracking, head tracking, or finger tracking. In this paper, we present a framework that employs no user tracking; instead, all interface components continuously observe and react to changes within a local neighborhood. More specifically, each component expects a predefined sequence of visual events called visual interface cues (VICs). VICs include color, texture, motion, and geometric elements, arranged to maximize the veridicality of the resulting interface element. A component is executed when this stream of cues has been satisfied. We present a general architecture for an interface system operating under the VIC-based HCI paradigm and then focus specifically on an appearance-based system in which a hidden Markov model (HMM) is employed to learn the gesture dynamics. Our implementation of the system recognizes a button push with a 96% success rate.
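The idea of a component that waits for an ordered stream of cues in its own local neighborhood can be illustrated with a small state machine. The following is only a minimal sketch under stated assumptions, not the system described in the paper: the class name `CueSequenceComponent`, the feature names (`motion`, `skin`, `shape`), and all thresholds are hypothetical, and fixed threshold tests stand in for the learned HMM that the paper uses to model gesture dynamics.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

# One observation = features measured in the component's local image region.
# The feature names used below are hypothetical placeholders.
Observation = Dict[str, float]
Cue = Callable[[Observation], bool]


@dataclass
class CueSequenceComponent:
    """Hypothetical interface component that fires after an ordered cue sequence."""

    cues: List[Cue]
    stage: int = 0      # index of the cue the component is currently waiting for
    triggered: int = 0  # number of times the full sequence has completed

    def __post_init__(self) -> None:
        if not self.cues:
            raise ValueError("at least one cue is required")

    def observe(self, obs: Observation) -> bool:
        """Feed one local observation; return True if the component fires."""
        if self.cues[self.stage](obs):
            # The pending cue is satisfied: move on to the next one.
            self.stage += 1
        elif self.stage > 0 and not self.cues[self.stage - 1](obs):
            # Neither the pending cue nor the last satisfied cue holds: start over.
            self.stage = 0
        if self.stage == len(self.cues):
            self.stage = 0
            self.triggered += 1
            return True
        return False


# A "button" that expects motion, then skin color, then a finger-like shape.
# All thresholds are illustrative assumptions.
button = CueSequenceComponent(cues=[
    lambda o: o["motion"] > 0.2,
    lambda o: o["skin"] > 0.5,
    lambda o: o["shape"] > 0.8,
])

frames = [
    {"motion": 0.0, "skin": 0.0, "shape": 0.0},  # idle
    {"motion": 0.5, "skin": 0.1, "shape": 0.0},  # something moves into the region
    {"motion": 0.4, "skin": 0.7, "shape": 0.2},  # it is skin colored
    {"motion": 0.1, "skin": 0.8, "shape": 0.9},  # it looks like a fingertip
]
results = [button.observe(f) for f in frames]
print(results)  # [False, False, False, True]
```

No hand or finger is tracked across the image here; the component only inspects its own region and advances when the next expected cue appears, which is the property the abstract emphasizes.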

Published online: 19 November 2004

Cite this article

Ye, G., Corso, J.J., Burschka, D. et al. VICs: A modular HCI framework using spatiotemporal dynamics. Machine Vision and Applications 16, 13–20 (2004). https://doi.org/10.1007/s00138-004-0159-0

DOI: https://doi.org/10.1007/s00138-004-0159-0