Abstract

We consider the damped Newton method for strongly monotone and Lipschitz continuous operator equations in a variational setting. We provide a very accessible justification why the undamped Newton method performs better than its damped counterparts in a vicinity of a solution. Moreover, in the given setting, an adaptive step-size strategy will be presented, which guarantees the global convergence and favours an undamped update if admissible.


1 Introduction

In these notes, we focus on the damped Newton method for strongly monotone and Lipschitz continuous operator equations on a real Hilbert space X with inner product \((\cdot ,\cdot )_X\) and induced norm \(\left\| \cdot \right\| _X\). Specifically, given a nonlinear operator \(\textsf{F}:X \rightarrow X'\), we focus on the equation

$$\begin{aligned} \textsf{F}(u)=0 \qquad \text {in} \ X'; \end{aligned}$$(1)

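here, \(X'\) denotes the dual space of X. In weak form, this problem is given by

$$\begin{aligned} \langle \textsf{F}(u),v\rangle =0 \qquad \text {for all} \ v \in X, \end{aligned}$$(2)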

where \(\langle \cdot ,\cdot \rangle \) signifies the duality pairing in \(X' \times X\). Throughout this work, we impose the following structural assumptions on the nonlinear operator \(\textsf{F}: X \rightarrow X'\):

(F1) The operator \(\textsf{F}\) is Lipschitz continuous; i.e., there exists a constant \(L >0\) such that

$$\begin{aligned} |\langle \textsf{F}(u)-\textsf{F}(v),w\rangle | \le L \left\| u-v\right\| _X \left\| w\right\| _X \qquad \text {for all} \ u,v,w \in X; \end{aligned}$$(3)

(F2) The operator \(\textsf{F}\) is strongly monotone; i.e., there exists a constant \(\nu >0\) such that

$$\begin{aligned} \langle \textsf{F}(u)-\textsf{F}(v),u-v\rangle \ge \nu \left\| u-v\right\| _X^2 \qquad \text {for all} \ u,v \in X. \end{aligned}$$(4)

Under these conditions, it is well known that the operator equation (1) has a unique solution \(u^\star \in X\); see, e.g., [11, Section 3.3] or [13, Section 25.4]. However, since \(\textsf{F}\) is assumed to be nonlinear, it is in general not feasible to solve for \(u^\star \in X\) exactly. As a remedy, one may apply an iteration scheme to obtain an approximation \(u^n\) of \(u^\star \). The most famous method to iteratively approximate a root of a nonlinear operator \(\textsf{F}\) is the Newton method, which is defined as follows: For a given initial guess \(u^0 \in X\), we define recursively

$$\begin{aligned} u^{n+1}=u^n-\textsf{F}'(u^n)^{-1}\textsf{F}(u^n), \qquad n \ge 0, \end{aligned}$$(5)

where \(\textsf{F}'(u^n):X \rightarrow X'\) denotes the Gateaux derivative of \(\textsf{F}\) at \(u^n \in X\). The main advantage of the Newton method over other iterative linearisation schemes is the local quadratic convergence rate. However, in many cases, the Newton scheme requires an initial guess close to a solution of the operator equation. To remedy the lack of global convergence, one may introduce a damping parameter. Then, the damped Newton method is given by

$$\begin{aligned} u^{n+1}=u^n-\delta (u^n)\textsf{F}'(u^n)^{-1}\textsf{F}(u^n), \qquad n \ge 0, \end{aligned}$$(6)

where \(\delta (u^n)>0\) is a damping parameter, which may depend on the given iterate. Often, it is not clear how to choose the damping parameter optimally. Moreover, choices that guarantee the global convergence a priori often entail an inferior convergence rate compared to the undamped scheme (5). For an extensive overview of Newton’s method for nonlinear problems, we refer the interested reader to [4], especially [4, Section 3] for its damped counterpart, and the references therein. Further and more recent works on the development of adaptive step-size strategies for the damped Newton method include [1, 2, 12].

The motivation of this work is to present an adaptive damped Newton method, which guarantees the global convergence, and, in a vicinity of a solution, exhibits the optimal quadratic convergence rate. By no means do we claim to present a state-of-the-art nonlinear solver for the problem considered herein. However, those superior solvers and adaptive step-size strategies are much more involved, whereas the presented scheme is easily accessible and fairly simple to implement. Finally, the algorithm presented herein can also be applied efficiently in combination with an adaptive finite element method. Indeed, the presented nonlinear solver fits into the framework of [6], and even with the adaptive damping strategy yields the optimal convergence rate with respect to the degrees of freedom and the overall computational costs; see [6, Thm. 4.3].

1.1 Contribution

The novelty of the present manuscript is to combine the convergence analysis of the Newton method from [8, Thm. 2.6] (see also [10, Cor. 2.7] for a closely related result) and the step-size strategies from [10, Section 3], which were developed in the context of the Kačanov iteration scheme. In particular, transferring the step-size strategy from [10, Section 3.1] to the setting of the damped Newton scheme leads to a simple justification why the classical Newton method is optimal in a neighbourhood of a solution. On the other hand, a variant of the adaptive step-size strategy from [10, Section 3.2] yields the global convergence combined with the local quadratic convergence rate, provided that an undamped step is admissible close to the solution.

2 Convergence of the damped Newton method

In many problems of scientific interest, X is an infinite dimensional space, but numerical computations are carried out on finite dimensional subspaces, e.g., finite element spaces. We note, however, that our analysis equally applies to closed subspaces of X—infinite and finite dimensional ones. In order to guarantee the global convergence of the damped Newton method, we need to impose some further assumptions on \(\textsf{F}\):

(F3) The operator \(\textsf{F}\) is Gateaux differentiable. Moreover, \(\textsf{F}'\) is uniformly coercive and bounded in the sense that, for any given \(u \in X\),

$$\begin{aligned} \langle \textsf{F}'(u)v,v\rangle \ge \alpha _{\textsf{F}'} \left\| v\right\| _X^2 \qquad \text {for all} \ v \in X \end{aligned}$$(7)

and

$$\begin{aligned} \langle \textsf{F}'(u)v,w\rangle \le \beta _{\textsf{F}'} \left\| v\right\| _X \left\| w\right\| _X \qquad \text {for all} \ v,w \in X, \end{aligned}$$(8)

where \(\alpha _{\textsf{F}'},\beta _{\textsf{F}'}>0\) are independent of u;

(F4) There exists a Gateaux differentiable functional \(\textsf{G}:X \rightarrow {\mathbb {R}}\) such that \(\textsf{G}'(u)=\textsf{F}'(u)u\) in \(X'\) for any \(u \in X\), and \(\textsf{G}':X \rightarrow X'\) is continuous with respect to the weak topology in \(X'\);

(F5) \(\textsf{F}\) is a potential operator; i.e., there exists a Gateaux differentiable functional \(\textsf{H}:X \rightarrow {\mathbb {R}}\) with \(\textsf{H}'=\textsf{F}\).

Then, the following convergence result holds.

Theorem 2.1

([8, Thm. 2.6]). Assume (F1)–(F5) and that \(\delta :X \rightarrow [\delta _{\min },\delta _{\max }]\) for some \(0<\delta _{\min }\le \delta _{\max }<\nicefrac {2 \alpha _{\textsf{F}'}}{L}\). Then, the damped Newton method (6) converges to the unique solution \(u^\star \in X\) of (1).

In many cases, we have that \(\nicefrac {2 \alpha _{\textsf{F}'}}{L}<1\). This, however, excludes the choice \(\delta (u^n)=1\), which yields the local quadratic convergence rate. By examining the proof of [8, Thm. 2.6], one realises that the specific upper bound on the damping parameter was imposed to guarantee the existence of a constant \(C_{\textsf{H}}>0\) such that

$$\begin{aligned} \textsf{H}(u^n)-\textsf{H}(u^{n+1}) \ge C_{\textsf{H}} \left\| u^{n}-u^{n+1}\right\| _X^2 \qquad \text {for all} \ n \ge 0; \end{aligned}$$(9)

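we also refer to the closely related work [6], especially Eq. (2.9) in that reference. Indeed, in the proof of [8, Thm. 2.6], it is shown that

$$\begin{aligned} \textsf{H}(u^n)-\textsf{H}(u^{n+1}) \ge \left( \frac{\alpha _{\textsf{F}'}}{\delta (u^n)}-\frac{L}{2}\right) \left\| u^{n}-u^{n+1}\right\| _X^2. \end{aligned}$$(10)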

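Consequently, given that \(\delta (u^n) \le \delta _{\max } < \nicefrac {2 \alpha _{\textsf{F}'}}{L}\), we have

$$\begin{aligned} \textsf{H}(u^n)-\textsf{H}(u^{n+1}) \ge \left( \frac{\alpha _{\textsf{F}'}}{\delta _{\max }}-\frac{L}{2}\right) \left\| u^{n}-u^{n+1}\right\| _X^2 = C_{\textsf{H}} \left\| u^{n}-u^{n+1}\right\| _X^2, \end{aligned}$$(11)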

where \(C_\textsf{H}:=\left( \frac{\alpha _{\textsf{F}'}}{\delta _{\max }}-\frac{L}{2}\right) >0\). By no means, however, is \(\delta (u^n) \le \delta _{\max } < \nicefrac {2 \alpha _{\textsf{F}'}}{L}\) a necessary condition for (9). The following generalised convergence theorem remains true, which can be easily verified by following along the lines of the proof of [8, Thm. 2.6].

Theorem 2.2

Given (F1)–(F5), for any damping strategy that guarantees \(\delta (u^n) \in [\delta _{\min },\delta _{\max }]\) and (9) for all \(n \in {\mathbb {N}}\), where \(0<\delta _{\min } \le \delta _{\max }<\infty \) and \(C_{\textsf{H}}>0\) are given constants independent of n, we have that \(u^n \rightarrow u^\star \) strongly in X as \(n \rightarrow \infty \).
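The energy decay estimate behind Theorems 2.1 and 2.2 can be checked numerically on a toy problem. The following is a minimal sketch, not taken from the paper: it assumes the hypothetical scalar model \(X={\mathbb {R}}\), \(\textsf{F}(u)=u+\tfrac{1}{2}\sin (u)\) with potential \(\textsf{H}(u)=\tfrac{1}{2}u^2-\tfrac{1}{2}\cos (u)\), for which \(\alpha _{\textsf{F}'}=\nicefrac {1}{2}\) and \(L=\nicefrac {3}{2}\), and a fixed step-size \(\delta =0.5<\nicefrac {2\alpha _{\textsf{F}'}}{L}\). In each step it compares the actual decay of \(\textsf{H}\) with the lower bound \(\left( \nicefrac {\alpha _{\textsf{F}'}}{\delta }-\nicefrac {L}{2}\right) |u^{n}-u^{n+1}|^2\).

```python
import math

# Hypothetical scalar model problem (an assumption for illustration only).
ALPHA = 0.5  # F'(u) = 1 + 0.5*cos(u) >= 0.5, coercivity constant
LIP = 1.5    # F'(u) <= 1.5, Lipschitz constant of F


def F(u):
    return u + 0.5 * math.sin(u)


def dF(u):
    return 1.0 + 0.5 * math.cos(u)


def H(u):
    # Potential of F, i.e. H'(u) = F(u).
    return 0.5 * u * u - 0.5 * math.cos(u)


def damped_newton(u0, delta, steps):
    """Damped Newton iteration with a fixed step-size delta.

    Returns the final iterate and, per step, the pair
    (energy decay, lower bound from the decay estimate).
    """
    u = u0
    history = []
    for _ in range(steps):
        u_new = u - delta * F(u) / dF(u)
        decay = H(u) - H(u_new)
        bound = (ALPHA / delta - LIP / 2.0) * (u_new - u) ** 2
        history.append((decay, bound))
        u = u_new
    return u, history


# delta = 0.5 is below the threshold 2*ALPHA/LIP = 2/3 of Theorem 2.1.
u_final, history = damped_newton(u0=3.0, delta=0.5, steps=60)
```

In this toy setting the iterates approach the root \(u^\star =0\) at a linear rate only, which is the price of the fixed step-size.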

3 Local optimality of the classical Newton scheme

Before we introduce our step-size strategy, which is borrowed from [10, Section 3.1], we first remark that the unique solution of the operator equation (1) is equally the unique minimiser of the potential \(\textsf{H}\), see [13, Thm. 25.F]. Furthermore, the damped Newton method can be reformulated as

$$\begin{aligned} u^{n+1}=u^n-\delta ^n \rho ^n, \end{aligned}$$(12)

where \(\delta ^n\) is the damping parameter and \(\rho ^n=\textsf{F}'(u^n)^{-1}\textsf{F}(u^n)\) is the undamped update at the given iterate. Equivalently, we have that

$$\begin{aligned} u^{n+1}-u^n=-\delta ^n \rho ^n. \end{aligned}$$(13)

Since we want to obtain the unique minimiser \(u^\star \in X\) of \(\textsf{H}\), a sensible strategy is to choose the step-size \(\delta ^n\) in (12) in such a way that, for given \(u^n \in X\), the difference

$$\begin{aligned} \textsf{H}(u^{n+1})-\textsf{H}(u^n) \end{aligned}$$(14)

is minimised. Indeed, this corresponds to a maximal decay of the potential at step \(n+1\). We now follow along the lines of [10, Section 3.1], where the strategy was originally developed, to the best of our knowledge, as a possible choice of the damping parameter of a modified Kačanov scheme. Let us recall that \(\textsf{H}\) is the potential of \(\textsf{F}\), and thus \(\textsf{H}'=\textsf{F}\). Consequently, thanks to the fundamental theorem of calculus, we have that

$$\begin{aligned} \textsf{H}(u^{n+1})-\textsf{H}(u^n)=\int _0^1 \langle \textsf{F}(u^{n}+t(u^{n+1}-u^n)),u^{n+1}-u^n\rangle \,{\textsf{d}} t. \end{aligned}$$(15)

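Let \(\psi (t):=\langle \textsf{F}(u^{n}+t(u^{n+1}-u^n)),u^{n+1}-u^n\rangle \) be the integrand of the right-hand side above. In view of (13) and the linearity of the dual product, the integrand \(\psi \) can be rewritten as

$$\begin{aligned} \psi (t)=-\delta ^n \langle \textsf{F}(u^{n}-t\delta ^n \rho ^n),\rho ^n\rangle . \end{aligned}$$(16)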

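In order to minimise (15), we employ a first order Taylor approximation to (16) at \(t=0\). Specifically, a straightforward calculation reveals that

$$\begin{aligned} \psi (t) \approx \psi (0)+t\psi '(0)=-\delta ^n \langle \textsf{F}(u^{n}),\rho ^n\rangle +t (\delta ^n)^2 \langle \textsf{F}'(u^n)\rho ^n,\rho ^n\rangle . \end{aligned}$$(17)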

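Plugging (17) into (15) and, subsequently, integrating from zero to one yields

$$\begin{aligned} \textsf{H}(u^{n+1})-\textsf{H}(u^n) \approx -\delta ^n \langle \textsf{F}(u^{n}),\rho ^n\rangle +\frac{(\delta ^n)^2}{2} \langle \textsf{F}'(u^n)\rho ^n,\rho ^n\rangle . \end{aligned}$$(18)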

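Then, since \(\textsf{F}'(u^n)\) is coercive, it follows immediately that the right-hand side of (18) is minimised for

$$\begin{aligned} \delta ^n=\frac{\langle \textsf{F}(u^{n}),\rho ^n\rangle }{\langle \textsf{F}'(u^n)\rho ^n,\rho ^n\rangle }=1; \end{aligned}$$(19)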

here, we used that \(\textsf{F}'(u^n)\rho ^n=\textsf{F}'(u^n)\textsf{F}'(u^n)^{-1}\textsf{F}(u^n)=\textsf{F}(u^n)\). Furthermore, the approximations (17) and (18), respectively, become more accurate the smaller \(\left\| \rho ^n\right\| _X\) gets, and thus especially in a neighbourhood of a solution. In particular, this means that \(\delta \equiv 1\) is indeed the optimal local damping parameter in a vicinity of the solution.
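The local optimality of \(\delta =1\) can be illustrated with a small experiment. The sketch below is an illustration under assumptions, not part of the original analysis: it uses the hypothetical scalar model \(\textsf{F}(u)=u+\tfrac{1}{2}\sin (u)\) with potential \(\textsf{H}(u)=\tfrac{1}{2}u^2-\tfrac{1}{2}\cos (u)\) and root \(u^\star =0\), and determines by a plain grid search the step-size that minimises \(\textsf{H}(u^n-\delta \rho ^n)\), once for an iterate close to the root and once for an iterate far away.

```python
import math


def F(u):
    # Hypothetical scalar operator (an assumption for illustration only).
    return u + 0.5 * math.sin(u)


def dF(u):
    return 1.0 + 0.5 * math.cos(u)


def H(u):
    # Potential of F, i.e. H'(u) = F(u).
    return 0.5 * u * u - 0.5 * math.cos(u)


def best_step(u, grid_size=2000):
    """Grid search over delta in {0.001, 0.002, ..., 2.000} for the
    step-size that gives the largest decay of the potential H."""
    rho = F(u) / dF(u)  # undamped Newton update at u
    deltas = [k / 1000.0 for k in range(1, grid_size + 1)]
    return min(deltas, key=lambda d: H(u - d * rho))


near = best_step(0.1)  # iterate close to the root u* = 0
far = best_step(3.0)   # iterate far from the root
```

In this toy setting the best step-size near the root is essentially one, whereas far from the root a substantially smaller step-size gives the larger energy decay.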

4 Adaptive damped Newton algorithm

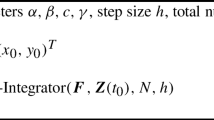

Now we shall present our adaptive damped Newton method, cf. Algorithm 1, which guarantees the global convergence and favours the step-size \(\delta ^n=1\), if admissible, leading to local quadratic convergence rate. This algorithm is closely related to [10, Algorithm 1].

Theorem 4.1

Given the assumptions (F1)–(F5), the sequence \(\{u^n\}\) generated by Algorithm 1 converges strongly to the unique solution \(u^\star \in X\) of the operator equation (1).

Proof

Let \(C_\textsf{H}:=\theta \min \{\alpha _{\textsf{F}'},L\}\). In the following, we shall distinguish two cases:

(I) If \(\nicefrac {\alpha _{\textsf{F}'}}{L} >1\), then the algorithm accepts, for every \(n \in {\mathbb {N}}\), the initial choice \(\delta ^n=1\). Indeed, we have that \(\left( \nicefrac {\alpha _{\textsf{F}'}}{1}-\nicefrac {L}{2}\right) \ge \nicefrac {L}{2} \ge \theta \min \{\alpha _{\textsf{F}'},L\}\), wherefore the statement in line 7 of Algorithm 1 is satisfied, thanks to (10), without any correction. In turn, the convergence follows from Theorem 2.2.

(II) Now let us presume that \(0<\delta _{\min }:=\nicefrac {\alpha _{\textsf{F}'}}{L} \le 1=:\delta _{\max }\). Thanks to Theorem 2.2, it only remains to prove (9). By line 7 of Algorithm 1, this amounts to verifying that the repeat-loop (lines 4–7) in Algorithm 1 terminates for each \(n \in {\mathbb {N}}\). By contradiction, assume that this was not the case; i.e., there exists \(n \in {\mathbb {N}}\) such that the statement in line 7 is never satisfied. For this specific \(n \in {\mathbb {N}}\), we have after finitely many steps that \(\delta ^n=\nicefrac {\alpha _{\textsf{F}'}}{L}\). Recalling (10), we find that

$$\begin{aligned} \textsf{H}(u^n)-\textsf{H}(u^{n+1})&\ge \left( \frac{\alpha _{\textsf{F}'}}{\delta ^n}-\frac{L}{2}\right) \left\| u^{n}-u^{n+1}\right\| _X^2 \\&=\frac{L}{2} \left\| u^{n}-u^{n+1}\right\| _X^2 \\&\ge C_{\textsf{H}} \left\| u^n-u^{n+1}\right\| _X^2; \end{aligned}$$

i.e., the repeat-loop terminates, which yields the desired contradiction.

\(\square \)
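The acceptance test used in the proof can be sketched in a few lines of code. The following is a hedged illustration and not a transcription of Algorithm 1: based on the proof, it assumes that each step starts with \(\delta ^n=1\), that a rejected step-size is replaced by \(\max \{\sigma \delta ^n,\nicefrac {\alpha _{\textsf{F}'}}{L}\}\), and that a step is accepted once \(\textsf{H}(u^n)-\textsf{H}(u^{n+1}) \ge \theta \min \{\alpha _{\textsf{F}'},L\}|u^{n}-u^{n+1}|^2\). The scalar model \(\textsf{F}(u)=u+\tfrac{1}{2}\sin (u)\), the tolerance, and all function names are hypothetical.

```python
import math

ALPHA, LIP = 0.5, 1.5    # constants of the hypothetical scalar model below
SIGMA, THETA = 0.8, 0.1  # reduction factor and acceptance parameter


def F(u):
    return u + 0.5 * math.sin(u)


def dF(u):
    return 1.0 + 0.5 * math.cos(u)


def H(u):
    # Potential of F, i.e. H'(u) = F(u).
    return 0.5 * u * u - 0.5 * math.cos(u)


def adaptive_damped_newton(u0, tol=1e-10, max_iter=100):
    """Damped Newton iteration with backtracking on the step-size.

    Assumed update rule (inferred from the proof, not from the
    algorithm listing): try delta = 1 first and shrink it by SIGMA,
    but never below ALPHA/LIP, until the energy decay test holds.
    """
    c_h = THETA * min(ALPHA, LIP)
    delta_min = ALPHA / LIP
    u = u0
    accepted = []
    for _ in range(max_iter):
        rho = F(u) / dF(u)  # undamped Newton update
        if abs(rho) <= tol:
            break
        delta = 1.0
        while True:
            u_new = u - delta * rho
            if H(u) - H(u_new) >= c_h * (u_new - u) ** 2:
                break  # sufficient energy decay: accept the step
            if delta <= delta_min:
                break  # floor reached; the decay estimate covers this case
            delta = max(SIGMA * delta, delta_min)
        accepted.append(delta)
        u = u_new
    return u, accepted


u_final, accepted = adaptive_damped_newton(u0=3.0)
```

For this starting value, the full step is rejected in the first iteration only; afterwards the undamped step is accepted throughout.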

Remark 4.2

Of course, Algorithm 1 could be further improved by taking into account the accepted damping parameter from the previous step. However, for simplicity of the presentation, we stick to our current algorithm.

Remark 4.3

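By modus operandi of Algorithm 1, we have that

$$\begin{aligned} \textsf{H}(u^n)-\textsf{H}(u^{n+1}) \ge C_{\textsf{H}} \left\| u^{n}-u^{n+1}\right\| _X^2 \qquad \text {for all} \ n \ge 0, \end{aligned}$$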

where \(C_\textsf{H}:=\theta \min \{\alpha _{\textsf{F}'},L\}>0\), and thus the proposed scheme fits into the framework of the iterative nonlinear solver of [6, Section 2]. Moreover, recalling the proof of Theorem 4.1, it is apparent that the number of repeat-loops (lines 4–7) in Algorithm 1 is uniformly bounded for \(n \in {\mathbb {N}}\). Consequently, we may replace the single iterative linearisation step in [6, Algorithm 1 (line 4)] with our nonlinear solver from Algorithm 1, and the convergence results of [6, Section 4] still remain valid. Most importantly, our suggested iterative nonlinear solver in combination with an adaptive finite element method may lead to a computational scheme, which converges to a solution of the original problem (1) with optimal rate with respect to the degrees of freedom as well as the overall computational time.

Remark 4.4

It shall be emphasised that, for the problem considered herein, there exist superior numerical schemes as well as more elaborate adaptive step-size strategies from nonlinear optimisation, such as the Armijo or the Wolfe-Powell rule; we refer the interested reader, e.g., to [5]. However, as mentioned at the beginning, the goal of this work is to present an easily accessible damped Newton method with guaranteed convergence and local quadratic convergence rate, which in addition is fairly simple to implement.

5 Application to quasilinear elliptic diffusion models

In the following, let \(X:=H^1_0(\Omega )\) denote the standard Sobolev space of \(H^1\)-functions on \(\Omega \) with zero trace along the boundary \(\Gamma :=\partial \Omega \), where \(\Omega \subset {\mathbb {R}}^d\), \(d \in {\mathbb {N}}\), is an open and bounded domain with Lipschitz boundary. The inner product and norm on X are defined by \((u,v)_X:=\int _\Omega \nabla u \cdot \nabla v \,{\textsf{d}} {\varvec{{\textsf{x}}}}\) and \(\left\| u\right\| _X^2=\int _\Omega |\nabla u|^2 \,{\textsf{d}} {\varvec{{\textsf{x}}}}\), respectively. We consider the quasilinear elliptic partial differential equation

$$\begin{aligned} \textsf{F}(u):=-\nabla \cdot \left\{ \mu (|\nabla u|^2)\nabla u\right\} -g=0 \qquad \text {in} \ X'; \end{aligned}$$(20)

here, \(\mu :{\mathbb {R}}_{\ge 0} \rightarrow {\mathbb {R}}_{\ge 0}\) is a diffusion coefficient and \(g \in H^{-1}(\Omega ) = X'\) a given source function. Models of the form (20) are widely applied in physics, for instance in plasticity and elasticity, as well as in hydro- and gas-dynamics. Moreover, they also served as our model problems in our previous and closely related works [6, 8, 9, 10].

To guarantee that the properties (F1)–(F5) are satisfied in the given setting, we need to impose the following assumptions on the diffusion coefficient:

(M1) The diffusion coefficient \(\mu :{\mathbb {R}}_{\ge 0} \rightarrow {\mathbb {R}}_{\ge 0}\) is continuously differentiable and monotonically decreasing; i.e., \(\mu '(t) \le 0\) for all \(t \ge 0\).

(M2) There exist positive constants \(0<m_\mu<M_{\mu }<\infty \) such that

$$\begin{aligned} m_\mu (t-s) \le \mu (t^2)t-\mu (s^2)s\le M_\mu (t-s) \qquad \text {for all} \ t \ge s \ge 0. \end{aligned}$$(21)

The assumption (M2) implies (F1) and (F2) with \(L=3 M_\mu \) and \(\nu =m_\mu \), respectively; see, e.g., [13, Proposition 25.26]. Furthermore, \(\textsf{F}\) is Gateaux differentiable and the derivative satisfies (7) and (8) with \(\alpha _{\textsf{F}'}=m_\mu \) and \(\beta _{\textsf{F}'}=2M_\mu -m_\mu \), respectively; we refer to the proof of [8, Proposition 5.3]. Note that, in the given setting, \(\nicefrac {2\alpha _{\textsf{F}'}}{L}=\nicefrac {2m_\mu }{3 M_{\mu }}<1\), and thus the convergence of the classical Newton method is not guaranteed a priori. For the verification of (F4), we refer to [8, Lemma 5.4] and the proof of [8, Proposition 5.3]. Finally, \(\textsf{F}\) has the potential

$$\begin{aligned} \textsf{H}(u):=\int _\Omega \psi (|\nabla u|^2) \,{\textsf{d}} {\varvec{{\textsf{x}}}}-\langle g,u\rangle , \qquad u \in X, \end{aligned}$$(22)

where \(\psi (s):=\frac{1}{2} \int _0^s \mu (t) \,{\textsf{d}} t\).

5.1 Numerical experiments

Now we run two experiments to test our adaptive algorithm. For that purpose, we discretise the continuous problem with a conforming P1-finite element method. Moreover, we compute an accurate approximation of the discrete solution with the Kačanov iteration scheme, see, e.g. [11, Section 4.5] or [13, Section 25.14], which is guaranteed to converge given the assumptions (M1) and (M2). In Algorithm 1, we set the parameters \(\sigma =0.8\) and \(\theta =0.1\) for both of our experiments.

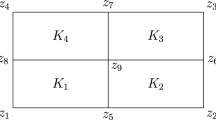

Experiment 5.1

In our first experiment, we consider the L-shaped domain \(\Omega :=(-1,1)^2 \setminus ([0,1] \times [0,1])\) and the diffusion coefficient \(\mu (t)=(t+1)^{-1}+\nicefrac {1}{2}\). It is straightforward to verify that \(\mu \) satisfies (M1) and (M2); specifically, it can be shown that \(m_\mu = \nicefrac {3}{8}\) and \(M_\mu =\nicefrac {3}{2}\). The source function g is chosen in such a way that the exact solution of the continuous problem is given by \(u^\star (x,y)=\sin (\pi x) \sin (\pi y)\), where \((x,y) \in {\mathbb {R}}^2\) denote the Euclidean coordinates. Finally, we consider the constant null function as our initial guess.
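The stated constants can be double-checked numerically. By the mean value theorem, the best constants in (21) are the infimum and supremum over \(t \ge 0\) of the derivative of \(\varphi (t):=\mu (t^2)t\); for the coefficient of this experiment, \(\varphi '(t)=\nicefrac {(1-t^2)}{(1+t^2)^2}+\nicefrac {1}{2}\). The following minimal sketch samples \(\varphi '\) on a uniform grid; the grid range \([0,50]\) and the spacing are ad hoc choices made for illustration.

```python
def phi_prime(t):
    """Derivative of phi(t) = mu(t^2) * t for mu(t) = 1/(t + 1) + 1/2."""
    return (1.0 - t * t) / (1.0 + t * t) ** 2 + 0.5


# Uniform grid on [0, 50] with spacing 1e-3 (ad hoc choice).
grid = [k * 1e-3 for k in range(50001)]
values = [phi_prime(t) for t in grid]

m_mu = min(values)  # approximates the lower constant in (M2)
M_mu = max(values)  # approximates the upper constant in (M2)
```

The minimum is attained near \(t=\sqrt{3}\) and the maximum at \(t=0\), in agreement with \(m_\mu =\nicefrac {3}{8}\) and \(M_\mu =\nicefrac {3}{2}\).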

Fig. 1 Experiment 5.1: Comparison of the adaptive damped Newton method and the damped Newton method with fixed step-size

In this experiment, we compare the performance of Algorithm 1 and the damped Newton method with fixed step-size \(\delta =\nicefrac {\alpha _{\textsf{F}'}}{L}=\nicefrac {m_\mu }{3 M_\mu }\). We can observe in Fig. 1 that, in the given setting, \(\delta \equiv 1\) is an admissible step-size and leads to a quadratic decay rate of the error \(\left\| u^n-u^\star \right\| _X\). In contrast, the fixed damping parameter \(\delta =\nicefrac {m_\mu }{3 M_\mu }\), which is chosen in accordance with Theorem 2.1, leads to a very poor convergence rate.

Experiment 5.2

In our second numerical test, we consider the square domain \(\Omega :=(0,1)^2\). Furthermore, our diffusion coefficient is given by

for some constants \(k,\gamma ,\zeta >0\); this function is based on the Bercovier–Engelman regularisation of the viscosity coefficient of a Bingham fluid, cf. [3]. Clearly, \(\mu \) is differentiable and decreasing. Furthermore, the assumption (M2) is satisfied with \(m_\mu =2 \zeta \) and \(M_\mu =2 \zeta + k \gamma \), see [7, Lemma 4.1]. For our experiment, we set \(\gamma =0.3\), \(\zeta =1\), and \(k=100\). For simplicity, we consider the same source function g as in the previous experiment and set \(u^0(x,y)=\sin (\pi x) \sin (\pi y)\), or more precisely its piecewise linear interpolation in the nodes of the mesh.

Here, we consider the adaptive damped Newton method from Algorithm 1, the damped Newton iteration with fixed step-size \(\delta = 0.2\) (which, however, does not guarantee the convergence a priori), and the classical Newton scheme. As can be observed in Fig. 2, the undamped scheme does not converge in the given setting. In contrast, and in accordance with the theory, the adaptive step-size strategy from Algorithm 1 leads to the convergence of the damped Newton method. Moreover, after a first phase of reduced step-sizes, and in turn of inferior decay rate, the damping parameter finally becomes one, which then leads to the local quadratic convergence rate. As a consequence, the adaptive damping scheme outperforms the Newton method with fixed step-size \(\delta =0.2\), which converges at a linear rate.

Fig. 2 Experiment 5.2: Comparison of the adaptive and fixed damped Newton methods and the classical Newton scheme

6 Conclusion

We have presented an adaptively damped Newton method, which converges, under suitable assumptions, for any initial guess to the unique solution in the context of strongly monotone and Lipschitz continuous operator equations. Moreover, our numerical experiments highlighted that this algorithm may indeed converge in situations where the classical Newton scheme fails, and, nonetheless, exhibits the favourable local quadratic convergence rate of the undamped method. Finally, we recall that the proposed nonlinear solver can be applied in combination with an adaptive finite element method to obtain a computational scheme, which converges to the continuous solution with optimal rate with respect to the overall computational time.

References

1. Amrein, M., Wihler, T.P.: An adaptive Newton-method based on a dynamical systems approach. Commun. Nonlinear Sci. Numer. Simul. 19(9), 2958–2973 (2014)

2. Amrein, M., Wihler, T.P.: Fully adaptive Newton–Galerkin methods for semilinear elliptic partial differential equations. SIAM J. Sci. Comput. 37(4), A1637–A1657 (2015)

3. Bercovier, M., Engelman, M.: A finite element method for incompressible non-Newtonian flows. J. Comput. Phys. 36(3), 313–326 (1980)

4. Deuflhard, P.: Newton Methods for Nonlinear Problems. Affine Invariance and Adaptive Algorithms. Springer Series in Computational Mathematics, vol. 35. Springer, Berlin (2004)

5. Geiger, C., Kanzow, C.: Theorie und Numerik restringierter Optimierungsaufgaben. Springer, Berlin (2002)

6. Heid, P., Praetorius, D., Wihler, T.P.: Energy contraction and optimal convergence of adaptive iterative linearized finite element methods. Comput. Methods Appl. Math. 21(2), 407–422 (2021)

7. Heid, P., Süli, E.: An adaptive iterative linearised finite element method for implicitly constituted incompressible fluid flow problems and its application to Bingham fluids. Appl. Numer. Math. 181, 364–387 (2022)

8. Heid, P., Wihler, T.P.: Adaptive iterative linearization Galerkin methods for nonlinear problems. Math. Comp. 89(326), 2707–2734 (2020)

9. Heid, P., Wihler, T.P.: On the convergence of adaptive iterative linearized Galerkin methods. Calcolo 57(3), Paper No. 24, 23 pp. (2020)

10. Heid, P., Wihler, T.P.: A modified Kačanov iteration scheme with application to quasilinear diffusion models. ESAIM Math. Model. Numer. Anal. 56(2), 433–450 (2022)

11. Nečas, J.: Introduction to the Theory of Nonlinear Elliptic Equations. John Wiley and Sons, New Jersey (1986)

12. Polyak, B., Tremba, A.: New versions of Newton method: step-size choice, convergence domain and under-determined equations. Optim. Methods Softw. 35(6), 1272–1303 (2020)

13. Zeidler, E.: Nonlinear Functional Analysis and Its Applications. II/B. Nonlinear Monotone Operators. Springer, New York (1990)

Funding

Open Access funding enabled and organized by Projekt DEAL.


Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Heid, P. A short note on an adaptive damped Newton method for strongly monotone and Lipschitz continuous operator equations. Arch. Math. 121, 55–65 (2023). https://doi.org/10.1007/s00013-023-01858-x


DOI: https://doi.org/10.1007/s00013-023-01858-x