Abstract

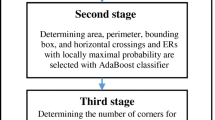

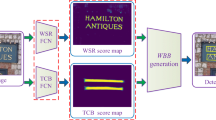

This paper proposes a three-stage framework for detecting text in a single image. First, a salient feature map of text is extracted using a Fully Convolutional Network (FCN), an architecture that performs well in semantic segmentation. To improve detection of the uneven boundaries of text regions, we propose combining labels from both word-level and character-level bounding boxes. Second, in the FCN feature map, text regions have higher probability values than background regions, and the coordinates within a character area lie very close to one another. We exploit these characteristics to separate the text area from the background area using Hierarchical Cluster Analysis (HCA). Finally, we apply a Convolutional Neural Network (CNN) to classify each candidate text area as text or non-text. The CNN distinguishes four classes in total: the background and three text classes (one character, two characters, and three or more characters). The proposed framework performs well on ICDAR 2015 and achieves particularly high recall, finding more text than other algorithms.
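The second and third stages can be illustrated with a small sketch. It is not the authors' implementation: it assumes the FCN output is available as a 2-D grid of text probabilities, uses naive single-linkage agglomerative clustering as one possible form of HCA, and relies on hypothetical names and values (`text_pixels`, `single_linkage`, `min_prob`, `max_gap`, and the class indices in `class_label`) chosen only for illustration.

```python
from itertools import combinations
from math import dist


def text_pixels(prob_map, min_prob=0.5):
    """Collect (row, col) coordinates whose text probability is at least min_prob.

    min_prob is an assumed threshold, not a value taken from the paper.
    """
    return [(r, c)
            for r, row in enumerate(prob_map)
            for c, p in enumerate(row)
            if p >= min_prob]


def single_linkage(points, max_gap=1.5):
    """Naive agglomerative clustering with single linkage.

    Repeatedly merges the two closest clusters and stops once the
    smallest gap between any two clusters exceeds max_gap (assumed value).
    """
    clusters = [[p] for p in points]
    while len(clusters) > 1:
        best = None
        for i, j in combinations(range(len(clusters)), 2):
            gap = min(dist(a, b) for a in clusters[i] for b in clusters[j])
            if best is None or gap < best[0]:
                best = (gap, i, j)
        gap, i, j = best
        if gap > max_gap:
            break
        clusters[i].extend(clusters[j])
        del clusters[j]  # safe because i < j
    return clusters


def bounding_box(cluster):
    """Return (min_row, min_col, max_row, max_col) for one cluster."""
    rows = [r for r, _ in cluster]
    cols = [c for _, c in cluster]
    return (min(rows), min(cols), max(rows), max(cols))


def class_label(num_chars):
    """Map a character count to one of four classes (indices are assumed):
    0 = background, 1 = one character, 2 = two characters, 3 = three or more.
    """
    return min(max(num_chars, 0), 3)


# Toy probability map with two well-separated high-probability regions.
prob_map = [
    [0.9, 0.8, 0.1, 0.0, 0.0],
    [0.7, 0.9, 0.0, 0.0, 0.0],
    [0.0, 0.1, 0.0, 0.8, 0.9],
    [0.0, 0.0, 0.0, 0.9, 0.7],
]
boxes = sorted(bounding_box(c) for c in single_linkage(text_pixels(prob_map)))
print(boxes)
```

On this toy map the two regions are more than `max_gap` pixels apart, so they end up as two separate candidate boxes. In the framework described above, each candidate would then be passed to the CNN for the four-class decision; `class_label` only shows how the four categories could be encoded.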

Additional information

Recommended by Associate Editor Kang-Hyun Jo under the direction of Editor Euntai Kim. This work was supported by a National Research Foundation of Korea (NRF) grant funded by the Korea government (MSIT) (2016R1A2B4007608), and a National IT Industry Promotion Agency (NIPA) grant funded by the Korea government (MSIT) (S0249-19-1019).

Hong-Hyun Kim received his B.S. degree from the School of Mechanical Engineering, Pusan National University, Busan, Korea, in 2012. He is currently enrolled in the unified Master’s and Doctoral course at the same graduate school. His research interests include deep learning, machine learning, and pattern recognition.

Jae-Ho Jo received his B.S. degree from the School of Computer Science and Engineering, Pusan National University, Busan, Korea, in 2014 and his M.S. degree in Mechanical Engineering from Pusan National University, Busan, Korea, in 2016. He is now a research engineer at the Hanwha Techwin R&D Center. His research interests include machine learning and computer vision.

Zhu Teng received her B.S. degree in Automation from Central South University, China, in 2006 and her Ph.D. degree in Mechanical Engineering from Pusan National University, Korea, in 2013. She is now an associate professor in the School of Computer and Information Technology, Beijing Jiaotong University. Her current research interests are visual tracking, deep learning, and computer vision.

Dong-Joong Kang received his B.S. degree in Precision Engineering from Pusan National University, Busan, Korea, in 1988 and his M.S. and Ph.D. degrees in Mechanical Engineering and in Automation & Design Engineering from KAIST, Korea, in 1990 and 1999, respectively. He is now a professor at the School of Mechanical Engineering, Pusan National University. He has also been an associate editor of the International Journal of Control, Automation, and Systems since 2007. His current research interests are machine vision, machine learning, and visual inspection in factories.

About this article

Cite this article

Kim, HH., Jo, JH., Teng, Z. et al. Text Detection with Deep Neural Network System Based on Overlapped Labels and a Hierarchical Segmentation of Feature Maps. Int. J. Control Autom. Syst. 17, 1599–1610 (2019). https://doi.org/10.1007/s12555-018-0578-8

DOI: https://doi.org/10.1007/s12555-018-0578-8