Abstract

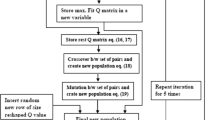

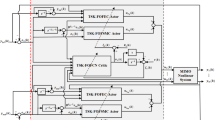

This work proposes Lyapunov-theory-based fuzzy/neural reinforcement learning (RL) controllers with guaranteed stability. We examine ways in which Lyapunov theory can be used to produce RL controllers whose control action is either hybrid or Lyapunov-constrained, resulting in self-learning controllers that are optimal, effective and stable. Fuzzy systems and neural networks have been used as generic function approximators to handle the exponential rise in computational burden that arises when RL is extended to high-dimensional or continuous state-action spaces. We propose two distinct approaches: (i) hybridized fuzzy Lyapunov RL control, which combines the fuzzy Q-learning methodology with a Lyapunov setting and thereby guarantees stability, and (ii) Lyapunov-constrained neural RL control, in which the controller's action set is constrained to satisfy the Lyapunov stability condition. Incorporating a Lyapunov-theory-based element into the action-generation mechanism of an RL-based controller guarantees stability. We apply our soft-computing-based Lyapunov RL to two benchmark nonlinear problems: (a) the inverted pendulum and (b) cart-pole balancing. The results obtained, and the associated comparison with baseline neural/fuzzy Q-learning controllers, bring out the advantages of our Lyapunov RL scheme.
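The Lyapunov-constrained idea in approach (ii) can be illustrated with a small tabular sketch. It is not the authors' neural controller: it swaps in a lookup-table Q-function and assumes a known one-step model of the pendulum (θ̈ = sin θ + u with unit parameters, integrated by explicit Euler), a hypothetical quadratic Lyapunov candidate V(θ, ω) = θ² + θω + ω², and a hypothetical five-level torque set. At each step only actions whose predicted next state lowers V are eligible, and Q-learning chooses and updates among those. All names (`admissible_actions`, `choose_action`, `q_update`, `run_episode`) are illustrative.

```python
import math
import random
from typing import Dict, List, Tuple

State = Tuple[float, float]  # (theta [rad] measured from upright, omega [rad/s])

ACTIONS: List[float] = [-2.0, -1.0, 0.0, 1.0, 2.0]  # hypothetical discrete torque set
DT = 0.05  # hypothetical control/integration step [s]
Q: Dict[Tuple[Tuple[int, int], float], float] = {}  # tabular Q-values, default 0.0


def step(x: State, u: float, dt: float = DT) -> State:
    """One explicit-Euler step of the assumed unit-parameter pendulum theta'' = sin(theta) + u."""
    theta, omega = x
    return theta + dt * omega, omega + dt * (math.sin(theta) + u)


def lyapunov(x: State) -> float:
    """Hypothetical positive-definite candidate V = theta^2 + theta*omega + omega^2."""
    theta, omega = x
    return theta * theta + theta * omega + omega * omega


def admissible_actions(x: State) -> List[float]:
    """Lyapunov-constrained action set: actions whose one-step prediction lowers V.

    If no action lowers V (e.g. at the equilibrium itself), fall back to the
    single action that minimises the predicted V.
    """
    v_now = lyapunov(x)
    allowed = [u for u in ACTIONS if lyapunov(step(x, u)) < v_now]
    return allowed or [min(ACTIONS, key=lambda u: lyapunov(step(x, u)))]


def discretize(x: State, bins: int = 21, limit: float = math.pi) -> Tuple[int, int]:
    """Clamp each state variable to [-limit, limit] and map it to one of `bins` cells."""
    def idx(v: float) -> int:
        v = max(-limit, min(limit, v))
        return int(round((v + limit) / (2 * limit) * (bins - 1)))
    return idx(x[0]), idx(x[1])


def choose_action(x: State, eps: float = 0.1, rng=random) -> float:
    """Epsilon-greedy selection restricted to the Lyapunov-admissible actions."""
    allowed = admissible_actions(x)
    if rng.random() < eps:
        return rng.choice(allowed)
    s = discretize(x)
    return max(allowed, key=lambda u: Q.get((s, u), 0.0))


def q_update(x: State, u: float, r: float, x_next: State,
             alpha: float = 0.2, gamma: float = 0.95) -> None:
    """One-step Q-learning update; the max also ranges over admissible actions only."""
    s, s_next = discretize(x), discretize(x_next)
    best_next = max(Q.get((s_next, a), 0.0) for a in admissible_actions(x_next))
    old = Q.get((s, u), 0.0)
    Q[(s, u)] = old + alpha * (r + gamma * best_next - old)


def run_episode(x0: State, steps: int = 200, rng=random) -> State:
    """Roll out one learning episode and return the final state."""
    x = x0
    for _ in range(steps):
        u = choose_action(x, rng=rng)
        x_next = step(x, u)
        q_update(x, u, -lyapunov(x_next), x_next)  # hypothetical reward: -V(x')
        x = x_next
    return x
```

For example, at θ = 0.5 rad with zero velocity only the two negative torques lower V, so the learner can never pick a destabilizing push. With such a coarse torque set the fallback branch can be reached near the equilibrium, so this toy example does not by itself establish the stability guarantee claimed above; it only shows where the Lyapunov condition enters action selection.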

References

[1] Levine J (2009) Analysis and control of nonlinear systems. Springer-Verlag, London

[2] Wiering M, Van Otterlo M (2012) Reinforcement learning: state-of-the-art. Adaptation, learning and optimization, vol 12. Springer, Berlin

[3] Busoniu L, Babuska R, De Schutter B, Ernst D (2010) Reinforcement learning and dynamic programming using function approximators. CRC Press, Boca Raton

[4] Kobayashi K, Mizoue H, Kuremoto T, Obayashi M (2009) A meta-learning method based on temporal difference error. In: ICONIP, Part 1, LNCS 5863, pp 530–537

[5] Kumar R, Nigam MJ, Sharma S, Bhavsar P (2012) Temporal difference based tuning of fuzzy logic controller through reinforcement learning to control an inverted pendulum. Int J Intell Syst Appl 9:15–21

[6] Ju X, Lian C, Zuo L, He H (2014) Kernel based approximate dynamic programming for real time online learning control: an experimental study. IEEE Trans Control Syst Technol 22(1):146–156

[7] Liu Q, Zhou X, Zhu F, Fu Q, Fu Y (2014) Experience replay for least squares policy iteration. IEEE/CAA J Autom Sin 1(3):274–281

[8] Vrabie D, Vamvoudakis KG (2013) Optimal adaptive control and differential games by reinforcement learning principles. IET Press, London

[9] Perkins TJ, Barto AG (2002) Lyapunov design for safe reinforcement learning. J Mach Learn Res 3:803–832

[10] Saxena R, Sharma R (2015) A hybrid Lyapunov fuzzy reinforcement learning controller. In: IEEE IndiaCom Conference, India, pp 423–427

[11] Kumar A, Sharma R (2015) A stable Lyapunov constrained reinforcement learning based neural controller for non linear systems. In: International Conference on Computing, Communication & Automation, pp 185–189

[12] Lin C-K (2009) H∞ reinforcement learning control of robot manipulators using fuzzy wavelet networks. Fuzzy Sets Syst 160:1765–1786

[13] Aguilar-Ibanez C (2008) A constructive Lyapunov function for controlling the inverted pendulum. In: American Control Conference, Westin Seattle Hotel, Seattle, Washington

[14] Sharma R, Gopal M (2006) Game-theoretic-reinforcement-adaptive neural controller for nonlinear systems. In: Proceedings of the 2006 American Control Conference, pp 2975–2980

[15] Mahajan A, Singh HP, Sukavanam N (2017) An unsupervised learning based neural network approach for a robotic manipulator. Int J Inf Technol 9:1–6

Appendix

From physics, we know that

$$\sum \tau = I\alpha$$

where ∑τ is the sum of the torques, I is the moment of inertia and α is the angular acceleration.
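As a worked instance of this relation, assume (these conventions are not taken from Fig. 16) a point mass m at the tip of a massless rigid rod of length L, the angle θ measured from the upright position, an applied control torque u and no friction. Then I = mL², and only the gravity component mg sin θ perpendicular to the rod produces a torque about the pivot, with lever arm L, so that

$$mL^{2}\ddot{\theta} = mgL\sin\theta + u \quad\Longrightarrow\quad \ddot{\theta} = \frac{g}{L}\sin\theta + \frac{u}{mL^{2}}$$

The positive sign on the sin θ term reflects that, with θ measured from the upright, gravity drives the pendulum away from θ = 0.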

From the free-body diagram (Fig. 16), it is clear that motion occurs only in the direction perpendicular to the rigid rod. We have:

Taking m, g and L (the mass of the pendulum, the acceleration due to gravity and the length of the pendulum, respectively) to be unity, as in [9], we get:
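To make the unit-parameter model concrete, the sketch below simulates it. It assumes, rather than takes from the figure, that θ is measured from the upright position, that u is an applied torque and that friction is neglected, so the equation of motion reduces to θ̈ = sin θ + u. The function names and the classical fourth-order Runge-Kutta integrator are illustrative choices, not the authors' code.

```python
import math
from typing import Callable, Tuple

State = Tuple[float, float]  # (theta [rad] from upright, omega [rad/s])


def pendulum_deriv(x: State, u: float) -> State:
    """Assumed unit-parameter dynamics: theta' = omega, omega' = sin(theta) + u."""
    theta, omega = x
    return omega, math.sin(theta) + u


def rk4_step(x: State, u: float, dt: float) -> State:
    """Classical fourth-order Runge-Kutta step with the torque u held constant over dt."""
    def shifted(a: State, k: State, h: float) -> State:
        return a[0] + h * k[0], a[1] + h * k[1]

    k1 = pendulum_deriv(x, u)
    k2 = pendulum_deriv(shifted(x, k1, dt / 2), u)
    k3 = pendulum_deriv(shifted(x, k2, dt / 2), u)
    k4 = pendulum_deriv(shifted(x, k3, dt), u)
    return (
        x[0] + dt / 6 * (k1[0] + 2 * k2[0] + 2 * k3[0] + k4[0]),
        x[1] + dt / 6 * (k1[1] + 2 * k2[1] + 2 * k3[1] + k4[1]),
    )


def simulate(x0: State, policy: Callable[[State], float],
             dt: float = 0.01, steps: int = 500) -> State:
    """Integrate the closed loop u = policy(x) for steps*dt seconds; return the final state."""
    x = x0
    for _ in range(steps):
        x = rk4_step(x, policy(x), dt)
    return x
```

With a zero-torque policy the upright state (0, 0) stays put, while an initial tilt of 0.1 rad grows until the pendulum falls, which is the open-loop instability the learned controllers must overcome.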

About this article

Cite this article

Kumar, A., Sharma, R. Neural/fuzzy self learning Lyapunov control for non linear systems. Int. j. inf. tecnol. 14, 229–242 (2022). https://doi.org/10.1007/s41870-017-0074-z

DOI: https://doi.org/10.1007/s41870-017-0074-z