Abstract

Introduction

The University of Manitoba’s ambulatory pediatric clerkship transitioned to daily encounter cards (DECs) from single in-training evaluation reports (ITERs). The impact of this change on quality of student assessment was unknown. Using the validated Completed Clinical Evaluation Report Rating (CCERR) scale, we compared the assessment quality of the single ITER to the DEC-based system.

Methods

Block randomization was used to select from a cohort of ITER- and DEC-based assessments during equivalent points in clerkship training. Data were transcribed, anonymized, and scored by two blinded raters using the CCERR.
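The abstract does not give the exact sampling procedure. As a minimal sketch, assuming the selection was stratified by rotation block so that both cohorts are sampled at equivalent points in training, it could look like the following. The record fields (`cohort`, `block`), the function name `sample_by_block`, and the sample sizes are hypothetical and are not taken from the study.

```python
import random
from collections import defaultdict


def sample_by_block(assessments, per_block, seed=0):
    """Draw up to `per_block` assessments at random from each (cohort, block) stratum."""
    rng = random.Random(seed)  # fixed seed so the draw is reproducible
    strata = defaultdict(list)
    for a in assessments:
        strata[(a["cohort"], a["block"])].append(a)

    selected = []
    for key in sorted(strata):
        pool = strata[key]
        k = min(per_block, len(pool))  # never ask for more than the stratum holds
        selected.extend(rng.sample(pool, k))
    return selected


# Hypothetical data: 2 cohorts x 3 rotation blocks x 5 assessments each.
records = [
    {"id": i, "cohort": cohort, "block": block}
    for i, (cohort, block) in enumerate(
        (c, b) for c in ("ITER", "DEC") for b in (1, 2, 3) for _ in range(5)
    )
]

chosen = sample_by_block(records, per_block=2)
```

Sampling the same number of assessments from each block in both cohorts keeps the comparison from being skewed by when in the clerkship year an assessment was written.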

Results

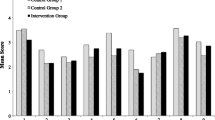

Inter-rater reliability for total CCERR scores was substantial (> 0.6). The mean total CCERR score for the DEC cohort was significantly higher than for the ITER cohort (25.2 vs. 16.8, p < 0.001), as were the mean scores for each item (2.81 vs. 1.86, p < 0.05). Multivariate logistic regression supported the significant influence of assessment method on assessment quality.
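The total and per-item figures can be related with simple arithmetic. The sketch below assumes the CCERR has nine items, each rated from 1 to 5, which is not stated in this abstract. Under that assumption the reported totals reproduce the reported per-item means to within rounding.

```python
# Assumption (not stated in the abstract): nine CCERR items, each rated 1 to 5.
N_ITEMS = 9
SCALE_MIDPOINT = 3


def per_item_mean(mean_total, n_items=N_ITEMS):
    """Convert a mean total score into a mean score per item."""
    return mean_total / n_items


dec_item = per_item_mean(25.2)   # reported per-item mean: 2.81
iter_item = per_item_mean(16.8)  # reported per-item mean: 1.86

# Total score that corresponds to a midpoint rating on every item.
midpoint_total = N_ITEMS * SCALE_MIDPOINT

print(f"DEC per-item mean:  {dec_item:.2f}")
print(f"ITER per-item mean: {iter_item:.2f}")
print(f"Midpoint total:     {midpoint_total}")
```

On this reading, the DEC mean total of 25.2 sits just below the midpoint total, while the ITER mean of 16.8 is well below it.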

Conclusions

The transition from an ITER-based system to a DEC-based system was associated with an improvement in the average quality of student assessments. However, mean CCERR scores for the DEC cohort rose only to an average level, which suggests an unmet need for faculty development.

Cite this article

Johnston, J., Pinsk, M. Daily Evaluation Cards Are Superior for Student Assessment Compared to Single Rater In-Training Evaluations. Med.Sci.Educ. 30, 203–209 (2020). https://doi.org/10.1007/s40670-019-00855-6

DOI: https://doi.org/10.1007/s40670-019-00855-6