Abstract

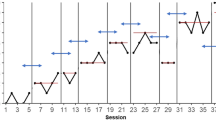

Multiple quantitative methods for single-case experimental design data have been applied to multiple-baseline, withdrawal, and reversal designs. The advanced data analytic techniques historically applied to single-case design data are primarily applicable to designs that involve clear sequential phases, such as repeated measurement during baseline and treatment phases, but these techniques may not be valid for alternating treatment design (ATD) data, where two or more treatments are rapidly alternated. Some recently proposed data analytic techniques applicable to ATDs are reviewed. For ATDs with random assignment of condition ordering, Edgington’s randomization test is one type of inferential statistical technique that can complement descriptive data analytic techniques for comparing data paths and for assessing the consistency of effects across blocks in which different conditions are being compared. In addition, several recently developed graphical representations are presented, alongside the commonly used time series line graph. The quantitative and graphical data analytic techniques are illustrated with two previously published data sets. Beyond discussing the potential advantages of each of these data analytic techniques, we reduce barriers to applying them by disseminating open access software to quantify or graph data from ATDs.
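The logic of a randomization test for an ATD can be sketched in a few lines. The example below is only an illustration under stated assumptions, not the software disseminated with the article: it assumes a randomized block design with two conditions (one measurement per condition in each block, order decided at random within each block), uses the mean difference as the test statistic, and relies on hypothetical data and a hypothetical function name (`block_randomization_test`). Other randomization schemes, such as restricting the number of consecutive administrations of the same condition, would require enumerating a different set of admissible sequences.

```python
from itertools import product


def block_randomization_test(blocks):
    """Exact randomization test for a two-condition randomized block design.

    blocks: list of (a, b) pairs, one pair per block, holding the measurement
    obtained under condition A and under condition B in that block.
    Returns the observed mean difference (A minus B) and a two-sided p value.
    """
    n = len(blocks)
    diffs = [a - b for a, b in blocks]
    observed = sum(diffs) / n

    # Under the null hypothesis, swapping the condition labels within a block
    # is equally likely, and a swap flips the sign of that block's difference.
    as_extreme = 0
    total = 0
    for signs in product((1, -1), repeat=n):
        stat = sum(s * d for s, d in zip(signs, diffs)) / n
        # Small tolerance guards against floating-point ties.
        if abs(stat) >= abs(observed) - 1e-12:
            as_extreme += 1
        total += 1
    return observed, as_extreme / total


# Hypothetical data: five blocks, one measurement per condition in each block.
example_blocks = [(7, 4), (8, 5), (6, 5), (9, 4), (7, 6)]
observed_diff, p_value = block_randomization_test(example_blocks)
print(observed_diff, p_value)
```

With only five blocks there are 2^5 = 32 possible assignments, so the smallest attainable two-sided p value is 2/32. This illustrates why the number of blocks planned in advance limits what the test can detect.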

Notes

“Single-case designs” (e.g., What Works Clearinghouse, 2020), “single-case experimental designs” (e.g., Smith, 2012), “single-case research designs” (e.g., Maggin et al., 2018), or “single-subject research designs” (e.g., Hammond & Gast, 2010) are terms often used interchangeably. Another possible term is “within-subject designs” (Greenwald, 1976), referring to the fact that in most cases the comparison is performed within the same individual, although in a multiple-baseline design across participants there is also a comparison across participants (Ferron et al., 2014).

Given the absence of phases, immediacy and variability are likely to have a different meaning in the ATD context than in multiple-baseline and ABAB designs. Regarding immediacy, an effect must be immediately visible if it is to be detected, because each condition lasts for only one or two consecutive measurement occasions. Variability within each condition, in turn, refers to measurements that are not adjacent in time.

For instance, Wolfe and McCammon (2020) reviewed instructional practices for behavior analysts and found that instruction on statistical analyses was scarce and that most calculations involved only nonoverlap indices. Likewise, in terms of summary measures and possibilities for meta-analysis, the second edition of the book by Riley-Tillman et al. (2020) differs from the first edition of 2009 only in that a few nonoverlap indices are mentioned; it refers neither to the between-case standardized mean difference (Shadish et al., 2014) nor to multilevel models (Van den Noortgate & Onghena, 2003).

For phase designs, several A-B comparisons can be represented on the same modified Brinley plot, because each A-B comparison is a single dot. However, for an ATD, there are multiple dots for each sequence (i.e., one dot for each block). Therefore, having several ATDs on the same modified Brinley plot can make the graphical representation more difficult to interpret.
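The one-dot-per-block idea can be made concrete with a small sketch. It assumes that each block contributes one measurement per condition, that the x coordinate is the value under one condition and the y coordinate is the value under the other, and that the diagonal y = x marks no difference. The function names and the data are hypothetical and only illustrate how the coordinates would be formed.

```python
def brinley_points(condition_a, condition_b):
    """Pair the measurements block by block: one (x, y) dot per block."""
    if len(condition_a) != len(condition_b):
        raise ValueError("each block needs one measurement per condition")
    return list(zip(condition_a, condition_b))


def summarize_against_diagonal(points):
    """Count dots above, on, and below the y = x line of no difference."""
    above = sum(1 for x, y in points if y > x)
    below = sum(1 for x, y in points if y < x)
    on = len(points) - above - below
    return {"above": above, "on": on, "below": below}


# Hypothetical ATD with five blocks.
condition_a = [4, 5, 5, 4, 6]
condition_b = [7, 8, 5, 9, 7]

points = brinley_points(condition_a, condition_b)
summary = summarize_against_diagonal(points)
print(points)
print(summary)
```

Because a single ATD already produces as many dots as it has blocks, overlaying several ATDs multiplies the number of dots, which is why the combined plot becomes harder to read.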

References

Barlow, D. H., & Hayes, S. C. (1979). Alternating treatments design: One strategy for comparing the effects of two treatments in a single subject. Journal of Applied Behavior Analysis, 12(2), 199–210. https://doi.org/10.1901/jaba.1979.12-199.

Barlow, D. H., Nock, M. K., & Hersen, M. (2009). Single case experimental designs: Strategies for studying behavior change (3rd ed.). Pearson.

Blampied, N. M. (2017). Analyzing therapeutic change using modified Brinley plots: History, construction, and interpretation. Behavior Therapy, 48(1), 115–127. https://doi.org/10.1016/j.beth.2016.09.002.

Branch, M. (2014). Malignant side effects of null-hypothesis significance testing. Theory & Psychology, 24(2), 256–277. https://doi.org/10.1177/0959354314525282.

Bulté, I., & Onghena, P. (2008). An R package for single-case randomization tests. Behavior Research Methods, 40(2), 467–478. https://doi.org/10.3758/BRM.40.2.467.

Cohen, J. (1990). Things I have learned (so far). American Psychologist, 45(12), 1304–1312. https://doi.org/10.1037/0003-066X.45.12.1304.

Cohen, J. (1994). The Earth is round (p < .05). American Psychologist, 49(12), 997–1003. https://doi.org/10.1037/0003-066X.49.12.997.

Cox, A., & Friedel, J. E. (2020). Toward an automation of functional analysis interpretation: A proof of concept. Behavior Modification. Advance online publication. https://doi.org/10.1177/0145445520969188

Craig, A. R., & Fisher, W. W. (2019). Randomization tests as alternative analysis methods for behavior-analytic data. Journal of the Experimental Analysis of Behavior, 111(2), 309–328. https://doi.org/10.1002/jeab.500.

Dart, E. H., & Radley, K. C. (2017). The impact of ordinate scaling on the visual analysis of single-case data. Journal of School Psychology, 63, 105–118. https://doi.org/10.1016/j.jsp.2017.03.008.

De, T. K., Michiels, B., Tanious, R., & Onghena, P. (2020). Handling missing data in randomization tests for single-case experiments: A simulation study. Behavior Research Methods, 52(3), 1355–1370. https://doi.org/10.3758/s13428-019-01320-3.

Dugard, P., File, P., & Todman, J. (2012). Single-case and small-n experimental designs: A practical guide to randomization tests (2nd ed.). Routledge.

Edgington, E. S. (1967). Statistical inference from N=1 experiments. Journal of Psychology, 65(2), 195–199. https://doi.org/10.1080/00223980.1967.10544864.

Edgington, E. S. (1975). Randomization tests for one-subject operant experiments. Journal of Psychology, 90(1), 57–68. https://doi.org/10.1080/00223980.1975.9923926.

Edgington, E. S. (1980). Validity of randomization tests for one-subject experiments. Journal of Educational Statistics, 5(3), 235–251. https://doi.org/10.3102/10769986005003235.

Edgington, E. S. (1996). Randomized single-subject experimental designs. Behaviour Research & Therapy, 34(7), 567–574. https://doi.org/10.1016/0005-7967(96)00012-5.

Edgington, E. S., & Onghena, P. (2007). Randomization tests (4th ed.). Chapman & Hall/CRC.

Fahmie, T. A., & Hanley, G. P. (2008). Progressing toward data intimacy: A review of within-session data analysis. Journal of Applied Behavior Analysis, 41(3), 319–331. https://doi.org/10.1901/jaba.2008.41-319.

Falligant, J. M., Kranak, M. P., Schmidt, J. D., & Rooker, G. W. (2020). Correspondence between fail-safe k and dual-criteria methods: Analysis of data series stability. Perspectives on Behavior Science, 43(2), 303–319. https://doi.org/10.1007/s40614-020-00255-x.

Ferron, J. M., Joo, S.-H., & Levin, J. R. (2017). A Monte Carlo evaluation of masked visual analysis in response-guided versus fixed-criteria multiple-baseline designs. Journal of Applied Behavior Analysis, 50(4), 701–716. https://doi.org/10.1002/jaba.410.

Ferron, J. M., Moeyaert, M., Van den Noortgate, W., & Beretvas, S. N. (2014). Estimating causal effects from multiple-baseline studies: Implications for design and analysis. Psychological Methods, 19(4), 493–510. https://doi.org/10.1037/a0037038.

Fisher, W. W., Kelley, M. E., & Lomas, J. E. (2003). Visual aids and structured criteria for improving visual inspection and interpretation of single-case designs. Journal of Applied Behavior Analysis, 36(3), 387–406. https://doi.org/10.1901/jaba.2003.36-387.

Fletcher, D., Boon, R. T., & Cihak, D. F. (2010). Effects of the TOUCHMATH program compared to a number line strategy to teach addition facts to middle school students with moderate intellectual disabilities. Education & Training in Autism & Developmental Disabilities, 45(3), 449–458. https://www.jstor.org/stable/23880117. Accessed 3 May 2021.

Gafurov, B. S., & Levin, J. R. (2020). ExPRT-Excel® package of randomization tests: Statistical analyses of single-case intervention data (Version 4.1, March 2020). Retrieved from https://ex-prt.weebly.com/. Accessed 3 May 2021.

Gigerenzer, G. (2004). Mindless statistics. Journal of Socio-Economics, 33(5), 587–606. https://doi.org/10.1016/j.socec.2004.09.033.

Greenwald, A. G. (1976). Within-subject designs: To use or not to use? Psychological Bulletin, 83(2), 314–320. https://doi.org/10.1037/0033-2909.83.2.314.

Guyatt, G. H., Keller, J. L., Jaeschke, R., Rosenbloom, D., Adachi, J. D., & Newhouse, M. T. (1990). The n-of-1 randomized controlled trial: Clinical usefulness. Our three-year experience. Annals of Internal Medicine, 112(4), 293–299. https://doi.org/10.7326/0003-4819-112-4-293.

Hagopian, L. P., Fisher, W. W., Thompson, R. H., Owen-DeSchryver, J., Iwata, B. A., & Wacker, D. P. (1997). Toward the development of structured criteria for interpretation of functional analysis data. Journal of Applied Behavior Analysis, 30(2), 313–326. https://doi.org/10.1901/jaba.1997.30-313.

Hall, S. S., Pollard, J. S., Monlux, K. D., & Baker, J. M. (2020). Interpreting functional analysis outcomes using automated nonparametric statistical analysis. Journal of Applied Behavior Analysis, 53(2), 1177–1191. https://doi.org/10.1002/jaba.689.

Hammond, D., & Gast, D. L. (2010). Descriptive analysis of single subject research designs: 1983–2007. Education & Training in Autism & Developmental Disabilities, 45(2), 187–202. https://www.jstor.org/stable/23879806. Accessed 3 May 2021.

Hammond, J. L., Iwata, B. A., Rooker, G. W., Fritz, J. N., & Bloom, S. E. (2013). Effects of fixed versus random condition sequencing during multielement functional analyses. Journal of Applied Behavior Analysis, 46(1), 22–30. https://doi.org/10.1002/jaba.7.

Hantula, D. A. (2019). Editorial: Replication and reliability in behavior science and behavior analysis: A call for a conversation. Perspectives on Behavior Science, 42(1), 1–11. https://doi.org/10.1007/s40614-019-00194-2.

Heyvaert, M., & Onghena, P. (2014). Randomization tests for single-case experiments: State of the art, state of the science, and state of the application. Journal of Contextual Behavioral Science, 3(1), 51–64. https://doi.org/10.1016/j.jcbs.2013.10.002.

Holcombe, A., Wolery, M., & Gast, D. L. (1994). Comparative single subject research: Description of designs and discussion of problems. Topics in Early Childhood Special Education, 14(1), 119–145. https://doi.org/10.1177/027112149401400111.

Horner, R. H., Carr, E. G., Halle, J., McGee, G., Odom, S., & Wolery, M. (2005). The use of single-subject research to identify evidence-based practice in special education. Exceptional Children, 71(2), 165–179. https://doi.org/10.1177/001440290507100203.

Horner, R. H., & Odom, S. L. (2014). Constructing single-case research designs: Logic and options. In T. R. Kratochwill & J. R. Levin (Eds.), Single-case intervention research: Methodological and statistical advances (pp. 27–51). American Psychological Association. https://doi.org/10.1037/14376-002.

Howick, J., Chalmers, I., Glasziou, P., Greenhalgh, T., Heneghan, C., Liberati, A., Moschetti, I., Phillips, B., Thornton, H., Goddard, O., & Hodgkinson, M. (2011). The 2011 Oxford CEBM Levels of Evidence. Oxford Centre for Evidence-Based Medicine. https://www.cebm.ox.ac.uk/resources/levels-of-evidence/ocebm-levels-of-evidence

Hua, Y., Hinzman, M., Yuan, C., & Balint Langel, K. (2020). Comparing the effects of two reading interventions using a randomized alternating treatment design. Exceptional Children, 86(4), 355–373. https://doi.org/10.1177/0014402919881357.

Iwata, B. A., Duncan, B. A., Zarcone, J. R., Lerman, D. C., & Shore, B. A. (1994). A sequential, test-control methodology for conducting functional analyses of self-injurious behavior. Behavior Modification, 18(3), 289–306. https://doi.org/10.1177/01454455940183003.

Jacobs, K. W. (2019). Replicability and randomization test logic in behavior analysis. Journal of the Experimental Analysis of Behavior, 111(2), 329–341. https://doi.org/10.1002/jeab.501.

Jenson, W. R., Clark, E., Kircher, J. C., & Kristjansson, S. D. (2007). Statistical reform: Evidence-based practice, meta-analyses, and single subject designs. Psychology in the Schools, 44(5), 483–493. https://doi.org/10.1002/pits.20240.

Johnson, A. H., & Cook, B. G. (2019). Preregistration in single-case design research. Exceptional Children, 86(1), 95–112. https://doi.org/10.1177/0014402919868529.

Kazdin, A. E. (1977). Assessing the clinical or applied importance of behavior change through social validation. Behavior Modification, 1(4), 427–452. https://doi.org/10.1177/014544557714001.

Kazdin, A. E. (2011). Single-case research designs: Methods for clinical and applied settings (2nd ed.). Oxford University Press.

Kennedy, C. H. (2005). Single-case designs for educational research. Pearson.

Killeen, P. R. (2005). An alternative to null hypothesis statistical tests. Psychological Science, 16(5), 345–353. https://doi.org/10.1111/j.0956-7976.2005.01538.x.

Kinney, C. E. L. (2020). A clarification of slope and scale. Behavior Modification. Advance online publication. https://doi.org/10.1177/0145445520953366.

Kranak, M. P., Falligant, J. M., & Hausman, N. L. (2021). Application of automated nonparametric statistical analysis in clinical contexts. Journal of Applied Behavior Analysis, 54(2), 824–833. https://doi.org/10.1002/jaba.789.

Kratochwill, T. R., Hitchcock, J. H., Horner, R. H., Levin, J. R., Odom, S. L., Rindskopf, D. M., & Shadish, W. R. (2013). Single-case intervention research design standards. Remedial & Special Education, 34(1), 26–38. https://doi.org/10.1177/0741932512452794.

Kratochwill, T. R., & Levin, J. R. (1980). On the applicability of various data analysis procedures to the simultaneous and alternating treatment designs in behavior therapy research. Behavioral Assessment, 2(4), 353–360.

Kratochwill, T. R., & Levin, J. R. (2010). Enhancing the scientific credibility of single-case intervention research: Randomization to the rescue. Psychological Methods, 15(2), 124–144. https://doi.org/10.1037/a0017736.

Krone, T., Boessen, R., Bijlsma, S., van Stokkum, R., Clabbers, N. D., & Pasman, W. J. (2020). The possibilities of the use of N-of-1 and do-it-yourself trials in nutritional research. PloS One, 15(5), e0232680. https://doi.org/10.1371/journal.pone.0232680.

Lane, J. D., & Gast, D. L. (2014). Visual analysis in single case experimental design studies: Brief review and guidelines. Neuropsychological Rehabilitation, 24(3–4), 445–463. https://doi.org/10.1080/09602011.2013.815636.

Lane, J. D., Ledford, J. R., & Gast, D. L. (2017). Single-case experimental design: Current standards and applications in occupational therapy. American Journal of Occupational Therapy, 71(2), 7102300010p1–7102300010p9. https://doi.org/10.5014/ajot.2017.022210.

Lanovaz, M., Cardinal, P., & Francis, M. (2019). Using a visual structured criterion for the analysis of alternating-treatment designs. Behavior Modification, 43(1), 115–131. https://doi.org/10.1177/0145445517739278.

Lanovaz, M. J., Huxley, S. C., & Dufour, M. M. (2017). Using the dual-criteria methods to supplement visual inspection: An analysis of nonsimulated data. Journal of Applied Behavior Analysis, 50(3), 662–667. https://doi.org/10.1002/jaba.394.

Laraway, S., Snycerski, S., Pradhan, S., & Huitema, B. E. (2019). An overview of scientific reproducibility: Consideration of relevant issues for behavior science/analysis. Perspectives on Behavior Science, 42(1), 33–57. https://doi.org/10.1007/s40614-019-00193-3.

Ledford, J. R. (2018). No randomization? No problem: Experimental control and random assignment in single case research. American Journal of Evaluation, 39(1), 71–90. https://doi.org/10.1177/1098214017723110.

Ledford, J. R., Barton, E. E., Severini, K. E., & Zimmerman, K. N. (2019). A primer on single-case research designs: Contemporary use and analysis. American Journal on Intellectual & Developmental Disabilities, 124(1), 35–56. https://doi.org/10.1352/1944-7558-124.1.35.

Ledford, J. R., & Gast, D. L. (2018). Combination and other designs. In D. L. Gast & J. R. Ledford (Eds.), Single case research methodology: Applications in special education and behavioral sciences (3rd ed., pp. 335–364). Routledge.

Levin, J. R., Ferron, J. M., & Gafurov, B. S. (2017). Additional comparisons of randomization-test procedures for single-case multiple-baseline designs: Alternative effect types. Journal of School Psychology, 63, 13–34. https://doi.org/10.1016/j.jsp.2017.02.003.

Levin, J. R., Ferron, J. M., & Gafurov, B. S. (2020). Investigation of single-case multiple-baseline randomization tests of trend and variability. Educational Psychology Review. Advance online publication. https://doi.org/10.1007/s10648-020-09549-7

Levin, J. R., Ferron, J. M., & Kratochwill, T. R. (2012). Nonparametric statistical tests for single-case systematic and randomized ABAB…AB and alternating treatment intervention designs: New developments, new directions. Journal of School Psychology, 50(5), 599–624. https://doi.org/10.1016/j.jsp.2012.05.001.

Levin, J. R., Kratochwill, T. R., & Ferron, J. M. (2019). Randomization procedures in single-case intervention research contexts: (Some of) “the rest of the story”. Journal of the Experimental Analysis of Behavior, 112(3), 334–348. https://doi.org/10.1002/jeab.558.

Lloyd, B. P., Finley, C. I., & Weaver, E. S. (2018). Experimental analysis of stereotypy with applications of nonparametric statistical tests for alternating treatments designs. Developmental Neurorehabilitation, 21(4), 212–222. https://doi.org/10.3109/17518423.2015.1091043.

Maas, E., Gildersleeve-Neumann, C., Jakielski, K., Kovacs, N., Stoeckel, R., Vradelis, H., & Welsh, M. (2019). Bang for your buck: A single-case experimental design study of practice amount and distribution in treatment for childhood apraxia of speech. Journal of Speech, Language, & Hearing Research, 62(9), 3160–3182. https://doi.org/10.1044/2019_JSLHR-S-18-0212.

Maggin, D. M., Cook, B. G., & Cook, L. (2018). Using single-case research designs to examine the effects of interventions in special education. Learning Disabilities Research & Practice, 33(4), 182–191. https://doi.org/10.1111/ldrp.12184.

Manolov, R. (2019). A simulation study on two analytical techniques for alternating treatments designs. Behavior Modification, 43(4), 544–563. https://doi.org/10.1177/0145445518777875.

Manolov, R., & Onghena, P. (2018). Analyzing data from single-case alternating treatments designs. Psychological Methods, 23(3), 480–504. https://doi.org/10.1037/met0000133.

Manolov, R., & Tanious, R. (2020). Assessing consistency in single-case data features using modified Brinley plots. Behavior Modification. Advance online publication. https://doi.org/10.1177/0145445520982969

Manolov, R., Tanious, R., De, T. K., & Onghena, P. (2020). Assessing consistency in single-case alternation designs. Behavior Modification. Advance online publication. https://doi.org/10.1177/0145445520923990

Manolov, R., & Vannest, K. (2019). A visual aid and objective rule encompassing the data features of visual analysis. Behavior Modification. Advance online publication. https://doi.org/10.1177/0145445519854323

Michiels, B., Heyvaert, M., Meulders, A., & Onghena, P. (2017). Confidence intervals for single-case effect size measures based on randomization test inversion. Behavior Research Methods, 49(1), 363–381. https://doi.org/10.3758/s13428-016-0714-4.

Michiels, B., & Onghena, P. (2019). Randomized single-case AB phase designs: Prospects and pitfalls. Behavior Research Methods, 51(6), 2454–2476. https://doi.org/10.3758/s13428-018-1084-x.

Moeyaert, M., Akhmedjanova, D., Ferron, J., Beretvas, S. N., & Van den Noortgate, W. (2020). Effect size estimation for combined single-case experimental designs. Evidence-Based Communication Assessment & Intervention, 14(1–2), 28–51. https://doi.org/10.1080/17489539.2020.1747146.

Moeyaert, M., Ugille, M., Ferron, J., Beretvas, S. N., & Van den Noortgate, W. (2014). The influence of the design matrix on treatment effect estimates in the quantitative analyses of single-case experimental designs research. Behavior Modification, 38(5), 665–704. https://doi.org/10.1177/0145445514535243.

Nickerson, R. S. (2000). Null hypothesis significance testing: A review of an old and continuing controversy. Psychological Methods, 5(2), 241–301. https://doi.org/10.1037/1082-989X.5.2.241.

Nikles, J., & Mitchell, G. (Eds.). (2015). The essential guide to N-of-1 trials in health. Springer.

Ninci, J. (2019). Single-case data analysis: A practitioner guide for accurate and reliable decisions. Behavior Modification. Advance online publication. https://doi.org/10.1177/0145445519867054

Ninci, J., Vannest, K. J., Willson, V., & Zhang, N. (2015). Interrater agreement between visual analysts of single-case data: A meta-analysis. Behavior Modification, 39(4), 510–541. https://doi.org/10.1177/0145445515581327.

Onghena, P. (2020). One by one: The design and analysis of replicated randomized single-case experiments. In R. van de Schoot & M. Miočević (Eds.), Small sample size solutions: A guide for applied researchers and practitioners (pp. 87–101). Routledge.

Onghena, P., & Edgington, E. S. (1994). Randomization tests for restricted alternating treatments designs. Behaviour Research & Therapy, 32(7), 783–786. https://doi.org/10.1016/0005-7967(94)90036-1.

Onghena, P., & Edgington, E. S. (2005). Customization of pain treatments: Single-case design and analysis. Clinical Journal of Pain, 21(1), 56–68. https://doi.org/10.1097/00002508-200501000-00007.

Onghena, P., Michiels, B., Jamshidi, L., Moeyaert, M., & Van den Noortgate, W. (2018). One by one: Accumulating evidence by using meta-analytical procedures for single-case experiments. Brain Impairment, 19(1), 33–58. https://doi.org/10.1017/BrImp.2017.25.

Perone, M. (1999). Statistical inference in behavior analysis: Experimental control is better. The Behavior Analyst, 22(2), 109–116. https://doi.org/10.1007/BF03391988.

Petursdottir, A. I., & Carr, J. E. (2018). Applying the taxonomy of validity threats from mainstream research design to single-case experiments in applied behavior analysis. Behavior Analysis in Practice, 11(3), 228–240. https://doi.org/10.1007/s40617-018-00294-6.

Pustejovsky, J. E., Swan, D. M., & English, K. W. (2019). An examination of measurement procedures and characteristics of baseline outcome data in single-case research. Behavior Modification. Advance online publication. https://doi.org/10.1177/0145445519864264

Radley, K. C., Dart, E. H., & Wright, S. J. (2018). The effect of data points per x- to y-axis ratio on visual analysts evaluation of single-case graphs. School Psychology Quarterly, 33(2), 314–322. https://doi.org/10.1037/spq0000243.

Riley-Tillman, T. C., Burns, M. K., & Kilgus, S. P. (2020). Evaluating educational interventions: Single-case design for measuring response to intervention (2nd ed.). Guilford Press.

Russell, S. M., & Reinecke, D. (2019). Mand acquisition across different teaching methodologies. Behavioral Interventions, 34(1), 127–135. https://doi.org/10.1002/bin.1643.

Shadish, W. R., Hedges, L. V., & Pustejovsky, J. E. (2014). Analysis and meta-analysis of single-case designs with a standardized mean difference statistic: A primer and applications. Journal of School Psychology, 52(2), 123–147. https://doi.org/10.1016/j.jsp.2013.11.005.

Shadish, W. R., Kyse, E. N., & Rindskopf, D. M. (2013). Analyzing data from single-case designs using multilevel models: New applications and some agenda items for future research. Psychological Methods, 18(3), 385–405. https://doi.org/10.1037/a0032964.

Shadish, W. R., & Sullivan, K. J. (2011). Characteristics of single-case designs used to assess intervention effects in 2008. Behavior Research Methods, 43(4), 971–980. https://doi.org/10.3758/s13428-011-0111-y.

Sidman, M. (1960). Tactics of scientific research. Basic Books.

Simmons, J. P., Nelson, L. D., & Simonsohn, U. (2011). False-positive psychology: Undisclosed flexibility in data collection and analysis allows presenting anything as significant. Psychological Science, 22(11), 1359–1366. https://doi.org/10.1177/0956797611417632.

Sjolie, G. M., Leveque, M. C., & Preston, J. L. (2016). Acquisition, retention, and generalization of rhotics with and without ultrasound visual feedback. Journal of Communication Disorders, 64, 62–77. https://doi.org/10.1016/j.jcomdis.2016.10.003.

Smith, J. D. (2012). Single-case experimental designs: A systematic review of published research and current standards. Psychological Methods, 17(4), 510–550. https://doi.org/10.1037/a0029312.

Solmi, F., Onghena, P., Salmaso, L., & Bulté, I. (2014). A permutation solution to test for treatment effects in alternation design single-case experiments. Communications in Statistics—Simulation & Computation, 43(5), 1094–1111. https://doi.org/10.1080/03610918.2012.725295.

Solomon, B. G. (2014). Violations of assumptions in school-based single-case data: Implications for the selection and interpretation of effect sizes. Behavior Modification, 38(4), 477–496. https://doi.org/10.1177/0145445513510931.

Tanious, R., & Onghena, P. (2020). A systematic review of applied single-case research published between 2016 and 2018: Study designs, randomization, data aspects, and data analysis. Behavior Research Methods. Advance online publication. https://doi.org/10.3758/s13428-020-01502-4

Tate, R. L., Perdices, M., Rosenkoetter, U., Shadish, W., Vohra, S., Barlow, D. H., Horner, R., Kazdin, A., Kratochwill, T. R., McDonald, S., Sampson, M., Shamseer, L., Togher, L., Albin, R., Backman, C., Douglas, J., Evans, J. J., Gast, D., Manolov, R., Mitchell, G., et al. (2016). The Single-Case Reporting guideline In BEhavioural interventions (SCRIBE) 2016 statement. Journal of School Psychology, 56, 133–142. https://doi.org/10.1016/j.jsp.2016.04.001.

Tate, R. L., Perdices, M., Rosenkoetter, U., Wakim, D., Godbee, K., Togher, L., & McDonald, S. (2013). Revision of a method quality rating scale for single-case experimental designs and n-of-1 trials: The 15-item Risk of Bias in N-of-1 Trials (RoBiNT) Scale. Neuropsychological Rehabilitation, 23(5), 619–638. https://doi.org/10.1080/09602011.2013.824383.

Thirumanickam, A., Raghavendra, P., McMillan, J. M., & van Steenbrugge, W. (2018). Effectiveness of video-based modelling to facilitate conversational turn taking of adolescents with autism spectrum disorder who use AAC. AAC: Augmentative & Alternative Communication, 34(4), 311–322. https://doi.org/10.1080/07434618.2018.1523948.

Van den Noortgate, W., & Onghena, P. (2003). Hierarchical linear models for the quantitative integration of effect sizes in single-case research. Behavior Research Methods, Instruments, & Computers, 35(1), 1–10. https://doi.org/10.3758/BF03195492.

Vannest, K. J., Parker, R. I., Davis, J. L., Soares, D. A., & Smith, S. L. (2012). The Theil–Sen slope for high-stakes decisions from progress monitoring. Behavioral Disorders, 37(4), 271–280. https://doi.org/10.1177/019874291203700406.

Vohra, S., Shamseer, L., Sampson, M., Bukutu, C., Schmid, C. H., Tate, R., Nikles, J., Zucker, D. R., Kravitz, R., Guyatt, G., Altman, D. G., & Moher, D. (2015). CONSORT extension for reporting N-of-1 trials (CENT) 2015 Statement. British Medical Journal, 350, h1738. https://doi.org/10.1136/bmj.h1738.

Weaver, E. S., & Lloyd, B. P. (2019). Randomization tests for single case designs with rapidly alternating conditions: An analysis of p-values from published experiments. Perspectives on Behavior Science, 42(3), 617–645. https://doi.org/10.1007/s40614-018-0165-6.

What Works Clearinghouse. (2020). What works clearinghouse standards handbook, version 4.1. U.S. Department of Education, Institute of Education Sciences, National Center for Education Evaluation & Regional Assistance. https://ies.ed.gov/ncee/wwc/handbooks. Accessed 3 May 2021.

Wicherts, J. M., Veldkamp, C. L., Augusteijn, H. E., Bakker, M., van Aert, R. C., & Van Assen, M. A. (2016). Degrees of freedom in planning, running, analyzing, and reporting psychological studies: A checklist to avoid p-hacking. Frontiers in Psychology, 7, 1–12. https://doi.org/10.3389/fpsyg.2016.01832.

Wilkinson, L., & The Task Force on Statistical Inference. (1999). Statistical methods in psychology journals: Guidelines and explanations. American Psychologist, 54(8), 594–604. https://doi.org/10.1037/0003-066X.54.8.594.

Wolery, M., Busick, M., Reichow, B., & Barton, E. E. (2010). Comparison of overlap methods for quantitatively synthesizing single-subject data. Journal of Special Education, 44(1), 18–29. https://doi.org/10.1177/0022466908328009.

Wolery, M., Gast, D. L., & Ledford, J. R. (2018). Comparative designs. In D. L. Gast & J. R. Ledford (Eds.), Single case research methodology: Applications in special education and behavioral sciences (3rd ed., pp. 283–334). Routledge.

Wolfe, K., & McCammon, M. N. (2020). The analysis of single-case research data: Current instructional practices. Journal of Behavioral Education. Advance online publication. https://doi.org/10.1007/s10864-020-09403-4

Wolfe, K., Seaman, M. A., Drasgow, E., & Sherlock, P. (2018). An evaluation of the agreement between the conservative dual-criterion method and expert visual analysis. Journal of Applied Behavior Analysis, 51(2), 345–351. https://doi.org/10.1002/jaba.453.

Zucker, D. R., Ruthazer, R., & Schmid, C. H. (2010). Individual (N-of-1) trials can be combined to give population comparative treatment effect estimates: Methodologic considerations. Journal of Clinical Epidemiology, 63(12), 1312–1323. https://doi.org/10.1016/j.jclinepi.2010.04.020.

Acknowledgements

The authors thank Joelle Fingerhut for reviewing a version of the manuscript and providing feedback on formal and style issues related to the English language.

Availability of data and material

The data used for the illustrations are available from https://osf.io/ks4p2/

Code availability (software application or custom code)

Several freely available software applications are mentioned in the text, but the underlying code for creating them has not been publicly shared.

Ethics declarations

Conflicts of interest

The authors report no conflicts of interest. Furthermore, the authors have no financial interest in any of the websites mentioned in the manuscript, as these are free to use and the authors do not generate revenue from their use.

About this article

Cite this article

Manolov, R., Tanious, R. & Onghena, P. Quantitative Techniques and Graphical Representations for Interpreting Results from Alternating Treatment Design. Perspect Behav Sci 45, 259–294 (2022). https://doi.org/10.1007/s40614-021-00289-9

DOI: https://doi.org/10.1007/s40614-021-00289-9