Abstract

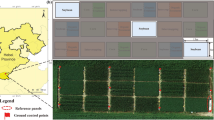

Consumer-grade cameras have emerged as a cost-effective alternative to conventional scientific cameras in precision agriculture applications. However, little information is available on their appropriate use and calibration. This study developed practical methods for determining optimal camera settings and for converting image digital numbers (DNs) to reflectance. Two Nikon D7100 and two Nikon D850 cameras with visible and near-infrared (NIR) sensitivity were deployed on both manned and unmanned aircraft for image acquisition. Camera settings, including exposure time and aperture, were optimized with an approach that considered flight parameters and image histograms. Linear regression and nonlinear regression with several candidate models were used to characterize the reflectance-DN relationship in all four bands (blue, green, red and NIR) based on seven calibration tarps. The results showed that the exponential model with vertical translation was the optimal model for reflectance conversion for both camera types. Using the optimized camera parameters and the optimal model type, this study analyzed the models and their root mean square errors (RMSE) derived from all 952 possible 2- to 6-tarp combinations across the four bands and both camera types. This analysis led to the selection of optimal tarp combinations, based on the desired level of accuracy, for each of the five multi-tarp configurations. As the number of tarps increased to 4, 5, or 6, the RMSE values stabilized for all bands, indicating that 4-tarp combinations were the optimal choice. These findings offer practical guidance to precision agriculture practitioners on configuring consumer-grade cameras effectively while ensuring accurate reflectance conversion.
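The count of 952 is consistent with one reading of the abstract: seven tarps give 119 subsets of size 2 to 6, and 119 subsets × 4 bands × 2 camera types = 952 models. The sketch below illustrates that bookkeeping together with an RMSE helper. It is a minimal illustration, not the authors' code. The functional form `reflectance = a * exp(b * DN) + c` is an assumed reading of "exponential model with vertical translation", and all function names, coefficients, and DN values are hypothetical.

```python
import math
from itertools import combinations

# Counts taken from the abstract; the identifiers themselves are illustrative.
N_TARPS = 7
BANDS = ("blue", "green", "red", "nir")
CAMERA_TYPES = ("D7100", "D850")


def tarp_combinations(n_tarps=N_TARPS, sizes=range(2, 7)):
    """Return every subset of tarp indices with 2 to 6 members."""
    return [combo for k in sizes for combo in combinations(range(n_tarps), k)]


def exp_with_offset(dn, a, b, c):
    """Assumed model form: reflectance = a * exp(b * dn) + c."""
    return a * math.exp(b * dn) + c


def rmse(predicted, observed):
    """Root mean square error between two equal-length sequences."""
    squared = [(p - o) ** 2 for p, o in zip(predicted, observed)]
    return math.sqrt(sum(squared) / len(squared))


combos = tarp_combinations()
n_models = len(combos) * len(BANDS) * len(CAMERA_TYPES)
print(len(combos), n_models)  # prints "119 952"

# Hypothetical coefficients and tarp DNs, chosen only to exercise the helpers.
a, b, c = 2.0, 0.01, 1.0
tarp_dns = [20, 45, 80, 120, 160, 200, 240]
observed = [exp_with_offset(dn, a, b, c) for dn in tarp_dns]

# A model that reproduces the observations exactly has zero error.
perfect_fit_error = rmse(observed, observed)
```

In a full workflow, each subset in `combos` would be used to fit the model coefficients for one band and camera type with a nonlinear least-squares routine, and the fitted model would then be scored with `rmse`. The fitting step is omitted here.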

Data availability

Data will be made available upon reasonable request.

Acknowledgements

The authors extend their gratitude to Fred Gomez and Andrew Garza for their assistance in image acquisition and ground control point collection.

Ethics declarations

Competing interests

The authors declare no conflicts of interest.

Cite this article

Yang, C., Fritz, B.K. & Suh, C.PC. Practical methods for aerial image acquisition and reflectance conversion using consumer-grade cameras on manned and unmanned aircraft. Precision Agric (2024). https://doi.org/10.1007/s11119-024-10145-w

DOI: https://doi.org/10.1007/s11119-024-10145-w