Abstract

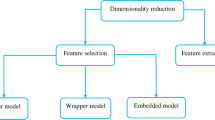

Feature selection (FS) is an essential step in machine learning problems: removing useless features from the data set can improve classification performance. FS is an NP-hard problem, so meta-heuristic algorithms can be used to find good solutions to it. The Horse herd Optimization Algorithm (HOA) is a new meta-heuristic approach inspired by the herding behavior of horses. In this paper, an improved binary version of HOA, called BHOA, is proposed as a wrapper-based FS method. To convert the continuous search space into a discrete one, S-shaped and V-shaped transfer functions are considered. Moreover, to control selection pressure and the balance between exploration and exploitation, the Power Distance Sums Scaling approach is used to scale the fitness values of the population. The efficiency of the proposed method is evaluated on 17 standard benchmark datasets. The experimental results show that the proposed method with the V-shaped family of transfer functions outperforms both the other transfer functions and other wrapper-based FS algorithms.
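
The abstract refers to S-shaped and V-shaped transfer functions for mapping a continuous search space onto bit strings (1 = feature kept). The sketch below shows the two rules as they are commonly defined in the binary-PSO literature. The specific functions (a sigmoid and \( |\tanh(x)| \)), the helper names, and the flip-based V-shaped update are assumptions for illustration, not the exact BHOA formulation.

```python
import math
import random


def s_shaped(x: float) -> float:
    """Sigmoid transfer function (assumed S-shaped form), computed stably."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)  # x < 0, so this cannot overflow
    return z / (1.0 + z)


def v_shaped(x: float) -> float:
    """|tanh(x)| transfer function (assumed V-shaped form)."""
    return abs(math.tanh(x))


def binarize_s(step, rng):
    """S-shaped rule (hypothetical helper): bit d becomes 1 with probability S(step[d])."""
    return [1 if rng.random() < s_shaped(x) else 0 for x in step]


def binarize_v(step, bits, rng):
    """V-shaped rule (hypothetical helper): flip bit d with probability V(step[d]), else keep it."""
    return [1 - b if rng.random() < v_shaped(x) else b
            for x, b in zip(step, bits)]


if __name__ == "__main__":
    rng = random.Random(42)           # fixed seed for a reproducible demo
    step = [-2.0, 0.0, 0.4, 3.5]      # made-up continuous values, one per feature
    bits = [1, 0, 1, 0]               # current feature subset (1 = feature kept)
    print("S-shaped:", binarize_s(step, rng))
    print("V-shaped:", binarize_v(step, bits, rng))
```

One design difference worth noting: the S-shaped rule sets each bit from scratch at every step, while the V-shaped rule only decides whether to flip the current bit, so a continuous value near zero leaves the feature subset unchanged.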

Data availability

Data sharing is not applicable to this article, as no datasets were generated or analyzed during the current study.

Funding

This research received no external funding.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendices

Appendix A

Reconsider Theorem 1.

Proof

The scaled fitness values of all particles in each population can be expressed as follows.

where \( N_{i^{-}} \) denotes the number of values \( fit_j \in fit_i^{-} \). The value of \( \lim_{\alpha \to \infty} Fit_{best} \) can be obtained as follows:

We know that \( \frac{(N-1)(fit_{best}-fit_{worst})}{(N-1)^{\infty}+(fit_{best}-fit_{worst})}=0 \), since the denominator grows without bound while the numerator is finite. (Here, a superscript \( \infty \) is shorthand for the limit of the corresponding power as \( \alpha \to \infty \).) Moreover, \( 1>\frac{N_{i^{-}}}{N-1}\ge 0 \). Thus, \( \left(\frac{N_{i^{-}}}{N-1}\right)^{\infty}=\frac{N_{i^{-}}^{\infty}}{(N-1)^{\infty}}=0 \).

Moreover, \( \frac{N_{i^{-}}^{\infty}}{(N-1)^{\infty}}\ge \frac{N_{i^{-}}^{\infty}-(fit_{best}-fit_i)}{(N-1)^{\infty}+(fit_{best}-fit_{worst})}\ge 0 \). Since the left-hand side equals 0, the squeeze theorem gives \( \frac{N_{i^{-}}^{\infty}-(fit_{best}-fit_i)}{(N-1)^{\infty}+(fit_{best}-fit_{worst})}=0 \).

Thus, \( \lim_{\alpha \to \infty} Fit_{best}=1 \).

On the other hand, we know that \( \sum_{i=1}^{N} Fit_i(t)=1 \). Thus, the scaled fitness values of all other individuals tend to 0.
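
Theorem 1 states that, as \( \alpha \to \infty \), all of the scaled fitness concentrates on the best individual. Because the Power Distance Sums Scaling formula itself is not reproduced in this section, the sketch below substitutes a plain power scaling, \( Fit_i = (fit_i / fit_{max})^{\alpha} / \sum_j (fit_j / fit_{max})^{\alpha} \), for strictly positive fitness values where larger is better. It is a stand-in that shares the same limiting behavior when the best value is unique, not the paper's method; the function name `power_scale` is hypothetical.

```python
def power_scale(fitness, alpha):
    """Stand-in power scaling (NOT the paper's PDSS formula).

    Assumes strictly positive fitness values where larger is better.
    Dividing by the maximum keeps every ratio in (0, 1], so a large alpha
    underflows toward 0 instead of overflowing.
    """
    if not fitness or any(f <= 0 for f in fitness):
        raise ValueError("expects a non-empty list of positive fitness values")
    f_max = max(fitness)
    weights = [(f / f_max) ** alpha for f in fitness]
    total = sum(weights)  # >= 1, because the best individual contributes 1.0
    return [w / total for w in weights]


if __name__ == "__main__":
    raw = [0.92, 0.88, 0.75, 0.60]  # made-up fitness values for illustration
    for alpha in (0.0, 1.0, 10.0, 200.0):
        scaled = power_scale(raw, alpha)
        print(alpha, [round(s, 4) for s in scaled])
```

With \( \alpha = 0 \) every individual receives \( 1/N \) (no selection pressure); increasing \( \alpha \) shifts weight toward the best individual, which is the trend Theorem 1 describes in the limit.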

Reconsider Theorem 2.

Proof

The scaled fitness values of all particles in each population can be expressed as follows:

where \( N_{i^{+}} \) denotes the number of values \( fit_j \in fit_i^{+} \). The rest of the proof follows the same steps as the proof of Theorem 1.

Appendix B

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Asghari Varzaneh, Z., Hosseini, S. & Javidi, M.M. A novel binary horse herd optimization algorithm for feature selection problem. Multimed Tools Appl 82, 40309–40343 (2023). https://doi.org/10.1007/s11042-023-15023-7

DOI: https://doi.org/10.1007/s11042-023-15023-7