Abstract

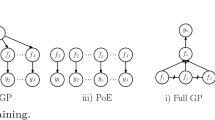

In many real-world optimization problems, observations are corrupted by heteroscedastic noise, that is, noise whose level depends on the input location. Bayesian optimization (BO) is an efficient approach for global optimization of black-box functions, but its performance with a Gaussian process (GP) model can degrade when the noise level varies, because the model assumes homoscedastic noise. A generalized product of experts (GPOE) model, in contrast, builds independent GP experts on subsets of the observations, each with its own set of hyperparameters, and is flexible enough to capture changing noise levels. In this paper we propose a heteroscedastic Bayesian optimization algorithm that combines the GPOE model with two modified versions of existing acquisition functions, which can represent and penalize heteroscedastic noise across the input space. We evaluate the GPOE-based BO (GPOEBO) model on six synthetic global optimization functions corrupted with heteroscedastic noise and on two real-world scientific datasets. The results show that GPOEBO improves accuracy compared with the other methods.

Additional experiment details

1.1 Comparing different data partitioning strategies for GP experts

To assess the effect of the data assignment strategy, we consider two strategies for data partitioning: random disjoint partitioning and k-means partitioning. In the random partitioning strategy, we partition the data \({\mathcal {D}}_{n}\) into \(\textit{M}\) subsets, where each expert is allocated a random subset of \(n_{i}\) data points drawn without replacement. This guarantees that each expert receives a unique set of data points, ensuring diversity across experts. The k-means allocation strategy aims to group data points with similar characteristics together, allowing experts to specialize in distinct data patterns. We use the k-means algorithm to identify \(\textit{M}\) cluster centers, one per expert. Then, for each cluster center, we query the BallTree to identify its \(n_{i}\) nearest data points, which are assigned to the corresponding i-th expert.
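The two allocation strategies described above can be sketched as follows. This is a minimal illustration, not the authors' implementation: the function names, the toy data, and the choice of \(M = 4\) experts with \(n_{i} = 10\) points each are assumptions made for the example. To keep the sketch dependent on NumPy only, a plain Lloyd's k-means and a brute-force nearest-neighbour search stand in for the k-means routine and the BallTree query mentioned in the text; an exact BallTree query would select the same nearest points, up to ties.

```python
import numpy as np

def random_partition(n, n_experts, rng):
    """Split indices 0..n-1 into n_experts disjoint random subsets."""
    perm = rng.permutation(n)  # shuffling = sampling without replacement
    return np.array_split(perm, n_experts)

def kmeans_centers(X, n_experts, rng, n_iter=50):
    """Plain Lloyd's k-means (a stand-in for a library k-means routine)."""
    centers = X[rng.choice(len(X), size=n_experts, replace=False)].copy()
    for _ in range(n_iter):
        # Squared distances from every point to every center, shape (n, M).
        d2 = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = d2.argmin(axis=1)
        for j in range(n_experts):
            members = X[labels == j]
            if len(members) > 0:  # keep the old center if a cluster empties
                centers[j] = members.mean(axis=0)
    return centers

def kmeans_partition(X, n_experts, points_per_expert, rng):
    """Assign each expert the points_per_expert points nearest its center.

    Brute-force search is used here in place of a BallTree query.
    """
    centers = kmeans_centers(X, n_experts, rng)
    # Squared distances from every center to every point, shape (M, n).
    d2 = ((centers[:, None, :] - X[None, :, :]) ** 2).sum(axis=2)
    return [np.argsort(d2[j])[:points_per_expert] for j in range(n_experts)]

# Hypothetical toy data: 40 points in 2D, M = 4 experts, n_i = 10 points each.
rng = np.random.default_rng(0)
X = rng.uniform(size=(40, 2))
M, n_i = 4, 10
random_subsets = random_partition(len(X), M, rng)
kmeans_subsets = kmeans_partition(X, M, n_i, rng)
```

In this sketch the random subsets are disjoint and together cover every observation, whereas the nearest-neighbour subsets around the k-means centers may overlap or leave some points unassigned.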

To find the best-performing data partitioning strategy for PoE and GPOE, we use the same experimental parameters as in Sect. 4.1. Table 2 shows the optimization results for the random and k-means data partitioning strategies. Overall, the GPOE model with random data partitioning performs better on lower-dimensional functions, while k-means outperforms random partitioning only on the higher-dimensional functions (Hartmann6D and Sphere).

1.2 Performance sensitivity to the number of points per expert

The optimization performance of the expert models varies with the number of points assigned per expert. Fig. 5 shows the effect of the number of data points per expert on optimization performance. The effect differs between functions, but overall performance improves (the absolute error gets closer to zero) as the number of points per expert increases. Detailed results are provided in Tables 3, 4 and 5.

About this article

Cite this article

Tautvaišas, S., Žilinskas, J. Heteroscedastic Bayesian optimization using generalized product of experts. J Glob Optim (2023). https://doi.org/10.1007/s10898-023-01333-5

DOI: https://doi.org/10.1007/s10898-023-01333-5