Abstract

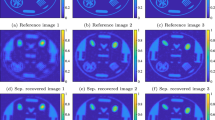

Fluoroscopy is an imaging technique that uses X-rays to obtain a real-time 2D video of the interior of a 3D object, helping surgeons observe pathological structures and tissue functions, especially during interventions. However, it suffers from heavy noise, which arises mainly from the clinical use of low-dose X-rays, and this makes fluoroscopy denoising necessary. Such denoising is challenged by the relative motion between the object being imaged and the X-ray imaging system. We tackle this challenge by proposing a self-supervised, three-stage framework that exploits the domain knowledge of fluoroscopy imaging. (i) Stabilize: we first construct a dynamic panorama based on optical flow calculation to stabilize the non-stationary background induced by the motion of the X-ray detector. (ii) Decompose: we then propose a novel mask-based Robust Principal Component Analysis (RPCA) decomposition method to separate a video with detector motion into a low-rank background and a sparse foreground. Such a decomposition accommodates the reading habits of experts. (iii) Denoise: we finally denoise the background and foreground separately with a self-supervised learning strategy and fuse the denoised parts into the final output via a bilateral, spatiotemporal filter. To assess the effectiveness of our work, we curate a dedicated fluoroscopy dataset of 27 videos (1,568 frames) with corresponding ground truth. Our experiments demonstrate that the framework achieves significant improvements in denoising and enhancement over standard approaches. Finally, expert ratings confirm this efficacy.
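To make the Decompose stage more concrete, the following is a minimal sketch of classical RPCA via principal component pursuit (Candès et al., 2011), which splits a matrix whose columns are vectorized frames into a low-rank background and a sparse foreground. It is not the mask-based variant proposed in this paper, and it assumes the frames have already been stabilized. The function names (`soft_threshold`, `svd_threshold`, `rpca_pcp`) and the default values for `lam` and `mu` are illustrative assumptions that follow common choices in the RPCA literature.

```python
import numpy as np


def soft_threshold(x, tau):
    """Elementwise shrinkage: the proximal operator of the L1 norm."""
    return np.sign(x) * np.maximum(np.abs(x) - tau, 0.0)


def svd_threshold(x, tau):
    """Singular value shrinkage: the proximal operator of the nuclear norm."""
    u, sv, vt = np.linalg.svd(x, full_matrices=False)
    return (u * soft_threshold(sv, tau)) @ vt


def rpca_pcp(m, lam=None, mu=None, tol=1e-7, max_iter=500):
    """Split m into a low-rank part l and a sparse part s with l + s ~= m.

    Standard principal component pursuit solved by an augmented Lagrangian
    scheme; NOT the mask-based method of the paper.
    """
    m = np.asarray(m, dtype=float)
    n1, n2 = m.shape
    # Assumed defaults, taken from common practice rather than from this paper.
    lam = 1.0 / np.sqrt(max(n1, n2)) if lam is None else lam
    mu = n1 * n2 / (4.0 * np.abs(m).sum()) if mu is None else mu

    l = np.zeros_like(m)
    s = np.zeros_like(m)
    y = np.zeros_like(m)  # Lagrange multiplier for the constraint l + s = m
    norm_m = np.linalg.norm(m)

    for _ in range(max_iter):
        l = svd_threshold(m - s + y / mu, 1.0 / mu)   # update low-rank part
        s = soft_threshold(m - l + y / mu, lam / mu)  # update sparse part
        residual = m - l - s
        y = y + mu * residual
        if np.linalg.norm(residual) <= tol * norm_m:
            break
    return l, s


# Toy usage: 40 synthetic "frames" of 60 pixels each, stored as columns.
rng = np.random.default_rng(0)
background = np.outer(rng.standard_normal(60), rng.standard_normal(40))
foreground = np.zeros((60, 40))
moving = rng.random((60, 40)) < 0.05          # about 5% of entries are foreground
foreground[moving] = rng.uniform(-5.0, 5.0, moving.sum())
video = background + foreground

low_rank, sparse = rpca_pcp(video)
```

In this toy setup the static background is rank one, so it lands in `low_rank`, while the few large, isolated entries land in `sparse`. Real fluoroscopy with detector motion violates the static-background assumption, which is the motivation the abstract gives for stabilizing before decomposing.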




Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Liu, R., Ma, Q., Cheng, Z., Lyu, Y., Wang, J., Zhou, S.K. (2022). Stabilize, Decompose, and Denoise: Self-supervised Fluoroscopy Denoising. In: Wang, L., Dou, Q., Fletcher, P.T., Speidel, S., Li, S. (eds) Medical Image Computing and Computer Assisted Intervention – MICCAI 2022. MICCAI 2022. Lecture Notes in Computer Science, vol 13438. Springer, Cham. https://doi.org/10.1007/978-3-031-16452-1_2


DOI: https://doi.org/10.1007/978-3-031-16452-1_2


Publisher Name: Springer, Cham

Print ISBN: 978-3-031-16451-4

Online ISBN: 978-3-031-16452-1

eBook Packages: Computer Science, Computer Science (R0)