Abstract

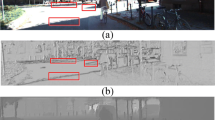

Depth completion from a sparse set of depth measurements and a single RGB image has been shown to be an effective way to generate high-quality depth images. However, traditional convolutional neural network methods tend to produce their output by interpolating and replicating the surrounding depth values. This underutilization of the sparse measurements leads to blurred boundaries and a loss of structural information. To further improve the accuracy of depth completion, we extend the original U-shaped network with self-attention convolution, which extracts more useful information from the sparse depth measurements. Experimental results on the NYUv2 depth dataset validate the effectiveness of self-attention convolution within the U-Net architecture: the proposed method improves accuracy by 16.9% compared with the original U-Net.
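The abstract does not specify how the self-attention convolution is built. The sketch below is a hedged illustration only. It assumes a gated form in the spirit of gated linear units (Dauphin et al., 2017). One convolution produces features, and a second convolution over the same input produces a per-pixel weight through a sigmoid. The two are multiplied so the layer can learn to emphasise valid sparse measurements and suppress empty pixels. Several details are hypothetical: the names `conv2d_same` and `gated_conv`, the kernel values, and the ReLU on the feature branch. A real network would use multi-channel learned kernels inside each U-Net stage rather than hand-set single-channel weights.

```python
import math
from typing import List

Grid = List[List[float]]  # a single-channel H x W map, row-major


def conv2d_same(x: Grid, kernel: Grid, bias: float = 0.0) -> Grid:
    """Single-channel 2-D cross-correlation with zero padding, so the output
    keeps the input's height and width (odd kernel sizes assumed)."""
    h, w = len(x), len(x[0])
    kh, kw = len(kernel), len(kernel[0])
    ph, pw = kh // 2, kw // 2
    out: Grid = []
    for i in range(h):
        row = []
        for j in range(w):
            acc = bias
            for a in range(kh):
                for b in range(kw):
                    r, c = i + a - ph, j + b - pw
                    if 0 <= r < h and 0 <= c < w:  # zero padding outside the map
                        acc += kernel[a][b] * x[r][c]
            row.append(acc)
        out.append(row)
    return out


def sigmoid(v: float) -> float:
    """Numerically stable logistic function."""
    if v >= 0:
        return 1.0 / (1.0 + math.exp(-v))
    e = math.exp(v)
    return e / (1.0 + e)


def gated_conv(x: Grid, k_feat: Grid, k_gate: Grid,
               b_feat: float = 0.0, b_gate: float = 0.0) -> Grid:
    """Hypothetical attention-style layer (assumed gated form): a ReLU feature
    branch is re-weighted pixel by pixel by a sigmoid gate from the same input."""
    feat = conv2d_same(x, k_feat, b_feat)
    gate = conv2d_same(x, k_gate, b_gate)
    return [
        [max(0.0, f) * sigmoid(g) for f, g in zip(f_row, g_row)]
        for f_row, g_row in zip(feat, gate)
    ]


if __name__ == "__main__":
    # Toy 5x5 sparse depth map in metres; 0.0 marks pixels with no measurement.
    sparse_depth = [
        [0.0, 0.0, 2.1, 0.0, 0.0],
        [0.0, 0.0, 0.0, 0.0, 0.0],
        [1.8, 0.0, 0.0, 0.0, 2.5],
        [0.0, 0.0, 0.0, 0.0, 0.0],
        [0.0, 0.0, 2.0, 0.0, 0.0],
    ]
    box = [[1.0 / 9.0] * 3 for _ in range(3)]  # hypothetical feature weights
    lap = [[0.0, -1.0, 0.0], [-1.0, 4.0, -1.0], [0.0, -1.0, 0.0]]  # hypothetical gate weights
    for row in gated_conv(sparse_depth, box, lap):
        print(" ".join(f"{v:.3f}" for v in row))
```

Because the gate passes through a sigmoid, each output pixel is the feature response scaled by a factor between 0 and 1. A strongly negative gate response drives the pixel towards zero. This is one way an attention-like layer can down-weight regions that carry no depth samples, instead of blindly interpolating from them.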

Acknowledgment

This research was financially supported by the National Natural Science Foundation of China (Grant No. 41774027).

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Zhao, T., Pan, S., Zhang, H. (2022). Self-attention Convolution for Sparse to Dense Depth Completion. In: Sharma, H., Vyas, V.K., Pandey, R.K., Prasad, M. (eds) Proceedings of the International Conference on Intelligent Vision and Computing (ICIVC 2021). ICIVC 2021. Proceedings in Adaptation, Learning and Optimization, vol 15. Springer, Cham. https://doi.org/10.1007/978-3-030-97196-0_9

DOI: https://doi.org/10.1007/978-3-030-97196-0_9

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-97195-3

Online ISBN: 978-3-030-97196-0

eBook Packages: Intelligent Technologies and Robotics, Intelligent Technologies and Robotics (R0)