Abstract

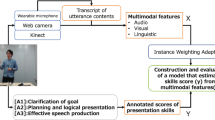

This paper presents an analysis of informative presentations using sequential multimodal modeling for the automatic assessment of presentation performance. To this end, we divide each presentation video into multiple time-series segments, which are provided as input to sequential models such as Long Short-Term Memory (LSTM) networks. This sequence modeling approach captures how multimodal behaviors change over the course of a presentation. We propose variants of sequential models that predict presentation performance more accurately than non-sequential models. Moreover, we perform a segment analysis on the sequential models to examine how relevant information from different segments improves the performance of the sequential prediction models.
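The segmentation and sequence-modeling pipeline described above can be sketched as follows. This is a minimal, hypothetical illustration, not the authors' implementation: the abstract does not state how many segments are used, which features are extracted, or how the LSTM is configured, so the helper names (`segment_features`, `lstm_last_hidden`), the per-segment mean and standard-deviation summaries, the toy input, and the untrained random weights are all assumptions. Per-frame feature vectors from one video are split into contiguous segments, each segment is summarized into one vector, and the resulting ordered sequence is run through a single-layer LSTM whose final hidden state would, in a trained model, feed a score-prediction head.

```python
import math
import random
from statistics import mean, pstdev


def segment_features(frames, n_segments):
    """Split per-frame feature vectors into n_segments contiguous, non-empty
    chunks and summarize each chunk by its per-dimension mean and std.
    (Hypothetical helper; the mean/std summary is an assumption.)"""
    if not frames:
        raise ValueError("need at least one frame")
    if not 1 <= n_segments <= len(frames):
        raise ValueError("n_segments must be between 1 and the number of frames")
    dim = len(frames[0])
    # Integer boundaries; each chunk gets at least one frame when n_segments <= len(frames).
    bounds = [k * len(frames) // n_segments for k in range(n_segments + 1)]
    sequence = []
    for start, end in zip(bounds, bounds[1:]):
        chunk = frames[start:end]
        columns = [[f[d] for f in chunk] for d in range(dim)]
        sequence.append([mean(col) for col in columns] + [pstdev(col) for col in columns])
    return sequence


def _sigmoid(x):
    # Numerically stable logistic function.
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def _matvec(W, z):
    # Plain matrix-vector product over Python lists.
    return [sum(w * v for w, v in zip(row, z)) for row in W]


def lstm_last_hidden(sequence, hidden_size, seed=0):
    """Run a single-layer LSTM with random, untrained weights over the segment
    sequence and return its final hidden state (hypothetical illustration)."""
    rng = random.Random(seed)
    width = len(sequence[0]) + hidden_size + 1  # [x_t, h_{t-1}, bias]

    def new_matrix():
        return [[rng.uniform(-0.1, 0.1) for _ in range(width)] for _ in range(hidden_size)]

    # Input, forget, output, and candidate gate weights.
    W_i, W_f, W_o, W_g = (new_matrix() for _ in range(4))
    h = [0.0] * hidden_size
    c = [0.0] * hidden_size
    for x_t in sequence:
        z = list(x_t) + h + [1.0]
        i = [_sigmoid(a) for a in _matvec(W_i, z)]
        f = [_sigmoid(a) for a in _matvec(W_f, z)]
        o = [_sigmoid(a) for a in _matvec(W_o, z)]
        g = [math.tanh(a) for a in _matvec(W_g, z)]
        c = [fk * ck + ik * gk for fk, ck, ik, gk in zip(f, c, i, g)]  # cell update
        h = [ok * math.tanh(ck) for ok, ck in zip(o, c)]               # hidden state
    return h


# Toy usage: 12 frames with 2 features each (made-up data).
frames = [[float(t), float(t % 3)] for t in range(12)]
seq = segment_features(frames, n_segments=4)
print(len(seq), len(seq[0]))  # 4 4  (4 segments, 2 means + 2 stds each)
h = lstm_last_hidden(seq, hidden_size=3)
print(len(h))  # 3
```

Summarizing each segment into a fixed-length vector is what turns a video of arbitrary length into a short ordered sequence; a non-sequential baseline would instead pool features over the whole video at once, discarding the order in which behaviors occur.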



Acknowledgements

This work was partially supported by the Japan Society for the Promotion of Science (JSPS) KAKENHI Grant Numbers 19H01120 and 19H01719, and by JST AIP Trilateral AI Research, Grant Number JPMJCR20G6, Japan.

Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Shwe Yi Tun, S., Okada, S., Huang, HH., Leong, C.W. (2021). Analysis of Modality-Based Presentation Skills Using Sequential Models. In: Meiselwitz, G. (eds) Social Computing and Social Media: Experience Design and Social Network Analysis. HCII 2021. Lecture Notes in Computer Science, vol 12774. Springer, Cham. https://doi.org/10.1007/978-3-030-77626-8_24

DOI: https://doi.org/10.1007/978-3-030-77626-8_24

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-77625-1

Online ISBN: 978-3-030-77626-8

eBook Packages: Computer Science, Computer Science (R0)