Abstract

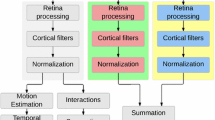

We propose a method for drawing gaze to a given target in videos by modulating pixel values based on the saliency map. The change in pixel values is described by enhancement maps, which are weighted combinations of center-surround difference maps of the intensity channel and two color-opponency channels. The enhancement maps are applied to each video frame in the HSI color space to increase saliency in the target region and to decrease it in the background. The TLD tracker is employed to track the target across frames, and the saliency map is used to control the strength of the modulation. Moreover, a pre-enhancement step is introduced to accelerate computation, and a post-processing module helps to eliminate flicker. Experimental results show that the method is effective in drawing subjects' attention, although flicker may still arise in a few cases.
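
The idea of an enhancement map built from center-surround differences can be illustrated with a short NumPy sketch. Everything specific here is an assumption for illustration, not the paper's formulation: the channel definitions (intensity as the RGB mean, red-green as R - G, blue-yellow as B - (R + G)/2), the Gaussian center and surround widths in the hypothetical `SCALE_PAIRS`, and the weights in the hypothetical `WEIGHTS`. The classic model of Itti et al. (1998) computes center-surround differences across levels of a Gaussian pyramid; this sketch approximates that with two blur widths at full resolution.

```python
import numpy as np

def gaussian_kernel(sigma: float) -> np.ndarray:
    """Normalized 1-D Gaussian kernel truncated at 3 sigma."""
    radius = max(1, int(round(3 * sigma)))
    x = np.arange(-radius, radius + 1, dtype=float)
    k = np.exp(-(x ** 2) / (2 * sigma ** 2))
    return k / k.sum()

def blur(channel: np.ndarray, sigma: float) -> np.ndarray:
    """Separable Gaussian blur; edge padding keeps the output the same shape."""
    k = gaussian_kernel(sigma)
    r = len(k) // 2
    padded = np.pad(channel, r, mode="edge")
    # Convolve every row, then every column; 'valid' trims the padding off again.
    rows = np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 1, padded)
    return np.apply_along_axis(lambda v: np.convolve(v, k, mode="valid"), 0, rows)

def opponent_channels(rgb: np.ndarray) -> dict:
    """Intensity plus red-green and blue-yellow opponency (assumed linear form)."""
    r, g, b = rgb[..., 0], rgb[..., 1], rgb[..., 2]
    return {"I": (r + g + b) / 3.0, "RG": r - g, "BY": b - (r + g) / 2.0}

# Hypothetical (center sigma, surround sigma) pairs and their weights.
SCALE_PAIRS = ((1.0, 4.0), (2.0, 8.0))
WEIGHTS = (0.5, 0.5)

def enhancement_maps(rgb: np.ndarray, pairs=SCALE_PAIRS, weights=WEIGHTS) -> dict:
    """Per channel: weighted sum of signed (center minus surround) differences."""
    maps = {}
    for name, ch in opponent_channels(rgb).items():
        maps[name] = sum(w * (blur(ch, c) - blur(ch, s))
                         for (c, s), w in zip(pairs, weights))
    return maps
```

Keeping the differences signed is a deliberate choice in this sketch: adding a map to its channel pushes each pixel further from its surround (more local contrast), while subtracting it pulls the pixel toward its surround (less contrast), which matches the increase-in-target, decrease-in-background behavior the abstract describes.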

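Applying such a map in the HSI color space can be sketched as follows, assuming the standard geometric RGB-to-HSI conversion (hue in radians, saturation and intensity in [0, 1]). The rule in the hypothetical `modulate_frame` is a stand-in, not the paper's method: it raises intensity contrast and saturation inside `target_mask` (standing in for the TLD bounding box) and lowers them elsewhere, scaling the change by a `saliency` map in [0, 1] so the target is pushed harder where it is not yet salient and the background where it already is. `alpha` and `beta` are illustrative gains.

```python
import numpy as np

EPS = 1e-12  # guards divisions for black and gray pixels

def rgb_to_hsi(rgb: np.ndarray) -> np.ndarray:
    """RGB in [0, 1] -> stacked (H in radians, S, I), geometric HSI model."""
    r, g, b = rgb[..., 0], rgb[..., 1], rgb[..., 2]
    i = (r + g + b) / 3.0
    mn = np.minimum(np.minimum(r, g), b)
    s = np.where(i > EPS, 1.0 - mn / np.maximum(i, EPS), 0.0)
    num = 0.5 * ((r - g) + (r - b))
    den = np.sqrt(np.maximum((r - g) ** 2 + (r - b) * (g - b), 0.0))
    ratio = np.where(den > EPS, num / np.maximum(den, EPS), 0.0)  # gray: hue arbitrary
    theta = np.arccos(np.clip(ratio, -1.0, 1.0))
    h = np.where(b <= g, theta, 2.0 * np.pi - theta)
    return np.stack([h, s, i], axis=-1)

def hsi_to_rgb(hsi: np.ndarray) -> np.ndarray:
    """Inverse of rgb_to_hsi, evaluated per hue sector (RG, GB, BR)."""
    third = 2.0 * np.pi / 3.0
    h, s, i = hsi[..., 0] % (2.0 * np.pi), hsi[..., 1], hsi[..., 2]
    sector = np.minimum((h // third).astype(int), 2)
    hp = h - sector * third                 # hue offset inside the sector
    x = i * (1.0 - s)                       # smallest component
    y = i * (1.0 + s * np.cos(hp) / np.cos(np.pi / 3.0 - hp))
    z = 3.0 * i - (x + y)
    # Sector 0: (R,G,B) = (y,z,x); sector 1: (x,y,z); sector 2: (z,x,y).
    r = np.choose(sector, [y, x, z])
    g = np.choose(sector, [z, y, x])
    b = np.choose(sector, [x, z, y])
    return np.stack([r, g, b], axis=-1)

def modulate_frame(rgb, enh_i, saliency, target_mask, alpha=0.2, beta=0.3):
    """Hypothetical rule: boost the target region, attenuate the background.

    enh_i        signed center-surround map for intensity (H x W)
    saliency     saliency map scaled to [0, 1] (H x W)
    target_mask  boolean H x W mask, e.g. the tracker's bounding box
    """
    hsi = rgb_to_hsi(rgb)
    sign = np.where(target_mask, 1.0, -1.0)
    # Push the target harder where it is not yet salient, the background where it is.
    strength = np.where(target_mask, 1.0 - saliency, saliency)
    hsi[..., 2] = np.clip(hsi[..., 2] + sign * alpha * strength * enh_i, 0.0, 1.0)
    hsi[..., 1] = np.clip(hsi[..., 1] * (1.0 + sign * beta * strength), 0.0, 1.0)
    # Hue is untouched; clip because HSI triples can fall outside the RGB cube.
    return np.clip(hsi_to_rgb(hsi), 0.0, 1.0)
```

Leaving hue unchanged means the sketch alters how vivid and how contrasty a region looks without shifting its colors. The final clip is needed because the HSI solid is not a cube: raising intensity or saturation can produce triples that map outside [0, 1] in RGB.
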
Copyright information

© 2014 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Shi, T., Sugimoto, A. (2014). Video Saliency Modulation in the HSI Color Space for Drawing Gaze. In: Klette, R., Rivera, M., Satoh, S. (eds) Image and Video Technology. PSIVT 2013. Lecture Notes in Computer Science, vol 8333. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-53842-1_18

DOI: https://doi.org/10.1007/978-3-642-53842-1_18

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-53841-4

Online ISBN: 978-3-642-53842-1

eBook Packages: Computer Science, Computer Science (R0)