Abstract

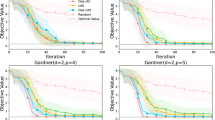

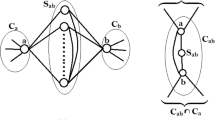

Learning the underlying mechanisms of data generation is of great interest in science and engineering, among other fields. Finding dependency structures among the variables in data is one approach to this goal and an important task in data mining. In this paper, we focus on learning dependency substructures shared by multiple datasets. In many scenarios, the nature of the data varies across datasets because the surrounding conditions change or the generating mechanism is non-stationary. However, we can often assume that the change is only partial and that some relations between variables remain unchanged. Moreover, we can expect such commonness across datasets to be closely related to the invariance of the underlying mechanism. For example, errors in engineering systems are usually caused by faults in some sub-systems while the other parts remain healthy. In such situations, although anomalies are observed in sensor values, the invariance of the healthy sub-systems is still captured by dependency structures that stay steady before and after the onset of the error. We propose a structure learning algorithm to find such invariances in the case of Graphical Gaussian Models (GGM). The proposed method is based on block coordinate descent optimization, in which the subproblems can be solved efficiently by existing algorithms for the Lasso and the continuous quadratic knapsack problem. We confirm the validity of our approach through numerical simulations and through applications to real-world datasets drawn from the analysis of city-cycle fuel consumption and anomaly detection in car sensors.
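The abstract names the continuous quadratic knapsack problem as one of the subproblems but does not state its exact form. As a rough illustration only, the sketch below solves a generic separable instance (minimize the sum of 0.5·d_i·x_i² − a_i·x_i subject to one weighted-sum equality and box constraints) by bisection on the Lagrange multiplier. The function name, argument names, and the choice of bisection are assumptions made for this example; they are not taken from the paper and may differ from the solver the authors use.

```python
def solve_quadratic_knapsack(d, a, b, c, lower, upper, tol=1e-10, max_iter=200):
    """Hypothetical helper: minimize sum(0.5*d[i]*x[i]**2 - a[i]*x[i])
    subject to sum(b[i]*x[i]) == c and lower[i] <= x[i] <= upper[i].

    Assumes d[i] > 0 and b[i] > 0 for every i (illustrative restriction).
    """
    def x_of(lam):
        # Stationarity gives x_i = (a_i - lam*b_i)/d_i, clipped to the box.
        return [min(max((ai - lam * bi) / di, lo), hi)
                for di, ai, bi, lo, hi in zip(d, a, b, lower, upper)]

    def weighted_sum(lam):
        # Non-increasing in lam because every b_i is positive.
        return sum(bi * xi for bi, xi in zip(b, x_of(lam)))

    lowest = sum(bi * lo for bi, lo in zip(b, lower))
    highest = sum(bi * hi for bi, hi in zip(b, upper))
    if not (lowest <= c <= highest):
        raise ValueError("equality constraint is infeasible for the given box")

    # Bracket the multiplier: at lam_lo every x_i sits at its upper bound,
    # at lam_hi every x_i sits at its lower bound.
    lam_lo = min((ai - di * hi) / bi for di, ai, bi, hi in zip(d, a, b, upper))
    lam_hi = max((ai - di * lo) / bi for di, ai, bi, lo in zip(d, a, b, lower))

    for _ in range(max_iter):
        mid = 0.5 * (lam_lo + lam_hi)
        if weighted_sum(mid) > c:
            lam_lo = mid  # sum too large: increase the multiplier
        else:
            lam_hi = mid  # sum small enough: decrease the multiplier
        if lam_hi - lam_lo < tol:
            break
    return x_of(0.5 * (lam_lo + lam_hi))


# Example: Euclidean projection of (0.2, 0.5, 1.1) onto the unit simplex.
x = solve_quadratic_knapsack(
    d=[1.0, 1.0, 1.0], a=[0.2, 0.5, 1.1], b=[1.0, 1.0, 1.0], c=1.0,
    lower=[0.0, 0.0, 0.0], upper=[1.0, 1.0, 1.0],
)
```

In this example the result is approximately (0, 0.2, 0.8): the smallest coordinate is clipped to zero and the remaining two are shifted by a common amount so that they sum to one. Bisection is chosen here for brevity; specialized algorithms for this problem class can be faster.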

Copyright information

© 2011 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Hara, S., Washio, T. (2011). Common Substructure Learning of Multiple Graphical Gaussian Models. In: Gunopulos, D., Hofmann, T., Malerba, D., Vazirgiannis, M. (eds) Machine Learning and Knowledge Discovery in Databases. ECML PKDD 2011. Lecture Notes in Computer Science, vol 6912. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-23783-6_1

DOI: https://doi.org/10.1007/978-3-642-23783-6_1

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-23782-9

Online ISBN: 978-3-642-23783-6

eBook Packages: Computer Science, Computer Science (R0)