Abstract

The objective of this study is to systematically review the usability of mobile applications currently available in radiology to support training in diagnostic decision-making. Two online stores with major market share (Google Play and iTunes) were searched. Three usability investigators and five radiology experts used a multi-step review process to identify eligible applications and extract usability reviews. Of the 381 applications initially identified, 52 were included, and their user reviews revealed 79 usability issues. Usability issues were categorized according to Nielsen’s heuristic usability evaluation principles (HE). The three most frequent types of usability issues were Naturalness (43), Simplicity (43), and Efficient Interactions (21). Examples of the most frequent usability issues were lack of information, lack of labeling, and lack of detail about images. This study demonstrates the urgent need for usability testing to provide evidence-based guidelines to help choose mobile applications that will yield educational and clinical benefits.

1 Introduction

1.1 Mobile Applications in Improving Radiology Education

About 90 % of medical professionals have used mobile devices in medical practice to access their patients’ electronic health records and medical information. Currently, there are four major application stores in the market: iTunes, Google Play, Windows, and BlackBerry. The iTunes and Google Play stores contain the majority of mobile applications: the iTunes store contains approximately 20,000 medical mobile applications, while the Google Play store has about 9,000 [1].

Radiology is a specialty that requires extensive training in image analysis for decision making. Radiology residents are physicians who are being trained in the specialty, and are increasingly using mobile devices. It is estimated that in professional settings, 78 % of physicians use smartphones for work-related purposes [2]. Smartphone usage has spread to healthcare settings with numerous potential and realized benefits. Mobile applications that are installed on smartphones have provided clinicians with readily available evidence-based decisional tools [3]. Medical applications fall under many different categories, including but not limited to, reference applications, such as the Physician’s Desk Reference (PDR®) or WebMD®, medical calculators, and applications designed to access electronic health records (EHR) or personal health information (PHI) [4].

1.2 Poorly Designed Mobile Application Hinders User Acceptance

Recently, the functionality of mobile applications has increased greatly. This increase in functionality has come at the expense of the usability of these applications. The international standard, ISO 9241-11:1998 Guidance on Usability defines usability as:

“The extent to which a product can be used by specified users to achieve specified goals with effectiveness, efficiency and satisfaction, in a specified context of use of the system” [5].

While technical evaluations of mobile applications receive much attention, few usability evaluation studies have been conducted, especially for healthcare mobile applications. Likewise, few studies have investigated the efficacy of mobile applications intended to assist in training [4, 6].

Consequently, it is estimated that 95 % of downloaded mobile applications are abandoned within a month [7] and 26 % of applications are used only once, possibly because of the lack of attention to usability [8]. Poor healthcare system design may lower effectiveness [9, 10], decrease efficiency [11], and decrease team collaboration [12]. This, in turn, may lead to high cognitive load [13], medical errors [14], and decreased quality of patient care [15]. These problems are interrelated, and all of them fall within the scope of usability.

The objective of this study is to review and measure the usability of mobile applications currently available in radiology to support training in diagnostic decision-making.

2 Method

2.1 Setting

The University of Missouri Health Care (UMHC) is a tertiary care academic medical center located in Columbia, Missouri, with a total of 564 beds. With 626 medical staff at clinics throughout mid-Missouri, UMHC had an estimated 553,300 visits in 2012 [16]. The Department of Radiology includes a full complement of 28+ highly trained clinicians and researchers, and successful training programs for 25+ resident physicians involving cutting-edge technologies and specialized clinical experiences. The department also operates the Missouri Radiology Imaging Center, one of the most advanced resonance imaging facilities in the state.

2.2 Systematic Review of the Mobile Application

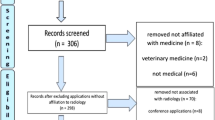

To determine usability issues of mobile applications in radiology, a systematic review process was conducted. Two online stores with major market share (Google Play and iTunes) were searched on July 10, 2014 for mobile radiology applications that assist in training.

A multi-step review process was utilized by three usability investigators and five radiology experts to identify eligible applications and extract usability reviews. Distinct and broad search terms were used to capture a wide range of radiological applications: radiology, X-ray, ultrasound, MRI, CT, radiography, nuclear medicine, mammogram, mammography, and fluoroscopy.

Through screening of the titles and descriptions, applications were selected if they supported education and training of radiological diagnostic decision-making processes. They were excluded if they:

1. only provided access to journals, books, encyclopedias, or other reference material;
2. were designed solely for trivial medical calculations;
3. were designed solely for specific commercial vendor products;
4. were designed for use by a specific hospital/clinic only; or
5. were written in a non-English language.
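The screening rules above can be expressed as a small filter. The following is a minimal sketch, not the investigators' tooling: screening in the study was done manually from titles and descriptions, so the `App` record, its boolean flags, and the three sample listings are hypothetical stand-ins for those reviewer judgments.

```python
from dataclasses import dataclass

# Search terms listed in Sect. 2.2.
SEARCH_TERMS = [
    "radiology", "X-ray", "ultrasound", "MRI", "CT", "radiography",
    "nuclear medicine", "mammogram", "mammography", "fluoroscopy",
]


@dataclass
class App:
    """Hypothetical record of one store listing after manual review."""
    name: str
    supports_training: bool         # inclusion criterion
    reference_only: bool = False    # exclusion 1
    calculator_only: bool = False   # exclusion 2
    vendor_specific: bool = False   # exclusion 3
    single_site: bool = False       # exclusion 4
    non_english: bool = False       # exclusion 5


def is_eligible(app: App) -> bool:
    """Apply the inclusion criterion, then the five exclusion criteria."""
    excluded = any([
        app.reference_only, app.calculator_only, app.vendor_specific,
        app.single_site, app.non_english,
    ])
    return app.supports_training and not excluded


# Invented listings, for illustration only.
apps = [
    App("Example chest quiz", supports_training=True),
    App("Example journal reader", supports_training=False, reference_only=True),
    App("Example vendor viewer", supports_training=True, vendor_specific=True),
]
eligible = [a.name for a in apps if is_eligible(a)]
print(eligible)
```

Keeping each exclusion as a separate flag makes it possible to report how many applications were removed by each criterion.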

The investigators extracted the usability reviews to be analyzed and coded them according to Nielsen’s validated heuristic usability evaluation principles (HE) [17, 18]. Two independent investigators (ABR, MAC) cross-examined each other’s coded usability reviews to reach agreement. An experienced usability investigator (MSK) adjudicated any disagreement. Finally, the entire team collectively reviewed the findings for validity before analysis was carried out.

3 Results

Of the 381 applications initially identified, 52 were eligible and had user reviews. Using HE was instrumental in understanding areas in need of improvement. Across the 52 radiology training applications studied, 79 reviews were usability-related. The types of usability issues, with their frequency by principle, were as follows (some usability issues were cross-listed):

- Naturalness (43)
- Simplicity (43)
- Efficient Interactions (21)
- Consistency (16)
- Effective Information Presentation (11)
- Preservation of context (11)
- Minimize cognitive load (10)
- Effective use of language (5)
- Forgiveness and feedback (1)
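Because issues could be cross-listed, the frequencies above add up to more than the 79 usability-related reviews. The short tally below is a sketch that uses only the counts reported in this paper; the variable names are illustrative.

```python
# Frequencies as reported in the list above (cross-listing allowed).
heuristic_counts = {
    "Naturalness": 43,
    "Simplicity": 43,
    "Efficient Interactions": 21,
    "Consistency": 16,
    "Effective Information Presentation": 11,
    "Preservation of context": 11,
    "Minimize cognitive load": 10,
    "Effective use of language": 5,
    "Forgiveness and feedback": 1,
}

APPS_IDENTIFIED = 381
APPS_ELIGIBLE = 52
USABILITY_REVIEWS = 79

total_codings = sum(heuristic_counts.values())
codings_per_review = total_codings / USABILITY_REVIEWS
screening_yield = APPS_ELIGIBLE / APPS_IDENTIFIED

# sorted() is stable, so tied heuristics keep their reported order.
ranked = sorted(heuristic_counts.items(), key=lambda kv: kv[1], reverse=True)
top_three = [name for name, _ in ranked[:3]]

print(total_codings)                    # 161
print(round(codings_per_review, 2))     # 2.04
print(round(100 * screening_yield, 1))  # 13.6
print(top_three)
```

The total of 161 codings against 79 reviews means that each review was assigned about two heuristics on average, and roughly 13.6 % of the applications initially identified passed screening.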

Examples of the usability problems were: applications lacking in content (17 apps), requests for scientifically based information (5 apps), download and crash problems (5 apps), requests for more images (3 apps), and requests for more labels (2 apps).

The usability issues were classified according to a 3-point usability severity scale [19]. Examples of catastrophic usability issues (level 3 severity) were crashing/force closing and inability to install the application on the user’s mobile device. Level 3 usability issues prevent users from completing their task. Level 2 usability issues include inadequate content, insufficient cases and quizzes, non-intuitive image labels, and inefficient interfaces. Level 2 usability issues delay users significantly but eventually allow them to complete the task. Minor usability issues (level 1) include small font size and the lack of a zoom option on images. Level 1 issues delay users only briefly in completing the task.
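The three severity levels can be represented as an ordered enumeration so that issues can be sorted for triage. This is an illustrative sketch: the example issues are those named in the text, while the level name `MODERATE` and the `triage` helper are assumptions, since the source names only the catastrophic and minor levels.

```python
from enum import IntEnum


class Severity(IntEnum):
    MINOR = 1         # delays the user only briefly
    MODERATE = 2      # assumed name; significant delay, task still completed
    CATASTROPHIC = 3  # prevents the user from completing the task


# Example issues and levels taken from the paragraph above.
EXAMPLES = {
    "crashing/force closing": Severity.CATASTROPHIC,
    "unable to install application": Severity.CATASTROPHIC,
    "inadequate content": Severity.MODERATE,
    "insufficient cases and quizzes": Severity.MODERATE,
    "non-intuitive image labels": Severity.MODERATE,
    "small font size": Severity.MINOR,
    "no zoom option on images": Severity.MINOR,
}


def triage(issues):
    """Return issue names ordered from most to least severe."""
    return sorted(issues, key=lambda name: issues[name], reverse=True)


def blocks_task(severity):
    """Only level 3 issues stop the user from finishing the task."""
    return severity == Severity.CATASTROPHIC


for name in triage(EXAMPLES):
    print(int(EXAMPLES[name]), name)
```

Using `IntEnum` keeps the numeric levels from the scale while allowing direct comparison and sorting.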

4 Discussion

4.1 Mobile Applications in Radiology Need Further Usability Evaluation

Most existing mobile applications in radiology fall short in adequately engaging stakeholders and ensuring that the system designs are user-centered. This may be attributed to a number of reasons, for example, system designers’ lack of understanding of clinical workflow and unclear guidelines on how user-engaged technologies should be implemented and actively used. Most important is an insufficient understanding of users’ information needs, socio-demographic status, preferences, health and literacy, computer literacy, and values. Medical mobile applications that do not take account of these factors can impair the effectiveness of clinical management.

While the majority of mobile applications in radiology have been evaluated using tablet platforms [20–28], there are very few studies available that investigated the potential benefit of mobile applications on smartphone platforms, potentially because of the small display size [20, 29]. However, advancement of display technology and better mobility will allow increasing use of smartphones in the near future [30]. Thus, it is important to explore the feasibility of mobile applications on smaller mobile devices among physicians.

4.2 User-Centered Design Process in Mobile Application Development

Prior studies showed that mobile applications, including those in radiology, can be utilized to enhance education, with the potential to improve overall patient care [31, 32], but suffered from poor usability. User-Centered Design (UCD) is a process wherein the needs, wants, and limitations of end users of a product are given extensive attention throughout the design process [33, 34].

The UCD process involves engagement of users throughout the following stages:

1. user needs analysis,
2. algorithm development,
3. cyclical prototype design, and
4. development.

In user needs analysis, the design team collects users’ information needs, wants, and motivations for using mobile applications to acquire an understanding of potential factors that may impact the intended goals. Information items include basic education-related demographics, prior mobile application experiences, perceived mobile device skills, and expectations (contents, features, and functionalities) regarding usage of the mobile application in their clinical management setting. In the algorithm development stage, the design team sketches several ideas for potential prototype designs through an iterative review process. Collected user needs are reviewed to determine whether they can be integrated within the application’s limitations. The team should decide whether or not to include certain educational contents, features, and functional elements. Once the optimal algorithm is established, the team begins to design and develop a few low-fidelity (Lo-Fi) prototypes (i.e., sketches on paper or slides). The Lo-Fi prototypes are then evaluated using formative evaluations, such as heuristic evaluation [17, 18] and cognitive walkthrough [35, 36]. When the final prototype has been selected, the team works to develop the high-fidelity (Hi-Fi) prototype with partial to complete functions. The Hi-Fi prototype is evaluated using summative lab-based usability testing [37].

After implementation, continuous data collection is warranted to measure the usability [5, 38, 39] and acceptance [40] of the mobile application. This continuous evaluation will allow the team to adjust the design of the application to improve user acceptance and maintain maximal educational effectiveness. By following the UCD process, developers of mobile applications for radiology education and training may ultimately increase usability, thereby decreasing cognitive overload, and may increase the quality of healthcare services.
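One instrument for this kind of ongoing measurement is the System Usability Scale (SUS) cited in [38, 39]. The sketch below applies the standard SUS scoring rule to a single completed questionnaire; it assumes SUS is the instrument chosen, and the sample responses are invented for illustration and are not data from this study.

```python
def sus_score(responses):
    """Score one completed 10-item SUS questionnaire (each item rated 1-5)."""
    if len(responses) != 10 or any(r not in range(1, 6) for r in responses):
        raise ValueError("SUS needs ten responses, each an integer from 1 to 5")
    total = 0
    for item_number, rating in enumerate(responses, start=1):
        if item_number % 2 == 1:   # odd items are positively worded
            total += rating - 1
        else:                      # even items are negatively worded
            total += 5 - rating
    return total * 2.5             # rescale the 0-40 sum to 0-100


# Invented responses from one hypothetical resident.
example = [4, 2, 4, 2, 4, 2, 4, 2, 4, 2]
print(sus_score(example))  # 75.0
```

Averaging such scores across users at regular intervals would give the team a simple trend line for deciding when a design adjustment is needed.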

4.3 Limitations of the Study

As with all study designs, there are limitations. First, due to its exploratory nature, this study involved mobile applications in only two mobile application stores. The iOS (iTunes) and Android (Google Play) platforms were selected based on current market trends, which may change given the fast-changing IT market. As such, evaluation of mobile applications on other platforms, such as Windows or BlackBerry, is warranted. Second, while Nielsen’s heuristic usability evaluation principles (HE) is an exemplary evaluation method, summative usability testing [37, 41] comparing multiple representative mobile applications could provide evidence-based guidelines to help choose mobile applications that will yield maximal educational and clinical benefits.

5 Conclusion

This study demonstrates the urgent need for usability evaluation in the development of radiology mobile applications. The investigators suggest that the approval process for medical mobile applications should be more systematic and rigorous, so that approved applications satisfy users and meet clinical training goals. In addition, the investigators suggest instituting systematic and standardized guidelines for the design and testing of healthcare mobile applications to achieve maximal adoption.

References

Aungst, T.D., Clauson, K.A., Misra, S., et al.: How to identify, assess and utilise mobile medical applications in clinical practice. Int. J. Clin. Pract. 68(2), 155–162 (2014)

Sources & Interactions Study, September 2013: Medical/Surgical Edition. [electronic article] (2013)

Prgomet, M., Georgiou, A., Westbrook, J.I.: The impact of mobile handheld technology on hospital physicians’ work practices and patient care: a systematic review. J. Am. Med. Inform. Assoc. 16(6), 792–801 (2009)

Szekely, A., Talanow, R., Bagyi, P.: Smartphones, tablets and mobile applications for radiology. Eur. J. Radiol. 82(5), 829–836 (2013)

Standard ISO 9241: Ergonomic requirements for office work with visual display terminals (VDTs), part 11: Guidance on usability (1998)

Harrison, R., Flood, D., Duce, D.: Usability of mobile applications: literature review and rationale for a new usability model. J. Interact. Sci. 1(1), 12 (2013)

Mocherman, A.: Why 95 % of apps are quickly abandoned - and how to avoid becoming a statistic [electronic article] (2011)

First Impressions Matter! 26 % of Apps Downloaded in 2010 Were Used Just Once: Localytics [electronic article] (2011)

Koppel, R., Metlay, J.P., Cohen, A., et al.: Role of computerized physician order entry systems in facilitating medication errors. JAMA 293(10), 1197–1203 (2005)

Steele, E.: EHR implementation: who benefits, who pays? Health Manag. Technol. 27(7), 43–44 (2006)

Crabtree, B.F., Miller, W.L., Tallia, A.F., et al.: Delivery of clinical preventive services in family medicine offices. Ann. Fam. Med. 3(5), 430–435 (2005)

Han, Y.Y., Carcillo, J.A., Venkataraman, S.T., et al.: Unexpected increased mortality after implementation of a commercially sold computerized physician order entry system. Pediatrics 116(6), 1506–1512 (2005)

Tang, P.C., Patel, V.L.: Major issues in user interface design for health professional workstations: summary and recommendations. Int. J. Biomed. Comput. 34(1–4), 139–148 (1994)

Ash, J.S., Berg, M., Coiera, E.: Some unintended consequences of information technology in health care: the nature of patient care information system-related errors. J. Am. Med. Inform. Assoc. 11(2), 104–112 (2004)

Beuscart-Zéphir, M.C., Elkin, P., Pelayo, S., et al.: The human factors engineering approach to biomedical informatics projects: state of the art, results, benefits and challenges. Yearb. Med. Inform. 109–127 (2007)

University of Missouri Health Care: Annual Report. Columbia, MO

Selecting a mobile app: evaluating the usability of medical applications (2012)

Nielsen, J., Hackos, J.T.: Usability Engineering. Academic Press, Boston (1993)

Sauro, J.: Rating The Severity Of Usability Problems, [News article] (2013)

Ridley, E.L.: Size Matters: iPad Tops iPhone for Evaluating Acute Stroke. AuntMinnie.com (2011). http://www.auntminnie.com/index.aspx?sec=ser&sub=def&pag=dis&ItemID=97731

Ridley, E.L.: Despite Speed Bump, iPad Up for Task of Assessing Tuberculosis. AuntMinnie.com (2012). http://www.auntminnie.com/index.aspx?sec=ser&sub=def&pag=dis&ItemID=98084

McNulty, J.P., Ryan, J.T., Evanoff, M.G., et al.: Flexible image evaluation. iPad versus secondary-class monitors for review of MR spinal emergency cases, a comparative study. Acad. Radiol. 19(8), 1023–1028 (2012)

Johnson, P.T., Zimmerman, S.L., Heath, D., et al.: The iPad as a mobile device for CT display and interpretation: diagnostic accuracy for identification of pulmonary embolism. Emerg. Radiol. 19(4), 323–327 (2012)

Ridley, E.L.: iPad Up for Challenge of Plain Radiographs. AuntMinnie.com (2011). http://www.auntminnie.com/index.aspx?sec=sup_n&sub=pac&pag=dis&itemId=97736

Ridley, E.L.: iPad Up for Task of Assessing Pulmonary Nodules. AuntMinnie.com (2011). http://www.auntminnie.com/index.aspx?sec=rca&sub=rsna_2011&pag=dis&itemId=97565

Ward, P.: iPads Are OK for VC Reviews, but Take Longer. AuntMinnie.com (2011). http://www.auntminnie.com/index.aspx?sec=rca_n&sub=rsna_2011&pag=dis&ItemID=97597. Accessed 22 Jan 2014

Hammon, M., Schlechtweg, P.M., Schulz-Wendtland, R., et al.: iPads in breast imaging - a phantom study. Geburtshilfe Frauenheilkd. 74(2), 152–156 (2014)

iPads show the way forward for medical imaging, [online article] (2012)

Wolf, J.A., Moreau, J.F., Akilov, O., et al.: Diagnostic inaccuracy of smartphone applications for melanoma detection. JAMA Dermatol. 149(4), 422–426 (2013)

Qiao, J., Liu, Z., Xu, L., et al.: Reliability analysis of a smartphone-aided measurement method for the Cobb angle of scoliosis. J. Spinal Disord. Tech. 25(4), E88–E92 (2012)

Trelease, R.B.: Diffusion of innovations: smartphones and wireless anatomy learning resources. Anat. Sci. Educ. 1(6), 233–239 (2008)

Torre, D.M., Treat, R., Durning, S., et al.: Comparing PDA- and paper-based evaluation of the clinical skills of third-year students. WMJ 110(1), 9–13 (2011)

Johnson, C.M., Johnson, T.R., Zhang, J.: A user-centered framework for redesigning health care interfaces. J. Biomed. Inform. 38(1), 75–87 (2005)

Da Silva, T.S., Martin, A., Maurer, F., et al. (eds.): User-centered design and agile methods: a systematic review. AGILE (2011)

Polson, P.G., Lewis, C., Rieman, J., et al.: Cognitive walkthroughs: a method for theory-based evaluation of user interfaces. Int. J. Man Mach. Stud. 36(5), 741–773 (1992)

Partala, T.: The combined walkthrough: measuring behavioral, affective, and cognitive information in usability testing. J. Usability Stud. 5(1), 21–33 (2009)

Bastien, J.M.: Usability testing: a review of some methodological and technical aspects of the method. Int. J. Med. Inform. 79(4), e18–e23 (2010)

Brooke, J.: SUS - a quick and dirty usability scale. In: Jordan, P.W., Thomas, B., Weerdmeester, B.A., McClelland, I.L. (eds.) Usability Evaluation in Industry. Taylor & Francis, London (1996)

Bangor, A., Kortum, P.T., Miller, J.T.: An empirical evaluation of the system usability scale. Int. J. Hum. Comput. Interact. 24(6), 574–594 (2008)

Renaud, K., Biljon, J.V.: Predicting technology acceptance and adoption by the elderly: a qualitative study. In: Proceedings of the 2008 Annual Research Conference of the South African Institute of Computer Scientists and Information Technologists on IT research in Developing Countries: Riding the Wave of Technology, Wilderness, South Africa, 1456684, pp. 210–219. ACM (2008)

Faulkner, L.: Beyond the five-user assumption: benefits of increased sample sizes in usability testing. Behav. Res. Methods Instrum. Comput. 35(3), 379–383 (2003)

© 2015 Springer International Publishing Switzerland

Cite this paper

Kim, M.S. et al. (2015). Usability of Mobile Applications Supporting Training in Diagnostic Decision-Making by Radiologists. In: Duffy, V. (eds) Digital Human Modeling. Applications in Health, Safety, Ergonomics and Risk Management: Ergonomics and Health. DHM 2015. Lecture Notes in Computer Science, vol 9185. Springer, Cham. https://doi.org/10.1007/978-3-319-21070-4_45

DOI: https://doi.org/10.1007/978-3-319-21070-4_45

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-21069-8

Online ISBN: 978-3-319-21070-4

eBook Packages: Computer Science (R0)