Abstract

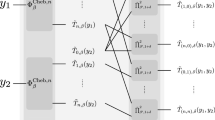

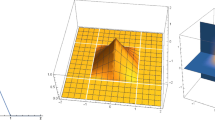

The Lipschitz constant of neural networks plays an important role in several contexts of deep learning, ranging from robustness certification and regularization to stability analysis of systems with neural network controllers. Obtaining tight bounds on the Lipschitz constant is therefore important. We introduce LipBaB, a branch and bound framework to compute certified bounds of the local Lipschitz constant of deep neural networks with ReLU activation functions up to any desired precision. It is based on iteratively upper-bounding the norm of the Jacobians corresponding to the different activation patterns that the network can exhibit within the input domain. Our algorithm can provide provably exact computation of the Lipschitz constant for any p-norm.
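To make the quantity being bounded concrete, here is a minimal sketch in Python. It is not the LipBaB algorithm. It assumes a one-hidden-layer ReLU network with hypothetical weight matrices `w1` and `w2`, enumerates every activation pattern by brute force, and takes the largest induced infinity-norm of the corresponding Jacobian `W2 · diag(pattern) · W1`. Because it also counts patterns that may never occur on a given input domain, the result is only an upper bound on the Lipschitz constant. A branch and bound method would instead restrict attention to patterns that are feasible within the domain and refine the bound iteratively.

```python
from itertools import product


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def inf_operator_norm(m):
    """Induced infinity-norm of a matrix: the maximum absolute row sum."""
    return max(sum(abs(x) for x in row) for row in m)


def jacobian_for_pattern(w1, w2, pattern):
    """Jacobian W2 @ diag(pattern) @ W1 for a fixed 0/1 activation pattern."""
    # Multiplying by diag(pattern) zeroes the rows of W1 of inactive neurons.
    masked = [[d * x for x in row] for d, row in zip(pattern, w1)]
    return matmul(w2, masked)


def lipschitz_upper_bound(w1, w2):
    """Maximum Jacobian norm over all 2^h activation patterns (brute force)."""
    hidden = len(w1)
    return max(
        inf_operator_norm(jacobian_for_pattern(w1, w2, p))
        for p in product((0, 1), repeat=hidden)
    )


if __name__ == "__main__":
    # Hypothetical weights chosen only for illustration.
    w1 = [[1, -2], [3, 1]]   # hidden layer: 2 inputs -> 2 neurons
    w2 = [[1, -1]]           # output layer: 2 neurons -> 1 output
    print(lipschitz_upper_bound(w1, w2))
```

The enumeration grows as 2^h in the number of hidden neurons, so the sketch is only practical for very small networks.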

Research sponsored by DRDO Headquarters, New Delhi, India.

Extended version containing additional proofs and details [1].


Notes

1. We have used the names of the techniques as given in the respective papers. The column titled LipBaB is our algorithm.

2. In very rare cases where the feasibility checks demand more precision than the tolerance limit of the solvers, we might get a value larger than the true exact value.

References

Bhowmick, A., D’Souza, M., Raghavan, G.S.: LipBaB: computing exact Lipschitz constant of ReLU networks. arXiv preprint arXiv:2105.05495 (2021)

Fazlyab, M., Robey, A., Hassani, H., Morari, M., Pappas, G.J.: Efficient and accurate estimation of Lipschitz constants for deep neural networks. In: NeurIPS (2019)

Jordan, M., Dimakis, A.G.: Exactly computing the local Lipschitz constant of ReLU networks. In: NeurIPS (2020)

Kim, H., Papamakarios, G., Mnih, A.: The Lipschitz constant of self-attention. arXiv preprint arXiv:2006.04710 (2020)

Latorre, F., Rolland, P., Cevher, V.: Lipschitz constant estimation of neural networks via sparse polynomial optimization. In: ICLR (2020)

Virmaux, A., Scaman, K.: Lipschitz regularity of deep neural networks: analysis and efficient estimation. In: NeurIPS (2018)

Wang, S., Pei, K., Whitehouse, J., Yang, J., Jana, S.: Efficient formal safety analysis of neural networks. In: NeurIPS (2018)

Wang, S., Pei, K., Whitehouse, J., Yang, J., Jana, S.: Formal security analysis of neural networks using symbolic intervals. In: Proceedings of the 27th USENIX Conference on Security Symposium, pp. 1599–1614 (2018)

Weng, T.-W., et al.: Towards fast computation of certified robustness for ReLU networks. In: International Conference on Machine Learning (ICML) (2018)

Weng, T.-W., et al.: Evaluating the robustness of neural networks: an extreme value theory approach. In: ICLR (2018)


Copyright information

© 2021 Springer Nature Switzerland AG

About this paper

Cite this paper

Bhowmick, A., D’Souza, M., Raghavan, G.S. (2021). LipBaB: Computing Exact Lipschitz Constant of ReLU Networks. In: Farkaš, I., Masulli, P., Otte, S., Wermter, S. (eds) Artificial Neural Networks and Machine Learning – ICANN 2021. ICANN 2021. Lecture Notes in Computer Science, vol 12894. Springer, Cham. https://doi.org/10.1007/978-3-030-86380-7_13


DOI: https://doi.org/10.1007/978-3-030-86380-7_13


Publisher Name: Springer, Cham

Print ISBN: 978-3-030-86379-1

Online ISBN: 978-3-030-86380-7

eBook Packages: Computer Science, Computer Science (R0)