Abstract

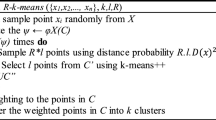

This paper proposes a new algorithm, Slice_OP, which selects the initial cluster centers for high-dimensional data. A set of observation points is allocated to transform the high-dimensional data into one-dimensional distance data. Multiple Gamma models are built on the distance data and fitted with the expectation-maximization algorithm. The best-fitted model is selected with the second-order Akaike information criterion. Candidate initial centers are estimated from the objects in each component of the best-fitted model. A cluster tree is built from the distance matrix of the candidate initial centers and divided into K branches. The objects in each branch are analyzed with the k-nearest neighbor algorithm to select the initial cluster centers. The experimental results show that the Slice_OP algorithm outperformed the state-of-the-art k-means++ algorithm and random center initialization in the k-means algorithm on synthetic and real-world datasets.
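As a rough illustration of two steps named in the abstract, the sketch below maps objects to one-dimensional distances from a single observation point and ranks candidate models by the second-order Akaike information criterion (AICc). It is a minimal sketch under stated assumptions, not the authors' implementation. The function names, the use of Euclidean distance, the parameter count of 3M - 1 for an M-component Gamma mixture, and the log-likelihood values are all illustrative choices that do not come from the paper.

```python
import math


def distances_to_observation_point(data, observation_point):
    """Map each object to its Euclidean distance from one observation point.

    Euclidean distance is an assumption; the abstract does not name the metric.
    """
    return [math.dist(x, observation_point) for x in data]


def aicc(log_likelihood, n_params, n_samples):
    """Second-order AIC: AIC plus the small-sample correction term."""
    if n_samples - n_params - 1 <= 0:
        raise ValueError("AICc requires n_samples > n_params + 1")
    aic = 2 * n_params - 2 * log_likelihood
    return aic + (2 * n_params * (n_params + 1)) / (n_samples - n_params - 1)


def select_best_model(candidates, n_samples):
    """Return the label of the candidate with the lowest AICc.

    candidates: list of (label, log_likelihood, n_params) tuples.
    """
    return min(candidates, key=lambda c: aicc(c[1], c[2], n_samples))[0]


# Two toy objects in 2-D, observed from the origin (hypothetical placement).
toy_distances = distances_to_observation_point([(0.0, 0.0), (3.0, 4.0)], (0.0, 0.0))

# Made-up log-likelihoods for mixtures with M = 1, 2, 3 components.
# Parameter count assumes 2 per Gamma component plus M - 1 mixing weights.
toy_candidates = [("M=1", -120.0, 2), ("M=2", -100.0, 5), ("M=3", -99.5, 8)]
best_label = select_best_model(toy_candidates, n_samples=50)
```

The correction term grows with the number of parameters relative to the sample size, so a mixture with more components has to improve the likelihood noticeably before it is preferred.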

Acknowledgment

This work was supported by the National Natural Science Foundation of China (Grant Nos. 61473194 and 61472258) and the Shenzhen-Hong Kong Technology Cooperation Foundation (Grant No. SGLH20161209101100926).

Copyright information

© 2018 Springer Nature Switzerland AG

About this paper

Cite this paper

Masud, M.A., Huang, J.Z., Zhong, M., Fu, X., Mahmud, M.S. (2018). Slice_OP: Selecting Initial Cluster Centers Using Observation Points. In: Gan, G., Li, B., Li, X., Wang, S. (eds) Advanced Data Mining and Applications. ADMA 2018. Lecture Notes in Computer Science, vol 11323. Springer, Cham. https://doi.org/10.1007/978-3-030-05090-0_2

DOI: https://doi.org/10.1007/978-3-030-05090-0_2

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-05089-4

Online ISBN: 978-3-030-05090-0

eBook Packages: Computer Science, Computer Science (R0)