Abstract

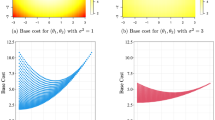

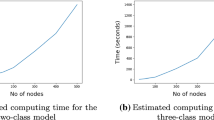

We propose universal clustering in line with the concepts of universal estimation. To illustrate the model, we introduce a family of power loss functions in probabilistic space that is marginally linked to the Kullback-Leibler divergence. The model proved effective when applied to synthetic data. We also consider a large web-traffic dataset; the aim of that experiment is to explain and understand the way people interact with web sites.

The paper also proposes a special regularization to ensure consistency of the corresponding clustering model.

This work was supported by grants from the Australian Research Council. National ICT Australia is funded through the Australian Government initiative.

Copyright information

© 2005 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Nikulin, V., Smola, A.J. (2005). Universal Clustering with Regularization in Probabilistic Space. In: Perner, P., Imiya, A. (eds) Machine Learning and Data Mining in Pattern Recognition. MLDM 2005. Lecture Notes in Computer Science, vol 3587. Springer, Berlin, Heidelberg. https://doi.org/10.1007/11510888_15

DOI: https://doi.org/10.1007/11510888_15

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-26923-6

Online ISBN: 978-3-540-31891-0

eBook Packages: Computer Science, Computer Science (R0)