Abstract

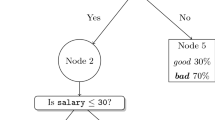

In the context of classification problems, algorithms that generate multivariate trees are able to explore multiple representation languages by using decision tests based on a combination of attributes. In the regression setting, model tree algorithms also explore multiple representation languages, but they do so by using linear models at leaf nodes. In this work we study the effects of using combinations of attributes at decision nodes, at leaf nodes, or at both, in regression and classification tree learning. To study the use of functional nodes at different places and for different types of modeling, we introduce a simple unifying framework for multivariate tree learning. This framework combines a univariate decision tree with a linear function by means of constructive induction. Decision trees derived from the framework can use decision nodes with multivariate tests and leaf nodes that make predictions using linear functions. Multivariate decision nodes are built when growing the tree, while functional leaves are built when pruning the tree. We experimentally evaluate a univariate tree, a multivariate tree using linear combinations at inner and leaf nodes, and two simplified versions that restrict linear combinations to inner nodes and to leaves, respectively. The experimental evaluation shows that all functional tree variants exhibit similar performance, each with advantages on different datasets. In this study the full model has a marginal advantage. These results lead us to study the role of functional leaves and nodes. We use the bias-variance decomposition of the error, cluster analysis, and learning curves as tools for analysis. We observe that, in the datasets under study and for both classification and regression, the use of multivariate decision nodes has more impact on the bias component of the error, while the use of multivariate leaves has more impact on the variance component.
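The constructive-induction step for multivariate decision nodes can be illustrated with a small sketch for the regression case. At a node, a linear function is fitted to the node's examples, its output is appended as a new attribute, and the usual univariate split search then chooses among the original attributes and the constructed one. This is a minimal sketch under stated assumptions, not the paper's implementation: ordinary least squares as the linear function, sum of squared errors as the split criterion, and the function names (`fit_linear`, `best_split`, `functional_node_split`) are all hypothetical choices made for illustration.

```python
from typing import List, Optional, Tuple

Row = List[float]


def fit_linear(X: List[Row], y: List[float], ridge: float = 1e-9) -> List[float]:
    """Least-squares weights [intercept, w_1, ..., w_d] via the normal equations (assumed choice)."""
    d = len(X[0]) + 1
    A = [[1.0] + list(row) for row in X]  # prepend a constant column for the intercept
    # Augmented matrix [A^T A + ridge*I | A^T y]; the tiny ridge term guards against singularity.
    M = [
        [sum(a[i] * a[j] for a in A) + (ridge if i == j else 0.0) for j in range(d)]
        + [sum(a[i] * t for a, t in zip(A, y))]
        for i in range(d)
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for c in range(d):
        p = max(range(c, d), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        pivot = M[c][c]
        M[c] = [v / pivot for v in M[c]]
        for r in range(d):
            if r != c:
                f = M[r][c]
                M[r] = [vr - f * vc for vr, vc in zip(M[r], M[c])]
    return [M[i][d] for i in range(d)]


def predict(w: List[float], row: Row) -> float:
    return w[0] + sum(wi * xi for wi, xi in zip(w[1:], row))


def sse(values: List[float]) -> float:
    """Sum of squared deviations from the mean (impurity of a regression node)."""
    if not values:
        return 0.0
    m = sum(values) / len(values)
    return sum((v - m) ** 2 for v in values)


def best_split(X: List[Row], y: List[float]) -> Tuple[Optional[int], Optional[float], float]:
    """Exhaustive univariate search: returns (attribute, threshold, SSE after split)."""
    best: Tuple[Optional[int], Optional[float], float] = (None, None, sse(y))
    for a in range(len(X[0])):
        for thr in sorted({row[a] for row in X})[:-1]:  # drop the max so the right side is non-empty
            left = [t for row, t in zip(X, y) if row[a] <= thr]
            right = [t for row, t in zip(X, y) if row[a] > thr]
            total = sse(left) + sse(right)
            if total < best[2]:
                best = (a, thr, total)
    return best


def functional_node_split(X: List[Row], y: List[float]):
    """Constructive induction: add the linear model's output as a new attribute, then split."""
    w = fit_linear(X, y)
    X_aug = [list(row) + [predict(w, row)] for row in X]  # constructed attribute is the last one
    return best_split(X_aug, y), w


if __name__ == "__main__":
    # Toy target that depends on x0 + x1, so no single original attribute separates it.
    grid = [0.0, 0.25, 0.5, 0.75, 1.0]
    X = [[a, b] for a in grid for b in grid]
    y = [1.0 if a + b > 1.0 else 0.0 for a, b in X]
    (attr, thr, err), w = functional_node_split(X, y)
    print(attr, err)  # expected: 2 0.0 (index 2 is the constructed attribute)
```

In the toy example, the constructed attribute behaves as an oblique test on x0 + x1, which the univariate search alone cannot express. Functional leaves, which the abstract says are built during pruning, are not shown; a leaf variant would compare an error estimate for the constant prediction against one for the linear prediction, and the paper's specific estimators are not reproduced here.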




Cite this article

Gama, J. Functional Trees. Mach Learn 55, 219–250 (2004). https://doi.org/10.1023/B:MACH.0000027782.67192.13
