Abstract

Bibliometric indicators are widely used to compare performance between units operating in different fields of science.

For cross-field comparisons, article citation rates have to be normalised to baseline values because citation practices vary between fields in both timing and volume. Baseline citation values themselves vary according to the level at which articles are aggregated (journal, sub-field, field). Consequently, the normalised citation performance of each research unit will depend on the level of aggregation, or ‘zoom’, that was used when the baselines were calculated.
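
The dependence on zoom can be illustrated with a minimal sketch. It is not the authors' procedure: the records, the group labels (`J1`, `SF1`, `F1`) and the helper names are hypothetical, the baseline is assumed to be a simple mean of citations per article within each group, and the unit's impact is assumed to be the average of per-article ratios. Publication year, which matters for timing in practice, is ignored here.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical toy records: (citations, journal, sub_field, field).
ARTICLES = [
    (10, "J1", "SF1", "F1"),
    (2,  "J1", "SF1", "F1"),
    (4,  "J2", "SF1", "F1"),
    (8,  "J3", "SF2", "F1"),
]

# Position of each aggregation level inside a record.
LEVELS = {"journal": 1, "sub_field": 2, "field": 3}


def baselines(articles, level):
    """Mean citations per article for every group at the given level."""
    idx = LEVELS[level]
    groups = defaultdict(list)
    for record in articles:
        groups[record[idx]].append(record[0])
    return {key: mean(values) for key, values in groups.items()}


def normalised_impact(unit_articles, all_articles, level):
    """Average of (citations / baseline) over the unit's articles.

    Assumes every baseline is non-zero, which holds for the toy data.
    """
    idx = LEVELS[level]
    base = baselines(all_articles, level)
    return mean(record[0] / base[record[idx]] for record in unit_articles)


# A hypothetical research unit that authored the first and third articles.
unit = [ARTICLES[0], ARTICLES[2]]
impact_by_level = {
    level: normalised_impact(unit, ARTICLES, level) for level in LEVELS
}

for level, value in impact_by_level.items():
    print(f"{level:>9}: {value:.4f}")
```

With these toy numbers the same two articles score differently at each of the three levels, purely because the baseline changes.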

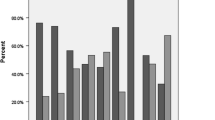

Here, we calculate the citation performance of UK research units at each of three levels of article aggregation. We then compare this with the grade awarded to each unit by external peer review. We find that the correlation between average normalised citation impact and peer-reviewed grade does indeed vary according to the selected level of zoom.
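
A comparison of this kind might be sketched as follows. The abstract does not say which correlation statistic was used; Spearman's rank correlation is assumed here only because peer-review grades are ordinal. The grades, impact values and function names are hypothetical illustrations, not data from the study.

```python
from math import sqrt


def average_ranks(values):
    """Rank values from 1 to n; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with the one at position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation of two equal-length, non-constant sequences."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def spearman(x, y):
    """Spearman's rho: Pearson correlation computed on average ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical peer-review grades for four units, and the same units'
# normalised impact computed against baselines at each zoom level.
grades = [3, 4, 5, 5]
impact = {
    "journal":   [1.1, 0.9, 1.0, 1.2],
    "sub_field": [0.8, 1.0, 1.1, 1.3],
    "field":     [0.7, 1.0, 1.4, 1.2],
}

rho_by_level = {level: spearman(vals, grades) for level, vals in impact.items()}

for level, rho in rho_by_level.items():
    print(f"{level:>9}: rho = {rho:.3f}")
```

In this toy case the journal-level values agree with the grades less closely than the sub-field and field values do, which is the kind of zoom-dependent difference the comparison is designed to detect.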

The possibility that the level of ‘zoom’ will affect our assessment of relative impact is an important insight. Any evaluation methodology would need to take into account the fact that more than one view, and hence more than one interpretation, of performance might exist. This is likely to be a serious challenge unless a reference indicator is available, and a reflective review will generally require the evaluation to be carried out at multiple levels.

About this article

Cite this article

Adams, J., Gurney, K. & Jackson, L. Calibrating the zoom — a test of Zitt’s hypothesis. Scientometrics 75, 81–95 (2008). https://doi.org/10.1007/s11192-007-1832-7

DOI: https://doi.org/10.1007/s11192-007-1832-7