Abstract

Based on eigenvalue analyses, well-structured upper bounds are obtained for the condition numbers of the scaled memoryless Broyden–Fletcher–Goldfarb–Shanno and Davidon–Fletcher–Powell quasi-Newton updating formulae. It is then shown that the scaling parameter proposed by Oren and Spedicato is the unique minimizer of the given upper bound for the condition number of the scaled memoryless Broyden–Fletcher–Goldfarb–Shanno update, and that the scaling parameter proposed by Oren and Luenberger is the unique minimizer of the given upper bound for the condition number of the scaled memoryless Davidon–Fletcher–Powell update. Thus, the scaling parameters proposed by Oren et al. may enhance the numerical stability of the self-scaling memoryless Broyden–Fletcher–Goldfarb–Shanno and Davidon–Fletcher–Powell methods.
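
To make the objects in the abstract concrete, the following is a minimal Python sketch and not the author's code. It assumes the textbook inverse-Hessian forms of the BFGS and DFP updates applied to a scaled identity `theta * I`, and the memoryless forms of the two scaling parameters as they are commonly stated: `s^T y / ||y||^2` (Oren and Spedicato) and `||s||^2 / s^T y` (Oren and Luenberger). The symbols `s`, `y`, and `theta`, all function names, and the sample vectors are illustrative assumptions that do not appear in the abstract. The condition-number helper is restricted to 2x2 matrices to keep the sketch dependency-free.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def oren_spedicato(s, y):
    # Assumed memoryless form: theta = s^T y / ||y||^2
    return dot(s, y) / dot(y, y)

def oren_luenberger(s, y):
    # Assumed memoryless form: theta = ||s||^2 / s^T y
    return dot(s, s) / dot(s, y)

def memoryless_bfgs(s, y, theta):
    # Textbook BFGS inverse update applied to theta * I:
    # H = theta*(I - (s y^T + y s^T)/(s^T y))
    #     + (1 + theta*||y||^2/(s^T y)) * s s^T/(s^T y)
    n, sy = len(s), dot(s, y)
    c = (1.0 + theta * dot(y, y) / sy) / sy
    return [[theta * ((1.0 if i == j else 0.0)
                      - (s[i] * y[j] + y[i] * s[j]) / sy)
             + c * s[i] * s[j]
             for j in range(n)] for i in range(n)]

def memoryless_dfp(s, y, theta):
    # Textbook DFP inverse update applied to theta * I:
    # H = theta*I - theta * y y^T/||y||^2 + s s^T/(s^T y)
    n, sy, yy = len(s), dot(s, y), dot(y, y)
    return [[theta * ((1.0 if i == j else 0.0) - y[i] * y[j] / yy)
             + s[i] * s[j] / sy
             for j in range(n)] for i in range(n)]

def cond_2x2(H):
    # Spectral condition number of a symmetric positive definite
    # 2x2 matrix, from its trace and determinant.
    tr = H[0][0] + H[1][1]
    det = H[0][0] * H[1][1] - H[0][1] * H[1][0]
    lam_max = (tr + math.sqrt(max(tr * tr - 4.0 * det, 0.0))) / 2.0
    lam_min = det / lam_max
    return lam_max / lam_min

if __name__ == "__main__":
    # Illustrative vectors with s^T y > 0 (curvature condition).
    s, y = [1.0, 0.5], [2.0, 3.0]
    for name, build in (("BFGS", memoryless_bfgs), ("DFP", memoryless_dfp)):
        for label, theta in (("Oren-Spedicato", oren_spedicato(s, y)),
                             ("Oren-Luenberger", oren_luenberger(s, y)),
                             ("unscaled", 1.0)):
            print(name, label, theta, cond_2x2(build(s, y, theta)))
```

Both updates satisfy the secant equation `H y = s` for any positive `theta`, so the scaling changes only the spectrum. The abstract's result concerns minimizers of upper bounds on the condition number, so the printed values for one pair of vectors illustrate the quantities involved and do not demonstrate the theorem.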

References

Sun, W., Yuan, Y.X.: Optimization Theory and Methods: Nonlinear Programming. Springer, New York (2006)

Wolfe, P.: Convergence conditions for ascent methods. SIAM Rev. 11(2), 226–235 (1969)

Oren, S.S.: Self-scaling variable metric (SSVM) algorithms. II. Implementation and experiments. Manag. Sci. 20(5), 863–874 (1974)

Oren, S.S., Luenberger, D.G.: Self-scaling variable metric (SSVM) algorithms. I. Criteria and sufficient conditions for scaling a class of algorithms. Manag. Sci. 20(5), 845–862 (1974)

Oren, S.S., Spedicato, E.: Optimal conditioning of self-scaling variable metric algorithms. Math. Program. 10(1), 70–90 (1976)

Yin, H.X., Du, D.L.: The global convergence of self-scaling BFGS algorithm with nonmonotone line search for unconstrained nonconvex optimization problems. Acta Math. Sin. Engl. Ser. 23(7), 1233–1240 (2007)

Barzilai, J., Borwein, J.M.: Two-point stepsize gradient methods. IMA J. Numer. Anal. 8(1), 141–148 (1988)

Andrei, N.: A scaled BFGS preconditioned conjugate gradient algorithm for unconstrained optimization. Appl. Math. Lett. 20(6), 645–650 (2007)

Andrei, N.: Scaled conjugate gradient algorithms for unconstrained optimization. Comput. Optim. Appl. 38(3), 401–416 (2007)

Andrei, N.: Scaled memoryless BFGS preconditioned conjugate gradient algorithm for unconstrained optimization. Optim. Methods Softw. 22(4), 561–571 (2007)

Andrei, N.: A scaled nonlinear conjugate gradient algorithm for unconstrained optimization. Optimization 57(4), 549–570 (2008)

Andrei, N.: Accelerated scaled memoryless BFGS preconditioned conjugate gradient algorithm for unconstrained optimization. Eur. J. Oper. Res. 204(3), 410–420 (2010)

Babaie-Kafaki, S.: A note on the global convergence theorem of the scaled conjugate gradient algorithms proposed by Andrei. Comput. Optim. Appl. 52(2), 409–414 (2012)

Babaie-Kafaki, S.: A new proof for the sufficient descent condition of Andrei’s scaled conjugate gradient algorithms. Pac. J. Optim. 9(1), 23–28 (2013)

Babaie-Kafaki, S.: A modified scaled memoryless BFGS preconditioned conjugate gradient method for unconstrained optimization. 4OR 11(4), 361–374 (2013)

Babaie-Kafaki, S.: Two modified scaled nonlinear conjugate gradient methods. J. Comput. Appl. Math. 261(5), 172–182 (2014)

Babaie-Kafaki, S., Ghanbari, R.: A modified scaled conjugate gradient method with global convergence for nonconvex functions. Bull. Belg. Math. Soc. Simon Stevin 21(3), 465–477 (2014)

Li, D.H., Fukushima, M.: A modified BFGS method and its global convergence in nonconvex minimization. J. Comput. Appl. Math. 129(1–2), 15–35 (2001)

Zhou, W., Zhang, L.: A nonlinear conjugate gradient method based on the MBFGS secant condition. Optim. Methods Softw. 21(5), 707–714 (2006)

Zhang, J.Z., Deng, N.Y., Chen, L.H.: New quasi-Newton equation and related methods for unconstrained optimization. J. Optim. Theory Appl. 102(1), 147–167 (1999)

Zhang, J., Xu, C.: Properties and numerical performance of quasi-Newton methods with modified quasi-Newton equations. J. Comput. Appl. Math. 137(2), 269–278 (2001)

Babaie-Kafaki, S., Ghanbari, R., Mahdavi-Amiri, N.: Two new conjugate gradient methods based on modified secant equations. J. Comput. Appl. Math. 234(5), 1374–1386 (2010)

Yuan, Y.X., Byrd, R.H.: Non-quasi-Newton updates for unconstrained optimization. J. Comput. Math. 13(2), 95–107 (1995)

Wei, Z., Li, G., Qi, L.: New quasi-Newton methods for unconstrained optimization problems. Appl. Math. Comput. 175(2), 1156–1188 (2006)

Babaie-Kafaki, S.: A modified BFGS algorithm based on a hybrid secant equation. Sci. China Math. 54(9), 2019–2036 (2011)

Li, G., Tang, C., Wei, Z.: New conjugacy condition and related new conjugate gradient methods for unconstrained optimization. J. Comput. Appl. Math. 202(2), 523–539 (2007)

Yuan, Y.X.: A modified BFGS algorithm for unconstrained optimization. IMA J. Numer. Anal. 11(3), 325–332 (1991)

Dai, Y.H., Kou, C.X.: A nonlinear conjugate gradient algorithm with an optimal property and an improved Wolfe line search. SIAM J. Optim. 23(1), 296–320 (2013)

Watkins, D.S.: Fundamentals of Matrix Computations. Wiley, New York (2002)

Babaie-Kafaki, S.: A quadratic hybridization of Polak–Ribière–Polyak and Fletcher–Reeves conjugate gradient methods. J. Optim. Theory Appl. 154(3), 916–932 (2012)

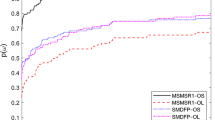

Dolan, E.D., Moré, J.J.: Benchmarking optimization software with performance profiles. Math. Program. 91(2, Ser. A), 201–213 (2002)

Andrei, N.: Open problems in conjugate gradient algorithms for unconstrained optimization. Bull. Malays. Math. Sci. Soc. 34(2), 319–330 (2011)

Acknowledgments

This research was supported by a grant from IPM (No. 93650051). The author is grateful to the anonymous reviewers for their valuable comments and suggestions, which helped to improve the presentation. He also thanks Professor Michael Navon for providing the line search code.

Cite this article

Babaie-Kafaki, S. On Optimality of the Parameters of Self-Scaling Memoryless Quasi-Newton Updating Formulae. J Optim Theory Appl 167, 91–101 (2015). https://doi.org/10.1007/s10957-015-0724-x

DOI: https://doi.org/10.1007/s10957-015-0724-x

Keywords

- Unconstrained optimization

- Large-scale optimization

- Memoryless quasi-Newton update

- Eigenvalue

- Condition number