Abstract

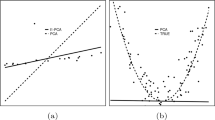

We consider high-dimensional data that contain a linear low-dimensional non-Gaussian structure contaminated with Gaussian noise, and discuss a method for identifying this non-Gaussian subspace. For this problem, our previous work provided a very general semi-parametric framework called non-Gaussian component analysis (NGCA). NGCA has a uniform probabilistic bound on the error of finding the non-Gaussian components. Within this framework, we presented an efficient NGCA algorithm called multi-index projection pursuit (multi-index PP). The algorithm is justified as an extension of ordinary projection pursuit (PP) methods and is shown to outperform PP, particularly when the data have a complicated non-Gaussian structure. However, it turns out that multi-index PP is not optimal in the context of NGCA. In this article, we therefore develop an alternative algorithm called iterative metric adaptation for radial kernel functions (IMAK), which is theoretically better justified within the NGCA framework. Numerical examples demonstrate that the new algorithm tends to outperform existing methods.
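To make the data setting concrete, the following is a minimal toy sketch of data with a low-dimensional non-Gaussian subspace hidden among Gaussian directions. It is not the IMAK or multi-index PP algorithm; the function names `sample_ngca_toy` and `excess_kurtosis`, the choice of uniform sources, the independent unit-variance Gaussian noise, and the use of excess kurtosis as a simple PP-style non-Gaussianity index are all assumptions made for illustration.

```python
import numpy as np


def sample_ngca_toy(n=20000, d=10, m=2, seed=0):
    """Draw n points in d dimensions with an m-dimensional non-Gaussian part.

    Hypothetical toy model: m uniform (non-Gaussian) coordinates and d - m
    standard Gaussian coordinates, rotated by a random orthogonal matrix so
    the non-Gaussian subspace is not axis-aligned.
    """
    rng = np.random.default_rng(seed)
    # Uniform on [-sqrt(3), sqrt(3)] has zero mean and unit variance.
    s = rng.uniform(-np.sqrt(3.0), np.sqrt(3.0), size=(n, m))
    g = rng.standard_normal((n, d - m))
    z = np.hstack([s, g])
    # Random orthogonal matrix from the QR decomposition of a Gaussian matrix.
    q, _ = np.linalg.qr(rng.standard_normal((d, d)))
    x = z @ q.T  # each row is q @ z_i
    return x, q


def excess_kurtosis(u):
    """Sample excess kurtosis; close to 0 for Gaussian data."""
    u = (u - u.mean()) / u.std()
    return float(np.mean(u ** 4) - 3.0)


x, q = sample_ngca_toy()
# Columns 0..m-1 of q span the non-Gaussian subspace; the rest are Gaussian.
k_signal = excess_kurtosis(x @ q[:, 0])
k_noise = excess_kurtosis(x @ q[:, -1])
print(f"excess kurtosis, non-Gaussian direction: {k_signal:.3f}")
print(f"excess kurtosis, Gaussian direction:     {k_noise:.3f}")
```

In this toy setting the true subspace is known, so the index can be evaluated directly along it. In practice the subspace is unknown and must be estimated, which is the problem NGCA addresses.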

Cite this article

Kawanabe, M., Sugiyama, M., Blanchard, G. et al. A new algorithm of non-Gaussian component analysis with radial kernel functions. AISM 59, 57–75 (2007). https://doi.org/10.1007/s10463-006-0098-9

DOI: https://doi.org/10.1007/s10463-006-0098-9