Abstract

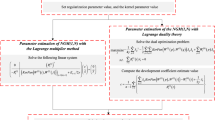

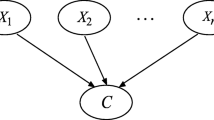

Constructing reliable prediction intervals (PIs) for industrial time series is important for decision-making in production practice. Because industrial data typically exhibit high noise levels and incomplete inputs, this study proposes a PI construction method for industrial time series based on a high-order dynamic Bayesian network (DBN). To avoid specifying the number and type of basis functions in advance, a linear combination of kernel functions is used to describe the relationships between the nodes in the network, and a learning method based on a scoring criterion, the sparse Bayesian score, is used to obtain suitable model parameters such as the weights and the variances. To verify the performance of the proposed method, two types of time series are employed: the classical Mackey-Glass data with additive noise and a real-world industrial data set. The results indicate that the proposed method is effective for constructing PIs for industrial data with incomplete input.
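To make the idea of a kernel-based model with prediction intervals concrete, the following is a minimal sketch and not the method of the paper. It fits a linear combination of Gaussian kernels with a Bayesian linear model and derives approximate 95% intervals from the predictive variance. The function names, the kernel width, the prior precision `alpha`, and the noise variance `noise_var` are all illustrative assumptions; in particular, the hyperparameters are fixed here, whereas the abstract describes learning the weights and variances through a sparse Bayesian score, and no DBN structure is modeled.

```python
import numpy as np

def gaussian_kernel(x, centers, width):
    """Design matrix with Phi[i, j] = exp(-(x_i - c_j)^2 / (2 * width^2))."""
    diff = x[:, None] - centers[None, :]
    return np.exp(-diff**2 / (2.0 * width**2))

def fit_bayesian_kernel_model(x, y, width=1.0, alpha=1e-2, noise_var=0.01):
    """Gaussian posterior over weights w for y = Phi(x) w + noise.

    Assumes a fixed prior w ~ N(0, I / alpha) and a fixed noise variance
    (illustrative choices; they are not learned in this sketch).
    """
    phi = gaussian_kernel(x, x, width)  # one kernel centred on each training input
    precision = alpha * np.eye(len(x)) + phi.T @ phi / noise_var
    cov = np.linalg.inv(precision)
    mean = cov @ phi.T @ y / noise_var
    return mean, cov

def predict_interval(x_new, x_train, mean, cov, width=1.0, noise_var=0.01, z=1.96):
    """Predictive mean and a symmetric interval of +/- z predictive std deviations."""
    phi_new = gaussian_kernel(x_new, x_train, width)
    mu = phi_new @ mean
    # Predictive variance = noise variance + uncertainty in the weights.
    var = noise_var + np.einsum("ij,jk,ik->i", phi_new, cov, phi_new)
    half = z * np.sqrt(var)
    return mu - half, mu, mu + half

# Synthetic example: a noisy sine wave (hypothetical data, not from the paper).
rng = np.random.default_rng(0)
x_train = np.linspace(0.0, 6.0, 50)
y_train = np.sin(x_train) + rng.normal(0.0, 0.1, size=x_train.shape)

w_mean, w_cov = fit_bayesian_kernel_model(x_train, y_train)
x_test = np.linspace(1.0, 5.0, 20)
lower, mean_pred, upper = predict_interval(x_test, x_train, w_mean, w_cov)
```

The interval widens where the weight posterior is more uncertain and never shrinks below the band implied by the assumed noise variance, which is the basic behavior a PI construction method needs to capture.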

Acknowledgments

This work is supported by the National Natural Science Foundation of China (No. 61273037, 61304213, 61473056, 61533005, 61522304, U1560102), the National Sci-Tech Support Plan (No. 2015BAF22B01) and the Fundamental Research Funds for the Central Universities (DUT15YQ113).

Cite this article

Chen, L., Liu, Y., Zhao, J. et al. Prediction intervals for industrial data with incomplete input using kernel-based dynamic Bayesian networks. Artif Intell Rev 46, 307–326 (2016). https://doi.org/10.1007/s10462-016-9465-y

DOI: https://doi.org/10.1007/s10462-016-9465-y