Abstract

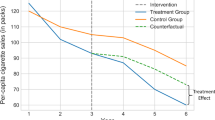

Resampling methods for stationary sequences have been well studied over the last couple of decades. In this paper, we focus on nonstationary time series in which the nonstationarity is due to a slowly changing deterministic trend. We show that the local block bootstrap is appropriate for inference in this locally stationary setting without the need to detrend the data. We prove the asymptotic consistency of the local block bootstrap in the smooth trend model and complement the theoretical results with a finite-sample simulation.
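To make the resampling idea concrete, the following is a minimal sketch of a local block bootstrap draw, not the authors' implementation. It assumes the usual reading of the method: the pseudo-series is assembled from blocks of consecutive observations, and each block is drawn only from a neighborhood of the position it will occupy, so the local level of the trend is preserved without detrending. The names `local_block_bootstrap`, `block_size`, and `window` are hypothetical; the precise conditions on these tuning parameters are given in the paper and are not reproduced here.

```python
import numpy as np


def local_block_bootstrap(x, block_size, window, rng=None):
    """Draw one pseudo-series of the same length as x (illustrative sketch).

    block_size: number of consecutive observations per block (assumed name).
    window: largest allowed distance, in time steps, between the position a
        block fills and the start of the block drawn for it (assumed name).
    rng: None, an integer seed, or a numpy Generator.
    """
    x = np.asarray(x)
    n = x.shape[0]
    if not 1 <= block_size <= n:
        raise ValueError("block_size must lie between 1 and len(x)")
    if window < 0:
        raise ValueError("window must be non-negative")
    rng = np.random.default_rng(rng)

    n_blocks = -(-n // block_size)  # ceiling division
    out = np.empty(n_blocks * block_size, dtype=x.dtype)
    for m in range(n_blocks):
        target = m * block_size  # position the m-th block will fill
        # Admissible start indices: close to the target and fully inside x.
        hi = min(n - block_size, target + window)
        lo = min(max(0, target - window), hi)
        start = rng.integers(lo, hi + 1)  # uniform on lo..hi inclusive
        out[target:target + block_size] = x[start:start + block_size]
    return out[:n]  # trim the overshoot of the last block


# Usage: a slowly varying trend plus noise, resampled without detrending.
t = np.linspace(0.0, 1.0, 200)
series = np.sin(2 * np.pi * t) + 0.3 * np.random.default_rng(0).standard_normal(200)
pseudo = local_block_bootstrap(series, block_size=10, window=20, rng=1)
```

Setting `window` to the full series length removes the locality restriction, so every block may start anywhere in the series; a small `window` keeps each pseudo-observation close in time to the observation it replaces.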

Additional information

Research partially supported by NSF grant DMS-10-07513. Many thanks are due to the expert referee whose comments and suggestions greatly helped in improving the paper.

About this article

Cite this article

Dowla, A., Paparoditis, E. & Politis, D.N. Local block bootstrap inference for trending time series. Metrika 76, 733–764 (2013). https://doi.org/10.1007/s00184-012-0413-9

DOI: https://doi.org/10.1007/s00184-012-0413-9