Abstract

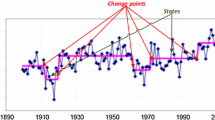

The ability to collect data is changing drastically. Nowadays, data are gathered in the form of transient and finite data streams, and memory restrictions preclude keeping all received data in memory. When dealing with massive data streams, it is mandatory to create compact representations of the data, also known as synopsis structures or summaries. Reducing memory occupancy is of utmost importance when handling a huge amount of data. This paper addresses the problem of constructing histograms from data streams under error constraints. When constructing online histograms from data streams, two main characteristics must be addressed: the ease of updating and the error of the histogram. Moreover, in dynamic environments, besides the need for compact summaries that capture the most important properties of the data, it is also essential to forget old data. Therefore, this paper presents sliding histograms and fading histograms, which are, respectively, an abrupt and a smooth strategy for forgetting outdated data.

Notes

The square error is one of the most widely used error measures in histogram construction. It is also known as the V-Optimal measure and was introduced in [14].

References

Babcock, B., Babu, S., Datar, M., Motwani, R., Widom, J.: Models and issues in data stream systems. In: Proceedings of the 21st ACM SIGMOD–SIGACT–SIGART Symposium on Principles of Database Systems, PODS ’02, pp. 1–16. ACM, New York (2002). doi:10.1145/543613.543615

Barbará, D.: Requirements for clustering data streams. SIGKDD Explor. Newsl. 3(2), 23–27 (2002). doi:10.1145/507515.507519

Chakrabarti, K., Garofalakis, M.N., Rastogi, R., Shim, K.: Approximate query processing using wavelets. In: Abbadi, A.E., Brodie, M.L., Chakravarthy, S., Dayal, U., Kamel, N., Schlageter, G., Whang, K.Y. (eds.) VLDB 2000. Proceedings of 26th International Conference on Very Large Data Bases, 10–14 September 2000, Cairo, pp. 111–122. Morgan Kaufmann, Burlington (2000)

Cormode, G., Muthukrishnan, S.: An improved data stream summary: the count-min sketch and its applications. J. Algorithms 55(1), 58–75 (2005). doi:10.1016/j.jalgor.2003.12.001

Cormode, G., Muthukrishnan, S.: What’s hot and what’s not: tracking most frequent items dynamically. ACM Trans. Database Syst. 30(1), 249–278 (2005). doi:10.1145/1061318.1061325

Correa, M., Bielza, C., Pamies-Teixeira, J.: Comparison of bayesian networks and artificial neural networks for quality detection in a machining process. Expert Syst. Appl. 36(3), 7270–7279 (2009). http://dblp.uni-trier.de/db/journals/eswa/eswa36.html#CorreaBP09

Freedman, D., Diaconis, P.: On the histogram as a density estimator: L2 theory. Probab. Theory Relat. Fields 57(4), 453–476 (1981). doi:10.1007/BF01025868

Gama, J., Sebastião, R., Rodrigues, P.P.: On evaluating stream learning algorithms. Mach. Learn. 90(3), 317–346 (2013)

Gibbons, P.B., Matias, Y.: Synopsis data structures for massive data sets. In: ACM–SIAM Symposium on Discrete Algorithms, pp. 909–910 (1999). doi:10.1145/314500.315083

Gilbert, A.C., Kotidis, Y., Muthukrishnan, S., Strauss, M.J.: One-pass wavelet decompositions of data streams. IEEE Trans. Knowl. Data Eng. 15(3), 541–554 (2003). doi:10.1109/TKDE.2003.1198389

Guha, S., Koudas, N., Shim, K.: Approximation and streaming algorithms for histogram construction problems. ACM Trans. Database Syst. 31(1), 396–438 (2006). doi:10.1145/1132863.1132873

Guha, S., Shim, K., Woo, J.: Rehist: relative error histogram construction algorithms. In: Proceedings of the 30th International Conference on Very Large Data Bases, pp. 300–311 (2004)

Ioannidis, Y.: The history of histograms (abridged). In: VLDB Endowment. Proceedings of the 29th International Conference on Very Large Data Bases, vol. 29, VLDB ’03, pp. 19–30 (2003). http://dl.acm.org/citation.cfm?id=1315451.1315455

Ioannidis, Y.E., Poosala, V.: Balancing histogram optimality and practicality for query result size estimation. In: Carey, M.J., Schneider, D.A. (eds.) Proceedings of the 1995 ACM SIGMOD International Conference on Management of Data, San Jose, 22–25 May 1995, pp. 233–244. ACM Press, New York (1995)

Jagadish, H.V., Koudas, N., Muthukrishnan, S., Poosala, V., Sevcik, K.C., Suel, T.: Optimal histograms with quality guarantees. In: Proceedings of the 24th International Conference on Very Large Data Bases, VLDB ’98, pp. 275–286. Morgan Kaufmann Publishers Inc., San Francisco (1998). http://dl.acm.org/citation.cfm?id=645924.671191

Karras, P., Mamoulis, N.: Hierarchical synopses with optimal error guarantees. ACM Trans. Database Syst. 33, 1–53 (2008). doi:10.1145/1386118.1386124

Lin, M.Y., Hsueh, S.C., Hwang, S.K.: Interactive mining of frequent itemsets over arbitrary time intervals in a data stream. In: Proceedings of the 19th Conference on Australasian Database, vol. 75, ADC ’08, pp. 15–21. Australian Computer Society Inc., Darlinghurst (2007). http://dl.acm.org/citation.cfm?id=1378307.1378315

Misra, J., Gries, D.: Finding repeated elements. Sci. Comput. Program. 2, 143–152 (1982). doi:10.1016/0167-6423(82)90012-0

Poosala, V., Ioannidis, Y.E., Haas, P.J., Shekita, E.J.: Improved histograms for selectivity estimation of range predicates. In: SIGMOD Conference, pp. 294–305 (1996)

Rodrigues, P., Gama, J., Sebastião, R.: Memoryless fading windows in ubiquitous settings. In: Proceedings of Ubiquitous Data Mining (UDM) Workshop, in conjunction with the 19th European Conference on Artificial Intelligence, ECAI 2010, pp. 27–32 (2010)

Scott, D.W.: On optimal and data-based histograms. Biometrika 66(3), 605–610 (1979). doi:10.1093/biomet/66.3.605

Street, W.N., Kim, Y.: A streaming ensemble algorithm (sea) for large-scale classification. In: Proceedings of the 7th ACM SIGKDD International Conference on Knowledge Discovery and Data Mining, pp. 377–382. ACM Press, New York (2001)

Sturges, H.A.: The choice of a class interval. Am. Stat. Assoc. 21, 65–66 (1926)

Vitter, J.S.: Random sampling with a reservoir. ACM Trans. Math. Softw. 11(1), 37–57 (1985). doi:10.1145/3147.3165

Acknowledgments

The work of Raquel Sebastião was supported by FCT (Portuguese Foundation for Science and Technology) under the PhD Grant SFRH/BD/41569/2007. The authors acknowledge the support of the European Commission through the project MAESTRA (Grant number ICT-2013-612944). This work was also funded by the European Regional Development Fund through the COMPETE Program, by Portuguese funds through the FCT within project FCOMP-01-0124-FEDER-022701, and by the Projects NORTE-07-0124-FEDER-000056/000059, which are financed by the North Portugal Regional Operational Program (ON.2 O Novo Norte), under the National Strategic Reference Framework (NSRF).

Appendix: Fading histograms

This appendix presents some computations on the error of approximating the fading sliding histogram with the fading histogram. Considering the definition of histogram frequencies (1), the frequencies of a sliding histogram (with \(k\) buckets) constructed over a sliding window of length \(w\) and computed at observation \(i\) with an exponential fading factor \(\alpha \) (\(0 \ll \alpha < 1\)) can be defined as follows:
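One form consistent with the fading increment \(N_\alpha (i)\) used in this appendix, restricted to the window, is sketched below. The symbols \(F_{\alpha ,w,j}(i)\) and \(N_{\alpha ,w}(i)\) are assumed notation, and \(C_j(l)\) is taken to be 1 when observation \(x_l\) falls in bucket \(j\) and 0 otherwise.

\[
F_{\alpha ,w,j}(i) = \frac{\sum _{l=i-w+1}^{i} \alpha ^{i-l} C_j(l)}{N_{\alpha ,w}(i)}, \qquad N_{\alpha ,w}(i) = \sum _{j=1}^{k} \sum _{l=i-w+1}^{i} \alpha ^{i-l} C_j(l).
\]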

To approximate a fading sliding histogram by a fading histogram, the data older than those within the most recent window \(W = \{x_l: l=i-w+1,\ldots ,i \}\) must be taken into consideration. Therefore, for each bucket \(j = 1,\ldots , k\), the proportion of weight given to old observations (with respect to \(W\)) in the computation of the fading histogram is defined as the bucket ballast weight:
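A sketch of this definition, with \(B_{\alpha ,w,j}(i)\) as an assumed symbol for the bucket ballast weight and the sum running over the observations that precede the window:

\[
B_{\alpha ,w,j}(i) = \frac{\sum _{l=1}^{i-w} \alpha ^{i-l} C_j(l)}{N_\alpha (i)},
\]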

where \(N_\alpha (i)\) is the fading increment defined as \(N_{\alpha }(i) = \sum \nolimits _{j=1}^k \sum \nolimits _{l=1}^i \alpha ^{i-l} C_j(l)\).
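The weighted counts behind \(N_\alpha (i)\) can be maintained online: multiply every bucket count by \(\alpha \), then add 1 to the bucket that receives the new observation. The Python sketch below illustrates this under the assumption of fixed bucket breakpoints. The class and method names are illustrative and are not taken from the paper.

```python
from bisect import bisect_right


class FadingHistogram:
    """Histogram with fixed breakpoints whose counts decay by alpha per observation."""

    def __init__(self, breaks, alpha):
        if not 0.0 < alpha < 1.0:
            raise ValueError("alpha must lie in (0, 1)")
        self.breaks = list(breaks)  # k + 1 increasing bucket edges (assumed fixed)
        self.alpha = alpha
        self.counts = [0.0] * (len(self.breaks) - 1)

    def bucket(self, x):
        # Index j with breaks[j] <= x < breaks[j + 1]; values outside the
        # range are clamped to the first or last bucket.
        j = bisect_right(self.breaks, x) - 1
        return min(max(j, 0), len(self.counts) - 1)

    def update(self, x):
        # After i updates, counts[j] equals sum_{l=1}^{i} alpha^(i-l) * C_j(l).
        self.counts = [self.alpha * c for c in self.counts]
        self.counts[self.bucket(x)] += 1.0

    def increment(self):
        # Fading increment N_alpha(i): the sum of the faded counts over all buckets.
        return sum(self.counts)

    def frequencies(self):
        n = self.increment()
        return [c / n for c in self.counts] if n > 0 else list(self.counts)


hist = FadingHistogram(breaks=[0, 1, 2, 3], alpha=0.5)
for value in [0.5, 1.5, 1.5]:
    hist.update(value)
```

Each update costs one pass over the \(k\) buckets and needs no buffer of past observations, which is the practical difference from a sliding window that must store its \(w\) most recent points.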

As with the old observations, for each bucket \(j = 1,\dots , k\), the proportion of weight given to observations within the most recent window \(W\) is defined by
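Taking the proportion relative to the fading increment \(N_\alpha (i)\), and writing \(L_{\alpha ,w,j}(i)\) as an assumed symbol for it, a consistent form is

\[
L_{\alpha ,w,j}(i) = \frac{\sum _{l=i-w+1}^{i} \alpha ^{i-l} C_j(l)}{N_\alpha (i)}.
\]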

Hence, the error of approximating the fading sliding histogram with the fading histogram, both with \(k\) buckets, can be defined as
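One reading consistent with Theorem 1 sums the absolute frequency differences over the buckets. Here \(F_{\alpha ,w,j}(i)\) and \(F_{\alpha ,j}(i)\) are assumed symbols for the bucket-\(j\) frequencies of the fading sliding histogram and of the fading histogram, respectively:

\[
\Delta _{\alpha ,w}(i) = \sum _{j=1}^{k} \left| F_{\alpha ,w,j}(i) - F_{\alpha ,j}(i) \right| .
\]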

Theorem 1

Let \(\varepsilon <1\) be an admissible ballast weight for the fading histogram. Then, \(\Delta _{\alpha ,w}(i) \le 2\varepsilon \).
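The bound can be illustrated numerically. The Python sketch below assumes that \(\Delta _{\alpha ,w}(i)\) is the sum over buckets of the absolute frequency differences and that the total ballast weight is the share of the fading increment carried by observations older than the window. The function name and the synthetic stream are illustrative only.

```python
import random


def fading_errors(bucket_ids, k, alpha, w):
    """Return (error, ballast) evaluated at the last observation of the stream.

    bucket_ids[l - 1] is the bucket index (0..k-1) of observation l.
    """
    i = len(bucket_ids)
    inside = [0.0] * k   # faded weight per bucket, last w observations
    outside = [0.0] * k  # faded weight per bucket, older observations
    for l, j in enumerate(bucket_ids, start=1):
        weight = alpha ** (i - l)
        if l > i - w:
            inside[j] += weight
        else:
            outside[j] += weight
    n_window = sum(inside)
    n_all = n_window + sum(outside)
    fading_sliding = [a / n_window for a in inside]
    fading = [(a + b) / n_all for a, b in zip(inside, outside)]
    error = sum(abs(p - q) for p, q in zip(fading_sliding, fading))
    ballast = sum(outside) / n_all
    return error, ballast


random.seed(0)
stream = [random.randrange(5) for _ in range(500)]
error, ballast = fading_errors(stream, k=5, alpha=0.99, w=100)
print(error <= 2 * ballast)
```

Under these assumptions the error never exceeds twice the total ballast weight, whatever the stream, so choosing \(\alpha \) and \(w\) to keep the ballast below \(\varepsilon \) keeps the error below \(2\varepsilon \).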

Proof

From the respective definitions of the histogram frequencies, it follows that the approximation error in each bucket is

Splitting each of these errors into the contributions of the frequencies inside and outside the most recent window of size \(w\) gives

where

and

When looking for an upper bound on the error, the worst-case scenario is that these two sources of error do not cancel out but instead add up:

Hence

The upper bound on the error is given by considering all \(C_j(l) = 1\):

Then, from the definition of the bucket ballast weight, it follows that

Considering, in each bucket \(j = 1,\dots , k\), an admissible ballast weight of at most \(\varepsilon /k\), it follows that
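The chain of inequalities can be sketched compactly as follows. The shorthand is assumed rather than taken from the paper: \(a_j = \sum _{l=i-w+1}^{i} \alpha ^{i-l} C_j(l)\) and \(b_j = \sum _{l=1}^{i-w} \alpha ^{i-l} C_j(l)\) are the faded weights of bucket \(j\) inside and outside the window, \(A = \sum _{j} a_j\), and \(N = N_\alpha (i) = \sum _{j} (a_j + b_j)\). The error is assumed to be the sum of absolute frequency differences over the buckets.

\[
\begin{aligned}
\Delta _{\alpha ,w}(i) &= \sum _{j=1}^{k} \left| \frac{a_j}{A} - \frac{a_j + b_j}{N} \right| \le \sum _{j=1}^{k} \left[ a_j \left( \frac{1}{A} - \frac{1}{N} \right) + \frac{b_j}{N} \right] \\
&= \left( 1 - \frac{A}{N} \right) + \frac{N - A}{N} = 2 \sum _{j=1}^{k} \frac{b_j}{N} \le 2 \sum _{j=1}^{k} \frac{\varepsilon }{k} = 2\varepsilon .
\end{aligned}
\]

The first inequality is the triangle inequality, using \(A \le N\). The last one uses that each \(b_j/N\), the ballast weight of bucket \(j\), is at most \(\varepsilon /k\).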

Cite this article

Sebastião, R., Gama, J. & Mendonça, T. Constructing fading histograms from data streams. Prog Artif Intell 3, 15–28 (2014). https://doi.org/10.1007/s13748-014-0050-9

DOI: https://doi.org/10.1007/s13748-014-0050-9