Abstract

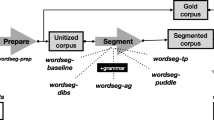

In recent years, Bayesian models have become increasingly popular as a way of understanding human cognition. Ideal learner Bayesian models assume that cognition can be usefully understood as optimal behavior under uncertainty, a hypothesis that has been supported by a number of modeling studies across various domains (e.g., Griffiths and Tenenbaum, Cognitive Psychology, 51, 354–384, 2005; Xu and Tenenbaum, Psychological Review, 114, 245–272, 2007). The models in these studies aim to explain why humans behave as they do given the task and data they encounter, but typically avoid some questions addressed by more traditional psychological models, such as how the observed behavior is produced given constraints on memory and processing. Here, we use the task of word segmentation as a case study for investigating these questions within a Bayesian framework. We consider some limitations of the infant learner, and develop several online learning algorithms that take these limitations into account. Each algorithm can be viewed as a different method of approximating the same ideal learner. When the algorithms are tested on corpora of English child-directed speech, we find that the constrained learner’s behavior depends non-trivially on how the learner’s limitations are implemented. Interestingly, sometimes biases that are helpful to an ideal learner hinder a constrained learner, and in a few cases, constrained learners perform as well as or better than the ideal learner. This suggests that the transition from a computational-level solution for acquisition to an algorithmic-level one is not straightforward.

References

Anderson J. R., Schooler L. J. (2000) The adaptive nature of memory. In: Tulving E., Craik F. I. M. (eds) The Oxford handbook of memory. Oxford University Press, Oxford, pp 557–570

Bernstein-Ratner N. (1984) Patterns of vowel modification in motherese. Journal of Child Language 11: 557–578

Blanchard D., Heinz J., Golinkoff R. (2010) Modeling the contribution of phonotactic cues to word segmentation. Journal of Child Language 37: 487–511

Brent M. (1999) An efficient, probabilistically sound algorithm for segmentation and word discovery. Machine Learning 34: 71–105

Brown S., Steyvers M. (2009) Detecting and predicting changes. Cognitive Psychology 58: 49–67

Christiansen M., Allen J., Seidenberg M. (1998) Learning to segment speech using multiple cues: A connectionist model. Language and Cognitive Processes 13: 221–268

Curtin S., Mintz T., Christiansen M. (2005) Stress changes the representational landscape: Evidence from word segmentation in infants. Cognition 96: 233–262

Ferguson T. (1973) A Bayesian analysis of some nonparametric problems. Annals of Statistics 1: 209–230

Fleck M. (2008) Lexicalized phonotactic word segmentation. In: Proceedings of the association for computational linguistics, pp 130–138

Frank M. C., Goodman N. D., Tenenbaum J. (2009) Using speakers’ referential intentions to model early cross-situational word learning. Psychological Science 20: 579–585

Gambell T., Yang C. (2006) Word segmentation: Quick but not dirty. Manuscript. Yale University, New Haven

Griffiths T. L., Chater N., Kemp C., Perfors A., Tenenbaum J. B. (2010) Probabilistic models of cognition: Exploring representations and inductive biases. Trends in Cognitive Sciences 14: 357–364

Griffiths T. L., Kemp C., Tenenbaum J. B. (2008) Bayesian models of cognition. In: Sun R. (ed) The Cambridge handbook of computational cognitive modeling. Cambridge University Press, Cambridge

Griffiths T. L., Tenenbaum J. B. (2005) Structure and strength in causal induction. Cognitive Psychology 51: 354–384

Goldwater S. (2006) Nonparametric Bayesian models of lexical acquisition. Ph.D. thesis, Brown University

Goldwater S., Griffiths T., Johnson M. (2007) Distributional cues to word boundaries: Context is important. In: Caunt-Nulton H., Kulatilake S., Woo I. (eds) BUCLD 31: Proceedings of the 31st annual Boston university conference on language development. Cascadilla Press, Somerville, MA, pp 239–250

Goldwater S., Griffiths T.L., Johnson M. (2009) A Bayesian framework for word segmentation: Exploring the effects of context. Cognition 112(1): 21–54

Hewlett D., Cohen P. (2009) Bootstrap voting experts. In: Proceedings of the twenty-first international joint conference on artificial intelligence (IJCAI-09), pp 1071–1076. Available at http://www.ijcai.org/papers09/contents.php

Johnson E., Jusczyk P. (2001) Word segmentation by 8-month-olds: When speech cues count more than statistics. Journal of Memory and Language 44: 548–567

Johnson M., Griffiths T., Goldwater S. (2007) Bayesian inference for PCFGs via Markov Chain Monte Carlo. In: Proceedings of the meeting of the North American association for computational linguistics

Jusczyk P., Goodman M., Baumann A. (1999a) Nine-month-olds’ attention to sound similarities in syllables. Journal of Memory & Language 40: 62–82

Jusczyk P., Hohne E., Baumann A. (1999b) Infants’ sensitivity to allophonic cues for word segmentation. Perception and Psychophysics 61: 1465–1476

Jusczyk P., Houston D., Newsome M. (1999c) The beginnings of word segmentation in English-learning infants. Cognitive Psychology 39: 159–207

MacWhinney B. (2000) The CHILDES project: Tools for analyzing talk. Lawrence Erlbaum Associates, Mahwah, NJ

Marr D. (1982) Vision. Freeman, San Francisco

Marthi B., Pasula H., Russell S., Peres Y., et al. (2002) Decayed MCMC filtering. In: Proceedings of 18th UAI, pp 319–326

Mattys S., Jusczyk P., Luce P., Morgan J. (1999) Phonotactic and prosodic effects on word segmentation in infants. Cognitive Psychology 38: 465–494

McClelland J. L., Botvinick M. M., Noelle D. C., Plaut D. C., Rogers T. T., Seidenberg M. S., Smith L. B. (2010) Letting structure emerge: Connectionist and dynamical systems approaches to understanding cognition. Trends in Cognitive Sciences 14: 348–356

Morgan J., Bonamo K., Travis L. (1995) Negative evidence on negative evidence. Developmental Psychology 31: 180–197

Newport E. (1990) Maturational constraints on language learning. Cognitive Science 14: 11–28

Oaksford M., Chater N. (1998) Rational models of cognition. Oxford University Press, Oxford, England

Pelucchi B., Hay J., Saffran J. (2009) Learning in reverse: Eight-month-old infants track backward transitional probabilities. Cognition 113: 244–247

Perruchet P., Desaulty S. (2008) A role for backward transitional probabilities in word segmentation? Memory and Cognition 36: 1299–1305

Peters A. (1983) The Units of Language Acquisition, Monographs in Applied Psycholinguistics. Cambridge University Press, New York

Saffran J., Aslin R., Newport E. (1996) Statistical learning by 8-month-olds. Science 274: 1926–1928

Saffran J. R. (2001) The use of predictive dependencies in language learning. Journal of Memory and Language 44: 493–513

Sanborn A. N., Griffiths T. L., Navarro D. J. (in press) Rational approximations to rational models: Alternative algorithms for category learning. Psychological Review

Seidl A., Johnson E. (2006) Infant word segmentation revisited: Edge alignment facilitates target extraction. Developmental Science 9(6): 565–573

Shi L., Griffiths T. L., Feldman N. H., Sanborn A. N. (in press) Exemplar models as a mechanism for performing Bayesian inference. Psychonomic Bulletin & Review

Swingley D. (2005) Statistical clustering and contents of the infant vocabulary. Cognitive Psychology 50: 86–132

Teh Y., Jordan M., Beal M., Blei D. (2006) Hierarchical Dirichlet processes. Journal of the American Statistical Association 101(476): 1566–1581

Tenenbaum J., Griffiths T. (2001) Generalization, similarity, and Bayesian inference. Behavioral and Brain Sciences 24: 629–641

Tenenbaum J., Griffiths T., Kemp C. (2006) Theory-based models of inductive learning and reasoning. Trends in Cognitive Sciences 10: 309–318

Thiessen E., Saffran J. R. (2003) When cues collide: Use of stress and statistical cues to word boundaries by 7- to 9-month-old infants. Developmental Psychology 39: 706–716

Xu F., Tenenbaum J. B. (2007) Word learning as Bayesian inference. Psychological Review 114: 245–272

Acknowledgments

We would like to thank the audiences at the PsychoComputational Models of Human Language workshop in 2009, BUCLD 34, three anonymous reviewers, Alexander Clark, William Sakas, Tom Griffiths, and Michael Frank. We would also like to give a special thanks to Jim White for his insight about the differences in performance between the ideal and online Bayesian learners. This work was supported by NSF grant BCS-0843896 to the first author and CORCL grant MI 14B-2009-2010 to the first and third authors.

Open Access

This article is distributed under the terms of the Creative Commons Attribution Noncommercial License which permits any noncommercial use, distribution, and reproduction in any medium, provided the original author(s) and source are credited.

About this article

Cite this article

Pearl, L., Goldwater, S. & Steyvers, M. Online Learning Mechanisms for Bayesian Models of Word Segmentation. Res on Lang and Comput 8, 107–132 (2010). https://doi.org/10.1007/s11168-011-9074-5

DOI: https://doi.org/10.1007/s11168-011-9074-5