Abstract

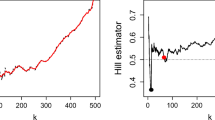

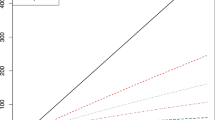

We consider removing lower order statistics from the classical Hill estimator in extreme value statistics, and compensating for it by rescaling the remaining terms. Trajectories of these trimmed statistics as a function of the extent of trimming turn out to be quite flat near the optimal threshold value. For the regularly varying case, the classical threshold selection problem in tail estimation is then revisited, both visually via trimmed Hill plots and, for the Hall class, also mathematically via minimizing the expected empirical variance. This leads to a simple threshold selection procedure for the classical Hill estimator which circumvents the estimation of some of the tail characteristics, a problem which is usually the bottleneck in threshold selection. As a by-product, we derive an alternative estimator of the tail index, which assigns more weight to large observations, and works particularly well for relatively lighter tails. A simple ratio statistic routine is suggested to evaluate the goodness of the implied selection of the threshold. We illustrate the favourable performance and the potential of the proposed method with simulation studies and real insurance data.
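The trimming idea can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the paper's definition. The normalising constant and the function names `trimmed_trajectory` and `flattest_k` are hypothetical. We divide the sum of the b largest log-excesses over \(X_{n-k,n}\) by \(\sum_{j=1}^{b}\sum_{i=j}^{k} 1/i\), which is its expectation per unit of \(\xi\) under an exact Pareto tail, so that \(T_{k,k}\) is the classical Hill estimator. The selector is a naive version of the flatness heuristic, not the calibrated procedure of the paper.

```python
import numpy as np


def trimmed_trajectory(x, k):
    """Return [T_{1,k}, ..., T_{k,k}] for the k upper order statistics of x.

    Assumed (illustrative) normalisation: the sum of the b largest
    log-excesses over X_{n-k,n} is divided by sum_{j=1}^{b} sum_{i=j}^{k} 1/i.
    With b = k this is the classical Hill estimator.
    """
    xs = np.sort(np.asarray(x, dtype=float))[::-1]   # X_{n,n} >= X_{n-1,n} >= ...
    logs = np.log(xs[: k + 1])
    excesses = logs[:k] - logs[k]                     # log(X_{n-j+1,n} / X_{n-k,n}), j = 1..k
    inv = 1.0 / np.arange(1, k + 1)
    tail_sums = np.cumsum(inv[::-1])[::-1]            # tail_sums[j-1] = sum_{i=j}^{k} 1/i
    return np.cumsum(excesses) / np.cumsum(tail_sums)


def flattest_k(x, k_values):
    """Hypothetical selector: the k whose trajectory b -> T_{b,k} has the
    smallest empirical variance (a naive reading of the flatness idea)."""
    variances = {k: trimmed_trajectory(x, k).var() for k in k_values}
    return min(variances, key=variances.get)


rng = np.random.default_rng(1)
sample = (1.0 - rng.uniform(size=2000)) ** -0.5      # exact Pareto tail with xi = 0.5
traj = trimmed_trajectory(sample, k=200)
print(traj[-1])                                       # Hill estimate based on k = 200
print(flattest_k(sample, range(20, 1000)))
```

Under an exact Pareto tail, every \(T_{b,k}\) in this sketch is unbiased for \(\xi\). A systematic drift of the trajectory in b can therefore be read as a sign that the Pareto approximation above \(X_{n-k,n}\) is poor.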

Acknowledgements

M.B. and H.A. acknowledge financial support from the Swiss National Science Foundation Project 200021_191984.

Funding

Open access funding provided by University of Lausanne.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix: Proofs

Proof of Proposition 2.1.

Set q = k − b + 1. By the Rényi representation Eq. 6,

Plugging in q = 1 (b = k) gives

which corresponds to the usual variance of the Hill estimator \(T_{k,k}\) and gives the first identity. In the general case,

But j ≤ q implies \(\frac {k-q+1}{k-j+1}\le 1\), such that

so

Thus

which gives the second identity. □
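The first identity can be checked by simulation. For an exact Pareto sample with tail index \(\xi\), the Rényi representation gives \(T_{k,k} \overset{d}{=} \xi\,(E_1+\cdots+E_k)/k\) with i.i.d. standard exponential \(E_i\), so its mean is \(\xi\) and its variance is \(\xi^2/k\). The sketch below compares Monte Carlo moments with these values. The helper `hill` and all simulation settings are our own illustrative choices.

```python
import numpy as np


def hill(x, k):
    """Classical Hill estimator based on the k largest observations of x."""
    xs = np.sort(x)[::-1]                      # descending order statistics
    return np.mean(np.log(xs[:k])) - np.log(xs[k])


rng = np.random.default_rng(0)
xi, n, k, reps = 0.5, 1000, 100, 4000
# Exact Pareto sample: X = (1 - U)^(-xi) satisfies P(X > x) = x^(-1/xi) for x >= 1.
estimates = np.array([hill((1.0 - rng.uniform(size=n)) ** -xi, k) for _ in range(reps)])
print(estimates.mean(), xi)                  # Monte Carlo mean vs xi
print(estimates.var(), xi**2 / k)            # Monte Carlo variance vs xi^2 / k
```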

Proof of Theorem 3.1.

We first note that

where \(Y_{1,n} < \cdots < Y_{n,n}\) are the order statistics of a standard Pareto sample (the \(\xi = 1\) case). Then, from the second order condition Eq. 14, we obtain that for \(A = Y_{n-k,n}\) and \(x = Y_{n-i+1,n}/Y_{n-k,n}\), as \(k, n, n/k \to \infty\),

But by the Rényi representation Eq. 6 of exponential order statistics, the first term is distributed as

where \(E_{1},E_{2},\dots , E_{k}\) are i.i.d. standard exponential random variables. For the second term, by convergence to uniform random variables and a Riemann integral approximation, we get

and since \(1 - 1/Y_{n-k,n}\) is a uniform order statistic, we further get that

Putting the three pieces together then establishes Eq. 15. □
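The distributional facts about \(Y_{n-k,n}\) used in this proof are easy to check numerically. If Y is standard Pareto, then \(1 - 1/Y\) is uniform on (0,1). Since \(y \mapsto 1 - 1/y\) is increasing, \(1 - 1/Y_{n-k,n}\) is the (n−k)-th uniform order statistic, with mean \((n-k)/(n+1)\). Moreover \(\mathbb{E}\,Y_{n-k,n} = n/k\), because \(1/Y_{n-k,n}\) is Beta\((k+1, n-k)\) distributed. The sample sizes and seed below are illustrative choices.

```python
import numpy as np

rng = np.random.default_rng(2)
n, k, reps = 2000, 50, 2000
uniform_os = np.empty(reps)   # realisations of 1 - 1/Y_{n-k,n}
scaled = np.empty(reps)       # realisations of (k/n) * Y_{n-k,n}
for r in range(reps):
    # Standard Pareto sample, P(Y > y) = 1/y for y >= 1, sorted ascending.
    y = np.sort(1.0 / (1.0 - rng.uniform(size=n)))
    y_nk = y[n - k - 1]       # Y_{n-k,n} (0-based index n-k-1)
    uniform_os[r] = 1.0 - 1.0 / y_nk
    scaled[r] = y_nk * k / n

print(uniform_os.mean(), (n - k) / (n + 1))   # mean of the (n-k)-th uniform order statistic
print(scaled.mean())                           # E[Y_{n-k,n}] = n/k, so this is close to 1
```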

Proof of Theorem 3.2.

With the shortened notation, we write

and by exchange of the order of summation, we can write

Again, by Riemann integration we have that

and

Similarly,

Putting the pieces together then indeed yields Eq. 18. □
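The two tools used in this proof are exchanging the order of summation and replacing normalised sums by integrals. Both can be illustrated on generic summands; the actual summands behind Eq. 18 are not reproduced here. The first pair of functions confirms \(\sum_{j=1}^{b}\sum_{i=j}^{k} a_i = \sum_{i=1}^{k}\min(i,b)\,a_i\) for \(a_i = 1/i\). The last function shows the Riemann sum \(\frac1k\sum_{j=1}^{k}\log(k/j)\) approaching \(\int_0^1 \log(1/p)\,dp = 1\), with an error of order \(\log(k)/k\). All function names are hypothetical.

```python
import math


def double_sum_direct(k, b):
    """sum_{j=1}^{b} sum_{i=j}^{k} 1/i, evaluated in the order written."""
    return sum(1.0 / i for j in range(1, b + 1) for i in range(j, k + 1))


def double_sum_swapped(k, b):
    """Same sum after exchanging the order: each 1/i is counted min(i, b) times."""
    return sum(min(i, b) / i for i in range(1, k + 1))


def riemann_log(k):
    """(1/k) * sum_{j=1}^{k} log(k/j), a Riemann sum for int_0^1 log(1/p) dp = 1."""
    return sum(math.log(k / j) for j in range(1, k + 1)) / k


print(double_sum_direct(200, 50), double_sum_swapped(200, 50))
for k in (10, 100, 1000, 10000):
    print(k, riemann_log(k))
```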

Proof of Theorem 3.3.

Let us first decompose each summand by writing

and subsequently consider each term separately. From Eq. 27 we have that

On the other hand, Eq. 18 gives

The third term can be analyzed using both Eqs. 18 and 27 as follows,

where \(S(j,k):=\log (1+\log (k/j))+\frac {ek}{j} \mathtt {E}(1+\log (k/j))\).

We now proceed to add the k summands of the expected variance. To this end, some preparatory calculations will be helpful. By Eq. 17 and Riemann approximation we have

By virtue of Eq. 28,

from which we deduce that as \(k\to \infty \),

where f(p) is given by Eq. 19.

Observe that for the terms arising from the expected covariance term

Next,

and

Finally, for the mean squared error term of \(\overline {T}_{k}\) we find

Altogether, we obtain

with

□
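The function S(j,k) defined in the proof of Theorem 3.3 can be evaluated directly. We assume that \(\mathtt{E}\) denotes the exponential integral \(E_1(x) = \int_x^\infty e^{-t}t^{-1}\,dt\); this notation is not defined in this excerpt. Write \(x = 1+\log(k/j)\), so that \(ek/j = e^{x}\). Then \(S(j,k) = \log x + e^{x}E_1(x)\). Integration by parts shows this equals \(\mathbb{E}[\log(x+E)]\) for a standard exponential E. The sketch below checks the closed form against numerical quadrature. For j = k it recovers the Gompertz constant, approximately 0.59635. The helper names are hypothetical.

```python
import math

EULER_GAMMA = 0.5772156649015329


def exp1(x, terms=200):
    """Exponential integral E_1(x) = int_x^inf e^(-t)/t dt for x > 0, via its
    power series; adequate for the moderate arguments (1 <= x <= ~10) used here."""
    series, term = 0.0, 1.0
    for m in range(1, terms + 1):
        term *= -x / m                     # term = (-x)^m / m!
        series += term / m
    return -EULER_GAMMA - math.log(x) - series


def s_closed_form(j, k):
    """S(j, k), assuming the E-function in its definition is E_1."""
    x = 1.0 + math.log(k / j)
    return math.log(x) + (math.e * k / j) * exp1(x)


def s_by_quadrature(j, k, upper=40.0, n=20000):
    """E[log(1 + log(k/j) + E)], E ~ Exp(1), by composite Simpson's rule (n even)."""
    x = 1.0 + math.log(k / j)
    h = upper / n
    f = lambda t: math.log(x + t) * math.exp(-t)
    total = f(0.0) + f(upper)
    for m in range(1, n):
        total += (4 if m % 2 else 2) * f(m * h)
    return total * h / 3


for j in (1, 10, 50, 100):
    print(j, s_closed_form(j, 100), s_by_quadrature(j, 100))
```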

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Bladt, M., Albrecher, H. & Beirlant, J. Threshold selection and trimming in extremes. Extremes 23, 629–665 (2020). https://doi.org/10.1007/s10687-020-00385-0

DOI: https://doi.org/10.1007/s10687-020-00385-0