Abstract

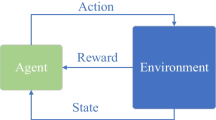

Policy Gradient methods are model-free reinforcement learning algorithms that have been successfully applied to many real-world problems in recent years. Typically, Likelihood Ratio (LR) methods are used to estimate the gradient, but they suffer from high variance because they explore randomly at every time step of each training episode. Our solution to this problem is to introduce a state-dependent exploration function (SDE) that, during an episode, returns the same action for any given state. This results in less variance per episode and faster convergence. SDE also finds solutions overlooked by other methods, and even improves upon state-of-the-art gradient estimators such as Natural Actor-Critic. We systematically derive SDE and apply it to several illustrative toy problems and a challenging robotics simulation task, where SDE greatly outperforms random exploration.
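To make the contrast between the two exploration schemes concrete, the following is a minimal sketch, not the authors' implementation. It assumes a scalar state, a linear policy `theta * state`, and Gaussian perturbations; the names `random_exploration_action`, `StateDependentExploration`, `theta_hat`, and `sigma` are hypothetical and chosen only for illustration. Per-step exploration adds fresh noise at every call, while the state-dependent variant draws its exploration parameter once per episode, so the same state always maps to the same action until the next episode begins.

```python
import random


def random_exploration_action(theta, state, sigma, rng):
    """Per-step exploration: fresh Gaussian noise is added on every call."""
    return theta * state + rng.gauss(0.0, sigma)


class StateDependentExploration:
    """Illustrative sketch: the perturbation is a fixed function of the state
    for the duration of one episode (assumed linear form theta_hat * state)."""

    def __init__(self, sigma, rng):
        self.sigma = sigma
        self.rng = rng
        self.theta_hat = 0.0  # exploration parameter, redrawn per episode

    def start_episode(self):
        # Draw the exploration parameter once; it stays fixed until the
        # next call to start_episode().
        self.theta_hat = self.rng.gauss(0.0, self.sigma)

    def action(self, theta, state):
        # Deterministic in the state within an episode.
        return theta * state + self.theta_hat * state


rng = random.Random(0)
theta, state, sigma = 0.5, 2.0, 0.1

# Random exploration: two visits to the same state give different actions.
noisy_a = random_exploration_action(theta, state, sigma, rng)
noisy_b = random_exploration_action(theta, state, sigma, rng)

# State-dependent exploration: two visits to the same state give the same action.
sde = StateDependentExploration(sigma, rng)
sde.start_episode()
sde_a = sde.action(theta, state)
sde_b = sde.action(theta, state)
```

In this sketch the exploration still varies between episodes, because `start_episode()` redraws `theta_hat`; only the within-episode noise is removed, which is the source of the reduced per-episode variance that the abstract describes.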




Copyright information

© 2008 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Rückstieß, T., Felder, M., Schmidhuber, J. (2008). State-Dependent Exploration for Policy Gradient Methods. In: Daelemans, W., Goethals, B., Morik, K. (eds) Machine Learning and Knowledge Discovery in Databases. ECML PKDD 2008. Lecture Notes in Computer Science, vol 5212. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-87481-2_16


DOI: https://doi.org/10.1007/978-3-540-87481-2_16

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-87480-5

Online ISBN: 978-3-540-87481-2

eBook Packages: Computer Science, Computer Science (R0)