Abstract

In a real Hilbert space, let GSVI and CFPP represent a general system of variational inequalities and a common fixed point problem of a countable family of nonexpansive mappings and an asymptotically nonexpansive mapping, respectively. In this paper, via a new subgradient extragradient implicit rule, we introduce and analyze two iterative algorithms for solving the monotone bilevel equilibrium problem (MBEP) with the GSVI and CFPP constraints, i.e., a strongly monotone equilibrium problem over the common solution set of another monotone equilibrium problem, the GSVI and the CFPP. Strong convergence results for the proposed algorithms are established under mild assumptions, and they are also applied to finding a common solution of the GSVI, VIP, and FPP, where the VIP and FPP stand for a variational inequality problem and a fixed point problem, respectively.

1 Introduction

Throughout this paper, suppose that C is a nonempty closed convex subset of a real Hilbert space (\({\mathcal {H}},\|\cdot \|\)) with the inner product \(\langle \cdot,\cdot \rangle \). Let \(P_{C}\) be the metric projection from \(\mathcal {H}\) onto C. Recall that a mapping \(T:C\to C\) is said to be asymptotically nonexpansive if there exists a sequence \(\{\theta _{k}\}\subset [0,\infty )\) such that \(\lim_{k\to \infty }\theta _{k} =0\) and \(\|T^{k}x-T^{k}y\|\leq (1+\theta _{k})\|x-y\|\ \forall x,y\in C,k \geq 1\). In particular, if \(\theta _{k}=0\ \forall k\geq 1\), then T is said to be nonexpansive. We denote by \(\mathrm{Fix}(T)\) the fixed point set of the mapping T and by \({\mathcal {R}}\) the set of all real numbers, respectively. Let A be a self-mapping on \({\mathcal {H}}\). The classical variational inequality problem (VIP) is to find \(x^{*}\in C\) s.t. \(\langle Ax^{*},y-x^{*}\rangle \geq 0 \ \forall y\in C\). The solution set of the VIP is denoted by VI(\(C,A\)).

Let \(\Phi:{\mathcal {H}}\times {\mathcal {H}}\to {\mathcal {R}}\cup \{+\infty \}\) be a bifunction satisfying \(\Phi (x,x)=0 \ \forall x\in C\). The equilibrium problem (shortly, EP(\(C,\Phi \))) for bifunction Φ on the constraint domain C is to find \(x^{*}\in C\) such that

\[ \Phi (x^{*},y)\geq 0 \quad \forall y\in C. \]

The solution set of EP(\(C,\Phi \)) is denoted by Sol(\(C,\Phi \)). It is worth pointing out that the EP(\(C,\Phi \)) is a unified model of several problems, namely, variational inequality problems, optimization problems, saddle point problems, complementarity problems, fixed point problems, Nash equilibrium problems, and so forth. To date, existence results and algorithms for variational inequality and equilibrium problems have been studied by many authors; see, e.g., [1–4, 6–9, 11, 13, 14, 16, 19, 24–26] and the references therein. In 2009, by using the viscosity approximation method, Chang et al. [16] introduced an iterative algorithm for finding an element in the common solution set Ω of the common fixed point problem (CFPP) of a countable family of nonexpansive self-mappings \(\{T_{k}\}^{\infty }_{k=1}\) on C, the VIP for an α-inverse-strongly monotone mapping A, and the EP(\(C,\Phi \)) for bifunction Φ on C, that is, for any initial \(x^{1}\in {\mathcal {H}}\), the sequence \(\{x^{k}\}\) is generated by

where \(f:{\mathcal {H}}\to {\mathcal {H}}\) is a contraction, and each \(W_{k}\) is a W-mapping generated by \(T_{k},T_{k-1},\ldots, T_{1}\) and \(\zeta _{k},\zeta _{k-1},\ldots,\zeta _{1}\) with \(\zeta _{i}\in (0,l]\subset (0,1)\ \forall i\geq 1\). Assume that the sequences \(\{\alpha _{k} \},\{\beta _{k}\}, \{\gamma _{k}\}\subset [0,1], \{ \lambda _{k}\}\subset [a,b]\subset (0,2\alpha )\), and \(\{r_{k}\}\subset (0,\infty )\) satisfy the conditions: (i) \(\alpha _{k}+\beta _{k}+\gamma _{k}=1\); (ii) \(\lim_{k\to \infty }\alpha _{k}=0, \sum^{\infty }_{k=1}\alpha _{k}= \infty \); (iii) \(0<\liminf_{k\to \infty }\beta _{k}\leq \limsup_{k\to \infty }\beta _{k}<1\); (iv) \(0<\liminf_{k\to \infty }r_{k}, \sum^{\infty }_{k=1}|r_{k+1}-r_{k}|< \infty \); and (v) \(\lim_{k\to \infty }|\lambda _{k+1}-\lambda _{k}|=0\). Then it was proven in [16] that \(\{x^{k}\}\) converges strongly to \(x^{*}=P_{\Omega }f(x^{*})\) under some appropriate assumptions.

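Let \(B_{1},B_{2}:{\mathcal {H}}\to {\mathcal {H}}\) be two nonlinear mappings. The general system of variational inequalities (GSVI) is the following problem of finding \((x^{*},y^{*})\in C\times C\) s.t.:

\[
\begin{cases}
\langle \mu _{1}B_{1}y^{*}+x^{*}-y^{*},x-x^{*}\rangle \geq 0 & \forall x\in C, \\
\langle \mu _{2}B_{2}x^{*}+y^{*}-x^{*},x-y^{*}\rangle \geq 0 & \forall x\in C,
\end{cases}
\tag{1.2}
\]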

with constants \(\mu _{1},\mu _{2}\in (0,\infty )\). In particular, if \(B_{1}=B_{2}=A\) and \(x^{*}=y^{*}\), then GSVI (1.2) reduces to the classical VIP. Note that problem (1.2) can be transformed into a fixed point problem in the following way.

Lemma 1.1

(see, e.g., [20])

For given \(x^{*},y^{*}\in C, (x^{*},y^{*})\) is a solution of GSVI (1.2) if and only if \(x^{*}\in \mathrm{ GSVI}(C,B_{1},B_{2})\), where \(\mathrm{ GSVI}(C,B_{1},B_{2})\) is the fixed point set of the mapping \(G:=P_{C}(I-\mu _{1}B_{1}) P_{C}(I-\mu _{2}B_{2})\), and \(y^{*}=P_{C}(I-\mu _{2}B_{2})x^{*}\).

Let Ω denote the common solution set of the fixed point problem (FPP) of an asymptotically nonexpansive mapping \(T:C\to C\) with \(\{\theta _{k}\}\) and GSVI (1.2) for two inverse-strongly monotone mappings \(B_{1},B_{2}\). In 2018, using a modified extragradient method, Cai et al. [2] introduced a viscosity implicit rule for finding a solution of GSVI (1.2) with the FPP constraint, that is, for any initial \(x^{1}\in C\), the sequence \(\{x^{k}\}\) is generated by

where \(f:C\to C\) is a δ-contraction with \(\delta \in [0,1)\), and the sequences \(\{\alpha _{k}\},\{s_{k}\}\subset (0,1]\) satisfy the conditions: (i) \(\lim_{k\to \infty }\alpha _{k}=0, \sum^{\infty }_{k=1}\alpha _{k}= \infty \), \(\sum^{\infty }_{k=1}|\alpha _{k+1}-\alpha _{k}|< \infty \); (ii) \(\lim_{k\to \infty }\frac{\theta _{k}}{\alpha _{k}}=0\); (iii) \(0<\varepsilon \leq s_{k}\leq 1\), \(\sum^{\infty }_{k=1}|s_{k+1}-s_{k}|<\infty \); and (iv) \(\sum^{\infty }_{k=1}\|T^{k+1}p^{k}- T^{k}p^{k}\|<\infty \). They proved the strong convergence of \(\{x^{k}\}\) to an element \(x^{*}\in {\Omega }\), which solves the VIP: \(\langle (\rho F-f)x^{*},x-x^{*} \rangle \geq 0\ \forall x\in {\Omega }\). Subsequently, Ceng and Wen [3] proposed a hybrid extragradient-like implicit method with strong convergence for finding a solution of GSVI (1.2) with the constraint of a common fixed point problem (CFPP). Very recently, Ceng et al. [22] suggested a modified inertial subgradient extragradient method for finding a common solution of the VIP with pseudomonotone and Lipschitz continuous mapping \(A:{\mathcal {H}}\to {\mathcal {H}}\) and the CFPP of finitely many nonexpansive mappings \(\{T_{i}\}^{N}_{i=1}\) on \({\mathcal {H}}\). Under some suitable conditions, they proved strong convergence of the constructed sequence to a common solution of the VIP and the CFPP.

On the other hand, Anh and An [24] introduced the monotone bilevel equilibrium problem (MBEP) with the fixed point problem (FPP) constraint, i.e., a strongly monotone equilibrium problem EP(\({\Omega },\Psi \)) over the common solution set Ω of another monotone equilibrium problem EP(\(C, \Phi \)) and the fixed point problem of a \(\mathcal {K}\)-demicontractive mapping T:

\[ \text{find } x^{*}\in {\Omega } \text{ such that } \Psi (x^{*},y)\geq 0 \quad \forall y\in {\Omega }, \tag{1.4} \]

where \(\Psi:C\times C\to {\mathcal {R}}\cup \{+\infty \}\) such that \(\Psi (x,x)=0\ \forall x\in C\) and \({\Omega }=\mathrm{Sol}(C,\Phi )\cap \mathrm{Fix}(T)\).

Pick the parameter sequences \(\{\lambda _{k}\}\) and \(\{\beta _{k}\}\) such that

where ϒ is a constant associated with Ψ. The following modified subgradient extragradient method is proposed in [24, Algorithm 4.1] for finding a unique element of \(\mathrm{Sol}({\Omega },\Psi )\).

Algorithm 1.1

Initial step: Choose an initial point \(x^{0}\in C\) and \(\{\alpha _{k}\}\subset [r,\bar{r}]\subset (0,1-{\mathcal {K}})\). The parameter sequences \(\{\lambda _{k}\}\) and \(\{\beta _{k}\}\) satisfy conditions (1.5).

Iterative steps: Compute \(x^{k+1} (k\geq 0)\) as follows:

Step 1. Compute \(v^{k}=\operatorname{ argmin}\{\lambda _{k}\Phi (x^{k},v)+\frac{1}{2}\|v-x^{k} \|^{2}:v\in C\}\) and \(q^{k}=\operatorname{ argmin}\{\lambda _{k}\Phi (v^{k},z) +\frac{1}{2}\|z-x^{k}\|^{2}:z\in C_{k}\}\), where \(C_{k}=\{y\in {\mathcal {H}}:\langle x^{k}-\lambda _{k}w^{k}-v^{k},y-v^{k} \rangle \leq 0\}\) and \(w^{k}\in \partial _{2}\Phi (x^{k},v^{k})\).

Step 2. Compute \(p^{k}=(1-\alpha _{k})q^{k}+\alpha _{k}Tq^{k}\) and \(x^{k+1}=\operatorname{ argmin}\{\beta _{k}\Psi (p^{k},p)+\frac{1}{2} \|p-p^{k} \|^{2}:p\in C\}\). Set \(k:=k+1\) and return to Step 1.
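To make Step 1 concrete, consider the special case \(\Phi (x,y)=\langle Ax,y-x\rangle \) with a monotone and L-Lipschitz mapping A. Then \(\partial _{2}\Phi (x^{k},v^{k})=\{Ax^{k}\}\), the first argmin reduces to \(v^{k}=P_{C}(x^{k}-\lambda _{k}Ax^{k})\), and the second one to the projection of \(x^{k}-\lambda _{k}Av^{k}\) onto the half-space \(C_{k}\). The following Python sketch covers only this special case, under assumptions that are not taken from [24]: T is the identity, the outer Ψ-step is omitted, the step size is a constant \(\lambda <1/L\), and the matrix `M`, the vector `b`, and the box C are hypothetical test data.

```python
import numpy as np

def project_box(x, lo, hi):
    """Metric projection onto the box C = [lo, hi]^n."""
    return np.clip(x, lo, hi)

def project_halfspace(x, a, v):
    """Projection onto {y : <a, y - v> <= 0}; this set is the whole space if a = 0."""
    norm_sq = float(a @ a)
    violation = float(a @ (x - v))
    if norm_sq == 0.0 or violation <= 0.0:
        return x
    return x - (violation / norm_sq) * a

def subgradient_extragradient(A, x0, lam, lo, hi, iters=200):
    """Step 1 of the scheme for Phi(x, y) = <A x, y - x>, with T = I (assumption)."""
    x = np.asarray(x0, dtype=float)
    for _ in range(iters):
        w = A(x)                       # the only element of the subdifferential
        t = x - lam * w
        v = project_box(t, lo, hi)     # v^k = P_C(x^k - lam * A x^k)
        a = t - v                      # normal vector of the half-space C_k
        x = project_halfspace(x - lam * A(v), a, v)   # q^k
    return x

# Hypothetical test problem: A(x) = M x + b is strongly monotone, L = ||M||_2.
M = np.array([[1.0, 2.0], [-2.0, 1.0]])
x_star = np.array([0.5, -0.25])        # interior point of C with A(x_star) = 0
b = -M @ x_star
A = lambda x: M @ x + b
x_final = subgradient_extragradient(A, [1.0, 1.0], lam=0.3, lo=-1.0, hi=1.0)
```

The design point illustrated here is that the second projection is onto a half-space, which has a closed form, so only one projection onto C is needed per iteration.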

It was proven in [24] that \(\{x^{k}\}\) converges strongly to the unique element of \(\mathrm{Sol}({\Omega },\Psi )\) under some mild conditions. In what follows, let the CFPP indicate a common fixed point problem of a countable family of nonexpansive mappings and an asymptotically nonexpansive mapping. In this paper, via a new subgradient extragradient implicit rule, we introduce and analyze two iterative algorithms for solving the monotone bilevel equilibrium problem (MBEP) with the GSVI and CFPP constraints, i.e., a strongly monotone equilibrium problem EP(\({\Omega },\Psi \)) over the common solution set Ω of another monotone equilibrium problem EP(\(C,\Phi \)), the GSVI and the CFPP. Strong convergence results for the proposed algorithms are established under suitable assumptions, and are also applied to finding a common solution of the GSVI, VIP, and FPP, where VIP and FPP stand for a variational inequality problem and a fixed point problem, respectively. Our results improve and extend some corresponding results in the earlier and very recent literature; see, e.g., [3, 16, 22, 24].

2 Preliminaries

Let C be a nonempty closed convex subset of a real Hilbert space \(\mathcal {H}\). In the following, we denote by “→” strong convergence and by “⇀” weak convergence. A bifunction \(\Psi:C\times C\to {\mathcal {R}}\) is said to be

(i) η-strongly monotone if \(\Psi (x,y)+\Psi (y,x)\leq -\eta \|x-y\|^{2}\ \forall x,y\in C\);

(ii) monotone if \(\Psi (x,y)+\Psi (y,x)\leq 0\ \forall x,y\in C\);

(iii) Lipschitz-type continuous with constants \(c_{1},c_{2}>0\) (see [15]) if \(\Psi (x,y)+\Psi (y,z)\geq \Psi (x,z)-c_{1} \|x-y\|^{2}-c_{2}\|y-z\|^{2}\ \forall x,y,z\in C\).
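For instance, if \(A\) is L-Lipschitz, then \(\Phi (x,y)=\langle Ax,y-x\rangle \) satisfies (iii) with \(c_{1}=c_{2}=L/2\), because \(\Phi (x,y)+\Phi (y,z)-\Phi (x,z)=\langle Ay-Ax,z-y\rangle \geq -L\|x-y\|\|y-z\|\). The short Python check below samples random points to illustrate this; the linear operator `M` is a hypothetical example, not one used in the paper.

```python
import numpy as np

rng = np.random.default_rng(0)
M = np.array([[1.0, 2.0], [-2.0, 1.0]])   # hypothetical monotone linear operator
L = np.linalg.norm(M, 2)                   # Lipschitz constant of A(x) = M x
c1 = c2 = L / 2.0

def phi(x, y):
    """Bifunction Phi(x, y) = <A x, y - x> with A(x) = M x."""
    return float((M @ x) @ (y - x))

def lipschitz_type_gap(x, y, z):
    """Phi(x,y) + Phi(y,z) - Phi(x,z) + c1|x-y|^2 + c2|y-z|^2 (should be >= 0)."""
    return (phi(x, y) + phi(y, z) - phi(x, z)
            + c1 * np.sum((x - y) ** 2) + c2 * np.sum((y - z) ** 2))

gaps = [lipschitz_type_gap(*rng.normal(size=(3, 2))) for _ in range(1000)]
min_gap = min(gaps)

# Monotonicity (ii): Phi(x, y) + Phi(y, x) <= 0 on the same kind of samples.
mono = [phi(x, y) + phi(y, x) for x, y in rng.normal(size=(1000, 2, 2))]
max_mono = max(mono)
```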

Also, recall that a mapping \(F:C\to {\mathcal {H}}\) is said to be

(i) L-Lipschitz continuous or L-Lipschitzian if \(\exists L>0\) s.t. \(\|Fx-Fy\|\leq L\|x-y\|\ \forall x,y\in C\);

(ii) monotone if \(\langle Fx-Fy,x-y\rangle \geq 0\ \forall x,y\in C\);

(iii) pseudomonotone if \(\langle Fx,y-x\rangle \geq 0\Rightarrow \langle Fy,y-x\rangle \geq 0\ \forall x,y\in C\);

(iv) η-strongly monotone if \(\exists \eta >0\) s.t. \(\langle Fx-Fy,x-y\rangle \geq \eta \|x-y\|^{2}\ \forall x,y\in C\);

(v) α-inverse-strongly monotone if \(\exists \alpha >0\) s.t. \(\langle Fx-Fy,x-y\rangle \geq \alpha \|Fx-Fy\|^{2} \ \forall x,y\in C\).

It is clear that every inverse-strongly monotone mapping is monotone and Lipschitz continuous but the converse is not true. For each point \(x\in {\mathcal {H}}\), we know that there exists a unique nearest point in C, denoted by \(P_{C}x\), such that \(\|x-P_{C}x\|\leq \|x-y\| \ \forall y\in C\). The mapping \(P_{C}\) is said to be the metric projection of \({\mathcal {H}}\) onto C. Recall that the following statements hold (see [17]):

(i) \(\langle x-y,P_{C}x-P_{C}y\rangle \geq \|P_{C}x-P_{C}y\|^{2}\ \forall x,y\in {\mathcal {H}}\);

(ii) \(\langle x-P_{C}x,y-P_{C}x\rangle \leq 0 \ \forall x\in {\mathcal {H}},y \in C\);

(iii) \(\|x-y\|^{2}\geq \|x-P_{C}x\|^{2}+\|y-P_{C}x\|^{2} \ \forall x\in { \mathcal {H}},y\in C\);

(iv) \(\|x-y\|^{2}=\|x\|^{2}-\|y\|^{2}-2\langle x-y,y\rangle \ \forall x,y \in {\mathcal {H}}\);

(v) \(\|sx+(1-s)y\|^{2}=s\|x\|^{2}+(1-s)\|y\|^{2}-s(1-s)\|x-y\|^{2}\ \forall x,y\in {\mathcal {H}},s\in [0,1]\).
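Properties (i)–(iii) can be sanity-checked numerically when \(P_{C}\) has a closed form. The Python sketch below does this for a box, where the projection is a componentwise clip; the box, the dimension, and the sampling scheme are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(1)

def project_box(x, lo=-1.0, hi=1.0):
    """Metric projection onto C = [lo, hi]^n."""
    return np.clip(x, lo, hi)

def check_projection_properties(trials=1000, dim=3, tol=1e-9):
    """Return True if (i)-(iii) hold on all random samples, up to rounding."""
    for _ in range(trials):
        x, y = 3.0 * rng.normal(size=(2, dim))    # arbitrary points of H
        c = rng.uniform(-1.0, 1.0, size=dim)      # a point of C
        px, py = project_box(x), project_box(y)
        # (i) <x - y, Px - Py> >= |Px - Py|^2
        if (x - y) @ (px - py) < np.sum((px - py) ** 2) - tol:
            return False
        # (ii) <x - Px, c - Px> <= 0 for c in C
        if (x - px) @ (c - px) > tol:
            return False
        # (iii) |x - c|^2 >= |x - Px|^2 + |c - Px|^2 for c in C
        if np.sum((x - c) ** 2) < np.sum((x - px) ** 2) + np.sum((c - px) ** 2) - tol:
            return False
    return True

properties_hold = check_projection_properties()
```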

Definition 2.1

(see [10])

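Let \(\{T_{i}\}^{\infty }_{i=1}\) be a countable family of nonexpansive self-mappings on C and \(\{\zeta _{i}\}^{\infty }_{i=1}\) be a sequence in \([0,1]\). For any \(k\geq 1\), one defines a mapping \(W_{k}:C\to C\) as follows:

\[
\begin{cases}
U_{k,k+1}=I, \\
U_{k,k}=\zeta _{k}T_{k}U_{k,k+1}+(1-\zeta _{k})I, \\
U_{k,k-1}=\zeta _{k-1}T_{k-1}U_{k,k}+(1-\zeta _{k-1})I, \\
\cdots \\
U_{k,i}=\zeta _{i}T_{i}U_{k,i+1}+(1-\zeta _{i})I, \\
\cdots \\
U_{k,2}=\zeta _{2}T_{2}U_{k,3}+(1-\zeta _{2})I, \\
W_{k}=U_{k,1}=\zeta _{1}T_{1}U_{k,2}+(1-\zeta _{1})I.
\end{cases}
\]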

Such a mapping \(W_{k}\) is nonexpansive, and it is called a W-mapping generated by \(T_{k},T_{k-1}, \ldots,T_{1}\) and \(\zeta _{k},\zeta _{k-1},\ldots,\zeta _{1}\).

Lemma 2.1

(see [10])

Let \(\{T_{i}\}^{\infty }_{i=1}\) be a countable family of nonexpansive self-mappings on C with \(\bigcap^{\infty }_{i=1}\mathrm{Fix}(T_{i})\neq \emptyset \) and \(\{\zeta _{i}\}^{\infty }_{i=1}\) be a sequence in \((0,1]\). Then

(i) \(W_{k}\) is nonexpansive and \(\mathrm{Fix}(W_{k})=\bigcap^{k}_{i=1}\mathrm{Fix}(T_{i})\ \forall k\geq 1\);

(ii) The limit \(\lim_{k\to \infty }U_{k,i}x\) exists for all \(x\in C\) and \(i\geq 1\);

(iii) The mapping W defined by \(Wx:=\lim_{k\to \infty }W_{k}x=\lim_{k\to \infty }U_{k,1}x\ \forall x \in C\) is a nonexpansive mapping satisfying \(\mathrm{Fix}(W)=\bigcap^{\infty }_{i=1}\mathrm{Fix}(T_{i})\), and it is called the W-mapping generated by \(T_{1},T_{2},\ldots \) and \(\zeta _{1},\zeta _{2},\ldots \) .
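A W-mapping is cheap to evaluate by a backward recursion. The Python sketch below assumes the usual construction \(U_{k,k+1}=I\), \(U_{k,i}=\zeta _{i}T_{i}U_{k,i+1}+(1-\zeta _{i})I\) for \(i=k,\ldots,1\), and \(W_{k}=U_{k,1}\); the three mappings `T1`, `T2`, `T3` (projections onto a box, a ball, and a half-space) and the weights are hypothetical choices made only for illustration.

```python
import numpy as np

def w_mapping(mappings, zetas):
    """Return W_k built from T_1..T_k and zeta_1..zeta_k by backward recursion."""
    def W(x):
        x = np.asarray(x, dtype=float)
        u = x                                     # U_{k,k+1} x = x
        for T, zeta in zip(reversed(mappings), reversed(zetas)):
            u = zeta * T(u) + (1.0 - zeta) * x    # U_{k,i} x for i = k, ..., 1
        return u
    return W

def T1(x):
    """Projection onto the box [-1, 1]^2."""
    return np.clip(x, -1.0, 1.0)

def T2(x):
    """Projection onto the closed ball of radius 1.2 centered at the origin."""
    n = np.linalg.norm(x)
    return x if n <= 1.2 else 1.2 * x / n

def T3(x):
    """Projection onto the half-space {x : x[0] <= 0.5}."""
    y = np.array(x, dtype=float)
    y[0] = min(y[0], 0.5)
    return y

W = w_mapping([T1, T2, T3], [0.5, 0.5, 0.5])
p = np.array([0.2, 0.3])       # common fixed point of T1, T2, T3
x_out = np.array([3.0, 3.0])   # not fixed by any of the three mappings

rng = np.random.default_rng(4)
nonexpansive = all(
    np.linalg.norm(W(a) - W(b)) <= np.linalg.norm(a - b) + 1e-12
    for a, b in 3.0 * rng.normal(size=(500, 2, 2))
)
```

In line with Lemma 2.1(i), the common fixed point `p` is left unchanged by `W`, while `x_out` is moved.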

Lemma 2.2

(see [16])

Let \(\{T_{i}\}^{\infty }_{i=1}\) be a countable family of nonexpansive self-mappings on C with \(\bigcap^{\infty }_{i=1}\mathrm{Fix}(T_{i})\neq \emptyset \) and \(\{\zeta _{i}\}^{\infty }_{i=1}\) be a sequence in \((0,l]\) for some \(l\in (0,1)\). If D is any bounded subset of C, then \(\lim_{k\to \infty }\sup_{x\in D}\|W_{k}x-Wx\|=0\).

Throughout this paper we always assume that \(\{\zeta _{i}\}^{\infty }_{i=1}\subset (0,l]\) for some \(l\in (0,1)\). It is easy to check that the following lemma is valid.

Lemma 2.3

Let the mapping \(B:{\mathcal {H}}\to {\mathcal {H}}\) be α-inverse-strongly monotone. Then, for given \(\mu \geq 0\), \(\|(I-\mu B)x-(I-\mu B)y\|^{2}\leq \|x-y\|^{2}-\mu (2\alpha -\mu )\|Bx-By \|^{2}\). In particular, if \(0\leq \mu \leq 2\alpha \), then \(I-\mu B\) is nonexpansive.

Utilizing Lemma 2.3, we immediately obtain the following lemma.

Lemma 2.4

Let the mappings \(B_{1},B_{2}:{\mathcal {H}}\to {\mathcal {H}}\) be α-inverse-strongly monotone and β-inverse-strongly monotone, respectively. Let the mapping \(G:{\mathcal {H}}\to C\) be defined as \(G:=P_{C}(I-\mu _{1}B_{1})P_{C}(I-\mu _{2}B_{2})\). If \(0\leq \mu _{1}\leq 2\alpha \) and \(0\leq \mu _{2}\leq 2\beta \), then \(G:{\mathcal {H}}\to C\) is nonexpansive.
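Lemmas 2.3 and 2.4 can be illustrated numerically. In the Python sketch below, \(B_{1}x=Q_{1}x\) and \(B_{2}x=Q_{2}x\) with symmetric positive semidefinite matrices, which are inverse-strongly monotone with constants \(1/\lambda _{\max }(Q_{1})\) and \(1/\lambda _{\max }(Q_{2})\); the matrices, the box C, and the choices of \(\mu _{1},\mu _{2}\) are hypothetical test data.

```python
import numpy as np

rng = np.random.default_rng(2)

Q1 = np.array([[2.0, 0.5], [0.5, 1.0]])    # symmetric positive definite (test data)
Q2 = np.array([[1.0, 0.0], [0.0, 3.0]])
alpha = 1.0 / np.linalg.eigvalsh(Q1).max()  # B1 is alpha-inverse-strongly monotone
beta = 1.0 / np.linalg.eigvalsh(Q2).max()   # B2 is beta-inverse-strongly monotone
mu1, mu2 = 1.5 * alpha, 1.5 * beta          # inside (0, 2*alpha) and (0, 2*beta)

def P_C(x):
    """Projection onto the box C = [-1, 1]^2."""
    return np.clip(x, -1.0, 1.0)

def B1(x):
    return Q1 @ x

def B2(x):
    return Q2 @ x

def G(x):
    """G = P_C (I - mu1 B1) P_C (I - mu2 B2)."""
    v = P_C(x - mu2 * B2(x))
    return P_C(v - mu1 * B1(v))

def lemma_2_3_holds(B, a, mu, x, y, tol=1e-10):
    """Check |(I - mu B)x - (I - mu B)y|^2 <= |x - y|^2 - mu(2a - mu)|Bx - By|^2."""
    lhs = np.sum(((x - mu * B(x)) - (y - mu * B(y))) ** 2)
    rhs = np.sum((x - y) ** 2) - mu * (2 * a - mu) * np.sum((B(x) - B(y)) ** 2)
    return bool(lhs <= rhs + tol)

pairs = 2.0 * rng.normal(size=(500, 2, 2))
lemma_ok = all(
    lemma_2_3_holds(B1, alpha, mu1, x, y) and lemma_2_3_holds(B2, beta, mu2, x, y)
    for x, y in pairs
)
G_nonexpansive = all(
    np.linalg.norm(G(x) - G(y)) <= np.linalg.norm(x - y) + 1e-10 for x, y in pairs
)
```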

The following inequality is an immediate consequence of the subdifferential inequality of the function \(\|\cdot \|^{2}/2 \).

Lemma 2.5

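The following inequality holds:

\[ \|x+y\|^{2}\leq \|x\|^{2}+2\langle y,x+y\rangle \quad \forall x,y\in {\mathcal {H}}. \]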

Lemma 2.6

(see [5])

Let X be a Banach space which admits a weakly continuous duality mapping, C be a nonempty closed convex subset of X, and \(T:C\to C\) be an asymptotically nonexpansive mapping with \(\mathrm{Fix} (T)\neq \emptyset \). Then \(I-T\) is demiclosed at zero, i.e., if \(\{u^{k}\}\) is a sequence in C such that \(u^{k} \rightharpoonup u\in C\) and \((I-T)u^{k}\to 0\), then \((I-T)u=0\), where I is the identity mapping of X.

Lemma 2.7

(see [12])

Every Hilbert space enjoys the Opial property, that is, for any sequence \(\{x^{k}\}\) in a Hilbert space \(\mathcal {H}\) with \(x^{k}\rightharpoonup x\), the inequality

\[ \liminf_{k\to \infty }\|x^{k}-x\|<\liminf_{k\to \infty }\|x^{k}-y\| \]

holds for every \(y\in {\mathcal {H}}\) with \(y\neq x\).

The following lemma is used to analyze the convergence of the proposed algorithms in this paper.

Lemma 2.8

(see [18])

Let \(\{{\Gamma }_{k}\}\) be a sequence of real numbers that does not decrease at infinity in the sense that there exists a subsequence \(\{{\Gamma }_{k_{j}}\}\) of \(\{{\Gamma }_{k}\}\) which satisfies \({\Gamma }_{k_{j}}<{\Gamma }_{k_{j}+1}\) for each integer \(j\geq 1\). Define the sequence \(\{\tau (k)\}_{k\geq k_{0}}\) of integers as follows:

\[ \tau (k)=\max \{j\leq k:{\Gamma }_{j}<{\Gamma }_{j+1}\}, \]

where integer \(k_{0}\geq 1\) such that \(\{j\leq k_{0}:{\Gamma }_{j}<{\Gamma }_{j+1}\}\neq \emptyset \). Then the following hold:

(i) \(\tau (k_{0})\leq \tau (k_{0}+1)\leq \cdots \) and \(\tau (k)\to \infty \);

(ii) \({\Gamma }_{\tau (k)}\leq {\Gamma }_{\tau (k)+1}\) and \({\Gamma }_{k}\leq {\Gamma }_{\tau (k)+1}\ \forall k\geq k_{0}\).
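The index map in Lemma 2.8 is easy to compute on a finite sample. The Python sketch below assumes the definition \(\tau (k)=\max \{j\leq k:{\Gamma }_{j}<{\Gamma }_{j+1}\}\), uses 0-based indices, and checks properties (i) and (ii) on a short hypothetical sequence `gamma`.

```python
def tau(gamma, k):
    """Largest index j <= k with gamma[j] < gamma[j + 1] (0-based indices)."""
    candidates = [j for j in range(k + 1) if gamma[j] < gamma[j + 1]]
    if not candidates:
        raise ValueError("no index j <= k with gamma[j] < gamma[j + 1]")
    return max(candidates)

# Hypothetical sequence that increases at the indices 1, 4 and 7.
gamma = [3.0, 1.0, 2.0, 1.5, 1.2, 1.8, 0.9, 0.7, 1.0, 0.4]
k0 = 1                                   # first index with an admissible j
ks = list(range(k0, len(gamma) - 1))
taus = [tau(gamma, k) for k in ks]

# (i) tau is nondecreasing in k.
monotone = all(t1 <= t2 for t1, t2 in zip(taus, taus[1:]))
# (ii) gamma[tau(k)] <= gamma[tau(k) + 1] and gamma[k] <= gamma[tau(k) + 1].
dominated = all(
    gamma[t] <= gamma[t + 1] and gamma[k] <= gamma[t + 1] for k, t in zip(ks, taus)
)
```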

On the other hand, the normal cone \(N_{C}(x)\) of C at \(x\in C\) is defined as \(N_{C}(x)=\{z\in {\mathcal {H}}:\langle z,y-x \rangle \leq 0\ \forall y \in C\}\). The subdifferential of a convex function \(g:C\to {\mathcal {R}}\cup \{+\infty \}\) at \(x\in C\) is defined by

\[ \partial g(x)=\{w\in {\mathcal {H}}:g(y)-g(x)\geq \langle w,y-x\rangle \ \forall y\in C\}. \]

In this paper, we aim to find a solution \(x^{*}\in \mathrm{Sol}({\Omega },\Psi )\) of the problem EP(\({\Omega },\Psi \)), where \({\Omega }=\bigcap^{\infty }_{i=0}\mathrm{Fix}(T_{i})\cap \mathrm{ GSVI}(C,B_{1},B_{2}) \cap \mathrm{Sol}(C,\Phi )\) with \(T_{0}:=T\). We always assume that the following hold:

\(\{T_{i}\}^{\infty }_{i=1}\) is a countable family of nonexpansive self-mappings on C and \(T:{\mathcal {H}}\to C\) is an asymptotically nonexpansive mapping with a sequence \(\{\theta _{k}\}\).

\(W_{k}\) is the W-mapping generated by \(T_{k},T_{k-1},\ldots,T_{1}\) and \(\zeta _{k},\zeta _{k-1},\ldots,\zeta _{1}\), where \(\{\zeta _{i}\} ^{\infty }_{i=1}\) is a sequence in \((0,l]\) for some \(l\in (0,1)\).

\(B_{1},B_{2}:{\mathcal {H}}\to {\mathcal {H}}\) are α-inverse-strongly monotone and β-inverse-strongly monotone, respectively, and \(G:{\mathcal {H}}\to C\) is defined as \(G:=P_{C}(I-\mu _{1}B_{1})P_{C}(I-\mu _{2}B_{2})\) where \(\mu _{1}\in (0,2\alpha )\) and \(\mu _{2}\in (0,2\beta )\).

Choose the sequences \(\{\varepsilon _{k}\},\{\beta _{k}\},\{\gamma _{k}\},\{\delta _{k}\}\) in \((0,1)\) and positive sequences \(\{\alpha _{k}\}\), \(\{s_{k}\}\) such that

(H1) \(\beta _{k}+\gamma _{k}+\delta _{k}=1\ \forall k\geq 1\), \(0<\liminf_{k\to \infty }\beta _{k}\), and \(0<\liminf_{k\to \infty } \delta _{k}\);

(H2) \(0<\liminf_{k\to \infty }\gamma _{k}\leq \limsup_{k\to \infty } \gamma _{k}<1\) and \(0<\liminf_{k\to \infty }\varepsilon _{k} \leq \limsup_{k\to \infty } \varepsilon _{k}<1\);

(H3) \(\sum^{\infty }_{k=1}s_{k}=\infty \), \(\lim_{k\to \infty }s_{k}=0, \lim_{k\to \infty }\theta _{k}/s_{k}=0\), and \(\sum^{\infty }_{k=1}\theta _{k}<\infty \);

(H4) \(\{\alpha _{k}\}\subset (\underline{\alpha },\overline{\alpha }) \subset (0,\min \{\frac{1}{2c_{1}},\frac{1}{2c_{2}}\})\) and \(\lim_{k\to \infty }\alpha _{k}=\tilde{\alpha }\), where \(c_{1}\) and \(c_{2}\) are Lipschitz constants of Φ;

(H5) \(2s_{k}\nu -s^{2}_{k}{\Upsilon }^{2}<1\), \(0<\lambda <\min \{\nu,{ \Upsilon }\}\) and \(0< s_{k}<\min \{\frac{1}{\lambda }, \frac{2\nu -2\lambda }{{\Upsilon }^{2}-\lambda ^{2}}, \frac{2\nu }{{\Upsilon }^{2}}\}\), where ν is the strong monotonicity constant of Ψ and \({\Upsilon }:={\sum^{m}_{i=1}}\bar{L}_{i}\hat{L}_{i}\) is the constant defined in Remark 2.1 below.

Algorithm 2.1

Initial step: Given \(x^{1}\in C\) arbitrarily. The sequences \(\{\varepsilon _{k}\},\{\beta _{k}\},\{\gamma _{k}\}\), \(\{\delta _{k}\}\) in \((0,1)\) and positive sequences \(\{\alpha _{k}\},\{s_{k}\}\) satisfy conditions (H1)–(H5).

Iterative steps: Calculate \(x^{k+1}\) as follows:

Step 1. Compute

Step 2. Choose \(w^{k}\in \partial _{2}\Phi (u^{k},y^{k})\), and compute

Step 3. Compute

Step 4. Compute

Set \(k:=k+1\) and return to Step 1.
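The convergence analysis below relies on the implicit relation \(u^{k}=\varepsilon _{k}x^{k}+(1-\varepsilon _{k})W_{k}u^{k}\). Since \(W_{k}\) is nonexpansive, the map \(u\mapsto \varepsilon _{k}x^{k}+(1-\varepsilon _{k})W_{k}u\) is a \((1-\varepsilon _{k})\)-contraction, so one plausible way to evaluate such a step numerically is Picard iteration. The Python sketch below illustrates this idea only; the rotation `R` standing in for \(W_{k}\), the tolerance, and the function name `solve_implicit_step` are assumptions, not part of the algorithm as stated.

```python
import numpy as np

def solve_implicit_step(W, x, eps, tol=1e-12, max_iter=10_000):
    """Solve u = eps * x + (1 - eps) * W(u) by Picard iteration."""
    x = np.asarray(x, dtype=float)
    u = x.copy()
    for _ in range(max_iter):
        u_next = eps * x + (1.0 - eps) * W(u)
        if np.linalg.norm(u_next - u) <= tol:
            return u_next
        u = u_next
    raise RuntimeError("Picard iteration did not reach the tolerance")

# Hypothetical stand-in for W_k: rotation by 90 degrees, an isometry and hence
# nonexpansive, although iterating the rotation alone does not converge.
R = np.array([[0.0, -1.0], [1.0, 0.0]])
x = np.array([1.0, 2.0])
eps = 0.3
u = solve_implicit_step(lambda v: R @ v, x, eps)

# For a linear W the implicit equation can be solved directly for comparison.
u_exact = np.linalg.solve(np.eye(2) - (1.0 - eps) * R, eps * x)
residual = np.linalg.norm(u - (eps * x + (1.0 - eps) * (R @ u)))
```

The contraction factor is \(1-\varepsilon _{k}\), so the number of inner iterations grows as \(\varepsilon _{k}\) approaches 0; keeping \(\liminf \varepsilon _{k}>0\), as in (H2), keeps this cost bounded.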

We need the following technical propositions.

Proposition 2.1

(see [23, Theorem 2.1.3])

Let C be a convex subset of a real Hilbert space \({\mathcal {H}}\) and \(g:C\to {\mathcal {R}}\cup \{+\infty \}\) be subdifferentiable. Then x̂ is a solution to the following convex minimization problem:

\[ \min \{g(x):x\in C\} \]

if and only if \(0\in \partial g(\hat{x})+N_{C}(\hat{x})\), where ∂g denotes the subdifferential of g.

Proposition 2.2

(see [21, Proposition 23])

Let X and Y be two sets, \({\mathcal {G}}\) be a set-valued map from Y to X, and W be a real-valued function defined on \(X\times Y\). The marginal function M is defined as

If W and \({\mathcal {G}}\) are continuous, then M is upper semicontinuous.

Next, we assume that two bifunctions \(\Psi:C\times C\to {\mathcal {R}}\cup \{+\infty \}\) and \(\Phi:{\mathcal {H}}\times {\mathcal {H}}\to {\mathcal {R}}\cup \{+\infty \}\) satisfy the following conditions:

\(\mathbf{Ass}_{\Phi}\):

(\(\Phi _{1}\)) \({\Omega }=\bigcap^{\infty }_{i=0}\mathrm{Fix}(T_{i})\cap \mathrm{ GSVI}(C,B_{1},B_{2}) \cap \mathrm{Sol}(C,\Phi )\neq \emptyset \) with \(T_{0}:=T\).

(\(\Phi _{2}\)) Φ is monotone and Lipschitz-type continuous with constants \(c_{1},c_{2}>0\), and Φ is weakly continuous, i.e., \(\{x^{k}\rightharpoonup \bar{x} \text{ and } y^{k}\rightharpoonup \bar{y} \}\Rightarrow \{\Phi (x^{k},y^{k})\to \Phi (\bar{x},\bar{y})\}\).

\(\mathbf{Ass}_{\Psi}\):

(\(\Psi _{1}\)) Ψ is ν-strongly monotone and weakly continuous.

(\(\Psi _{2}\)) For each \(i\in \{1,\ldots,m\}\), there exist the mappings \(\bar{\Psi }_{i},\hat{\psi }_{i}:C\times C\to {\mathcal {H}}\) such that

(i) \(\bar{\Psi }_{i}(x,y)+\bar{\Psi }_{i}(y,x)=0\) and \(\|\bar{\Psi }_{i}(x,y)\|\leq \bar{L}_{i}\|x-y\|\) for all \(x,y\in C\);

(ii) \(\hat{\psi }_{i}(x,x)=0\) and \(\|\hat{\psi }_{i}(x,y)-\hat{\psi }_{i}(u,v)\|\leq \hat{L}_{i}\|(x-y)-(u-v) \|\) for all \(x,y,u,v\in C\);

(iii) \(\Psi (x,y)+\Psi (y,z)\geq \Psi (x,z)+\sum^{m}_{i=1}\langle \bar{\Psi }_{i}(x,y),\hat{\psi }_{i}(y,z)\rangle \) for all \(x,y,z\in C\).

(\(\Psi _{3}\)) For any sequence \(\{y^{k}\}\subset C\) such that \(y^{k}\to d\), we have \(\limsup_{k\to \infty }\frac{|\Psi (d,y^{k})|}{\|y^{k}-d\|}<+\infty \).

Remark 2.1

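Suppose that the bifunction Ψ satisfies condition \(\mathbf{Ass}_{\Psi}\)(\(\Psi _{2}\)). Then, for all \(x,y,z\in C\),

\[
\begin{aligned}
\Psi (x,y)+\Psi (y,z) &\geq \Psi (x,z)+\sum^{m}_{i=1}\langle \bar{\Psi }_{i}(x,y),\hat{\psi }_{i}(y,z)\rangle \\
&\geq \Psi (x,z)-\sum^{m}_{i=1}\bar{L}_{i}\hat{L}_{i}\|x-y\|\|y-z\| \\
&\geq \Psi (x,z)-\frac{{\Upsilon }}{2}\|x-y\|^{2}-\frac{{\Upsilon }}{2}\|y-z\|^{2},
\end{aligned}
\]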

where \({\Upsilon }:={\sum^{m}_{i=1}}\bar{L}_{i}\hat{L}_{i}\). Thus, Ψ is Lipschitz-type continuous with constants \(c_{1}=c_{2}=\frac{1}{2}{\Upsilon }\).

3 Main results

In this section, let the CFPP indicate the common fixed point problem of a countable family of nonexpansive self-mappings \(\{T_{i}\}^{\infty }_{i=1}\) on C and an asymptotically nonexpansive mapping T. We consider and analyze two implicit subgradient extragradient algorithms for solving the MBEP with the GSVI and CFPP constraints, i.e., a strongly monotone equilibrium problem EP(\({\Omega },\Psi \)) over the common solution set Ω of another monotone equilibrium problem EP(\(C, \Phi \)), GSVI (1.2) and the CFPP, where \({\Omega }=\bigcap^{\infty }_{i=0}\mathrm{Fix}(T_{i})\cap \mathrm{GSVI}(C,B_{1},B_{2}) \cap \mathrm{Sol}(C,\Phi )\) with \(T_{0}:=T\). We are now in a position to state and prove the first main result in this paper.

Theorem 3.1

Assume that \(\{x^{k}\}\) is the sequence constructed by Algorithm 2.1. Let the bifunctions \(\Psi,\Phi \) satisfy assumptions \(\mathbf{Ass}_{\Phi}\)–\(\mathbf{Ass}_{\Psi}\). Then, under conditions (H1)–(H5), the sequence \(\{x^{k} \}\) converges strongly to the unique solution \(x^{*}\) of the problem EP(\({\Omega },\Psi \)) provided \(T^{k}x^{k}-T^{k+1}x^{k} \to 0\).

Proof

First of all, by Lemma 2.1 we know that each \(W_{k}\) is a nonexpansive self-mapping on C. Also, note that the mapping \(G:{\mathcal {H}}\to C\) is defined as \(G=P_{C}(I-\mu _{1}B_{1})P_{C} (I-\mu _{2}B_{2})\), where \(\mu _{1}\in (0,2\alpha )\) and \(\mu _{2}\in (0,2\beta )\). Then, by Lemma 2.4, we know that G is nonexpansive. Hence, by the Banach contraction mapping principle, we deduce from \(\{\varepsilon _{k}\},\{\gamma _{k}\}\subset (0,1)\) that for each \(k\geq 1\) there hold the following:

(i) \(\exists ! u^{k}\in C\) s.t. \(u^{k}=\varepsilon _{k}x^{k}+(1-\varepsilon _{k})W_{k}u^{k}\);

(ii) \(\exists ! \varrho ^{k}\in C\) s.t.

Choose an element \(q\in {\Omega }=\bigcap^{\infty }_{i=0}\mathrm{Fix}(T_{i})\cap \mathrm{ GSVI}(C,B_{1},B_{2}) \cap \mathrm{Sol}(C,\Phi )\) arbitrarily. Since \(\lim_{k\to \infty }\frac{\theta _{k}}{s_{k}}=0\), we may assume, without loss of generality, that \(\theta _{k}\leq \frac{1}{2}\lambda s_{k}\) for all \(k\geq 1\). We divide the proof into several steps as follows.

Step 1. We show that the following inequality holds:

Indeed, by Proposition 2.1, we know that for \(y^{k}=\operatorname{ argmin}\{\alpha _{k}\Phi (u^{k},y)+\frac{1}{2}\|y-u^{k}\|^{2}:y \in C\}\) there exists \(w^{k}\in \partial _{2}\Phi (u^{k},y^{k})\) such that

which hence yields

From the definition of \(w^{k}\in \partial _{2}\Phi (u^{k},y^{k})\), it follows that

Adding the last two inequalities, we get

It follows from \(z^{k}\in C_{k}\) and the definition of \(C_{k}\) that

and hence

Putting \(x=z^{k}\) in (3.2), we get

Adding (3.4) and the last inequality, we have

By Proposition 2.1, we know that for \(z^{k}=\operatorname{ argmin}\{\alpha _{k}\Phi (y^{k},y)+\frac{1}{2}\|y-u^{k}\|^{2}:y \in C_{k}\}\) there exist \(h^{k}\in \partial _{2}\Phi (y^{k},z^{k})\) and \(t^{k}\in N_{C_{k}}(z^{k})\) such that

So, we infer that \(\alpha _{k}\langle h^{k},y-z^{k}\rangle \geq \langle u^{k}-z^{k},y-z^{k} \rangle \ \forall y\in C_{k}\), and

Putting \(y=q\in C\subset C_{k}\) in the last two inequalities and adding them, we get

By the monotonicity of Φ, \(q\in \mathrm{Sol}(C,\Phi )\) and \(y^{k}\in C\), we get

Therefore,

Combining this and the following Lipschitz-type continuity of Φ

we obtain that

This together with (3.5) implies that

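Therefore, applying the equality

\[ \langle u,v\rangle =\frac{1}{2}\bigl(\|u+v\|^{2}-\|u\|^{2}-\|v\|^{2}\bigr) \quad \forall u,v\in {\mathcal {H}} \tag{3.7} \]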

for \(\langle u^{k}-z^{k},z^{k}-q\rangle \) and \(\langle y^{k}-u^{k},z^{k}-y^{k}\rangle \) in (3.6), we obtain the desired result.

Step 2. We show that the following inequality holds:

Indeed, since \(x^{k+1}=\operatorname{ argmin}\{s_{k}\Psi (\varrho ^{k},t)+\frac{1}{2}\|t- \varrho ^{k}\|^{2}:t\in C\}\), there exists \(m^{k} \in \partial _{2}\Psi (\varrho ^{k}, x^{k+1})\) such that

By the definition of normal cone \(N_{C}\) and the subgradient \(m^{k}\), we get

Adding the last two inequalities, we get

Putting \(u=x^{k+1}-\varrho ^{k}\) and \(v=x-x^{k+1}\) in (3.7), we get

This yields the desired result.

Step 3. We show that if \(x^{*}\) is a solution of the MBEP with the GSVI and CFPP constraints, then

where \(\varrho ^{k}_{*}=\operatorname{ argmin}\{s_{k}\Psi (x^{*},v)+\frac{1}{2}\|v-x^{*} \|^{2}:v\in C\}\), \(\eta _{k}=\sqrt{1-2s_{k}\nu +s^{2}_{k}{\Upsilon }^{2}}, 0< \lambda <\min \{\nu, {\Upsilon }\}, 0<s_{k}<\min \{ \frac{1}{\lambda }, \frac{2\nu -2\lambda }{{\Upsilon }^{2}-\lambda ^{2}}\}\), and \({\Upsilon }=\sum^{m}_{i=1}\bar{L}_{i}\hat{L}_{i}\).

Indeed, put \(\varrho ^{k}_{*}=\operatorname{ argmin}\{s_{k}\Psi (x^{*},v)+\frac{1}{2}\|v-x^{*} \|^{2}:v\in C\}\). By arguments similar to those for (3.8), we also get

Setting \(x=\varrho ^{k}_{*}\in C\) in (3.8) and \(x=x^{k+1}\in C\) in (3.9), respectively, we obtain that

Adding the last two inequalities, we have

where the last equality follows directly from (3.7).

Note that, under assumption \(\mathbf{Ass}_{\Psi }(\Psi _{2})\), it follows that

Therefore, we have

Then, using \(\mathbf{Ass}_{\Psi }(\Psi _{2})\) and the strong monotonicity of Ψ in \(\mathbf{Ass}_{\Psi }(\Psi _{1})\) that \(\Psi (x,y)+\Psi (y,x)\leq -\nu \|x-y\|^{2}\ \forall x,y\in C\), we get

Combining (3.10) and (3.11), we get

From

it follows that \(0\leq \eta _{k}=\sqrt{1-2s_{k}\nu +s^{2}_{k}{\Upsilon }^{2}}<1- \lambda s_{k}\). This ensures the desired result.
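The estimate \(\eta _{k}<1-\lambda s_{k}\) can be verified directly: since \(s_{k}<1/\lambda \) gives \(1-\lambda s_{k}>0\), squaring shows that it is equivalent to \(s_{k}({\Upsilon }^{2}-\lambda ^{2})<2(\nu -\lambda )\), which is the second bound on \(s_{k}\). The Python sketch below checks this on random parameters; the sampling ranges are arbitrary, and the sampled values satisfy \(\nu \leq {\Upsilon }\) so that the radicand is nonnegative (an assumption of this test data).

```python
import numpy as np

rng = np.random.default_rng(3)

def eta(s, nu, upsilon):
    """eta = sqrt(1 - 2 s nu + s^2 Upsilon^2)."""
    return np.sqrt(1.0 - 2.0 * s * nu + (s * upsilon) ** 2)

def step_bound(lam, nu, upsilon):
    """Upper bound min{1/lam, (2 nu - 2 lam) / (Upsilon^2 - lam^2)} on s."""
    return min(1.0 / lam, (2.0 * nu - 2.0 * lam) / (upsilon ** 2 - lam ** 2))

def contraction_estimate_holds(trials=10_000):
    """Check 0 <= eta < 1 - lam * s on random admissible parameters."""
    for _ in range(trials):
        upsilon = rng.uniform(0.1, 5.0)
        nu = rng.uniform(0.05, 0.95) * upsilon         # nu <= Upsilon (assumption)
        lam = rng.uniform(0.1, 0.9) * min(nu, upsilon)  # 0 < lam < min{nu, Upsilon}
        s = rng.uniform(0.1, 0.9) * step_bound(lam, nu, upsilon)
        e = eta(s, nu, upsilon)
        if not (0.0 <= e < 1.0 - lam * s):
            return False
    return True

estimate_ok = contraction_estimate_holds()
```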

Step 4. We show that the sequence \(\{x^{k}\}\) is bounded. In fact, putting

we have that

Note that M is continuous and \(\lim_{k\to \infty }\varrho ^{k}_{*}=x^{*}\). Since Ψ is continuous on C, we get \(\lim_{k\to \infty }\Psi (x^{*},\varrho ^{k}_{*})=\Psi (x^{*},x^{*})=0\). In terms of \(\mathbf{Ass}_{\Psi }(\Psi _{3})\), there exists a constant \(\hat{M}(x^{*})>0\) such that

Putting \(x=x^{*}\) in (3.9) and using \(\Psi (x^{*},x^{*})=0\), we get

which hence yields

This immediately implies that

Also, according to Lemma 2.3, we know that \(I-\mu _{1}B_{1}\) and \(I-\mu _{2}B_{2}\) are nonexpansive mappings, where \(\mu _{1}\in (0,2\alpha )\) and \(\mu _{2}\in (0,2\beta )\). Moreover, by Lemma 2.4, we know that G is nonexpansive. We write \(y^{*}=P_{C}(I-\mu _{2}B_{2})x^{*}\). Then, by Lemma 1.1, we get \(x^{*}=P_{C}(I-\mu _{1}B_{1})y^{*}=Gx^{*}\). So it follows that

which immediately leads to

Utilizing the result in Step 1, from (3.13) we get

Since G is a nonexpansive mapping and T is asymptotically nonexpansive, we deduce from (3.14) that

which hence yields

Consequently,

By induction, we get \(\|x^{k}-x^{*}\|\leq \max \{\|x^{1}-x^{*}\|, \frac{2\hat{M}(x^{*})}{\lambda }\}\ \forall k\geq 1\). Thus, \(\{x^{k}\}\) is bounded, and so are the sequences \(\{p^{k}\},\{\varrho ^{k}\},\{y^{k}\},\{z^{k}\},\{u^{k}\},\{v^{k}\}\).

Step 5. We show that if \(x^{k_{i}}\rightharpoonup \hat{x}, u^{k_{i}}-x^{k_{i}}\to 0\) and \(u^{k_{i}}-y^{k_{i}}\to 0\) for \(\{k_{i}\}\subset \{k\}\), then \(\hat{x}\in \mathrm{Sol}(C,\Phi )\).

Indeed, noticing \(u^{k_{i}}-x^{k_{i}}\to 0\) and \(u^{k_{i}}-y^{k_{i}}\to 0\), we get

So it follows from \(x^{k_{i}}\rightharpoonup \hat{x}\) that \(u^{k_{i}}\rightharpoonup \hat{x}\) and \(y^{k_{i}}\rightharpoonup \hat{x}\). Since \(\{y^{k}\}\subset C\), \(y^{k_{i}}\rightharpoonup \hat{x}\) and C is weakly closed, we know that \(\hat{x}\in C\). By (3.3), we have

Taking the limit as \(i\to \infty \) and using the assumptions that \(\lim_{k\to \infty }\alpha _{k}=\tilde{\alpha }>0\), \(\Phi (\hat{x},\hat{x})=0\), \(\{y^{k_{i}}\}\) is bounded, and Φ is weakly continuous, we obtain that \(\tilde{\alpha }\Phi (\hat{x},x)\geq 0\ \forall x\in C\). This implies that \(\hat{x}\in \mathrm{Sol}(C,\Phi )\).

Step 6. We show that \(x^{k}\to x^{*}\), the unique solution of the MBEP with the GSVI and CFPP constraints. Indeed, set \({\Gamma }_{k}=\|x^{k}-x^{*}\|^{2}\). Since G is nonexpansive and T is asymptotically nonexpansive, we obtain that

where \(\sup_{k\geq 1}(2+\theta _{k})\|x^{k}-x^{*}\|^{2}\leq \widetilde{M}\) for some \(\widetilde{M}>0\). This implies that

By the results in Steps 1 and 2 we deduce from (3.14) and (3.17) that

where \(\sup_{k\geq 1}\{2|\Psi (\varrho ^{k},x^{*})-\Psi (\varrho ^{k},x^{k+1})| \}\leq K\) for some \(K>0\).

Finally, we show the convergence of \(\{{\Gamma }_{k}\}\) to zero by the following two cases.

Case 1. Suppose that there exists an integer \(k_{0}\geq 1\) such that \(\{{\Gamma }_{k}\}_{k\geq k_{0}}\) is nonincreasing. Then the limit \(\lim_{k\to \infty }{\Gamma }_{k}=\hbar <+\infty \) exists and

From (3.18), we get

Since \(s_{k}\to 0, \theta _{k}\to 0, {\Gamma }_{k}-{\Gamma }_{k+1} \to 0, 0<\liminf_{k\to \infty }\beta _{k}, 0<\liminf_{k\to \infty } \delta _{k}\), and \(0<\liminf_{k\to \infty }(1-\gamma _{k})\), we obtain from \(\{\alpha _{k}\}\subset (\underline{\alpha },\overline{\alpha }) \subset (0,\min \{\frac{1}{2c_{1}},\frac{1}{2c_{2}}\})\) that

We now show that \(\|\varrho ^{k}-p^{k}\|\to 0\) as \(k\to \infty \). Indeed, we set \(y^{*}=P_{C}(x^{*}-\mu _{2}B_{2} x^{*})\). Note that \(v^{k}=P_{C}(\varrho ^{k}-\mu _{2}B_{2}\varrho ^{k})\) and \(p^{k}=P_{C}(v^{k}-\mu _{1}B_{1}v^{k})\). Then \(p^{k}=G\varrho ^{k}\). By Lemma 2.3 we have

Substituting (3.22) into (3.23), by (3.14) and (3.17) we get

Also, substituting (3.24) into (3.18), we get

which immediately yields

Since \(\mu _{1}\in (0,2\alpha ), \mu _{2}\in (0,2\beta ), s_{k}\to 0, \theta _{k}\to 0, {\Gamma }_{k}-{\Gamma }_{k+1}\to 0, \liminf_{k \to \infty }\gamma _{k}>0\), and \(\liminf_{k\to \infty }(1-\gamma _{k})>0\), we get

On the other hand, observe that

This ensures that

Similarly, we get

Combining (3.26) and (3.27), by (3.14) and (3.17) we have

Substituting (3.28) into (3.18), from (3.14) we get

This immediately leads to

Since \(s_{k}\to 0, \theta _{k}\to 0, {\Gamma }_{k}-{\Gamma }_{k+1} \to 0, \liminf_{k\to \infty }\gamma _{k}>0\), and \(\liminf_{k\to \infty }(1-\gamma _{k})>0\), we deduce from (3.25) that

Thus,

Noticing \(u^{k}=\varepsilon _{k}x^{k}+(1-\varepsilon _{k})W_{k}u^{k}\), from (3.14) and the nonexpansivity of \(W_{k}\) we get

This together with (3.14), (3.17), and (3.18) implies that

So it follows that

Since \(s_{k}\to 0, \theta _{k}\to 0, {\Gamma }_{k}-{\Gamma }_{k+1} \to 0, 0<\liminf_{k\to \infty }(1-\gamma _{k}), 0<\liminf_{k\to \infty }\delta _{k}\), and \(0<\liminf_{k\to \infty }\varepsilon _{k}\leq \limsup_{k\to \infty } \varepsilon _{k}<1\), we obtain that

Using (3.20) and (3.21), we get

and

Combining (3.20) and (3.29), we have

We claim that \(\|W_{k}x^{k}-x^{k}\|\to 0\) and \(\|Tx^{k}-x^{k}\|\to 0\) as \(k\to \infty \). In fact, using Lemma 2.1(i) we deduce from (3.29) and (3.30) that

Combining (3.30) and (3.32), we have

Using (3.20) and (3.35), we infer from the asymptotical nonexpansivity of T that

This together with the assumption \(\|T^{k}x^{k}-T^{k+1}x^{k}\|\to 0\) implies that

Next we claim that \(\lim_{k\to \infty }\|x^{k}-x^{*}\|=0\). In fact, since the sequences \(\{\varrho ^{k}\}\) and \(\{x^{k}\}\) are bounded, we know that there exists a subsequence \(\{\varrho ^{k_{i}}\}\) of \(\{\varrho ^{k}\}\) converging weakly to \(\hat{x}\in C\) and satisfying the equality

From (3.20) and (3.21) it follows that \(x^{k_{i}}\rightharpoonup \hat{x}\) and \(x^{k_{i}+1}\rightharpoonup \hat{x}\). Then, by the result in Step 5, we deduce that \(\hat{x}\in \mathrm{Sol}(C,\Phi )\).

It is clear from (3.37) that \(x^{k_{i}}-Tx^{k_{i}}\to 0\). Note that Lemma 2.6 guarantees the demiclosedness of \(I-T\) at zero. So, we know that \(\hat{x}\in \mathrm{Fix}(T)\). Also, note that Lemma 2.6 guarantees the demiclosedness of \(I-G\) at zero. Hence, from \(x^{k_{i}}\rightharpoonup \hat{x}\) and \(x^{k}-Gx^{k}\to 0\) (due to (3.33)) it follows that \(\hat{x}\in \mathrm{Fix}(G)=\mathrm{ GSVI}(C,B_{1},B_{2})\). Let us show that \(\hat{x}\in \bigcap^{\infty }_{i=1}\mathrm{Fix}(T_{i})=\mathrm{Fix}(W)\). As a matter of fact, on the contrary we assume that \(\hat{x}\notin \mathrm{Fix}(W)\), i.e., \(W\hat{x}\neq \hat{x}\). Then, by Lemma 2.1(iii) and Lemma 2.7, we get

Moreover, we have

where \(D=\{x^{k}:k\geq 1\}\). Using Lemma 2.2 and (3.34), we obtain that \(\lim_{k\to \infty }\|Wx^{k}-x^{k}\|=0\), which together with (3.39) yields \(\liminf_{i\to \infty }\|x^{k_{i}}-\hat{x}\|<\liminf_{i\to \infty }\|x^{k_{i}}- \hat{x}\|\). This is a contradiction, and hence we have \(\hat{x}\in \mathrm{Fix}(W)=\bigcap^{\infty }_{i=1}\mathrm{Fix}(T_{i})\). Consequently, \(\hat{x}\in \bigcap^{\infty }_{j=0}\mathrm{Fix}(T_{j})\cap \mathrm{ GSVI}(C,B_{1},B_{2}) \cap \mathrm{Sol}(C,\Phi )={\Omega }\). In terms of (3.38), we have

Since Ψ is ν-strongly monotone, we have

Combining (3.40) and (3.41), we obtain

We now claim that \(\hbar =0\). Assume, to the contrary, that \(\hbar >0\). Without loss of generality we may assume that \(\exists k_{0}\geq 1\) s.t.

which together with (3.18) implies that, for all \(k\geq k_{0}\),

So it follows that, for all \(k\geq k_{0}\),

Since \(\sum^{\infty }_{j=1}s_{j}=\infty, \sum^{\infty }_{j=1}\theta _{j}< \infty, 0<\liminf_{k\to \infty }(1-\gamma _{k})\), and \(\lim_{k\to \infty }{\Gamma }_{k}=\hbar \), taking the limit in (3.45) as \(k\to \infty \), we get

This is a contradiction. Therefore, \(\lim_{k\to \infty }{\Gamma }_{k}=0\) and hence \(\{x^{k}\}\) converges strongly to the unique solution \(x^{*}\) of the problem EP(\({\Omega },\Psi \)).

Case 2. Suppose that \(\exists \{{\Gamma }_{k_{j}}\}\subset \{{\Gamma }_{k}\}\) s.t. \({\Gamma }_{k_{j}}<{\Gamma } _{k_{j}+1}\ \forall j\in {\mathcal {N}}\), where \({\mathcal {N}}\) is the set of all positive integers. Define the mapping \(\tau:{\mathcal {N}}\to {\mathcal {N}}\) by

By Lemma 2.8, we get

Utilizing the same inferences as in (3.21) and (3.31), we can obtain that

Since \(\{\varrho ^{k}\}\) is bounded, there exists a subsequence of \(\{\varrho ^{\tau (k)}\}\) converging weakly to x̂. Without loss of generality, we may assume that \(\varrho ^{\tau (k)}\rightharpoonup \hat{x}\). Then, utilizing the same inferences as in Case 1, we can obtain that \(\hat{x}\in {\Omega }=\bigcap^{\infty }_{i=0}\mathrm{Fix}(T_{i})\cap { \rm GSVI}(C,B_{1},B_{2})\cap \mathrm{Sol}(C,\Phi )\). From \(\varrho ^{\tau (k)}\rightharpoonup \hat{x}\) and (3.47), we get \(x^{\tau (k)+1}\rightharpoonup \hat{x}\). Using the condition \(\{\alpha _{k}\}\subset (\underline{\alpha },\overline{\alpha }) \subset (0,\min \{\frac{1}{2c_{1}},\frac{1}{2c_{2}}\})\), we have \(1-2\alpha _{\tau (k)}c_{1}>0\) and \(1-2\alpha _{\tau (k)}c_{2}>0\). So it follows from (3.18) that

which hence leads to

Since Ψ is ν-strongly monotone on C, we get

Combining (3.49) and (3.50), we deduce from \(\mathbf{Ass}_{\Psi }(\Psi _{1})\) and \(\hat{x}\in {\Omega }\) that

Hence, \(\limsup_{k\to \infty }\|x^{\tau (k)}-x^{*}\|^{2}\leq 0\). Thus, we get

From (3.48), we get

Owing to \({\Gamma }_{k}\leq {\Gamma }_{\tau (k)+1}\), we get

So it follows from (3.48) that \(x^{k}\to x^{*}\) as \(k\to \infty \). This completes the proof. □
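The index map \(\tau \) used in Case 2 can be illustrated on a finite sequence. The sketch below assumes the standard construction from Maingé's lemma [18], namely \(\tau (k)=\max \{j\leq k:{\Gamma }_{j}<{\Gamma }_{j+1}\}\); the display defining \(\tau \) is not reproduced above, so this form is an assumption. The function name `mainge_tau` and the sample data are hypothetical. The code checks the two inequalities the argument relies on, \({\Gamma }_{\tau (k)}\leq {\Gamma }_{\tau (k)+1}\) and \({\Gamma }_{k}\leq {\Gamma }_{\tau (k)+1}\).

```python
def mainge_tau(gamma):
    """Return tau with tau[k] = max{j <= k : gamma[j] < gamma[j+1]} (0-based).

    tau[k] is None while no index j <= k with gamma[j] < gamma[j+1] exists.
    This is the assumed (standard) form of the index map in Mainge's lemma.
    """
    tau, last = [], None
    for k in range(len(gamma) - 1):
        if gamma[k] < gamma[k + 1]:
            last = k  # k itself is the largest admissible index so far
        tau.append(last)
    return tau


# Hypothetical, non-monotone sample sequence standing in for {Gamma_k}.
gamma = [5.0, 4.0, 6.0, 3.0, 2.0, 7.0, 1.0, 0.5]
tau = mainge_tau(gamma)

# Check the two inequalities wherever tau is defined.
defined = [k for k, t in enumerate(tau) if t is not None]
ineq_tau = all(gamma[tau[k]] <= gamma[tau[k] + 1] for k in defined)
ineq_k = all(gamma[k] <= gamma[tau[k] + 1] for k in defined)
nondecreasing = all(tau[a] <= tau[b] for a, b in zip(defined, defined[1:]))
```

The map is nondecreasing by construction, which is what allows the estimates along \(\{\tau (k)\}\) to be transferred back to the full sequence.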

Next, we introduce another iterative algorithm by using the subgradient extragradient implicit rule.

Algorithm 3.1

Initial step: Given \(x^{1}\in C\) arbitrarily. The sequences \(\{\varepsilon _{k}\},\{\beta _{k}\},\{\gamma _{k}\}\), \(\{\delta _{k}\}\) in \((0,1)\) and positive sequences \(\{\alpha _{k}\},\{s_{k}\}\) satisfy conditions (H1)–(H5).

Iterative steps: Calculate \(x^{k+1}\) as follows:

Step 1. Compute

Step 2. Choose \(w^{k}\in \partial _{2}\Phi (u^{k},y^{k})\), and compute

Step 3. Compute

Step 4. Compute

Set \(k:=k+1\) and return to Step 1.
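Implicit relations of the kind used in these algorithms, such as \(u^{k}=\varepsilon _{k}x^{k}+(1-\varepsilon _{k})W_{k}u^{k}\) from the proof of Theorem 3.1, define the iterate only as a fixed point. A minimal sketch of how such a relation might be resolved numerically is given below: since \(W_{k}\) is nonexpansive, the map \(u\mapsto \varepsilon x+(1-\varepsilon )Wu\) is a contraction with constant \(1-\varepsilon \), so Picard iteration converges. The function name `implicit_mann_step`, the tolerance, and the choice \(W=\sin \) (a stand-in nonexpansive map on the real line, not the W-mapping itself) are assumptions made for illustration; the value \(\varepsilon =\frac{2}{9}\) is the one used in Sect. 4.

```python
import math


def implicit_mann_step(x, eps, W, tol=1e-12, max_iter=1000):
    """Approximate the solution u of u = eps*x + (1 - eps)*W(u).

    Uses Picard iteration; converges when W is nonexpansive and 0 < eps < 1,
    because the right-hand side is then a (1 - eps)-contraction in u.
    """
    u = x
    for _ in range(max_iter):
        u_next = eps * x + (1.0 - eps) * W(u)
        if abs(u_next - u) <= tol:
            return u_next
        u = u_next
    return u


eps = 2.0 / 9.0
u = implicit_mann_step(x=1.0, eps=eps, W=math.sin)

# Residual of the implicit equation at the computed point.
residual = abs(u - (eps * 1.0 + (1.0 - eps) * math.sin(u)))
```

In a Hilbert space of higher dimension the same loop applies with the absolute value replaced by the norm.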

Theorem 3.2

Assume that \(\{x^{k}\}\) is the sequence constructed by Algorithm 3.1. Let the bifunctions \(\Psi,\Phi \) satisfy assumptions \(\mathbf{Ass}_{\Phi}\)–\(\mathbf{Ass}_{\Psi}\). Then, under conditions (H1)–(H5), the sequence \(\{x^{k} \}\) converges strongly to the unique solution \(x^{*}\) of the problem EP(\({\Omega },\Psi \)) provided \(T^{k}x^{k}-T^{k+1}x^{k} \to 0\).

Proof

In terms of Lemma 2.4, we know that G is nonexpansive. Hence, by the Banach contraction mapping principle, we deduce from \(\{\gamma _{k}\}\subset (0,1)\) that for each \(k\geq 1\) there exists a unique element \(\varrho ^{k} \in C\) such that

Choose an element \(q\in {\Omega }=\bigcap^{\infty }_{i=0}\mathrm{Fix}(T_{i})\cap \mathrm{ GSVI}(C,B_{1},B_{2}) \cap \mathrm{Sol}(C,\Phi )\) arbitrarily. Noticing \(\lim_{k\to \infty }\frac{\theta _{k}}{s_{k}}=0\), we may assume that \(\theta _{k}\leq \frac{1}{2}\lambda s_{k}\) for all \(k\geq 1\). We divide the proof into several steps as follows.

Steps 1–3. We show that the results in Steps 1–3 of the proof of Theorem 3.1 are still valid. In fact, using the same arguments as in the proof of Theorem 3.1, we obtain the desired results.

Step 4. We claim that the sequence \(\{x^{k}\}\) is bounded. Indeed, using arguments similar to those in the proof of Theorem 3.1, we know that inequality (3.14) still holds. Since G is a nonexpansive mapping and T is asymptotically nonexpansive, we deduce from (3.14) that

which hence yields \(\|\varrho ^{k}-x^{*}\|\leq (1+\theta _{k})\|x^{k}-x^{*}\|\). Consequently,

By induction, we get \(\|x^{k}-x^{*}\|\leq \max \{\|x^{1}-x^{*}\|, \frac{2\hat{M}(x^{*})}{\lambda }\}\ \forall k\geq 1\). Thus, \(\{x^{k}\}\) is bounded, and so are the sequences \(\{p^{k}\},\{\varrho ^{k}\},\{y^{k}\},\{z^{k}\},\{u^{k}\},\{v^{k}\}\).

Step 5. We show that if \(x^{k_{i}}\rightharpoonup \hat{x}, u^{k_{i}}-x^{k_{i}}\to 0\) and \(u^{k_{i}}-y^{k_{i}}\to 0\) for \(\{k_{i}\}\subset \{k\}\), then \(\hat{x}\in \mathrm{Sol}(C,\Phi )\). Indeed, using the same arguments as in the proof of Theorem 3.1, we obtain the desired result.

Step 6. We show that \(x^{k}\to x^{*}\), the unique solution of the MBEP with the GSVI and CFPP constraints.

Indeed, set \({\Gamma }_{k}=\|x^{k}-x^{*}\|^{2}\). Since G is a nonexpansive mapping and T is asymptotically nonexpansive, by Lemma 2.4 we obtain

where \(\sup_{k\geq 1}(2+\theta _{k})\|x^{k}-x^{*}\|^{2}\leq \widetilde{M}\) for some \(\widetilde{M}>0\). This implies that

By the results in Steps 1 and 2, we deduce from (3.14) and (3.56) that

where \(\sup_{k\geq 1}\{2|\Psi (\varrho ^{k},x^{*})-\Psi (\varrho ^{k},x^{k+1})| \}\leq K\) for some \(K>0\).

Finally, we show the convergence of \(\{{\Gamma }_{k}\}\) to zero by the following two cases.

Case 1. Suppose that there exists an integer \(k_{0}\geq 1\) such that \(\{{\Gamma }_{k}\}\) is nonincreasing. Then the limit \(\lim_{k\to \infty }{\Gamma }_{k}=\hbar <+\infty \) and \(\lim_{k\to \infty }({\Gamma }_{k}-{\Gamma }_{k+1})=0\). From (3.57), we get

Since \(s_{k}\to 0, \theta _{k}\to 0, {\Gamma }_{k}-{\Gamma }_{k+1} \to 0, 0<\liminf_{k\to \infty }\beta _{k}, 0<\liminf_{k\to \infty } \delta _{k}\), and \(0<\liminf_{k\to \infty }\gamma _{k}\leq \limsup_{k\to \infty } \gamma _{k}<1\), we obtain from \(\{\alpha _{k}\}\subset (\underline{\alpha },\overline{\alpha }) \subset (0,\min \{\frac{1}{2c_{1}},\frac{1}{2c_{2}}\})\) that

Next we show that \(\lim_{k\to \infty }\|x^{k}-x^{*}\|=0\). In fact, utilizing the same arguments as those of (3.24), we get

which together with (3.57) leads to

which immediately yields

Since \(\mu _{1}\in (0,2\alpha ), \mu _{2}\in (0,2\beta ), s_{k}\to 0, \theta _{k}\to 0, {\Gamma }_{k}-{\Gamma }_{k+1}\to 0\), and \(0<\liminf_{k\to \infty }\gamma _{k}\leq \limsup_{k\to \infty } \gamma _{k}<1\), we get

On the other hand, utilizing the same arguments as those of (3.28), we get

which together with (3.57) implies that

This immediately leads to

Since \(s_{k}\to 0, \theta _{k}\to 0, {\Gamma }_{k}-{\Gamma }_{k+1} \to 0, 0<\liminf_{k\to \infty }\gamma _{k}\leq \limsup_{k\to \infty } \gamma _{k}<1\), we deduce from (3.61) that

Thus,

Utilizing arguments similar to those for (3.30), we get

which immediately yields

In the meantime, it follows from (3.59) and (3.60) that

and hence

We claim that \(\|Tx^{k}-x^{k}\|\to 0\) and \(\|Gx^{k}-x^{k}\|\to 0\) as \(k\to \infty \). In fact, since G is a nonexpansive mapping, we deduce from (3.62) and (3.64) that

From (3.59), (3.60), and (3.64), we conclude that

and hence

Utilizing the same arguments as those of (3.37), we have

Further, utilizing the same arguments as in Case 1 of the proof of Theorem 3.1, we obtain that \(\lim_{k \to \infty }{\Gamma }_{k}=0\), and hence \(\{x^{k}\}\) converges strongly to the unique solution \(x^{*}\) of the problem EP(\({\Omega },\Psi \)).

Case 2. Suppose that \(\exists \{{\Gamma }_{k_{j}}\}\subset \{{\Gamma }_{k}\}\) s.t. \({\Gamma }_{k_{j}}<{\Gamma } _{k_{j}+1}\ \forall j\in {\mathcal {N}}\), where \({\mathcal {N}}\) is the set of all positive integers. Define the mapping \(\tau:{\mathcal {N}}\to {\mathcal {N}}\) by

In the remainder of the proof, utilizing the same arguments as in Case 2 of the proof of Theorem 3.1, we obtain the desired result. This completes the proof. □

Remark 3.1

Compared with the corresponding results in Ceng and Wen [3], Chang et al. [16], Ceng et al. [22], and Anh and An [24], our results improve and extend them in the following aspects.

(i) The problem in [3] of finding a solution of GSVI (1.2) with the CFPP constraint of a countable family of ℓ-uniformly Lipschitzian pseudocontractions and an asymptotically nonexpansive mapping is extended to the problem of finding a solution of the MBEP with the GSVI and CFPP constraints, i.e., a strongly monotone equilibrium problem over the common solution set of another monotone equilibrium problem, the GSVI and the CFPP. Accordingly, the hybrid extragradient-like implicit method in [3] is extended to our new subgradient extragradient implicit rule for solving this MBEP.

(ii) The problem in [16] of finding a solution of the equilibrium problem with the VIP and CFPP constraints is extended to the problem of finding a solution of the MBEP with the GSVI and CFPP constraints. Accordingly, the iterative algorithm based on the viscosity approximation method in [16] is extended to our new subgradient extragradient implicit rule for solving this MBEP.

(iii) The problem in [22] of finding a solution of the VIP with the CFPP constraint of finitely many nonexpansive mappings is extended to the problem of finding a solution of the MBEP with the GSVI and CFPP constraints. Accordingly, the inertial subgradient extragradient method for solving the VIP with the CFPP constraint in [22] is extended to our new subgradient extragradient implicit rule for solving this MBEP.

(iv) The problem in [24] of finding a solution of the MBEP with the FPP constraint is extended to the problem of finding a solution of the MBEP with the GSVI and CFPP constraints. Accordingly, the modified subgradient extragradient method for solving the MBEP with the FPP constraint in [24] is extended to our new subgradient extragradient implicit rule for solving this MBEP.

4 Applications and numerical examples

In this section, we consider the applications of Theorems 3.1 and 3.2 to finding a common solution of the GSVI, VIP, and FPP. Let C be a nonempty closed convex subset of a real Hilbert space \({\mathcal {H}}\). Let \(B_{1},B_{2}:{\mathcal {H}}\to {\mathcal {H}}\) be α-inverse-strongly monotone and β-inverse-strongly monotone, respectively. Let \(G:{\mathcal {H}}\to C\) be defined as \(G:=P_{C}(I-\mu _{1}B_{1})P_{C}(I-\mu _{2}B_{2})\), where \(0<\mu _{1}<2\alpha \) and \(0<\mu _{2}<2\beta \). Let \(T:{\mathcal {H}}\to C\) be an asymptotically nonexpansive mapping with a sequence \(\{\theta _{k}\}\), and \(T_{k}=S:C\to C\) be a nonexpansive mapping for all \(k\geq 1\). An operator \(A:{\mathcal {H}}\to {\mathcal {H}}\) is said to be

(i) monotone if \(\langle Ax-Ay,x-y\rangle \geq 0\ \forall x,y\in {\mathcal {H}}\);

(ii) L-Lipschitz continuous if \(\exists L>0\) s.t. \(\|Ax-Ay\|\leq L\|x-y\|\ \forall x,y\in {\mathcal {H}}\).

The VIP for A is to find \(x^{*}\in C\) s.t.

We denote by \(\mathrm{ VI}(C,A)\) the solution set of problem (4.1). Let \({\Omega }=\mathrm{Fix}(S)\cap \mathrm{Fix}(T)\cap \mathrm{ GSVI}(C,B_{1},B_{2}) \cap \mathrm{ VI}(C,A)\neq \emptyset \), and suppose that A satisfies the following conditions:

(B1) A is monotone;

(B2) A is weakly to strongly continuous, that is, \(Ax^{k}\to Ax\) for each sequence \(\{x^{k}\}\subset {\mathcal {H}}\) converging weakly to x;

(B3) A is L-Lipschitz continuous for some constant \(L>0\).

In addition, let the bifunction Ψ and the positive sequences \(\{\alpha _{k}\},\{s_{k}\}\) and \(\{\varepsilon _{k}\},\{ \beta _{k}\},\{\gamma _{k}\}\), \(\{\delta _{k}\}\) be the same as in Algorithm 2.1. We define \(\Phi (x,y):=\langle Ax,y-x\rangle \) for each \(x,y\in {\mathcal {H}}\). Then EP (1.1) becomes VIP (4.1). It is easy to check that the bifunction \(\Phi (x,y) =\langle Ax,y-x\rangle \) satisfies conditions \(\mathbf{Ass}_{\Phi }(\Phi _{1})\)–\(\mathbf{Ass}_{\Phi }(\Phi _{2})\), where Φ is Lipschitz-type continuous with \(c_{1}=c_{2}=L/2\). Indeed, by (B3), for all \(x,y,z\in {\mathcal {H}}\) we have \(\Phi (x,y)+\Phi (y,z)-\Phi (x,z)=\langle Ax-Ay,y-z\rangle \geq -L\|x-y\|\|y-z\|\geq -\frac{L}{2}\|x-y\|^{2}-\frac{L}{2}\|y-z\|^{2}\). It follows from the definitions of \(y^{k}\) in Algorithm 2.1 and Φ that

and similarly, \(z^{k}\) in Algorithm 2.1 reduces to

In terms of \(w^{k}\in \partial _{2}\Phi (u^{k},y^{k})\) and the definition of the subdifferential of Φ, we have

and hence

Thus

Therefore, the implicit subgradient extragradient Algorithm 2.1 reduces to the following algorithm for solving the GSVI, VIP, and FPP.

Algorithm 4.1

Initial step: Given \(x^{1}\in C\) arbitrarily. The sequences \(\{\varepsilon _{k}\},\{\beta _{k}\},\{\gamma _{k}\}\), \(\{\delta _{k}\}\) in \((0,1)\) and positive sequences \(\{\alpha _{k}\},\{s_{k}\}\) satisfy conditions (H1)–(H5).

Iterative steps: Calculate \(x^{k+1}\) as follows:

Step 1. Compute

Step 2. Choose \(w^{k}=Au^{k}\), and compute

Step 3. Compute

Step 4. Compute

Set \(k:=k+1\) and return to Step 1.

Using Theorem 3.1 we obtain the following result.

Theorem 4.1

Assume that \(\{x^{k}\}\) is the sequence constructed by Algorithm 4.1. Then \(\{x^{k}\}\) converges strongly to the unique solution \(x^{*}\) of the problem \(\mathrm{ EP}({\Omega },\Psi )\) provided \(T^{k}x^{k}-T^{k+1}x^{k}\to 0\), where \({\Omega }=\mathrm{Fix}(S)\cap \mathrm{Fix}(T)\cap \mathrm{ GSVI}(C,B_{1},B_{2}) \cap \mathrm{ VI}(C,A)\).

In the same way, the implicit subgradient extragradient Algorithm 3.1 reduces to the following algorithm for solving the GSVI, VIP, and FPP.

Algorithm 4.2

Initial step: Given \(x^{1}\in C\) arbitrarily. The sequences \(\{\varepsilon _{k}\},\{\beta _{k}\},\{\gamma _{k}\}\), \(\{\delta _{k}\}\) in \((0,1)\) and positive sequences \(\{\alpha _{k}\},\{s_{k}\}\) satisfy conditions (H1)–(H5).

Iterative steps: Calculate \(x^{k+1}\) as follows:

Step 1. Compute

Step 2. Choose \(w^{k}=Au^{k}\), and compute

Step 3. Compute

Step 4. Compute

Set \(k:=k+1\) and return to Step 1.

Using Theorem 3.2 we derive the following result.

Theorem 4.2

Assume that \(\{x^{k}\}\) is the sequence constructed by Algorithm 4.2. Then \(\{x^{k}\}\) converges strongly to the unique solution \(x^{*}\) of the problem \(\mathrm{ EP}({\Omega },\Psi )\) provided \(T^{k}x^{k}-T^{k+1}x^{k}\to 0\), where \({\Omega }=\mathrm{Fix}(S)\cap \mathrm{Fix}(T)\cap \mathrm{ GSVI}(C,B_{1},B_{2}) \cap \mathrm{ VI}(C,A)\).

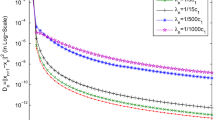

In what follows, we include a numerical example of comparisons with other algorithms (see e.g., [3, 16, 22, 24]) to strengthen the results of the article.

Theorems 4.1 and 4.2 are applied to solve the GSVI, VIP, and FPP in an illustrative example. Let \(\varepsilon _{k}=\lambda =\mu _{1}=\mu _{2}=\frac{2}{9}, \alpha _{k}= \frac{1}{3},\underline{\alpha }=\frac{1}{6}, \overline{\alpha }= \frac{3}{7}\), \(\beta _{k}=\frac{1}{2(k+1)}+\frac{1}{4}, \gamma _{k}= \frac{2k+1}{2(k+1)}-\frac{1}{2}, \delta _{k}=\frac{1}{4}\), and \(s_{k}=\frac{1}{2(k+1)}\) for all \(k\geq 1\). Let \(C=[-1,1]\) and \({\mathcal {H}}=\mathbf{R}\) with the inner product \(\langle a,b\rangle = ab\) and the induced norm \(\|\cdot \|=|\cdot |\). For \(i=1,2\), let \(S:C\to C, T:{\mathcal {H}}\to C, A,B_{i}:{\mathcal {H}}\to {\mathcal {H}}\), \(\Psi:C\times C\to \mathbf{ R}\), and \(\bar{\Psi }_{1},\hat{\psi }_{1}:C\times C\to {\mathcal {H}}\) be defined as \(Sx=\sin x, Tx=\frac{2}{3}\sin x, Ax=x-\sin x, B_{i}x=x-\frac{1}{2} \sin x, \Psi (x,y)=\langle x-\frac{1}{2}\sin x,y-x\rangle, \bar{\Psi }_{1}(x,y)=x-y-\frac{1}{2}(\sin x-\sin y)\), and \(\hat{\psi }_{1}(x,y)=x-y\). Then S is nonexpansive with \(\mathrm{Fix}(S)=\{0\}\) and T is asymptotically nonexpansive with \(\theta _{k}=(\frac{2}{3})^{k}\ \forall k\geq 1\), such that \(\|T^{k+1}x^{k}-T^{k}x^{k}\|\to 0\) as \(k\to \infty \). Indeed, we observe that

and

It is clear that \(\mathrm{Fix}(T)=\{0\}\),

Moreover, A is monotone and L-Lipschitz continuous with \(L=2\) since \(\|Ax-Ay\|\leq 2\|x-y\|\) and

It is clear that \(c_{1}=c_{2}=L/2=1\) and \(0\in \mathrm{ VI}(C,A)\). In the meantime, for \(i=1,2\), \(B_{i}\) is \(\frac{2}{9} \)-inverse-strongly monotone with \(\alpha =\beta =\frac{2}{9}\), since for all \(x,y\in {\mathcal {H}}\) we deduce that \(\|B_{i}x-B_{i}y\|\leq \frac{3}{2}\|x-y\|\) and

It is clear that \(G(0)=P_{C}(I-\frac{2}{9}B_{1})P_{C}(I-\frac{2}{9}B_{2})0=P_{C}(I- \frac{2}{9}B_{1})0=0\), and hence \(0\in \mathrm{Fix}(G)=\mathrm{ GSVI}(C,B_{1},B_{2})\). Therefore, \({\Omega }=\mathrm{Fix}(S)\cap \mathrm{Fix}(T)\cap \mathrm{ GSVI}(C,B_{1},B_{2}) \cap \mathrm{ VI}(C,A)=\{0\}\neq \emptyset \).
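The properties claimed for the operators of this example can be spot-checked numerically. The following sketch samples random pairs of points and tests the monotonicity and Lipschitz bound for A, the \(\frac{2}{9}\)-inverse-strong monotonicity of \(B_{i}\), and the fact that 0 is fixed by S, T, and G and is a zero of A. The helper names (`proj_C`, `G`, the sampling interval \([-5,5]\), and the tolerance) are assumptions introduced for illustration; a random check of this kind supports the analytic claims but does not prove them.

```python
import math
import random


def proj_C(x, lo=-1.0, hi=1.0):
    """Metric projection onto C = [-1, 1]."""
    return max(lo, min(hi, x))


def S(x):
    return math.sin(x)


def T(x):
    return (2.0 / 3.0) * math.sin(x)


def A(x):
    return x - math.sin(x)


def B(x):
    # B_1 = B_2 in the example
    return x - 0.5 * math.sin(x)


MU = 2.0 / 9.0  # mu_1 = mu_2


def G(x):
    """G = P_C(I - mu_1 B_1) P_C(I - mu_2 B_2)."""
    v = proj_C(x - MU * B(x))
    return proj_C(v - MU * B(v))


random.seed(0)
pairs = [(random.uniform(-5, 5), random.uniform(-5, 5)) for _ in range(10000)]
TOL = 1e-12  # slack for floating-point rounding

a_monotone = all((A(x) - A(y)) * (x - y) >= -TOL for x, y in pairs)
a_lipschitz = all(abs(A(x) - A(y)) <= 2.0 * abs(x - y) + TOL for x, y in pairs)
b_ism = all(
    (B(x) - B(y)) * (x - y) >= (2.0 / 9.0) * (B(x) - B(y)) ** 2 - TOL
    for x, y in pairs
)
zero_in_omega = S(0.0) == 0.0 and T(0.0) == 0.0 and G(0.0) == 0.0 and A(0.0) == 0.0
```

Since \(A(0)=0\), the inequality \(\langle A0,y-0\rangle \geq 0\) holds trivially for every \(y\in C\), which is why \(0\in \mathrm{VI}(C,A)\).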

Also, it is not hard to find out that

(a) Ψ is ν-strongly monotone with \(\nu =\frac{1}{2}\);

(b) For \(\bar{L}_{1}=\frac{3}{2}\) and \(\hat{L}_{1}=1\), \(\bar{\Psi }_{1}\) and \(\hat{\psi }_{1}\) enjoy the following properties:

\(\bar{\Psi }_{1}(x,y)+\bar{\Psi }_{1}(y,x)=0\), \(\|\bar{\Psi }_{1}(x,y)\|\leq \bar{L}_{1}\|x-y\|\), \(\hat{\psi }_{1}(x,x)=0\), \(\|\hat{\psi }_{1}(x,y)-\hat{\psi }_{1}(u,v)\|=\hat{L}_{1}\|(x-y)-(u-v) \|\) and

where \({\Upsilon }=\bar{L}_{1}\hat{L}_{1}=\frac{3}{2}\);

(c) For any sequence \(\{y^{k}\}\subset C\) such that \(y^{k}\to d\), we have

In addition, it is clear that the sequences \(\{\varepsilon _{k}\},\{\beta _{k}\},\{\gamma _{k}\},\{\delta _{k}\}\) in \((0,1)\) and positive sequences \(\{\alpha _{k}\}\), \(\{s_{k}\}\) satisfy (H1)–(H4). Next we verify that (H5) is also valid. Indeed, note that \(2s_{k}\nu -s^{2}_{k}{\Upsilon }^{2}=\frac{1}{2(k+1)}(1- \frac{9}{8}\cdot \frac{1}{k+1})<1\), \(0<\lambda =\frac{2}{9}<\frac{1}{2}=\min \{\frac{1}{2},\frac{3}{2}\}= \min \{\nu,{ \Upsilon }\}\) and

This ensures that (H5) is satisfied. In this case, Algorithm 4.1 can be rewritten as follows:

where, for each \(k\geq 1, C_{k}\) is chosen as in Algorithm 4.1. Then, by Theorem 4.1, we know that \(\{x^{k}\}\) converges to \(0\in {\Omega }=\mathrm{Fix}(S)\cap \mathrm{Fix}(T)\cap \mathrm{ GSVI}(C,B_{1},B_{2}) \cap \mathrm{ VI}(C,A)\).
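The parameter facts quoted in this example can be verified in exact rational arithmetic. The sketch below checks only what is stated in the text: the identity \(2s_{k}\nu -s^{2}_{k}{\Upsilon }^{2}=\frac{1}{2(k+1)}(1-\frac{9}{8}\cdot \frac{1}{k+1})\), the ranges of \(\beta _{k}\) and \(\gamma _{k}\), the bounds on \(\alpha _{k}\), and \(\lambda <\min \{\nu,{\Upsilon }\}\). It does not test the full conditions (H1)–(H5), which are not restated in this section; the function names and the range \(1\leq k\leq 1000\) are assumptions.

```python
from fractions import Fraction as F

NU, UPSILON, LAMBDA = F(1, 2), F(3, 2), F(2, 9)
ALPHA, ALPHA_LO, ALPHA_HI = F(1, 3), F(1, 6), F(3, 7)
C1 = C2 = F(1)  # c_1 = c_2 = L/2 with L = 2


def s(k):
    return F(1, 2 * (k + 1))


def beta(k):
    return F(1, 2 * (k + 1)) + F(1, 4)


def gamma(k):
    return F(2 * k + 1, 2 * (k + 1)) - F(1, 2)


def check(k):
    """Exact check of the quoted identity and ranges at index k."""
    lhs = 2 * s(k) * NU - s(k) ** 2 * UPSILON ** 2
    rhs = s(k) * (1 - F(9, 8) * F(1, k + 1))
    return lhs == rhs and 0 < lhs < 1 and 0 < beta(k) < 1 and 0 < gamma(k) < 1


all_ok = all(check(k) for k in range(1, 1001))
alpha_ok = ALPHA_LO < ALPHA < ALPHA_HI < min(1 / (2 * C1), 1 / (2 * C2))
lambda_ok = 0 < LAMBDA < min(NU, UPSILON)
```

Using `Fraction` avoids rounding, so the equality test on the identity is exact rather than approximate.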

On the other hand, Algorithm 4.2 can be rewritten as follows:

where, for each \(k\geq 1, C_{k}\) is chosen as in Algorithm 4.2. Then, by Theorem 4.2, we know that \(\{x^{k}\}\) converges to \(0\in {\Omega }=\mathrm{Fix}(S)\cap \mathrm{Fix}(T)\cap \mathrm{ GSVI}(C,B_{1},B_{2}) \cap \mathrm{ VI}(C,A)\).
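For orientation, the projection onto a half-space that underlies subgradient extragradient schemes can be sketched on the data of this example. The code below is not Algorithm 4.1 or 4.2, whose implicit steps are not reproduced here; it is the classical subgradient extragradient iteration for \(\mathrm{VI}(C,A)\) alone, with \(Ax=x-\sin x\), \(C=[-1,1]\), and step size \(\frac{1}{3}<\frac{1}{L}\). The names `seg_step` and `proj_interval`, the starting point 3, and the iteration count are assumptions.

```python
import math


def proj_interval(x, lo=-1.0, hi=1.0):
    """Metric projection onto C = [-1, 1]."""
    return max(lo, min(hi, x))


def A(x):
    return x - math.sin(x)


def seg_step(x, alpha):
    """One classical subgradient extragradient step for VI(C, A) on the real line."""
    g = x - alpha * A(x)
    y = proj_interval(g)      # first projection, onto C
    a = g - y                 # normal of the half-space {w : a*(w - y) <= 0}
    w = x - alpha * A(y)
    if a != 0.0 and a * (w - y) > 0.0:
        # w violates the half-space constraint: project it back
        w = w - (a * (w - y) / (a * a)) * a
    return w


x = 3.0  # starting point outside C, so the half-space projection is exercised
trace = [x]
for _ in range(2000):
    x = seg_step(x, alpha=1.0 / 3.0)
    trace.append(x)
```

The first step lands on the boundary point 1 through the half-space projection; afterwards the iterates decrease toward 0, slowly, because \(x-\sin x\) behaves like \(x^{3}/6\) near the solution.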

5 Conclusions

In a real Hilbert space, let GSVI and CFPP represent a general system of variational inequalities and a common fixed point problem of a countable family of nonexpansive mappings and an asymptotically nonexpansive mapping, respectively. In this article, we have suggested two new iterative algorithms based on the subgradient extragradient implicit rule for solving the monotone bilevel equilibrium problem (MBEP) with the GSVI and CFPP constraints, i.e., a strongly monotone equilibrium problem over the common solution set of another monotone equilibrium problem, the GSVI and the CFPP. The strong convergence results for the proposed algorithms to solve such an MBEP with the GSVI and CFPP constraints are established under some mild assumptions. Furthermore, in the proposed method, the second minimization problem over a closed convex set is replaced with the subgradient projection onto some constructible half-space, and a new approach for solving GSVI and CFPP via Mann (implicit) iterations is presented. As a consequence, we have obtained the iterative algorithms for solving GSVI, VIP, and FPP.

Availability of data and materials

All data generated or analyzed during this study are included in this published article.

References

1. Colao, V., Marino, G., Xu, H.K.: An iterative method for finding common solutions of equilibrium and fixed point problems. J. Math. Anal. Appl. 344, 340–352 (2008)

2. Cai, G., Shehu, Y., Iyiola, O.S.: Strong convergence results for variational inequalities and fixed point problems using modified viscosity implicit rules. Numer. Algorithms 77, 535–558 (2018)

3. Ceng, L.C., Wen, C.F.: Systems of variational inequalities with hierarchical variational inequality constraints for asymptotically nonexpansive and pseudocontractive mappings. Rev. R. Acad. Cienc. Exactas Fís. Nat., Ser. A Mat. 113, 2431–2447 (2019)

4. Fan, K.: A minimax inequality and applications. In: Shisha, O. (ed.) Inequalities III, pp. 103–113. Academic Press, San Diego (1972)

5. Ceng, L.C., Xu, H.K., Yao, J.C.: The viscosity approximation method for asymptotically nonexpansive mappings in Banach spaces. Nonlinear Anal. 69, 1402–1412 (2008)

6. Yao, Y., Noor, M.A.: Strong convergence of the modified hybrid steepest-descent methods for general variational inequalities. J. Appl. Math. Comput. 24, 179–190 (2007)

7. Muu, L.D., Oettli, W.: Convergence of an adaptive penalty scheme for finding constrained equilibria. Nonlinear Anal. 18, 1159–1166 (1992)

8. Blum, E., Oettli, W.: From optimization and variational inequalities to equilibrium problems. Math. Stud. 63, 123–145 (1994)

9. Ceng, L.C., Latif, A., Al-Mazrooei, A.E.: Hybrid viscosity methods for equilibrium problems, variational inequalities, and fixed point problems. Appl. Anal. 95, 1088–1117 (2016)

10. Shimoji, K., Takahashi, W.: Strong convergence to common fixed points of infinite nonexpansive mappings and applications. Taiwan. J. Math. 5, 387–404 (2001)

11. Noor, M.A., Zainab, S., Yao, Y.: Implicit methods for equilibrium problems on Hadamard manifolds. J. Appl. Math. 2012, Article ID 437391 (2012)

12. Opial, Z.: Weak convergence of the sequence of successive approximations for nonexpansive mappings. Bull. Am. Math. Soc. 73, 591–597 (1967)

13. Konnov, I.: Combined Relaxation Methods for Variational Inequalities. Springer, Berlin (2001)

14. Kassay, G., Radulescu, V.D.: Equilibrium Problems and Applications. Mathematics in Science and Engineering. Elsevier, London (2019)

15. Mastroeni, G.: On auxiliary principle for equilibrium problems. In: Daniele, P., Giannessi, F., Maugeri, A. (eds.) Nonconvex Optimization and Its Applications. Kluwer Academic, Dordrecht (2003)

16. Chang, S.S., Lee, H.W.J., Chan, C.K.: A new method for solving equilibrium problem fixed point problem and variational inequality problem with application to optimization. Nonlinear Anal. 70, 3307–3319 (2009)

17. Goebel, K., Reich, S.: Uniform Convexity, Hyperbolic Geometry, and Nonexpansive Mappings. Dekker, New York (1984)

18. Maingé, P.E.: Strong convergence of projected subgradient methods for nonsmooth and nonstrictly convex minimization. Set-Valued Anal. 16, 899–912 (2008)

19. Dadashi, V., Iyiola, O.S., Shehu, Y.: The subgradient extragradient method for pseudomonotone equilibrium problems. Optimization 69, 901–923 (2020)

20. Ceng, L.C., Wang, C.Y., Yao, J.C.: Strong convergence theorems by a relaxed extragradient method for a general system of variational inequalities. Math. Methods Oper. Res. 67, 375–390 (2008)

21. Aubin, J.P., Ekeland, I.: Applied Nonlinear Analysis. Wiley, New York (1984)

22. Ceng, L.C., Petrusel, A., Qin, X., Yao, J.C.: A modified inertial subgradient extragradient method for solving pseudomonotone variational inequalities and common fixed point problems. Fixed Point Theory 21, 93–108 (2020)

23. Bigi, G., Castellani, M., Pappalardo, M., Passacantando, M.: Nonlinear Programming Techniques for Equilibria. Springer, Switzerland (2019)

24. Anh, P.N., An, L.T.H.: New subgradient extragradient methods for solving monotone bilevel equilibrium problems. Optimization 68, 2097–2122 (2019)

25. Jung, J.S.: A general iterative algorithm for monotone inclusion, generalized mixed equilibrium and fixed point problems. J. Nonlinear Convex Anal. 20, 1793–1812 (2019)

26. Qin, X., Cho, S.Y., Kang, S.M.: Some results on generalized equilibrium problems involving a family of nonexpansive mappings. Appl. Math. Comput. 217, 3113–3126 (2010)

Acknowledgements

The authors are grateful to the referees for useful suggestions which improved the contents of this paper.

Funding

This paper was partially supported by the 2020 Shanghai Leading Talents Program of the Shanghai Municipal Human Resources and Social Security Bureau (20LJ2006100), the Innovation Program of Shanghai Municipal Education Commission (15ZZ068), and the Program for Outstanding Academic Leaders in Shanghai City (15XD1503100).

Contributions

All authors contributed equally to the manuscript and approved the final manuscript.

Ethics declarations

Competing interests

The authors declare that they have no competing interests.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

He, L., Cui, YL., Ceng, LC. et al. Strong convergence for monotone bilevel equilibria with constraints of variational inequalities and fixed points using subgradient extragradient implicit rule. J Inequal Appl 2021, 146 (2021). https://doi.org/10.1186/s13660-021-02683-y

DOI: https://doi.org/10.1186/s13660-021-02683-y