Abstract

When multiple followers are involved in a bilevel programming problem, the leader’s decision is affected by the reactions of these followers. In practice, the leader generally cannot obtain complete information from the followers and may therefore be risk-averse. He then needs a safety margin to bound the damage resulting from undesirable selections of the followers. This situation is called a pessimistic bilevel multi-follower (PBLMF) programming problem. This research considers a partially-shared linear PBLMF programming problem in which there is a partially-shared variable among the followers. The concept of a solution and a solution algorithm for such a problem are developed. As an illustration, the partially-shared linear PBLMF programming model is applied to a company making venture investments.

1 Introduction

Bilevel programming plays an exceedingly important role in different application fields, such as transportation, economics, ecology, engineering and others; see [1] and the references therein. It has been developed and researched by many authors; e.g., see the monographs [2–5].

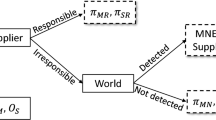

When the set of solutions of the lower level problem does not reduce to a singleton, the leader can hardly optimize his choice unless he knows the follower’s reaction to his choice. In this situation, at least two approaches have been suggested: optimistic (or strong) formulation and pessimistic (or weak) formulation [3, 6, 7]. The pessimistic bilevel programming problem is very difficult [8]. As a result, most research on bilevel programming focuses on the optimistic formulation. Interested readers can refer to [1, 9] and the references therein.

This research focuses on the concept, algorithms and applications of the pessimistic bilevel programming problem. Several related studies provide existence and approximation results for solutions of pessimistic bilevel programming problems; see [10–15]. For papers discussing optimality conditions, see [16, 17]. Recently, Wiesemann et al. [8] analyzed the structural properties and presented a solvable ϵ-approximation algorithm for the independent pessimistic bilevel programming problem. Based on an exact penalty function, Zheng et al. [18] proposed an algorithm for the pessimistic linear bilevel programming problem. Červinka, Matonoha and Outrata [19] developed a new numerical method to compute approximate and so-called relaxed pessimistic solutions to mathematical programs with equilibrium constraints, which generalize the bilevel programming problem.

As is well known, most theoretical and algorithmic contributions to bilevel programming are limited to a specific situation with one leader and one follower. In actual bilevel programming problems, however, the lower level problem often involves multiple decision makers. For example, in a university, the dean of a faculty is the leader, and aims to minimize the faculty’s annual budget. All the heads of departments in the faculty are the followers, each of whom aims to maximize the annual budget of his own department. The leader chooses an optimal strategy knowing how the followers will react. This is a typical bilevel multi-follower (BLMF) programming problem. Note that the research on BLMF has concentrated on its optimistic formulation. For example, Calvete and Galé [20] discussed the linear BLMF with independent followers and transformed such a problem into a linear bilevel problem with one leader and one follower. Lu et al. [21] generalized a framework for a special kind of BLMF, and identified nine main types of relations among followers. Lu et al. [22] considered a trilevel multi-follower programming problem, and analyzed various kinds of relations between decision entities. However, some practical problems need to be modeled as a partially-shared pessimistic BLMF programming model. Let us consider a simple example as follows.

Example 1.1

Assume that a company (i.e., leader) undertakes M projects which will be performed by M construction teams (i.e., followers), respectively. In addition to its own resources, each team usually needs to use some shared resources of the company, such as piling machines and cranes. Furthermore, assume that the construction teams are competitive, and the company cannot obtain complete information from these teams. Then the company may be risk-averse, and consequently it protects itself against the worst possible choice of the teams. That is, its aim is to minimize the worst-case cost. Let the cost function of the company be \(H(x,y_{1},y_{2},\dots,y_{M},z)\). The cost function of the ith construction team is \(f_{i}(x,y_{i},z)\) and the constraints of the ith team are \(G_{i}(x,y_{i},z)\leqslant0\), in which z is a resource shared among the teams. Then the model is given as follows:

where \((y_{i},z)\) is a solution of the ith team’s problem (\(i=1,2,\dots,M\))

Note that the above problem cannot be modeled by the existing approaches. To model such a problem, the proposed study considers a partially-shared PBLMF programming problem. The main contributions of this study are three-fold: (i) the concept of a solution of the general PBLMF programming problem is presented and the related existence theorem is established; (ii) a simple algorithm based on a penalty function is developed for solving a partially-shared linear PBLMF programming problem; and (iii) the proposed partially-shared linear PBLMF programming model is applied to a company making venture investments.

The paper is organized as follows. In the next section, the concept of a partially-shared PBLMF programming problem is introduced, and an equivalent penalty problem inspired by [10, 23–25] is given. In Section 3, we analyze the relationships between the original problem and its penalty problem, and then present a solution algorithm. To illustrate the feasibility and rationality of the proposed partially-shared linear PBLMF programming model, an example of venture investments is presented in Section 4. Finally, concluding remarks are provided in Section 5.

2 Concept and penalty function of partially-shared linear PBLMF

Consider the following partially-shared linear PBLMF programming problem in which \(M\geqslant2\) followers are involved and there is a partially shared decision variable z among followers:

where \(\Psi_{i}(x)\) is the set of solutions of the ith follower’s problem

Here, \(x,c\in\mathbb{R}^{n}\), \(y_{i},d_{i},u_{i}\in\mathbb{R}^{m_{i}}\), \(s,z,v_{i}\in\mathbb{R}^{l}\), \(A_{i}\in\mathbb{R}^{q_{i}\times n}\), \(B_{i}\in \mathbb{R}^{q_{i}\times{m_{i}}}\), \(C_{i}\in\mathbb{R}^{q_{i}\times l}\), \(b_{i}\in \mathbb{R}^{q_{i}}\), \(i=1,2,\dots,M\), X is a closed subset of \(\mathbb{R}^{n}\), and T stands for transpose.

Definition 1

(a) Constraint region of problem (1):

$$S= \bigl\{ (x,y_{1},y_{2},\dots,y_{M},z): x\in X, A_{i}x+B_{i}y_{i}+C_{i}z\leqslant b_{i}, y_{i},z\geqslant0, i=1,2, \dots,M \bigr\} .$$

(b) Projection of S onto the leader’s decision space:

$$S(X)= \bigl\{ x\in X: \exists(y_{1},y_{2}, \dots,y_{M},z), \mbox{such that } (x,y_{1},y_{2}, \dots,y_{M},z)\in S \bigr\} .$$

(c) Feasible set for the ith follower for each \(x\in S(X)\):

$$S_{i}(x)= \bigl\{ (y_{i},z): B_{i}y_{i}+C_{i}z \leqslant b_{i}-A_{i}x, y_{i},z\geqslant0 \bigr\} .$$

(d) The ith follower’s rational reaction set for \(x\in S(X)\):

$$\Psi_{i}(x)= \bigl\{ (y_{i},z): (y_{i},z)\in \operatorname {Arg}\min \bigl[u_{i}^{T}y_{i}+v_{i}^{T}z: (y_{i},z)\in S_{i}(x) \bigr] \bigr\} .$$

(e) Inducible region or feasible region of the leader:

$$\mathit{IR}= \bigl\{ (x,y_{1},y_{2},\dots,y_{M},z): (x,y_{1},y_{2},\dots,y_{M},z)\in S, (y_{i},z)\in\Psi_{i}(x), i=1,2,\dots,M \bigr\} .$$
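As a small illustration of Definition 1(c), the membership test \((y_{i},z)\in S_{i}(x)\) can be sketched in plain Python. The function names, the tolerance `tol`, and the one-constraint toy data are assumptions made for this sketch and do not come from the paper.

```python
def mat_vec(M, v):
    """Multiply a matrix (given as a list of rows) by a vector (a list)."""
    return [sum(m_ij * v_j for m_ij, v_j in zip(row, v)) for row in M]


def in_follower_feasible_set(A_i, B_i, C_i, b_i, x, y_i, z, tol=1e-9):
    """Return True if (y_i, z) lies in S_i(x) as in Definition 1(c).

    Checks B_i y_i + C_i z <= b_i - A_i x together with y_i, z >= 0.
    The tolerance `tol` is an assumption added for floating-point data.
    """
    # Nonnegativity of the follower's own variable and the shared variable.
    if any(c < -tol for c in list(y_i) + list(z)):
        return False
    lhs = [p + q for p, q in zip(mat_vec(B_i, y_i), mat_vec(C_i, z))]
    rhs = [b - a for b, a in zip(b_i, mat_vec(A_i, x))]
    return all(l <= r + tol for l, r in zip(lhs, rhs))


# Hypothetical one-constraint follower: x - y - z <= -1, i.e. y + z >= 1 + x.
A_1, B_1, C_1, b_1 = [[1.0]], [[-1.0]], [[-1.0]], [-1.0]
feasible = in_follower_feasible_set(A_1, B_1, C_1, b_1, [1.0], [2.0], [0.0])
infeasible = in_follower_feasible_set(A_1, B_1, C_1, b_1, [1.0], [0.5], [0.5])
print(feasible, infeasible)
```

With \(x=1\) the toy constraint reads \(y_{1}+z\geqslant2\), so the first point is accepted and the second is rejected.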

To introduce the concept of a solution of problem (1) (also called pessimistic solution), one usually employs the following value function \(\varphi(x)\):

Definition 2

A point \((x^{*},y_{1}^{*},y_{2}^{*},\dots,y_{M}^{*},z^{*})\in \mathit{IR}\) is called a pessimistic solution to problem (1), if

For the sake of simplicity, this study only considers a special case of \(M=2\) in problem (1), i.e.,

where \(\Psi_{i}(x)\) is the set of solutions of the ith follower’s problem

For each \(x\in S(X)\), denote by \(f(x)\) the optimal value of the following problem \(P(x)\):

Then problem (2) is equivalently transformed into the following problem P:

The dual problem of (3) is written as

Denote by \(\pi_{i}(x,y_{i},w_{i},z)=u_{i}^{T}y_{i}+v_{i}^{T}z+(b_{i}-A_{i}x)^{T}w_{i}\) the ith (\(i=1,2\)) follower’s duality gap.
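The duality gap \(\pi_{i}\) can be evaluated directly from its definition. The following is a minimal sketch in plain Python; the helper names and the one-constraint toy data are hypothetical, and the multiplier \(w=1\) used below was worked out by hand for this toy instance under the standard linear programming dual.

```python
def dot(a, b):
    """Inner product of two equally long lists."""
    return sum(p * q for p, q in zip(a, b))


def mat_vec(M, v):
    """Multiply a matrix (given as a list of rows) by a vector (a list)."""
    return [dot(row, v) for row in M]


def duality_gap(u_i, v_i, A_i, b_i, x, y_i, w_i, z):
    """pi_i(x, y_i, w_i, z) = u_i^T y_i + v_i^T z + (b_i - A_i x)^T w_i."""
    rhs = [b - a for b, a in zip(b_i, mat_vec(A_i, x))]
    return dot(u_i, y_i) + dot(v_i, z) + dot(rhs, w_i)


# Hypothetical toy follower: minimize y + z subject to x - y - z <= -1, y, z >= 0.
u, v, A, b = [1.0], [1.0], [[1.0]], [-1.0]
x = [1.0]  # leader's choice, so the constraint becomes y + z >= 2

# Primal-optimal (y, z) = (2, 0) paired with the hand-computed multiplier w = 1.
gap_at_optimum = duality_gap(u, v, A, b, x, [2.0], [1.0], [0.0])
# A feasible but suboptimal follower response (y, z) = (3, 0) with the same w.
gap_suboptimal = duality_gap(u, v, A, b, x, [3.0], [1.0], [0.0])
print(gap_at_optimum, gap_suboptimal)
```

In this toy instance the gap vanishes at the primal-dual optimal pair and is positive for the suboptimal response, which is the property the penalty term relies on.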

For \(\rho>0\), we now consider the following penalized problem \(P_{\rho}(x)\):

and denote the optimal value function by \(f_{\rho}(x)\).

The dual problem of (4) is

Furthermore, for each \(x\in S(X)\), if \(f_{\rho}(x)\) exists, then it is also the optimal value function of problem (5).

Finally, we find two penalized problems of problem P as follows.

Problem \(P_{\rho}\):

Problem \(\tilde{P}_{\rho}\):

The following section outlines the existence of solutions to problems \(P_{\rho}(x)\), \(P_{\rho}\), \(\tilde{P}_{\rho}\), and P, gives the relationships among them and presents a solution algorithm.

3 Algorithm of partially-shared linear PBLMF

In order to establish theoretical results, we state the main assumption throughout the paper.

Assumption (A)

S is a non-empty compact polyhedron.

For convenience, we denote

In the sequel, denote by \(V(\mathcal{A})\) the set of vertices of \(\mathcal{A}\) for a set \(\mathcal{A}\).

The following three lemmas provide the existence of solutions to problems \(P_{\rho}(x)\), \(P_{\rho}\), and \(\tilde{P}_{\rho}\), respectively.

Lemma 3.1

Under Assumption (A), for each \(x\in S(X)\) and a fixed value of \(\rho>0\), problem \(P_{\rho}(x)\) has at least one solution in \(V(Z_{5}(x))\times V(Z_{4}^{1})\times V(Z_{4}^{2})\).

Proof

For each \(x\in S(X)\) and fixed \(\rho>0\), we have

It follows from Assumption (A) that the objective function of the linear programming problem \(P_{\rho}(x)\) is bounded from above, and hence it has at least one solution in \(V(Z_{5}(x))\times V(Z_{4}^{1})\times V(Z_{4}^{2})\). This completes the proof. □

Lemma 3.2

Under Assumption (A), for a fixed value of \(\rho>0\), problem \(P_{\rho}\) has at least one solution in \(V(Z_{2}(\rho))\times V(Z_{1}(\rho))\).

Proof

Clearly, problem \(P_{\rho}\) is a disjoint bilinear programming problem whose solution occurs at a vertex of its constraint region [26]. This completes the proof. □

Lemma 3.3

Under Assumption (A), for a fixed value of \(\rho>0\), problem \(\tilde{P}_{\rho}\) has at least one solution.

Proof

Under Assumption (A), it follows from Theorem 4.3 in [3] that \(f_{\rho}(x)\) is continuous. Hence, the result follows immediately from the Weierstrass theorem. □

For any \(\eta>0\), let \(Z_{6}:=\{(x,t): Bt\leqslant\eta(b-Ax)\}\) and \(Z_{7}:=\{(x,y): By\leqslant b-Ax\}\). To prove Theorem 3.1, we first provide the following lemma.

Lemma 3.4

For any \(\eta>0\), if \((x^{*}_{\eta},t^{*}_{\eta})\in V(Z_{6})\), then there exists \((x^{*},y^{*})\in V(Z_{7})\), such that \(x^{*}=x^{*}_{\eta}\) and \(t^{*}_{\eta}=\eta y^{*}\).

Proof

It is easy to verify that \((x^{*}_{\eta},\frac{t^{*}_{\eta}}{\eta})\in Z_{7}\). Let \((x^{1},y^{1}),\dots,(x^{r},y^{r})\) be the distinct vertices of \(Z_{7}\). Since any point in \(Z_{7}\) can be written as a convex combination of these vertices, let \((x^{*}_{\eta},\frac{t^{*}_{\eta}}{\eta})=\sum_{i=1}^{\hat{r}} \alpha^{i} (x^{i},y^{i})\), where \(\sum_{i=1}^{\hat{r}} \alpha^{i}=1\), \(\alpha^{i}>0\), \(i=1,\dots,\hat{r}\), and \(\hat{r}\leqslant r\). Multiplying the second component by \(\eta\), we have

$$\bigl(x^{*}_{\eta},t^{*}_{\eta}\bigr)=\sum_{i=1}^{\hat{r}} \alpha^{i} \bigl(x^{i},\eta y^{i}\bigr). \tag{6}$$

Note that \((x^{i}, \eta y^{i})\in Z_{6}\) for each i, and that these points are distinct. Because \((x^{*}_{\eta},t^{*}_{\eta})\) is a vertex of \(Z_{6}\), it cannot be a convex combination with positive weights of two or more distinct points of \(Z_{6}\). Hence, (6) yields a contradiction unless \(\hat{r}=1\).

Therefore, there exists a point \((x^{*},y^{*})\in V(Z_{7})\), such that \(x^{*}=x^{*}_{\eta}\) and \(t^{*}_{\eta}=\eta y^{*}\). This completes the proof. □
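The scaling correspondence used in Lemma 3.4, namely that \((x,t)\in Z_{6}\) exactly when \((x,t/\eta)\in Z_{7}\), can be checked numerically. The sketch below uses plain Python; the function names, the tolerance, and the two-constraint data are hypothetical choices made only for illustration.

```python
def mat_vec(M, v):
    """Multiply a matrix (given as a list of rows) by a vector (a list)."""
    return [sum(m_ij * v_j for m_ij, v_j in zip(row, v)) for row in M]


def in_Z7(A, B, b, x, y, tol=1e-9):
    """(x, y) in Z_7  <=>  B y <= b - A x."""
    rhs = [bi - ai for bi, ai in zip(b, mat_vec(A, x))]
    return all(l <= r + tol for l, r in zip(mat_vec(B, y), rhs))


def in_Z6(A, B, b, x, t, eta, tol=1e-9):
    """(x, t) in Z_6  <=>  B t <= eta * (b - A x), for eta > 0."""
    rhs = [eta * (bi - ai) for bi, ai in zip(b, mat_vec(A, x))]
    return all(l <= r + tol for l, r in zip(mat_vec(B, t), rhs))


# Hypothetical data: x + y <= 4 and -y <= 0, scaled with eta = 2.
A = [[1.0], [0.0]]
B = [[1.0], [-1.0]]
b = [4.0, 0.0]
eta = 2.0

for x, t in [([1.0], [6.0]), ([1.0], [7.0]), ([0.0], [-1.0])]:
    y = [t_j / eta for t_j in t]  # candidate point of Z_7 obtained by rescaling
    print(x, t, in_Z6(A, B, b, x, t, eta), in_Z7(A, B, b, x, y))
```

For each sample point the two membership tests agree, as expected from dividing the defining inequality of \(Z_{6}\) by \(\eta>0\).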

Next, the following result relates the solution between problems \(P_{\rho}\) and \(\tilde{P}_{\rho}\).

Theorem 3.1

Under Assumption (A), for a fixed value of \(\rho >0\), if \((x_{\rho},t_{1}^{\rho},t_{2}^{\rho},t_{3}^{\rho},t_{4}^{\rho},t_{5}^{\rho})\) is a solution of problem \(P_{\rho}\), then \(x_{\rho}\) solves problem \(\tilde{P}_{\rho}\). Furthermore, \(x_{\rho}\in Q:=\{x: (x,y_{1},y_{2},z)\in V(Q^{\dagger})\}\) where

Proof

Denote the objective function of problem \(P_{\rho}\) by \(F(x,t_{1},t_{2},t_{3},t_{4},t_{5})\). Suppose that \(x_{\rho}^{*}\) solves problem \(\tilde{P}_{\rho}\). Then there exist \((t_{1}^{*},t_{2}^{*},t_{3}^{*})\in Z_{3}(\rho,x_{\rho}^{*})\) and \((t_{4}^{*},t_{5}^{*})\in Z_{1}(\rho)\), such that

Moreover, \((x_{\rho}^{*}, t_{1}^{*},t_{2}^{*},t_{3}^{*},t_{4}^{*}, t_{5}^{*})\) is a feasible point of problem \(P_{\rho}\).

Then we have

where (8) holds due to the definition of \(f_{\rho}(x_{\rho})\), (9) holds because of the optimality of \((x_{\rho},t_{1}^{\rho},t_{2}^{\rho},t_{3}^{\rho},t_{4}^{\rho},t_{5}^{\rho})\) and (10) follows from (7).

Thus, (8)-(10) imply that \(x_{\rho}\) is a solution of problem \(\tilde{P}_{\rho}\).

Using the result of Lemma 3.2, we can obtain

Furthermore, by the result of Lemma 3.4, the definitions of \(Z_{2}(\rho)\) and \(Q^{\dagger}\), we find that \(x_{\rho}\in Q\). This completes the proof. □

Note that \(Q^{\dagger}\) can be referred to as the constraint region of problem (2) based on Definition 1(a).

Finally, we provide the following result which demonstrates that our penalty method is exact, and also presents the relationships between problems \(\tilde{P}_{\rho}\) and P.

Theorem 3.2

Let Assumption (A) hold, and \(\{x_{\rho}\}\) be a sequence of solutions of problem \(\tilde{P}_{\rho}\). Then there exists \(\rho^{*}>0\), such that for all \(\rho>\rho^{*}\), \(x_{\rho}\) is a solution of problem P.

Proof

Let \((y_{1}(x),y_{2}(x),z(x),w_{1}(x),w_{2}(x))\in V(Z_{5}(x))\times V(Z_{4}^{1})\times V(Z_{4}^{2})\) be a solution of problem \(P_{\rho}(x)\). For any \((\hat{y}_{1},\hat{y}_{2},\hat{z},\hat{w}_{1},\hat{w}_{2})\in V(Z_{5}(x))\times V(Z_{4}^{1})\times V(Z_{4}^{2})\), we have

In particular, choose \((\hat{y}_{i},\hat{z})\) and \(\hat{w}_{i}\) (\(i=1,2\)), such that they are solutions of problem (3) and its dual problem respectively. Then we obtain

Hence, we have

where \(\Vert \cdot \Vert _{2}\) denotes the Euclidean norm. Moreover, it follows from Assumption (A) that there exists a constant \(\delta>0\), such that

We then find that

For each \(x\in S(X)\), the number of elements of the set \(V(Z_{5}(x))\times V(Z_{4}^{1})\times V(Z_{4}^{2})\) is finite, and then there exists \(0<\rho^{*}(x)<+\infty\), such that

Since \(S(X)\) is a bounded non-empty polyhedron, there exists a constant \(\rho^{*}>0\), such that

and then \((y_{1}(x),y_{2}(x),z(x))\) is a feasible point of problem \(P(x)\). Hence, for any \(x\in S(X)\), we have

Moreover, for any \(x\in S(X)\) and \(\rho>0\), it follows from the definitions of \(f_{\rho}(x)\) and \(f(x)\) that

Therefore, for all \(\rho>\rho^{*}\), we find

where (16) and (18) follow from (15), and (17) holds because of the optimality of \(x_{\rho}\). Equations (16)-(18) imply that \(x_{\rho}\) is a solution for problem P for all \(\rho>\rho^{*}\). This concludes the proof. □

Combining the results of Theorems 3.1 and 3.2, we can characterize problem (2) as a particular kind of nonlinear programming problem whose solution is related to a vertex of the constraint region.

Theorem 3.3

Under Assumption (A), there exists a solution \((x^{*} ,y_{1}^{*} ,y_{2}^{*} ,z^{*} )\) of problem (2) such that \(x^{*} \in Q\).

From the result of Theorem 3.3, we know that a solution of problem (2) may be related to a vertex of \(Q^{\dagger}\). Hence one possible way to find the solution would be to generate all vertices of \(Q^{\dagger}\) and test each one as a possible solution by solving the maximization problem \(P(x)\) in \((y_{1},y_{2},z)\) for fixed x. That is, solving problem (2) is equivalent to finding \(x^{*}\) with \((y^{*}_{1},z^{*}) \in\Psi_{1}(x^{*})\) and \((y^{*}_{2},z^{*}) \in\Psi_{2}(x^{*})\) such that

where for \(x_{[j]}\) (\(j=1,2,\dots,N\)) there exists a point \((x_{[j]},y_{1}^{[j]},y_{2}^{[j]},z^{[j]})\), such that \((x_{[j]},y_{1}^{[j]},y_{2}^{[j]},z^{[j]})\) are the N ordered basic feasible points for the following linear programming problem:

Rather than enumerate all vertices of the set \(Q^{\dagger}\) explicitly, we present the following algorithm which finds a solution to problem (2).

Algorithm

Step 0. Choose \(\rho>0\) and \(\gamma>1\).

Step 1. Solve problem \(P_{\rho}\), and denote the solution by \((x^{\rho},t_{1}^{\rho},t_{2}^{\rho},t_{3}^{\rho},t_{4}^{\rho},t_{5}^{\rho})\).

Step 2. Solve problem \(P_{\rho}(x^{\rho})\), and denote the solution by \((y_{1}^{\rho},y_{2}^{\rho},w_{1}^{\rho},w_{2}^{\rho},z^{\rho})\).

Step 3. If \(\sum_{i=1}^{2} \pi_{i}(x^{\rho},y_{i}^{\rho},w_{i}^{\rho },z^{\rho})=0\), then stop; \((x^{\rho},y_{1}^{\rho},y_{2}^{\rho},z^{\rho})\) is a solution of problem (2). Otherwise, set \(\rho=\gamma\rho\) and proceed to Step 1.
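The control flow of Steps 0-3 can be sketched as a short Python loop. This is only an outline under stated assumptions: the callables `solve_outer`, `solve_inner` and `total_gap` are hypothetical placeholders for a solver of \(P_{\rho}\), a solver of \(P_{\rho}(x^{\rho})\) and the sum \(\pi_{1}+\pi_{2}\); the tolerance `tol` and the cap `max_iter` are numerical safeguards added here and are not part of the algorithm as stated. The mock solvers in the demo merely mimic a duality gap that vanishes once \(\rho\) is large enough.

```python
def penalty_loop(solve_outer, solve_inner, total_gap,
                 rho=1.0, gamma=10.0, tol=1e-8, max_iter=50):
    """Sketch of Steps 0-3 with user-supplied (hypothetical) solvers.

    solve_outer(rho)    -> x                     (stands in for problem P_rho)
    solve_inner(x, rho) -> (y1, y2, w1, w2, z)   (stands in for P_rho(x))
    total_gap(x, y1, y2, w1, w2, z) -> pi_1 + pi_2
    """
    for _ in range(max_iter):
        x = solve_outer(rho)                          # Step 1
        y1, y2, w1, w2, z = solve_inner(x, rho)       # Step 2
        if abs(total_gap(x, y1, y2, w1, w2, z)) <= tol:  # Step 3: gap is zero
            return x, y1, y2, z, rho
        rho *= gamma                                  # Step 3: enlarge penalty
    raise RuntimeError("duality gap did not vanish within max_iter updates")


# Mock solvers (assumptions for the demo only, not real optimization calls).
def mock_outer(rho):
    return [0.0]


def mock_inner(x, rho):
    # The shared variable z shrinks to 0 once rho reaches 100.
    return [1.0], [1.0], [0.0], [0.0], [max(0.0, 1.0 - rho / 100.0)]


def mock_gap(x, y1, y2, w1, w2, z):
    return z[0]


demo = penalty_loop(mock_outer, mock_inner, mock_gap, rho=1.0, gamma=10.0)
print(demo)
```

In an actual implementation, `solve_outer` would call a global solver for the disjoint bilinear problem \(P_{\rho}\), and `solve_inner` a linear programming solver for \(P_{\rho}(x^{\rho})\).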

4 An application

In this section, we apply the proposed partially-shared PBLMF decision making model to a company making venture investments. Consider the investments with a CEO and two selected departments of the company. All decision entities have individual objectives, constraints, and variables and do not cooperate with one another. The departments within the company are also in an uncooperative situation, but they need to use the same warehouse in this company. The CEO’s decision takes the responses of the selected departments into consideration and aims to maximize the company’s profit. At the same time, the departments fully consider the CEO’s decision, and make a rational response to maximize their own profit. Under incomplete and asymmetric information, the CEO cannot directly observe either department’s effort or the inventory expense. It is therefore difficult for the CEO to design an investment planning model that aims to maximize the company’s profit (or minimize the company’s cost). In this case, the CEO may want to create a safety margin to bound the damage resulting from undesirable selections of the two departments.

To model the above venture investment problem, the notations are introduced as follows:

(1) The CEO (Leader)

- Objective \(G_{1}\): The aim is to maximize the company’s profit.
- Variables \((x_{1},x_{2})\):
  - \(x_{1}\): How much is used for the CEO’s investment in product 1.
  - \(x_{2}\): How much is used for the CEO’s investment in product 2.
- Constraint:
  - \(H_{1}\leqslant0\): The total investment cost for products 1 and 2.

(2) Two selected departments (Followers). Suppose that both department 1 (for product 1) and department 2 (for product 2) share the same warehouse.

- Department 1:
  - Objective \(G_{21}\): The aim is to maximize his total profit which includes his own profit in product 1 and a fraction of the company’s revenue.
  - Variables \((y_{1},z)\):
    - \(y_{1}\): The effort for completing product 1.
    - z: The inventory expense for product 1.
  - Constraints:
    - \(H_{21}\leqslant0\): The effort and cost of department 1 that links with the CEO’s investments.
    - \(H_{22}\leqslant0\): The maximum effort of department 1.
    - \(H_{23}\leqslant0\): The maximum inventory expense in product 1.
- Department 2:
  - Objective \(G_{31}\): The aim is to maximize his total profit which includes his own profit in product 2 and a fraction of the company’s revenue.
  - Variables \((y_{2},z)\):
    - \(y_{2}\): The effort for completing product 2.
    - z: The inventory expense for product 2.
  - Constraints:
    - \(H_{31}\leqslant0\): The effort and cost of department 2 that links with the CEO’s investments.
    - \(H_{32}\leqslant0\): The maximum effort of department 2.
    - \(H_{33}\leqslant0\): The maximum inventory expense in product 2.

Then a partially-shared linear PBLMF model of the company making venture investments is given as follows:

To use the proposed algorithm, we can equivalently transform problem (19) into the following problem:

Note that both (19) and (20) have the same solutions, and their optimal values are negatives of each other.

Choose \(\rho=1\) and \(\gamma=10\). The disjoint bilinear programming problems at Step 1 are solved by a commercial optimization software package BARON [27, 28]. By using the proposed algorithm, it is easy to see that \((x_{1}^{*},x_{2}^{*},y_{1}^{*},y_{2}^{*},z^{*})^{T}=(0,1,0.5, 0, 1)^{T}\) is a solution of problem (19), and the optimal value is 9.

5 Conclusions

This study addresses a partially-shared linear PBLMF programming problem in which there is a partially-shared variable among followers. Furthermore, the study presents the concept and a solution algorithm for such a problem. Finally, the partially-shared PBLMF model is applied to a company making venture investments. For future research, it will be interesting to develop modified intelligent algorithms for the partially-shared PBLMF model, to apply the model in various areas, and to explore the concept, algorithms, and applications of a PBLMF model in the referential-uncooperative situation.

References

[1] Dempe, S: Annotated bibliography on bilevel programming and mathematical problems with equilibrium constraints. Optimization 52, 333-359 (2003)

[2] Bard, JF: Practical Bilevel Optimization: Algorithms and Applications. Kluwer Academic, Dordrecht (1998)

[3] Dempe, S: Foundations of Bilevel Programming. Nonconvex Optimization and Its Applications Series. Kluwer Academic, Dordrecht (2002)

[4] Dempe, S, Kalashnikov, V, Pérez-Valdés, GA, Kalashnykova, N: Bilevel Programming Problems Theory, Algorithms and Applications to Energy Networks. Springer, Berlin (2015)

[5] Zhang, G, Lu, J, Gao, Y: Multi-Level Decision Making-Models, Methods and Applications. Springer, Berlin (2015)

[6] Loridan, P, Morgan, J: Weak via strong Stackelberg problem: new results. J. Glob. Optim. 8, 263-287 (1996)

[7] Zheng, Y, Wan, Z, Jia, S, Wang, G: A new method for strong-weak linear bilevel programming problem. J. Ind. Manag. Optim. 11, 529-547 (2015)

[8] Wiesemann, W, Tsoukalas, A, Kleniati, P, Rustem, B: Pessimistic bi-level optimisation. SIAM J. Optim. 23, 353-380 (2013)

[9] Colson, B, Marcotte, P, Savard, G: An overview of bilevel optimization. Ann. Oper. Res. 153, 235-256 (2007)

[10] Aboussoror, A, Mansouri, A: Weak linear bilevel programming problems: existence of solutions via a penalty method. J. Math. Anal. Appl. 304, 399-408 (2005)

[11] Aboussoror, A, Mansouri, A: Existence of solutions to weak nonlinear bilevel problems via MinSup and d.c. problems. RAIRO. Rech. Opér. 42, 87-103 (2008)

[12] Aboussoror, A, Adly, S, Jalby, V: Weak nonlinear bilevel problems: existence of solutions via reverse convex and convex maximization problems. J. Ind. Manag. Optim. 7, 559-571 (2011)

[13] Lignola, MB, Morgan, J: Topological existence and stability for Stackelberg problems. J. Optim. Theory Appl. 84, 145-169 (1995)

[14] Loridan, P, Morgan, J: New results on approximate solutions in two-level optimization. Optimization 20, 819-836 (1989)

[15] Loridan, P, Morgan, J: ϵ-Regularized two-level optimization problems: approximation and existence results. In: Proceeding of the Fifth French-German Optimization Conference, pp. 99-113. Springer, Berlin (1989)

[16] Dassanayaka, S: Methods of variational analysis in pessimistic bilevel programming. PhD thesis, Wayne State University (2010)

[17] Dempe, S, Mordukhovich, BS, Zemkoho, AB: Necessary optimality conditions in pessimistic bilevel programming. Optimization 63, 505-533 (2014)

[18] Zheng, Y, Wan, Z, Sun, K, Zhang, T: An exact penalty method for weak linear bilevel programming problem. J. Appl. Math. Comput. 42, 41-49 (2013)

[19] Červinka, M, Matonoha, C, Outrata, JV: On the computation of relaxed pessimistic solutions to MPECs. Optim. Methods Softw. 28, 186-206 (2013)

[20] Calvete, HI, Galé, C: Linear bilevel multi-follower programming with independent followers. J. Glob. Optim. 39, 409-417 (2007)

[21] Lu, J, Shi, C, Zhang, G: On bilevel multi-follower decision making: general framework and solutions. Inf. Sci. 176, 1607-1627 (2006)

[22] Lu, J, Zhang, G, Montero, J, Garmendia, L: Multifollower trilevel decision making models and system. IEEE Trans. Ind. Inform. 8, 974-985 (2012)

[23] Anandalingam, G, White, DJ: A solution for the linear static Stackelberg problem using penalty function. IEEE Trans. Autom. Control 35, 1170-1173 (1990)

[24] Campelo, M, Dantas, S, Scheimberg, S: A note on a penalty function approach for solving bi-level linear programs. J. Glob. Optim. 16, 245-255 (2000)

[25] White, DJ, Anandalingam, G: A penalty function for solving bi-level linear programs. J. Glob. Optim. 3, 397-419 (1993)

[26] Vaish, H, Shetty, CM: The bilinear programming problem. Nav. Res. Logist. Q. 23, 303-309 (1976)

[27] Sahinidis, NV: BARON 12.6.0: Global Optimization of Mixed-Integer Nonlinear Programs (User’s Manual) (2013)

[28] Tawarmalani, M, Sahinidis, NV: A polyhedral branch-and-cut approach to global optimization. Math. Program. 103, 225-249 (2005)

Acknowledgements

This research was supported by the National Natural Science Foundation of China (Nos. 11501233, 71471140, and 11401450).

Additional information

Competing interests

The authors declare that they have no competing interests.

Authors’ contributions

YZ and ZZ conceived and designed the study. YZ wrote and edited the manuscript. ZZ and LY examined all the steps of the proofs in this research and gave some advice. All authors read and approved the final manuscript.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 4.0 International License (http://creativecommons.org/licenses/by/4.0/), which permits unrestricted use, distribution, and reproduction in any medium, provided you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons license, and indicate if changes were made.

About this article

Cite this article

Zheng, Y., Zhu, Z. & Yuan, L. Partially-shared pessimistic bilevel multi-follower programming: concept, algorithm, and application. J Inequal Appl 2016, 15 (2016). https://doi.org/10.1186/s13660-015-0956-1

DOI: https://doi.org/10.1186/s13660-015-0956-1