Abstract

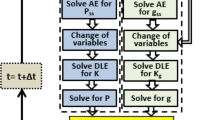

This paper studies data-driven, learning-based methods for the finite-horizon optimal control of linear time-varying discrete-time systems. First, a novel finite-horizon Policy Iteration (PI) method for linear time-varying discrete-time systems is presented, and its connections with existing infinite-horizon PI methods are discussed. Then, data-driven off-policy PI and Value Iteration (VI) algorithms are derived to find approximately optimal controllers when the system dynamics are completely unknown. Under mild conditions, the proposed data-driven off-policy algorithms converge to the optimal solution. Finally, the effectiveness and feasibility of the developed methods are validated on a practical example of spacecraft attitude control.
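To make the model-based policy iteration idea concrete, the following is a minimal Python/NumPy sketch under stated assumptions, not the authors' algorithm. It assumes the standard finite-horizon LQ problem x_{k+1} = A_k x_k + B_k u_k over k = 0, ..., N-1, with cost x_N^T Q_N x_N plus the sum of x_k^T Q_k x_k + u_k^T R_k u_k, time-varying linear gains u_k = -K_k x_k, and fully known A_k, B_k. It alternates policy evaluation (a backward Lyapunov-type recursion) with policy improvement and compares the result with the backward Riccati difference equation. The data-driven off-policy PI and VI algorithms of the paper, which avoid using A_k and B_k, are not reproduced here; all function names and the random test system are hypothetical.

```python
import numpy as np


def riccati_gains(A, B, Q, R, QN):
    """Reference solution: backward Riccati difference equation (model-based)."""
    N = len(A)
    P = [None] * (N + 1)
    K = [None] * N
    P[N] = QN
    for k in reversed(range(N)):
        S = R[k] + B[k].T @ P[k + 1] @ B[k]
        K[k] = np.linalg.solve(S, B[k].T @ P[k + 1] @ A[k])
        P[k] = Q[k] + A[k].T @ P[k + 1] @ A[k] - A[k].T @ P[k + 1] @ B[k] @ K[k]
    return K, P


def evaluate_policy(A, B, Q, R, QN, K):
    """Policy evaluation for u_k = -K_k x_k:
    P_k = Q_k + K_k^T R_k K_k + (A_k - B_k K_k)^T P_{k+1} (A_k - B_k K_k), P_N = Q_N."""
    N = len(A)
    P = [None] * (N + 1)
    P[N] = QN
    for k in reversed(range(N)):
        Acl = A[k] - B[k] @ K[k]
        P[k] = Q[k] + K[k].T @ R[k] @ K[k] + Acl.T @ P[k + 1] @ Acl
    return P


def improve_policy(A, B, R, P):
    """Policy improvement: K_k = (R_k + B_k^T P_{k+1} B_k)^{-1} B_k^T P_{k+1} A_k."""
    return [
        np.linalg.solve(R[k] + B[k].T @ P[k + 1] @ B[k], B[k].T @ P[k + 1] @ A[k])
        for k in range(len(A))
    ]


def finite_horizon_pi(A, B, Q, R, QN, K0, iters):
    """Hypothetical model-based finite-horizon PI: evaluate, then improve, `iters` times."""
    K = K0
    for _ in range(iters):
        P = evaluate_policy(A, B, Q, R, QN, K)
        K = improve_policy(A, B, R, P)
    return K, evaluate_policy(A, B, Q, R, QN, K)


# Hypothetical random time-varying system, used only for illustration.
rng = np.random.default_rng(0)
n, m, N = 3, 1, 10
A = [np.eye(n) + 0.1 * rng.standard_normal((n, n)) for _ in range(N)]
B = [rng.standard_normal((n, m)) for _ in range(N)]
Q = [np.eye(n) for _ in range(N)]
R = [np.eye(m) for _ in range(N)]
QN = 10.0 * np.eye(n)
K0 = [np.zeros((m, n)) for _ in range(N)]  # any initial gains; no stabilizing policy required

K_pi, P_pi = finite_horizon_pi(A, B, Q, R, QN, K0, iters=N)
K_opt, P_opt = riccati_gains(A, B, Q, R, QN)
err = max(np.abs(Ka - Kb).max() for Ka, Kb in zip(K_pi, K_opt))
print(f"max gain error after N iterations: {err:.2e}")
```

In this sketch the improvement at step k only uses P_{k+1}, which depends only on the gains at steps k+1, ..., N-1. Hence, in exact arithmetic, after i iterations the gains K_k for k >= N-i are already optimal, and N iterations recover the Riccati solution from any initial gains.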

Additional information

The work of B. Pang and Z.-P. Jiang has been supported in part by the National Science Foundation (No. ECCS-1501044).

Bo PANG received the B.Sc. degree in Automation from Beihang University, Beijing, China, in 2014, and the M.Sc. degree in Control Science and Engineering from Shanghai Jiao Tong University, Shanghai, China, in 2017. He is currently working toward the Ph.D. degree with the Control and Networks Lab, Department of Electrical and Computer Engineering, Tandon School of Engineering, New York University, Brooklyn, NY, U.S.A. His research interests include optimal control, approximate/adaptive dynamic programming, and reinforcement learning.

Tao BIAN received the B.Eng. degree in Automation from Huazhong University of Science and Technology, Wuhan, China, in 2012, and the M.Sc. and Ph.D. degrees in Electrical Engineering from the Tandon School of Engineering, New York University, Brooklyn, NY, in 2014 and 2017, respectively. He is currently a quantitative finance analyst and assistant vice president at Bank of America Merrill Lynch, One Bryant Park, New York. His research interests include reinforcement learning and the control and optimization of stochastic systems.

Zhong-Ping JIANG received the B.Sc. degree in Mathematics from the University of Wuhan, Wuhan, China, in 1988, the M.Sc. degree in Statistics from the University of Paris XI, Paris, France, in 1989, and the Ph.D. degree in Automatic Control and Mathematics from the École des Mines de Paris, Paris, in 1993. He is currently a Professor of Electrical and Computer Engineering in the Department of Electrical and Computer Engineering, Tandon School of Engineering, New York University, Brooklyn, NY, U.S.A. He was named a Highly Cited Researcher by Web of Science (2018) and has coauthored the books Stability and Stabilization of Nonlinear Systems (Springer, 2011), Nonlinear Control of Dynamic Networks (Taylor & Francis, 2014), Robust Adaptive Dynamic Programming (Wiley-IEEE Press, 2017), and Nonlinear Control Under Information Constraints (Science Press, 2018). His current research interests include stability theory, robust/adaptive/distributed nonlinear control, adaptive dynamic programming, and their applications to information, mechanical, and biological systems. Dr. Jiang is an IEEE Fellow and an IFAC Fellow.

About this article

Cite this article

Pang, B., Bian, T. & Jiang, ZP. Adaptive dynamic programming for finite-horizon optimal control of linear time-varying discrete-time systems. Control Theory Technol. 17, 73–84 (2019). https://doi.org/10.1007/s11768-019-8168-8

DOI: https://doi.org/10.1007/s11768-019-8168-8