Abstract

Modelling bias is an important consideration when dealing with inexpert annotations. We are concerned with training a classifier to perform sentiment analysis on news media articles, some of which have been manually annotated by volunteers. The classifier is trained on the words in the articles and then applied to non-annotated articles. In previous work we found that a joint estimation of the annotator biases and the classifier parameters performed better than estimation of the biases followed by training of the classifier. An important question follows from this result: can the annotators be usefully clustered into either predetermined or data-driven clusters, based on their biases? If so, such a clustering could be used to select, drop or otherwise categorise the annotators in a crowdsourcing task. This paper presents work on fitting a finite mixture model to the annotators’ bias. We develop a model and an algorithm and demonstrate its properties on simulated data. We then demonstrate the clustering that exists in our motivating dataset, namely the analysis of potentially economically relevant news articles from Irish online news sources.

Similar content being viewed by others

Notes

These words are: “said”, “its”, “year”, “a”, “s”, “to”, “by”, “irish”, “has”, “and”, “that”, “at”, “as”, “an”, “they”, “for”, “of”, “are”, “on”, “not”, “but”, “last”, “this”, “have”, “from”, “was”, “with”, “it”, “the”, “in”, “he”, “would”, “be”, “will”, “is”, “their”, “mr”, “were”, “had”, “which”, “we”, “ireland”, “been”, “his”, “2009”, “per”, “cent”.

Abbreviations

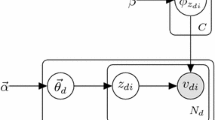

- \(K\) :

-

Number of annotators

- \(N\) :

-

Number of unique word terms in the articles

- \(A\) :

-

Number of articles

- \(B\) :

-

Number of annotator clusters

- \(J\) :

-

Number of article types; 3 in our examples

- \({y}\) :

-

\(A\times J \times K\) binary matrix of annotations

- \({w}\) :

-

\(A\times N\) binary matrix of word term presences

- \({\varvec{T}}\) :

-

\(A\times J\) length binary indicators for the types of the articles

- \({\varvec{Z}}\) :

-

\(K\times B\) length binary indicators for the cluster memberships of the annotators

- \(\pi \) :

-

\(B\times J\times J\) cluster specific matrix of annotation error rates

- \(\theta \) :

-

\(N\times J\) matrix of word term classifier probabilities

References

Aitchison J (1986) The statistical analysis of compositional data. Monographs on statistics and applied probability. Chapman and Hall, London

Biernacki C, Celeux G, Govaert G (1999) An improvement of the NEC criterion for assessing the number of clusters in a mixture model. Pattern Recognit Lett 20(3):267–272

Blitzer J, Dredze M, Pereira F (2007) Biographies, bollywood, boomboxes and blenders: domain adaptation for sentiment classification. In: Carroll JA, van den Bosch A, Zaenen A (eds) Proceedings of the 45th annual meeting of the association for computational linguistics (ACL 2007), pp 187–205

Bollen J, Mao H (2011) Twitter mood as a stock market predictor. Computer 44(10):91–94

Brew A, Greene D, Cunningham P (2010a) The interaction between supervised learning and crowdsourcing. In: NIPS workshop on computational social science and the wisdom of crowds

Brew A, Greene D, Cunningham P (2010b) Using crowdsourcing and active learning to track sentiment in online media. In: Coelho H, Studer R, Wooldridge M (eds) Proceedings of the 19th European conference on artificial intelligence (ECAI 2010), IOS Press, pp 1–11

Celeux G, Soromenho G (1996) An entropy criterion for assessing the number of clusters in a mixture model. J Classif 13(2):195–212

Dawid A, Skene A (1979) Maximum likelihood estimation of observer error-rates using the EM algorithm. J R Stat Soc Ser C (Appl Stat) 28(1):20–28

Dempster AP, Laird NM, Rubin DB (1977) Maximum likelihood from incomplete data via the EM algorithm. J R Stat Soc Ser B 39(1):1–38

Domingos P, Pazzani M (1997) On the optimality of the simple Bayesian classifier under zero-one loss. Mach Learn 29:103–130

Fraley C, Raftery AE (2002) Model-based clustering, discriminant analysis, and density estimation. J Am Stat Assoc 97(458):611–631

Govaert G, Nadif M (2003) Clustering with block mixture models. Pattern Recognit 36(2):463–473

Govaert G, Nadif M (2005) An EM algorithm for the block mixture model. IEEE Trans Pattern Anal Mach Intell 27(4):643–647

Govaert G, Nadif M (2008) Block clustering with Bernoulli mixture models: comparison of different approaches. Comput Stat Data Anal 52(6):3233–3245

Hand DJ, Yu K (2001) Idiot’s Bayes—not so stupid after all? Int Stat Rev 69(3):385–398

Hathaway R (1986) Another interpretation of the EM algorithm for mixture distributions. Stat Probab Lett 4(2):53–56

Kass R, Raftery AE (1995) Bayes factors and model uncertainty. J Am Stat Assoc 90:773–795

Keribin C (2000) Consistent estimation of the order of mixture models. Sankhyā Ser A 62(1):49–66

Leroux BG (1992) Consistent estimation of a mixing distribution. Ann Stat 20:1350–1360

Long B, Zhang Z, Yu P (2007) A probabilistic framework for relational clustering. In: Berkhin P, Caruana R, Wu X (eds) Proceedings of the 13th ACM SIGKDD international conference on knowledge discovery and data mining (KDD 2007), ACM, pp 470–479

McLachlan G, Peel D (2000) Finite mixture models. Wiley-Interscience, Hoboken

McNicholas PD, Murphy TB (2008) Parsimonious Gaussian mixture models. Stat Comput 18(3):285–296

Miller RG (1974) The jackknife—a review. Biometrika 61(1):1–15

Pang B, Lee L (2004) A sentimental education: sentiment analysis using subjectivity summarization based on minimum cuts. In: Scott D, Daelemans W, Walker MA (eds) Proceedings of the 42nd annual meeting on association for computational linguistics (ACL 2004), pp 271–278

Quenouille M (1949) Approximate tests of correlation in time-series. J R Stat Soc Ser B (Methodol) 11(1):68–84

Raykar V, Yu S, Zhao L, Valadez G, Florin C, Bogoni L, Moy L (2010) Learning from crowds. J Mach Learn Res 11:1297–1322

Raykar VC, Yu S (2012) Eliminating spammers and ranking annotators for crowdsourced labeling tasks. J Mach Learn Res 13:491–518

Salter-Townshend M, Murphy T (2012) Sentiment analysis of online media. In: Lausen B, van del Poel D, Ultsch A (eds) Algorithms from and for nature and life. Studies in classification, data analysis, and knowledge organization. Springer, Berlin

Schwarz G (1978) Estimating the dimension of a model. Ann Stat 6(2):461–464

Shan H, Banerjee A (2008) Bayesian co-clustering. In: Proceedings of the 8th IEEE international conference on data mining (ICDM 2008), IEEE, pp 530–539

Thomas M, Pang B, Lee L (2006) Get out the vote: determining support or opposition from congressional floor-debate transcripts. In: Proceedings of the 2006 conference on empirical methods in natural language processing (EMNLP 2006), pp 327–335

Tukey JW (1958) Bias and confidence in not quite large samples. Ann Math Stat 29(2):614

Author information

Authors and Affiliations

Corresponding author

Rights and permissions

About this article

Cite this article

Salter-Townshend, M., Murphy, T.B. Mixtures of biased sentiment analysers. Adv Data Anal Classif 8, 85–103 (2014). https://doi.org/10.1007/s11634-013-0150-6

Received:

Revised:

Accepted:

Published:

Issue Date:

DOI: https://doi.org/10.1007/s11634-013-0150-6