Abstract

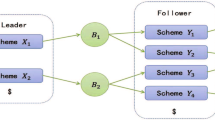

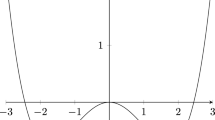

In this paper, we present a new method for finding a fixed local-optimal policy for computing the customer lifetime value. The method is developed for a class of ergodic controllable finite Markov chains. We propose an approach based on a non-converging state-value function that fluctuates (increases and decreases) between states of the dynamic process. We prove that it is possible to represent that function in a recursive format using a one-step-ahead fixed-optimal policy. Then, we provide an analytical formula for the numerical realization of the fixed local-optimal strategy. We also present a second approach to the same problem, based on linear programming, that implements the c-variable method to make the problem computationally tractable. Finally, we show that these two approaches are related: after a finite number of iterations, our proposed approach converges to the same result as the linear programming method. We also present a non-traditional approach for ergodicity verification. The validity of the proposed methods is demonstrated theoretically and by simulated credit-card marketing experiments that compute the customer lifetime value for both an optimization and a game-theory approach.
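As a rough illustration of the c-variable idea mentioned above, the following sketch treats the customer lifetime value as the long-run average profit of a stationary policy on a small ergodic controlled Markov chain. It is not the authors' algorithm: the two-state model, the matrices `P` and `U`, and all function names are hypothetical, and brute-force enumeration of deterministic policies stands in for the linear program, which is only feasible for toy models. The quantity `c[i][k]` is the stationary joint probability of being in state `i` and taking action `k`, so the average profit is linear in `c`.

```python
from itertools import product

# Hypothetical 2-state, 2-action customer model; all numbers are illustrative.
# States: 0 = inactive customer, 1 = active customer.
# P[k][i][j]: probability of moving from state i to state j under action k.
P = [
    [[0.9, 0.1], [0.6, 0.4]],  # action 0: no promotion
    [[0.5, 0.5], [0.2, 0.8]],  # action 1: promotion
]
# U[i][k]: one-step profit in state i under action k.
U = [[0.0, -1.0], [5.0, 4.0]]


def stationary_distribution(T, iters=1000):
    """Approximate the stationary distribution of an ergodic, aperiodic chain
    with transition matrix T by repeated multiplication (power iteration)."""
    n = len(T)
    p = [1.0 / n] * n
    for _ in range(iters):
        p = [sum(p[i] * T[i][j] for i in range(n)) for j in range(n)]
    return p


def evaluate(policy):
    """Return (average profit, c) for a deterministic policy, where
    policy[i] is the action taken in state i and c[i][k] is the stationary
    probability of the pair (state i, action k)."""
    n_states, n_actions = len(U), len(P)
    T = [P[policy[i]][i] for i in range(n_states)]
    p = stationary_distribution(T)
    c = [[p[i] if k == policy[i] else 0.0 for k in range(n_actions)]
         for i in range(n_states)]
    value = sum(c[i][k] * U[i][k]
                for i in range(n_states) for k in range(n_actions))
    return value, c


def best_policy():
    """Enumerate all deterministic stationary policies and keep the best one."""
    n_states, n_actions = len(U), len(P)
    candidates = product(range(n_actions), repeat=n_states)
    best = max(candidates, key=lambda d: evaluate(d)[0])
    return best, evaluate(best)[0]


if __name__ == "__main__":
    policy, value = best_policy()
    print("policy:", policy, "average profit per step:", round(value, 4))
```

In a linear programming formulation, the entries of `c` would become the decision variables, constrained to be non-negative, to sum to one, and to satisfy the stationarity balance equations. A policy is then recovered by normalizing each row of `c`.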

Author information

Julio B. Clempner has more than ten years of experience in the field of management consulting. He holds a Ph.D. in Computer Science from the Center for Computing Research at the National Polytechnic Institute. He specializes in the application of high technology related to Project Management, Analysis and Design of Software, Development of Software (ad-hoc and products), Information Technology Strategic Planning, Evaluation of Software, Business Process Reengineering and IT Government, for infusing advanced computing technologies into diverse lines of business. Julio Clempner brings strong technology leadership experience to technical engagements, along with broad functional expertise in big data, including Large Databases, Marketing, Security Games, Statistical Analysis, Risk, Optimization, Balanced Scorecard, BICC, and Game Theory. He has experience architecting, implementing, and supporting database and data warehouse implementations in the private and public sectors. Dr. Clempner’s research interests are focused on justifying and introducing the Lyapunov equilibrium point in game theory. This interest has led to several streams of research. One stream is on the use of Markov decision processes for formalizing the previous ideas, replacing Bellman’s equation with a Lyapunov-like function. A second stream is on the use of Petri nets as a language for modeling decision processes and game theory, introducing colors, hierarchy, etc. The final stream examines the possibility of bringing together modal logic, decision processes and game theory. He is also a member of the Mexican National System of Researchers (SNI), and of several North American and European professional organizations.

Alexander S. Poznyak graduated from Moscow Physical Technical Institute (MPhTI) in 1970. He earned Ph.D. and Doctor degrees from the Institute of Control Sciences of the Russian Academy of Sciences in 1978 and 1989, respectively. He has directed 35 PhD theses (28 in Mexico). He has published more than 180 papers in different international journals and 10 books, including “Adaptive Choice of Variants” (Nauka, Moscow, 1986), “Learning Automata: Theory and Applications” (Elsevier-Pergamon, 1994), “Learning Automata and Stochastic Programming” (Springer-Verlag, 1997), “Self-learning Control of Finite Markov Chains” (Marcel Dekker, 2000), “Differential Neural Networks: Identification, State Estimation and Trajectory Tracking” (World Scientific, 2001), “Advanced Mathematical Tools for Automatic Control Engineers”, Vol. 1: Deterministic Technique (Elsevier, 2008) and Vol. 2: Stochastic Technique (Elsevier, 2009), “Robust Maximum Principle: Theory and Applications” (Birkhäuser, 2012), and “Robust Output Optimal Control via Integral Sliding Mode” (Birkhäuser, 2014). He is a Regular Member of the Mexican Academy of Sciences and of the System of National Investigators (SNI-3). He is a Fellow of the IMA (Institute of Mathematics and Its Applications, Essex, UK) and Associate Editor of the Oxford-IMA Journal on Mathematical Control and Information, of Kybernetika, and of the Iberoamerican Int. Journal on “Computations and Systems”. He was also Associate Editor of CDC and ACC and a member of the Editorial Board of IEEE CSS. He is a member of the Evaluation Committee of SNI (Ministry of Science and Technology) responsible for Engineering Science and Technology Foundation in Mexico, and a member of the Award Committee of the Premium of Mexico on Science and Technology.

About this article

Cite this article

Clempner, J.B., Poznyak, A.S. Simple computing of the customer lifetime value: A fixed local-optimal policy approach. J. Syst. Sci. Syst. Eng. 23, 439–459 (2014). https://doi.org/10.1007/s11518-014-5260-y

DOI: https://doi.org/10.1007/s11518-014-5260-y