Abstract

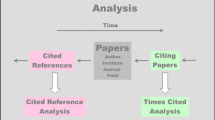

Bibliometrics is a relatively young and rapidly evolving discipline. Essential to it are bibliometric databases and the information they hold on scientific publications and the relevant citations. Unfortunately, databases are affected by errors, whose main consequence is omitted citations, i.e., citations that should be ascribed to a certain (cited) paper but, for some reason, are lost. This paper studies the impact of omitted citations on the bibliometric statistics of the major Manufacturing journals. The methodology is based on a recent automated algorithm, introduced in (Franceschini et al., J Am Soc Inf Sci Technol 64(10):2149–2156, 2013), which is applied to the Web of Science (WoS) and Scopus databases. Two important results of this analysis are that: (i) on average, the omitted-citation rate (p) of WoS is slightly higher than that of Scopus; and (ii) for both databases, p values do not change drastically from journal to journal and tend to decrease slightly with the issue year of the citing papers. Although omitted citations might therefore not seem to be a substantial problem, they may significantly affect indicators based on citation statistics. This paper analyses the effect of omitted citations on popular bibliometric indicators such as the average citations per paper and its most famous variant, the ISI Impact Factor, showing that journal classifications based on these indicators may lead to questionable discriminations.

Notes

According to the 2011 JCR (Thomson Reuters 2015).

The same portfolio of cited/citing papers was used in another work of ours (Franceschini et al. 2014), which demonstrates the link between the omitted-citation rate and the publishers (e.g., Elsevier, Springer, Taylor & Francis, etc.) of the citing papers.

The authors are aware that a more rigorous test would examine the differences between the CPP* values of pairs of journals (Schenker and Gentleman 2001). Nevertheless, the qualitative approach adopted here is simpler and more straightforward.

References

Adam, D. (2002). Citation analysis: The counting house. Nature, 415(6873), 726–729.

Arnold, D. N., & Fowler, K. K. (2011). Nefarious numbers. Notices of the American Mathematical Society, 58(3), 434–437.

Bar-Ilan, J. (2010). Ranking of information and library science journals by JIF and by h-type indices. Journal of Informetrics, 4(2), 141–147.

Buchanan, R. A. (2006). Accuracy of cited references: The role of citation databases. College & Research Libraries, 67(4), 292–303.

DORA. (2013). San Francisco declaration on research assessment. http://am.ascb.org/dora/. 20 May 2014.

ERA. (2010). Excellence in research for Australia initiative. http://www.arc.gov.au/era/era_2010/era_2010.htm. 20 May 2014.

Falagas, M. E., Kouranos, V. D., Arencibia-Jorge, R., & Karageorgopoulos, D. E. (2008). Comparison of SCImago journal rank indicator with journal impact factor. The FASEB Journal, 22(8), 2623–2628.

Franceschini, F., & Maisano, D. (2011). Influence of database mistakes on journal citation analysis: remarks on the paper by Franceschini and Maisano, QREI (2010). Quality and Reliability Engineering International, 27(7), 969–976.

Franceschini, F., Maisano, D., & Mastrogiacomo, L. (2013). A novel approach for estimating the omitted-citation rate of bibliometric databases. Journal of the American Society for Information Science and Technology, 64(10), 2149–2156.

Franceschini, F., Maisano, D., & Mastrogiacomo, L. (2014). Scientific Journal Publishers and Omitted Citations in Bibliometric Databases: Any Relationship? Journal of Informetrics, 8(3), 751–765.

Franceschini, F., Maisano, D., & Mastrogiacomo, L. (2015). Errors in DOI indexing by bibliometric databases. To appear in Scientometrics. doi:10.1007/s11192-014-1503-4.

Hicks, D. (2009). Evolving regimes of multi-university research evaluation. Higher Education, 57, 393–404.

Jacsó, P. (2006). Deflated, inflated and phantom citation counts. Online Information Review, 30(3), 297–309.

Jacsó, P. (2012). Grim tales about the impact factor and the h-index in the Web of Science and the Journal Citation Reports databases: Reflections on Vanclay’s criticism. Scientometrics, 92(2), 325–354.

Labbé, C. (2010). Ike Antkare, one of the great stars in the scientific firmament. ISSI Newsletter, 6(2), 48–52.

Li, J., Burnham, J. F., Lemley, T., & Britton, R. M. (2010). Citation analysis: Comparison of Web of Science, Scopus, Scifinder, and Google Scholar. Journal of Electronic Resources in Medical Libraries, 7(3), 196–217.

Lowry, P. M., Humpherys, S. L., Malwitz, J., & Nix, J. (2007). A scientometric study of the perceived quality of business and technical communication journals. IEEE Transactions on Professional Communication, 50(4), 352–378.

Meho, L. I., & Yang, K. (2007). Impact of data sources on citation counts and rankings of LIS faculty: Web of Science versus Scopus and Google Scholar. Journal of the American Society for Information Science and Technology, 58(13), 2105–2125.

Moed, H. F. (2005). Citation analysis in research evaluation. Information sciences and knowledge management: Vol. 9. Dordrecht: Springer. http://dx.doi.org/10.1007/1-4020-3714-7. ISBN: 978-1-4020-3713-9.

Moed, H. F. (2011). The source-normalized impact per paper (SNIP) is a valid and sophisticated indicator of journal citation impact. Journal of the American Society for Information Science and Technology, 62(1), 211–213.

Neuhaus, C., & Daniel, H. D. (2008). Data sources for performing citation analysis: An overview. Journal of Documentation, 64(2), 193–210.

Olensky, M. (2013). Accuracy assessment for bibliographic data. Proceedings of the 13th international conference of the international society for scientometrics and informetrics (ISSI), Vol. 2, pp. 1850–1851, Vienna, Austria.

Ross, S. M. (2009). Introduction to probability and statistics for engineers and scientists. New York: Academic Press.

Rossner, M., Van Epps, H., & Hill, E. (2008). Irreproducible results: A response to Thomson Scientific. The Journal of General Physiology, 131(2), 183–184.

Schenker, N., & Gentleman, J. F. (2001). On judging the significance of differences by examining the overlap between confidence intervals. The American Statistician, 55(3), 182–186.

Schubert, A., & Glänzel, W. (1983). Statistical reliability of comparisons based on the citation impact of scientific publications. Scientometrics, 5(1), 59–74.

Scopus Elsevier. (2015). Scopus content coverage. http://www.scopus.com. 20 May 2014.

Thomson Reuters. (2015). http://thomsonreuters.com/products_services/science/science_products/a-z/journal_citation_reports/. 20 May 2014.

Van Noorden, R. (2013). New record: 66 journals banned for boosting impact factor with self-citations. Nature News Blog. http://blogs.nature.com/news/2013/06/new-record-66-journals-banned-for-boosting-impact-factor-with-self-citations.html. 20 May 2014.

VQR. (2011). Italian quality research evaluation VQR 2004–2010. http://www.anvur.org/anvur/. 20 May 2014.

Zitt, M. (2010). Citing-side normalization of journal impact: A robust variant of the Audience Factor. Journal of Informetrics, 4(3), 392–406.

Appendix

Analysis of the distribution of omitted citations

Study at the level of the journal of cited papers

The dispersion of the $p_J$ value of each journal (defined in Sect. 4.1) can be roughly estimated by means of a simple expedient. Each $p_J$ value can be expressed as:

$$p_J = \frac{\sum_i (p_J)_i \, (\gamma_J)_i}{\sum_i (\gamma_J)_i} = \frac{\sum_i (\omega_J)_i}{\sum_i (\gamma_J)_i} \qquad (8)$$

where $(p_J)_i = (\omega_J)_i / (\gamma_J)_i$ is the percentage of citations omitted by the database of interest, referring to the $i$-th article published by $J$.

Equation 8 shows that $p_J$ can be seen as a weighted average of the omitted-citation rates of individual papers (i.e., the $(p_J)_i$ values). Each contribution is weighted by the number of "theoretically overlapping" citations of the $i$-th article of interest (i.e., $(\gamma_J)_i$). Of course, articles with no citations have zero weight.
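The equivalence between the weighted average of per-article rates and the pooled ratio of totals can be checked numerically. The following is only a minimal sketch, not the authors' code: the function names and the per-article counts are hypothetical, with `omitted` standing for the omitted citations of each article and `overlapping` for its theoretically overlapping citations.

```python
from fractions import Fraction


def pooled_rate(omitted, overlapping):
    """Journal-level rate: total omitted citations over total overlapping citations."""
    return Fraction(sum(omitted), sum(overlapping))


def weighted_mean_rate(omitted, overlapping):
    """Weighted average of per-article rates, weighted by overlapping citations.

    Articles with zero overlapping citations carry zero weight and are skipped.
    """
    pairs = [(w, g) for w, g in zip(omitted, overlapping) if g > 0]
    total_weight = sum(g for _, g in pairs)
    return sum(Fraction(w, g) * g for w, g in pairs) / total_weight


# Hypothetical counts for five articles of one journal (illustration only).
omega = [0, 2, 0, 1, 0]   # omitted citations per article
gamma = [10, 8, 0, 4, 3]  # theoretically overlapping citations per article

print(pooled_rate(omega, gamma))         # 3/25
print(weighted_mean_rate(omega, gamma))  # 3/25
```

Exact fractions are used so that the two quantities can be compared without floating-point rounding.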

Since $p_J$ is a weighted quantity, the distribution of the $(p_J)_i$ values can be represented by a special box-plot based on weighted quartiles, defined as ${}^{w}Q^{(1)}_J$, ${}^{w}Q^{(2)}_J$ and ${}^{w}Q^{(3)}_J$, i.e., the weighted first, second (or weighted median) and third quartiles of the $(p_J)_i$ values. Weighted quartiles are reported in Table 9. These indicators are obtained by sorting the $(p_J)_i$ values of the articles of interest in ascending order and taking the values at which the cumulative weight equals 25, 50 and 75 %, respectively, of the total weight.
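The construction of the weighted quartiles can be sketched in a few lines. This is an illustrative sketch under stated assumptions, not the authors' implementation: the function name and the data are hypothetical, and the quartile is taken as the first sorted value at which the cumulative weight reaches or exceeds the target share, which is one possible reading of the rule (the text does not specify how ties or intermediate positions are handled).

```python
def weighted_quantile(values, weights, q):
    """Smallest value whose cumulative weight reaches a share q of the total weight.

    Assumption: "reaches or exceeds" is used because the cumulative weight
    rarely equals the target share exactly.
    """
    pairs = sorted((v, w) for v, w in zip(values, weights) if w > 0)
    if not pairs:
        raise ValueError("at least one positive weight is required")
    threshold = q * sum(w for _, w in pairs)
    cumulative = 0
    for value, weight in pairs:
        cumulative += weight
        if cumulative >= threshold:
            return value
    return pairs[-1][0]  # guard against floating-point shortfall


# Hypothetical per-article omitted-citation rates and their weights
# (number of theoretically overlapping citations); illustration only.
rates = [0.0, 0.25, 0.25, 0.0]
weights = [10, 8, 4, 3]

q1, q2, q3 = (weighted_quantile(rates, weights, q) for q in (0.25, 0.50, 0.75))
weighted_mean = sum(r * w for r, w in zip(rates, weights)) / sum(weights)
print(q1, q2, q3, weighted_mean)  # 0.0 0.0 0.25 0.12
```

With these made-up numbers the weighted median is zero while the weighted average is positive, which is the pattern that arises when omitted citations are concentrated in a few articles.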

The differences between the $(p_J)_i$ distributions of the Manufacturing journals do not appear significant for either WoS or Scopus, because the notches of most journals overlap. In particular, most of the notches are "collapsed" on the line corresponding to $(p_J)_i = 0$, and all ${}^{w}Q^{(1)}_J$ values are zero, as are almost all ${}^{w}Q^{(2)}_J$ values, for both WoS and Scopus. This result indicates that omitted citations are generally concentrated in a relatively small number of articles. As confirmation, for each of the journals analyzed, the weighted median of the $(p_J)_i$ values (i.e., ${}^{w}Q^{(2)}_J$ in Table 9) is systematically lower than the weighted average, i.e., $p_J$.

Study at the level of the age of citing papers

The dispersion of the $p_Y$ values of each journal (defined in Sect. 4.2) can be roughly estimated by means of an expedient similar to that presented in Sect. 6.1.1. Each $p_Y$ value can be expressed as:

$$p_Y = \frac{\sum_i (p_Y)_i \, (\gamma_Y)_i}{\sum_i (\gamma_Y)_i} = \frac{\sum_i (\omega_Y)_i}{\sum_i (\gamma_Y)_i} \qquad (9)$$

where $(p_Y)_i = (\omega_Y)_i / (\gamma_Y)_i$ is the percentage of citations omitted by the database of interest, among those obtained in the year $Y$, referring to the $i$-th article examined.

Equation 9 shows that the $p_Y$ value relating to a database can be seen as a weighted average of the omitted-citation rates of individual papers ($(p_Y)_i$). These contributions have a variable weight, given by the number of theoretically overlapping citations ($(\gamma_Y)_i$).

The dispersion of the $(p_Y)_i$ values can be roughly estimated by examining the relevant weighted quartiles, defined as ${}^{w}Q^{(1)}_Y$, ${}^{w}Q^{(2)}_Y$ and ${}^{w}Q^{(3)}_Y$. The construction of these indicators is analogous to that described in Sect. 4.1.

The surprising result is that all the weighted quartiles are zero for both databases. This result is not incompatible with the fact that the weighted quartiles seen for individual journals (in Table 9) were not necessarily all zero. In this new case, we used time-windows of a single year when counting the (omitted) citations of citing papers; the incidence of articles with zero omitted citations is therefore greater than in the previous case. The practical consequence is that all non-zero $(p_Y)_i$ values fall beyond the third weighted quartile of the corresponding (weighted) distribution. As an example, the graph in Fig. 7 represents the weighted cumulative distribution of the $(p_Y)_i$ values for the year 2012, according to WoS. It can be seen that the first seventy-six weighted percentiles are all zero. Similar results are found for the remaining years.

"Weighted" box-plot of the $(p_J)_i$ values relating to the papers in each journal ($J$), according to the WoS database. ${}^{w}Q^{(1)}_J$, ${}^{w}Q^{(2)}_J$ and ${}^{w}Q^{(3)}_J$ are the first, second and third weighted quartiles of the distributions of interest. Journal abbreviations are reported in Table 3.

"Weighted" box-plot of the $(p_J)_i$ values relating to the papers in each journal ($J$), according to the Scopus database. ${}^{w}Q^{(1)}_J$, ${}^{w}Q^{(2)}_J$ and ${}^{w}Q^{(3)}_J$ are the first, second and third weighted quartiles of the distributions of interest. Journal abbreviations are reported in Table 3.

This result confirms that, although the $p_Y$ values of the two databases tend to decrease over time, these variations are quite weak from a statistical viewpoint.
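The observation that every weighted quartile is zero follows directly from how much weight sits on articles with no omitted citations: if such articles carry at least 75 % of the total weight, the weighted cumulative distribution is still at zero when it reaches the third quartile. A minimal sketch of this check is given below; the function name and the numbers are hypothetical and chosen only for illustration, not taken from the paper's data.

```python
def zero_weight_share(rates, weights):
    """Fraction of the total weight carried by articles whose omitted-citation rate is zero."""
    total = sum(weights)
    if total <= 0:
        raise ValueError("total weight must be positive")
    return sum(w for r, w in zip(rates, weights) if r == 0) / total


# Hypothetical single-year data: per-article omitted-citation rates and
# weights (theoretically overlapping citations received in that year).
year_rates = [0.0, 0.0, 0.0, 0.5, 0.2]
year_weights = [30, 25, 21, 14, 10]

share = zero_weight_share(year_rates, year_weights)
# A weighted quartile is zero whenever its level does not exceed the zero-weight share.
quartiles_all_zero = all(level <= share for level in (0.25, 0.50, 0.75))
print(share, quartiles_all_zero)  # 0.76 True
```

In this made-up case the zero-rate articles hold 76 % of the weight, so all non-zero rates lie beyond the third weighted quartile.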

Additional tables

Cite this article

Franceschini, F., Maisano, D. & Mastrogiacomo, L. Influence of omitted citations on the bibliometric statistics of the major Manufacturing journals. Scientometrics 103, 1083–1122 (2015). https://doi.org/10.1007/s11192-015-1583-9

DOI: https://doi.org/10.1007/s11192-015-1583-9