Abstract

Purpose

Computerized adaptive testing (CAT) item banks may need to be updated, but before new items can be added, they must be linked to the previous CAT. The purpose of this study was to evaluate 41 pretest items before adding them to an operational CAT.

Methods

We recruited 6,882 patients with spine, lower extremity, upper extremity, and nonorthopedic impairments who received outpatient rehabilitation in one of 147 clinics across 13 states of the USA. Forty-one new Daily Activity (DA) items were administered along with the Activity Measure for Post-Acute Care Daily Activity CAT (DA-CAT-1) in five separate waves. We compared the scoring consistency with the full item bank, test information function (TIF), person standard errors (SEs), and content range of the DA-CAT-1 to the new CAT (DA-CAT-2) with the pretest items by real data simulations.

Results

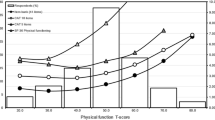

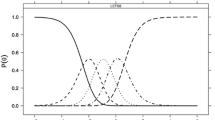

We retained 29 of the 41 pretest items. When compared with the full item bank, scores from the DA-CAT-2 were more consistent than scores from the DA-CAT-1 (ICC = 0.96 versus 0.90). TIF and person SEs improved for persons with higher levels of DA functioning, and ceiling effects were reduced from 16.1% to 6.1%.

Conclusions

Item response theory and online calibration methods were valuable in improving the DA-CAT.

Abbreviations

- ADL: Activities of daily living
- AM-PAC: Activity Measure for Post-Acute Care
- CAT: Computerized adaptive testing
- CFA: Confirmatory factor analyses
- CFI: Comparative fit index
- DA-CAT-1: Original Daily Activity item bank in the CAT
- DA-CAT-2: New expanded item bank with pretest items in the new CAT version
- DIF: Differential item functioning
- GPCM: Generalized partial credit model
- ICC: Intraclass correlation coefficient
- IRT: Item response theory
- PRO: Patient-reported outcome
- RMSEA: Root-mean-square error of approximation
- SE: Standard errors
- TIF: Test information function
- TLI: Tucker–Lewis index

Acknowledgements

Select Medical Corporation purchased the Outpatient Rehabilitation Division of HealthSouth Corporation on May 1, 2007, and the individual clinics that participated in this study are now known as “Select Physical Therapy and NovaCare.” We thank all of the Select Physical Therapy and NovaCare clinical sites that participated by providing the data used in this study. This work was supported by Select Medical Corporation and in part by an Independent Scientist Award (K02 HD45354-01) to Dr. Haley.

Cite this article

Haley, S.M., Ni, P., Jette, A.M. et al. Replenishing a computerized adaptive test of patient-reported daily activity functioning. Qual Life Res 18, 461–471 (2009). https://doi.org/10.1007/s11136-009-9463-5

DOI: https://doi.org/10.1007/s11136-009-9463-5