Abstract

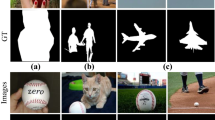

Salient region detection has become a popular topic in multimedia and computer vision research. However, existing techniques vary widely in methodology, and each has inherent strengths and weaknesses. In this paper, we propose fusing saliency hypotheses, namely the saliency maps produced by different methods, so as to accentuate their advantages and attenuate their disadvantages. To this end, our algorithm consists of three basic steps. First, given a test image, our method retrieves similar images and their saliency hypotheses by comparing learned deep features. Second, error-aware coefficients are computed from the saliency hypotheses. Third, our method produces a pixel-accurate saliency map that covers the objects of interest and exploits the advantages of the state-of-the-art methods. We evaluate the proposed framework on three challenging datasets, namely MSRA-1000, ECSSD and iCoSeg. Extensive experimental results show that our method outperforms the state-of-the-art approaches it is compared against. In addition, we have applied our method to SquareMe, an autonomous image resizing system. A subjective user study demonstrates that users prefer the image retargeting results obtained with the saliency maps from our proposed algorithm.
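The three steps can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the abstract does not say how similarity, the error-aware coefficients, or the final fusion are computed, so everything below is an assumption. It assumes precomputed feature vectors compared by cosine similarity (the deep-feature extractor is omitted), ground-truth masks available for the retrieved images, one non-negative weight per method fitted by least squares so that the weighted combination of the neighbors' hypotheses matches their masks, and a min-max normalized weighted sum as the fused map. All function names are hypothetical.

```python
import numpy as np


def retrieve_neighbors(query_feat, db_feats, k):
    """Step 1 (assumed): indices of the k database images most similar to the query.

    Similarity is cosine similarity between precomputed feature vectors;
    how those features are learned is not shown here.
    """
    q = query_feat / (np.linalg.norm(query_feat) + 1e-12)
    d = db_feats / (np.linalg.norm(db_feats, axis=1, keepdims=True) + 1e-12)
    sims = d @ q
    return np.argsort(-sims)[:k]


def nonneg_least_squares(A, b, iters=2000):
    """Solve min ||A x - b||^2 subject to x >= 0 by projected gradient descent."""
    x = np.zeros(A.shape[1])
    # Squared largest singular value = Lipschitz constant of the gradient
    # of 0.5 * ||A x - b||^2, so 1 / lipschitz is a safe step size.
    lipschitz = np.linalg.norm(A, 2) ** 2
    if lipschitz == 0:
        return x
    for _ in range(iters):
        grad = A.T @ (A @ x - b)
        # Gradient step, then projection onto the feasible set x >= 0.
        x = np.maximum(0.0, x - grad / lipschitz)
    return x


def fusion_coefficients(neighbor_hyps, neighbor_gts):
    """Step 2 (assumed): one non-negative weight per saliency method.

    neighbor_hyps: (k, m, H, W) maps from m methods for k retrieved images.
    neighbor_gts:  (k, H, W) ground-truth masks of the same images.
    Returns m weights that sum to 1 (uniform if the fit is all zeros).
    """
    m = neighbor_hyps.shape[1]
    # One row per neighbor pixel, one column per method.
    A = neighbor_hyps.transpose(1, 0, 2, 3).reshape(m, -1).T
    b = neighbor_gts.reshape(-1)
    weights = nonneg_least_squares(A, b)
    total = weights.sum()
    return weights / total if total > 0 else np.full(m, 1.0 / m)


def fuse(test_hyps, weights):
    """Step 3 (assumed): weighted sum of the test image's (m, H, W) hypotheses, rescaled to [0, 1]."""
    fused = np.tensordot(weights, test_hyps, axes=1)  # contracts the method axis -> (H, W)
    lo, hi = fused.min(), fused.max()
    return (fused - lo) / (hi - lo) if hi > lo else np.zeros_like(fused)
```

A caller would pass the test image's feature vector to `retrieve_neighbors`, feed the neighbors' hypotheses and masks to `fusion_coefficients`, and apply `fuse` to the test image's own hypotheses. The hand-written projected-gradient solver only keeps the sketch dependency-free; `scipy.optimize.nnls` (a Lawson-Hanson active-set solver) addresses the same non-negative least-squares problem.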




About this article

Cite this article

Nguyen, T.V., Kankanhalli, M. As-similar-as-possible saliency fusion. Multimed Tools Appl 76, 10501–10519 (2017). https://doi.org/10.1007/s11042-016-3615-8

