Abstract

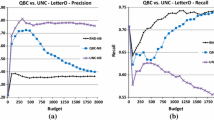

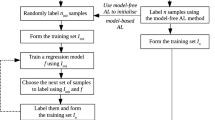

Which active learning methods can we expect to yield good performance in learning binary and multi-category logistic regression classifiers? Addressing this question is a natural first step in providing robust solutions for active learning across a wide variety of exponential models including maximum entropy, generalized linear, log-linear, and conditional random field models. For the logistic regression model we re-derive the variance reduction method known in experimental design circles as ‘A-optimality.’ We then run comparisons against different variations of the two most widely used heuristic schemes, query by committee and uncertainty sampling, to discover which methods work best for different classes of problems and why. We find that among the strategies tested, the experimental design methods are most likely to match or beat a random sample baseline. The heuristic alternatives produced mixed results, with an uncertainty sampling variant called margin sampling and a derivative method called QBB-MM providing the most promising performance at very low computational cost. Computational running times of the experimental design methods were a bottleneck to the evaluations. Meanwhile, evaluation of the heuristic methods led to an accumulation of negative results. We explore alternative evaluation design parameters to test whether these negative results are merely an artifact of settings where experimental design methods can be applied. The results demonstrate a need for improved active learning methods that will provide reliable performance at a reasonable computational cost.
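As a rough illustration of the margin sampling heuristic named in the abstract, the sketch below picks the pool example whose two most probable classes are closest in predicted probability. It is a minimal sketch under stated assumptions, not the authors' implementation: the function name `margin_sampling_query` and the toy probability table are hypothetical, and the class probabilities are taken as given rather than produced by a fitted logistic regression model.

```python
def margin_sampling_query(probs):
    """Return the index of the pool example with the smallest margin.

    probs: list of rows, one per unlabeled example; each row holds that
    example's predicted class probabilities (hypothetical input, at least
    two classes per row).
    """
    best_index, best_margin = None, float("inf")
    for i, row in enumerate(probs):
        # Margin = gap between the most and second most probable class.
        top, second = sorted(row, reverse=True)[:2]
        margin = top - second
        # A small margin means the model is least decided on this example.
        if margin < best_margin:
            best_index, best_margin = i, margin
    return best_index


# Toy pool of three unlabeled examples over three classes (made-up numbers).
pool_probs = [
    [0.80, 0.15, 0.05],
    [0.40, 0.35, 0.25],
    [0.55, 0.05, 0.40],
]
query_index = margin_sampling_query(pool_probs)
```

In an active learning loop, the selected example would be sent for labeling, added to the training set, and the model refit before the next query.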

Additional information

Editor: David Page.

About this article

Cite this article

Schein, A.I., Ungar, L.H. Active learning for logistic regression: an evaluation. Mach Learn 68, 235–265 (2007). https://doi.org/10.1007/s10994-007-5019-5

DOI: https://doi.org/10.1007/s10994-007-5019-5