Abstract

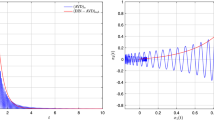

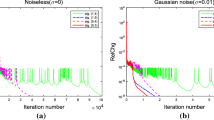

To minimize a nonsmooth convex function, we design an inertial second-order dynamic algorithm obtained by approximating the nonsmooth function with a class of smooth functions. By studying the asymptotic behavior of this dynamic, we prove that, under appropriate conditions on the smoothing parameters, each of its trajectories converges weakly to an optimal solution and the objective function values converge at the rate \(o\left( t^{-2}\right)\). We also show that the algorithm is stable: when a perturbation term satisfying certain conditions is added, the perturbed dynamic retains the same convergence properties. Finally, we verify the theoretical results with numerical experiments.
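The abstract does not state the dynamic or the smoothing family explicitly, so the following is only a minimal sketch under stated assumptions, not the authors' algorithm. It assumes a vanishing-damping dynamic of the Su, Boyd and Candès type, \(\ddot{x}(t) + \frac{\alpha}{t}\dot{x}(t) + \nabla f_{\mu(t)}(x(t)) = e(t)\), where \(f_{\mu}\) is a smooth approximation of the nonsmooth objective and \(e(t)\) is an optional perturbation. The Huber smoothing of \(|s|\) (which satisfies \(|s| - \mu/2 \le h_\mu(s) \le |s|\) and has a \(1/\mu\)-Lipschitz derivative), the schedule \(\mu(t) = \mu_0/t^2\), the semi-implicit Euler discretization, the test problem \(f(x) = \sum_i |x_i - b_i|\) and all function names (`huber`, `huber_grad`, `smoothed_inertial_flow`) are hypothetical choices made for illustration.

```python
from typing import Callable, List, Optional

Vector = List[float]


def huber(s: float, mu: float) -> float:
    """Huber smoothing of |s|; satisfies |s| - mu/2 <= huber(s, mu) <= |s|."""
    if abs(s) <= mu:
        return s * s / (2.0 * mu)
    return abs(s) - mu / 2.0


def huber_grad(s: float, mu: float) -> float:
    """Derivative of huber(., mu): (1/mu)-Lipschitz and bounded by 1 in magnitude."""
    if abs(s) <= mu:
        return s / mu
    return 1.0 if s > 0 else -1.0


def objective(x: Vector, b: Vector) -> float:
    """Nonsmooth convex test objective f(x) = sum_i |x_i - b_i|, minimized at x = b."""
    return sum(abs(xi - bi) for xi, bi in zip(x, b))


def smoothed_inertial_flow(
    b: Vector,
    x0: Vector,
    alpha: float = 4.0,
    mu0: float = 1.0,
    t0: float = 1.0,
    t_end: float = 50.0,
    h: float = 0.01,
    perturbation: Optional[Callable[[float], Vector]] = None,
) -> Vector:
    """Integrate x'' + (alpha/t) x' + grad f_{mu(t)}(x) = e(t) from rest at t0.

    mu(t) = mu0 / t**2 is an illustrative smoothing schedule (assumption), and
    e(t) is an optional perturbation term (zero when perturbation is None).
    """
    x = list(x0)
    v = [0.0] * len(x)  # start at rest: x'(t0) = 0
    n_steps = int(round((t_end - t0) / h))
    for k in range(n_steps):
        t = t0 + k * h
        mu = mu0 / t**2
        grad = [huber_grad(xi - bi, mu) for xi, bi in zip(x, b)]
        e = perturbation(t) if perturbation is not None else [0.0] * len(x)
        # Semi-implicit Euler: update the velocity from the current state,
        # then move the position with the new velocity.
        v = [vi + h * (-(alpha / t) * vi - gi + ei) for vi, gi, ei in zip(v, grad, e)]
        x = [xi + h * vi for xi, vi in zip(x, v)]
    return x


if __name__ == "__main__":
    b = [1.0, 0.5]
    x0 = [3.0, -2.0]
    x_plain = smoothed_inertial_flow(b, x0)
    # Perturbation decaying like 1/t^3, chosen so that t * |e(t)| is integrable.
    x_pert = smoothed_inertial_flow(b, x0, perturbation=lambda t: [t**-3, t**-3])
    print(objective(x0, b), objective(x_plain, b), objective(x_pert, b))
```

Starting from \(f(x_0) = 4.5\), both the unperturbed and the perturbed trajectories should end close to the minimizer \(b\). The schedule for \(\mu(t)\) and the \(1/t^3\) perturbation were picked only so that the example runs quickly; they are not claimed to match the conditions proven in the paper.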


Data and code availability

The data and code that support the findings of this study are available from the corresponding author upon request.


Funding

This work was funded by the National Natural Science Foundation of China (Grant Nos. 11871178 and 62176073).


Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.



About this article

Cite this article

Qu, X., Bian, W. Fast inertial dynamic algorithm with smoothing method for nonsmooth convex optimization. Comput Optim Appl 83, 287–317 (2022). https://doi.org/10.1007/s10589-022-00388-6


DOI: https://doi.org/10.1007/s10589-022-00388-6