Abstract

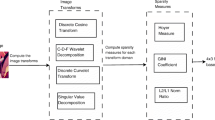

The human visual system is sensitive to structural information in images, and modeling this information is regarded as useful for predicting perceptual quality. In this study, we propose a no-reference (NR) image quality assessment (IQA) method based on a sparse representation of the distribution of structural information. The grayscale fluctuation map of an image is first calculated and divided into fixed-size patches, which are rearranged into column vectors and regarded as the structural elements of the image. Using sparse coding, these structural elements are then represented by sparse representation coefficients over a trained dictionary. From these coefficients, a probability vector for observing the different elements of the trained dictionary is obtained. Finally, the prediction model is trained using support vector regression. Experimental results show that the proposed method can accurately predict human perception of image quality and is competitive with prevalent NR-IQA methods.
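The middle of this pipeline (patches to column vectors, sparse coding, probability vector) can be illustrated with a minimal, dependency-free sketch. It is not the authors' implementation. The abstract does not define the grayscale fluctuation map or the dictionary training procedure, so the sketch takes a precomputed map and a fixed dictionary as inputs. The greedy matching pursuit coder and the pooling rule in `atom_probabilities` (each atom's share of the total absolute coefficient mass) are assumptions chosen for illustration, and all function names are hypothetical.

```python
def patches_as_columns(fmap, size):
    """Split a 2-D map (list of rows) into non-overlapping size x size
    patches and flatten each patch into one vector."""
    rows, cols = len(fmap), len(fmap[0])
    vectors = []
    for r in range(0, rows - size + 1, size):
        for c in range(0, cols - size + 1, size):
            vectors.append([fmap[r + i][c + j]
                            for i in range(size) for j in range(size)])
    return vectors


def matching_pursuit(x, atoms, n_nonzero):
    """Greedy sparse coding of x over unit-norm atoms.
    Assumption: a simple stand-in for the sparse coder used in the paper."""
    residual = list(x)
    coeffs = [0.0] * len(atoms)
    for _ in range(n_nonzero):
        # Correlation of every atom with the current residual.
        dots = [sum(a_i * r_i for a_i, r_i in zip(a, residual)) for a in atoms]
        k = max(range(len(atoms)), key=lambda i: abs(dots[i]))
        if dots[k] == 0.0:
            break  # nothing left to explain
        coeffs[k] += dots[k]
        residual = [r_i - dots[k] * a_i
                    for r_i, a_i in zip(residual, atoms[k])]
    return coeffs


def atom_probabilities(all_coeffs):
    """Assumed pooling rule: each atom's share of the total absolute
    coefficient mass over all patches of the image."""
    n_atoms = len(all_coeffs[0])
    mass = [sum(abs(c[k]) for c in all_coeffs) for k in range(n_atoms)]
    total = sum(mass)
    if total == 0.0:
        return [1.0 / n_atoms] * n_atoms
    return [m / total for m in mass]


# Toy fluctuation map (hypothetical values) and a trivial dictionary:
# the four standard basis vectors of R^4, which are already unit-norm.
fmap = [
    [3, 0, 0, 0],
    [0, 0, 0, 2],
    [0, 1, 0, 0],
    [0, 0, 0, 0],
]
atoms = [[1.0 if i == k else 0.0 for i in range(4)] for k in range(4)]

vectors = patches_as_columns(fmap, size=2)
codes = [matching_pursuit(v, atoms, n_nonzero=1) for v in vectors]
probs = atom_probabilities(codes)
print(probs)
```

In a full system, one such probability vector per image would presumably serve as the feature vector for the support vector regressor, with subjective quality scores as regression targets.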

Acknowledgements

This work was supported by the National Science Foundation of China (Grant No. 61673220).

Contributions

XY conceived, designed, and performed the experiments. XY and QS analyzed the data. XY, QS, and TW wrote and reviewed the paper. XY, QS, and TW approved the final version of the paper.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

About this article

Cite this article

Yang, X., Sun, Q. & Wang, T. No-reference image quality assessment based on sparse representation. Neural Comput & Applic 31, 6643–6658 (2019). https://doi.org/10.1007/s00521-018-3497-y
