Abstract

Multiple imputation (MI) has proven to be an effective procedure for dealing with incomplete datasets. Compared with complete case analysis (CCA), MI is more efficient because it uses the information in incomplete cases, which CCA simply discards. A few simulation studies have shown that statistical power can be improved when MI is used. However, little is known about how much power can be gained. In this article, we derive a general formula for calculating statistical power when MI is used, and we give specific formulas for several conditions. We demonstrate our findings through simulation studies and a data example.

Acknowledgements

The data used in this manuscript came from a grant (R01 MH077312) awarded to Dr. Golda Ginsburg by the National Institute of Mental Health. ClinicalTrials.gov: NCT00847561.

Appendix

1.1 Compare type I error of different methods

To complement the comparison between CCA and MI, we also report the proportion of simulations in which the null hypothesis is rejected when it is true (the type I error rate, \(\alpha \)). The results are shown in Tables 3 and 4. ‘P’ is the percentage of missing values; ‘Complete’, ‘MI’, and ‘CCA’ denote the complete-data, MI, and CCA results, respectively.

In terms of type I error, there is little difference among the three methods, regardless of the size of \(B_E\). All methods reach a type I error rate close to the predetermined value of 0.05.
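The type I error check described above can be illustrated with a minimal Monte Carlo sketch. It is not the simulation design behind Tables 3 and 4: it assumes a one-sample two-sided z-test with known variance, MCAR deletion, and only the complete-data and CCA analyses (the MI step is omitted because it depends on an imputation model not specified here). The function name `rejection_rate` and all parameter values are hypothetical.

```python
import random
from statistics import NormalDist


def rejection_rate(n, p_missing, q0=0.0, sigma=1.0, alpha=0.05,
                   reps=4000, seed=1):
    """Monte Carlo share of rejections of H0: mean = q0 when H0 is true.

    Each observation is deleted with probability p_missing (MCAR), and a
    two-sided z-test with known sigma is run on the remaining cases.
    p_missing = 0 corresponds to the complete-data analysis.
    """
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    rejections = 0
    for _ in range(reps):
        sample = [rng.gauss(q0, sigma) for _ in range(n)]
        kept = [y for y in sample if rng.random() >= p_missing]
        if not kept:  # nothing observed: the test cannot reject
            continue
        mean = sum(kept) / len(kept)
        z = (mean - q0) / (sigma / len(kept) ** 0.5)
        rejections += abs(z) > z_crit
    return rejections / reps


# Hypothetical settings: n = 100, 30% of values missing for CCA.
complete_rate = rejection_rate(n=100, p_missing=0.0)
cca_rate = rejection_rate(n=100, p_missing=0.3)
print(f"complete: {complete_rate:.3f}, CCA: {cca_rate:.3f}")
```

Under these assumptions both rates should fluctuate around the nominal 0.05, since MCAR deletion reduces the sample size but does not distort the null distribution of the test statistic.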

1.2 Explore the effect of changing the alternative hypothesis, sample size n, and number of imputations m

As requested by reviewers, we explored the effect of changing the simulation parameters and evaluated the bias between simulated power and calculated power under different setups.

To explore whether different alternative hypotheses affect the results, we considered four setups, shown in Figs. 6 and 7. In the first two setups, we set \(n=100\), \(m=10\), and \(Q_1 - Q_0 = 1\). The only difference between the first two setups is that \(Q_0 = 0\) in setup 1 and \(Q_0 = 1\) in setup 2. Similarly, the last two setups share the same sample size and number of imputations, with \(Q_1 - Q_0 = 0.5\). In setup 3, \(Q_0 = 0\), and in setup 4, \(Q_0 = 1\). All four setups used the same random seed, and the number of Monte Carlo samples (iterations) was 10,000 in every setup.

The simulation results show that if d, the difference between the values under the null and alternative hypotheses, is held constant, the results do not change. In other words, it is not the alternative hypothesis itself but the difference between the two hypotheses that determines the simulation results.

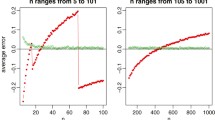
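The role of the difference \(Q_1 - Q_0\) can be seen in a small sketch based on the textbook normal-approximation power of a two-sided test. This is not the MI power formula of the article; the function `power_two_sided` and the standard error value are assumptions made for illustration only.

```python
from statistics import NormalDist


def power_two_sided(q0, q1, se, alpha=0.05):
    """Normal-approximation power of a two-sided test of H0: Q = q0
    when the true value is q1 and the estimator has standard error se."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    shift = (q1 - q0) / se  # only the difference q1 - q0 enters
    return std_normal.cdf(shift - z_crit) + std_normal.cdf(-shift - z_crit)


SE = 0.4  # hypothetical standard error, not taken from the article

# The four setups described above, as (Q0, Q1) pairs.
setups = {1: (0.0, 1.0), 2: (1.0, 2.0), 3: (0.0, 0.5), 4: (1.0, 1.5)}
for label, (q0, q1) in setups.items():
    print(f"setup {label}: d = {q1 - q0}, power = "
          f"{power_two_sided(q0, q1, SE):.4f}")
```

Because `q0` and `q1` enter only through `shift`, setups 1 and 2 return the same power, as do setups 3 and 4, which mirrors the simulation finding.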

We examined the performance of the power calculation method by comparing the simulated and theoretical power under different combinations of m and n. In the simulation, we use \(Q_0 = 1\) and \(Q_1 = 1.5\), and the number of Monte Carlo samples (i.e., the number of iterations) is 10,000. The results are shown in Fig. 8.

In addition, we calculated the average absolute difference between simulated and calculated power; see Table 5.

The simulation shows that a minimal bias exists. The bias can be either positive or negative and, because it is small, it is unlikely to be a serious problem.
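A sketch of how an average absolute difference between simulated and calculated power can be computed is given below. It is a stand-in for the comparison in Table 5, not a reproduction of it: it assumes a complete-data one-sample z-test with known variance rather than the MI procedure, it varies only n (there is no m dimension), and the value of `SIGMA`, the grid of sample sizes, the seed, and the reduced number of replications are all hypothetical. Only \(Q_0 = 1\) and \(Q_1 = 1.5\) come from the text.

```python
import random
from statistics import NormalDist


def theoretical_power(d, sigma, n, alpha=0.05):
    """Normal-theory power of a two-sided z-test for a mean shift d."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    shift = d / (sigma / n ** 0.5)
    return std_normal.cdf(shift - z_crit) + std_normal.cdf(-shift - z_crit)


def simulated_power(q0, q1, sigma, n, rng, alpha=0.05, reps=2000):
    """Share of Monte Carlo samples, drawn under Q = q1, that reject
    H0: Q = q0."""
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    rejections = 0
    for _ in range(reps):
        mean = sum(rng.gauss(q1, sigma) for _ in range(n)) / n
        z = (mean - q0) / (sigma / n ** 0.5)
        rejections += abs(z) > z_crit
    return rejections / reps


Q0, Q1 = 1.0, 1.5        # values used in the text
SIGMA = 2.0              # hypothetical standard deviation
rng = random.Random(2021)

abs_diffs = []
for n in (25, 50, 100):  # hypothetical grid of sample sizes
    calc = theoretical_power(Q1 - Q0, SIGMA, n)
    sim = simulated_power(Q0, Q1, SIGMA, n, rng)
    abs_diffs.append(abs(sim - calc))
    print(f"n = {n}: calculated = {calc:.3f}, simulated = {sim:.3f}")

mean_abs_diff = sum(abs_diffs) / len(abs_diffs)
print(f"average absolute difference = {mean_abs_diff:.4f}")
```

In this simplified setting the formula is exact, so any remaining difference is Monte Carlo noise; increasing `reps` toward the 10,000 iterations used in the text would shrink it further.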

1.3 When the population and MI imputation model have different distributions

To examine how the method performs when the population follows a different distribution, we considered a population that follows a t distribution with 3 degrees of freedom. In the simulation shown in Fig. 9, the random error follows a t distribution instead of a standard normal distribution; in the simulations shown in Fig. 10, we keep the variance of Y at 20. For the large \(B_E\) setup, the correlation coefficient between X and Y is about 0.32; for the small \(B_E\) setup, it is about 0.92.

Comparison between theoretical and simulated power under MCAR, one-dimensional X, under a t distribution. In the simulation, \(\mathbf {X} \sim N(0, 4^{2})\), \({\varvec{\beta ^*}} = (1, 1)\), \({\varvec{\epsilon }} \sim {\mathrm{t}}_{3}\), and \(m = 10\). Each point in the figure represents the theoretical power and the simulated power for one population. Ideally, the points should be clustered along the forty-five-degree line.

Fig. 9 shows that when the population follows a t distribution, the simulated power is slightly greater than the calculated power, meaning that our method now has a small negative bias. The same phenomenon can be observed in Table 6 and Fig. 10.
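The data-generating step of the t-error setup can be sketched as follows. The code assumes that \({\varvec{\beta ^*}} = (1, 1)\) means an intercept of 1 and a slope of 1, that missingness is MCAR and applied to Y, and that 30% of values are missing; these choices, the helper names `draw_t` and `ols_slope`, and the sample size are hypothetical, and no imputation step is shown.

```python
import math
import random


def draw_t(rng, df):
    """One draw from a t distribution: N(0, 1) / sqrt(chi2_df / df)."""
    chi2 = rng.gammavariate(df / 2, 2)  # chi-square with df degrees of freedom
    return rng.gauss(0, 1) / math.sqrt(chi2 / df)


def ols_slope(xs, ys):
    """Least-squares slope of y on x."""
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    return sxy / sxx


rng = random.Random(9)
n = 20000                                    # hypothetical population size
xs = [rng.gauss(0, 4) for _ in range(n)]     # X ~ N(0, 4^2)
eps = [draw_t(rng, 3) for _ in range(n)]     # errors ~ t_3
ys = [1 + 1 * x + e for x, e in zip(xs, eps)]

# MCAR: each Y is missing with probability 0.3 (assumed), then CCA.
observed = [(x, y) for x, y in zip(xs, ys) if rng.random() >= 0.3]
slope_cca = ols_slope([x for x, _ in observed], [y for _, y in observed])

# Heavy tails: share of errors beyond 3 in absolute value.
tail_share = sum(abs(e) > 3 for e in eps) / n
print(f"CCA slope = {slope_cca:.3f}, share of |error| > 3 = {tail_share:.3f}")
```

The tail share is far larger than the roughly 0.003 expected under a standard normal error, which is the kind of departure from the normal imputation model that this subsection examines.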

Cite this article

Zha, R., Harel, O. Power calculation in multiply imputed data. Stat Papers 62, 533–559 (2021). https://doi.org/10.1007/s00362-019-01098-8

DOI: https://doi.org/10.1007/s00362-019-01098-8