Abstract

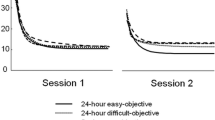

Dopamine neurons appear to code an error in the prediction of reward. They are activated by unpredicted rewards, are not influenced by predicted rewards, and are depressed when a predicted reward is omitted. After conditioning, they respond in a similar manner to stimuli that predict reward. With these characteristics, the dopamine response closely resembles the predictive reinforcement teaching signal of neural network models that implement the temporal difference learning algorithm. This study explored a neural network model that learned movement sequences using a reward-prediction error signal closely resembling dopamine responses. At each step of the sequence a different stimulus was presented that required a different movement reaction, and reward was delivered at the end of a correctly performed sequence. The dopamine-like predictive reinforcement signal allowed the model to learn long sequences efficiently. By contrast, learning with an unconditional reinforcement signal required synaptic eligibility traces of longer, biologically less plausible durations to obtain satisfactory performance. Thus, dopamine-like neuronal signals constitute excellent teaching signals for learning sequential behavior.
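The response properties listed above can be reproduced qualitatively with a temporal difference (TD) prediction error, delta(t) = r(t) + gamma * V(t+1) - V(t). The following is a minimal, hypothetical sketch and not the model from this study. It is a tabular TD(0) critic only, with no actor or movement selection. It assumes gamma = 1, a learning rate alpha = 0.2, a reward prediction of 0 before the unpredictable onset of the sequence, and a hypothetical helper `trace_credit` that assumes eligibility traces decay exponentially by a factor `lam` per step.

```python
# Hypothetical toy sketch (not the authors' model): a tabular TD(0) critic
# that learns reward predictions along a fixed sequence of stimuli.


def td_trial(V, reward, alpha=0.2, gamma=1.0):
    """Run one trial through the stimulus sequence and return the TD errors.

    V[k] is the reward prediction attached to the k-th stimulus. The returned
    list holds one error at sequence onset, one per stimulus-to-stimulus
    transition, and one at the time of reward. V is updated in place when
    alpha > 0.
    """
    n = len(V)
    # Assumption: sequence onset is unpredictable, so the prediction
    # preceding the first stimulus is taken as 0.
    errors = [gamma * V[0] - 0.0]
    for k in range(n - 1):
        delta = gamma * V[k + 1] - V[k]  # no reward between stimuli
        V[k] += alpha * delta
        errors.append(delta)
    delta = reward - V[n - 1]  # reward (or its omission) ends the trial
    V[n - 1] += alpha * delta
    errors.append(delta)
    return errors


def trace_credit(n_steps, lam):
    """Credit each step receives from a reward at the end of the sequence when
    only an unconditional reward signal is available and eligibility traces
    decay by a factor lam per step (an assumed exponential decay)."""
    return [lam ** (n_steps - 1 - i) for i in range(n_steps)]


if __name__ == "__main__":
    V = [0.0] * 4
    print("naive:  ", [round(e, 3) for e in td_trial(V, reward=1.0)])
    for _ in range(500):
        td_trial(V, reward=1.0)
    print("trained:", [round(e, 3) for e in td_trial(V, reward=1.0, alpha=0.0)])
    print("omitted:", [round(e, 3) for e in td_trial(V, reward=0.0, alpha=0.0)])
    print("credit: ", [round(c, 3) for c in trace_credit(4, 0.5)])
```

Before learning, the only nonzero error occurs at the reward. After training, the error has moved to the onset of the sequence, a fully predicted reward produces no error, and omitting the reward produces a negative error at the expected reward time. `trace_credit` shows why an unconditional signal alone favors long traces: the credit reaching the first step of an n-step sequence shrinks as `lam ** (n - 1)`.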

Received: 24 November 1997 / Accepted: 30 April 1998

Cite this article

Suri, R., Schultz, W. Learning of sequential movements by neural network model with dopamine-like reinforcement signal. Exp Brain Res 121, 350–354 (1998). https://doi.org/10.1007/s002210050467

DOI: https://doi.org/10.1007/s002210050467