Abstract

OBJECTIVE: To determine which aspects of outpatient attending physician performance (e.g., clinical ability, teaching ability, interpersonal conduct) were measurable and separable by resident report.

DESIGN: Self-administered evaluation form.

SETTING: University internal medicine resident continuity clinic.

PARTICIPANTS: All residents whose continuity clinic was at the university hospital evaluated the two attendings who staffed their clinic during the 1990–1991, 1991–1992, and 1992–1993 academic years (an average of 85 residents per year). The overall response rate was 74%.

ANALYSIS: Exploratory analyses of a preliminary evaluation form were conducted in the first two years of the study (236 evaluations of 20 different clinic attendings), and confirmatory analyses using factor analysis and generalizability analysis were performed on the third year’s data (142 evaluations of 15 different clinic attendings). Analysis of variance was used to evaluate factors associated with evaluation scores.
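The generalizability analysis named above can be illustrated with a minimal sketch. It assumes a simple one-facet design in which each evaluation is a separate rating nested within an attending, so any resident effects are folded into the residual; the study's actual generalizability design is not described in this abstract. A one-way analysis of variance on attending identity yields both an adjusted R² (the kind of statistic reported in the results) and the variance components from which a generalizability coefficient is built. The function names and the ratings are hypothetical and are not the study's data.

```python
from collections import defaultdict

def one_way_anova(ratings):
    """One-way random-effects ANOVA of scores on attending identity.

    ratings: list of (attending_id, score) pairs; group sizes may differ.
    Returns (attending variance, residual variance, adjusted R^2).
    """
    groups = defaultdict(list)
    for attending, score in ratings:
        groups[attending].append(score)
    n_total = len(ratings)
    k = len(groups)
    grand_mean = sum(score for _, score in ratings) / n_total
    means = {a: sum(g) / len(g) for a, g in groups.items()}
    ss_between = sum(len(g) * (means[a] - grand_mean) ** 2 for a, g in groups.items())
    ss_within = sum((s - means[a]) ** 2 for a, g in groups.items() for s in g)
    ms_between = ss_between / (k - 1)
    ms_within = ss_within / (n_total - k)
    # Effective number of ratings per attending for an unbalanced design.
    n0 = (n_total - sum(len(g) ** 2 for g in groups.values()) / n_total) / (k - 1)
    # Method-of-moments estimates; a negative attending component is truncated to 0.
    var_attending = max((ms_between - ms_within) / n0, 0.0)
    var_residual = ms_within
    ss_total = ss_between + ss_within
    adj_r2 = 1 - ms_within / (ss_total / (n_total - 1))
    return var_attending, var_residual, adj_r2

def g_coefficient(var_attending, var_residual, n_ratings):
    """Reliability of an attending's mean score over n_ratings evaluations."""
    return var_attending / (var_attending + var_residual / n_ratings)

# Hypothetical scores on a 1-5 scale for three attendings.
ratings = [("A", 5), ("A", 4), ("A", 5), ("A", 5),
           ("B", 3), ("B", 3), ("B", 4), ("B", 2),
           ("C", 4), ("C", 3), ("C", 4), ("C", 4)]

var_a, var_r, adj_r2 = one_way_anova(ratings)
print(f"adjusted R^2 = {adj_r2:.2f}")
for n in (1, 4, 8):
    print(n, round(g_coefficient(var_a, var_r, n), 2))
```

Because the residual variance is divided by the number of ratings, the coefficient rises as more evaluations are averaged, which is the mechanism behind reporting reliability for four versus eight evaluations.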

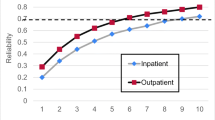

RESULTS: Analyses demonstrated that the residents did not distinguish between the attendings’ clinical and teaching abilities, resulting in a single four-item scale, named the Clinical/Teaching Excellence Scale, with items rated on a five-point scale from poor to outstanding (Cronbach’s alpha=0.92). A large share of the variance in this scale score was associated with attending identity (adjusted R²=46%). However, two alternative approaches to evaluating the attending’s performance (preference for him or her over the “average” attending, and perceived impact of the attending on residents’ clinical skills) did not provide useful information independent of the Clinical/Teaching Excellence Scale. The ratings on three separate conduct scales [availability in clinic (Availability Scale), treating residents and patients with respect (Respect Scale), and time efficiency in staffing cases (Slow Staffing Scale)] were separable from each other and from the rating of clinical/teaching excellence. For the Clinical/Teaching Excellence Scale, as few as four evaluations produced good interrater reliability and eight evaluations produced excellent reliability (reliability coefficients of 0.70 and 0.84, respectively).
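Cronbach’s alpha, reported above for the four-item scale, compares the sum of the individual item variances with the variance of the total score: alpha = k/(k − 1) × (1 − Σ item variances / total-score variance), where k is the number of items. A minimal Python sketch follows; the function name and the six evaluations are invented for illustration and are not the study’s data.

```python
from statistics import variance

def cronbach_alpha(rows):
    """rows: one list per evaluation, holding that evaluation's item scores."""
    k = len(rows[0])                      # number of items in the scale
    items = list(zip(*rows))              # transpose to one tuple per item
    item_var_sum = sum(variance(col) for col in items)
    total_var = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - item_var_sum / total_var)

# Hypothetical ratings of four items on a 1 (poor) to 5 (outstanding) scale.
rows = [
    [5, 5, 4, 5],
    [4, 4, 4, 4],
    [3, 3, 2, 3],
    [5, 4, 5, 5],
    [2, 2, 3, 2],
    [4, 5, 4, 4],
]
print(round(cronbach_alpha(rows), 2))
```

Items that move together inflate the total-score variance relative to the summed item variances, pushing alpha toward 1; a value as high as 0.92 is consistent with the finding that residents rated clinical and teaching ability as a single dimension.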

CONCLUSIONS: Although this evaluation instrument for measuring clinic attending performance must be considered preliminary, this study suggests that relatively few attending evaluations are required to reliably profile an individual attending’s performance, that attending identity is associated with a large amount of the scale score variation, and that special issues of attending performance more relevant to the outpatient setting than the inpatient setting (availability in clinic and sensitivity to time efficiency) should be considered when evaluating clinic attending performance.
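The conclusion that relatively few evaluations suffice can be made concrete with the Spearman-Brown prophecy formula, which projects the reliability of a mean of n ratings from the reliability of a single rating. This is a simplifying assumption: it treats evaluations as interchangeable parallel ratings, which may not match the study’s generalizability model. The helper names below are hypothetical.

```python
def spearman_brown(r_single, n):
    """Reliability of the mean of n parallel ratings."""
    return n * r_single / (1 + (n - 1) * r_single)

def single_rating_reliability(r_mean, n):
    """Invert Spearman-Brown: reliability of one rating from that of n ratings."""
    return r_mean / (n - (n - 1) * r_mean)

def evaluations_needed(r_single, target, max_n=100):
    """Smallest n whose projected reliability reaches target (None if above max_n)."""
    for n in range(1, max_n + 1):
        # Small tolerance so floating-point rounding does not overshoot by one.
        if spearman_brown(r_single, n) >= target - 1e-9:
            return n
    return None

# Start from the reported coefficient of 0.70 for four evaluations.
r1 = single_rating_reliability(0.70, 4)
print(round(r1, 3))                       # reliability of a single evaluation
print(round(spearman_brown(r1, 8), 3))    # projected reliability for eight
print(evaluations_needed(r1, 0.80))       # evaluations needed to reach 0.80
```

Starting from 0.70 at four evaluations, the sketch projects about 0.82 at eight, slightly below the reported 0.84; the published coefficients presumably come from the authors’ own variance-component estimates rather than from this back-calculation. Under the same assumption, seven evaluations would be needed to reach 0.80.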

Cite this article

Hayward, R.A., Williams, B.C., Gruppen, L.D. et al. Measuring attending physician performance in a general medicine outpatient clinic. J Gen Intern Med 10, 504–510 (1995). https://doi.org/10.1007/BF02602402

DOI: https://doi.org/10.1007/BF02602402