Abstract

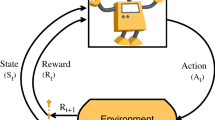

Until recently, Deep Reinforcement Learning (DRL) was restricted to innovations in games such as Atari and Dota 2. Despite surpassing the benchmarks established by their human counterparts in multiple games, these methods could not scale to real-life and industrial automation tasks. The main reason was that such tasks require complex, continuous action control and involve sophisticated domain physics. Consequently, most incumbent solutions for such applications relied on the invention of custom planning algorithms. Designing such sophisticated custom solutions required complete knowledge of the dynamics of the domain and its derivatives, and hence these solutions were not scalable. Policy-based DRL has democratized this space: deep reinforcement learning agents can now be trained to learn similarly sophisticated policies purely from the data generated by interacting with these systems or their respective simulations. This has led to significant innovations in real-life, high-value control automation applications such as autonomous vehicles, drones, and industrial robots. In this paper, we therefore present an overview of the different types of policy-approximation based techniques in Deep Reinforcement Learning that form the basis of many advanced control automation systems.

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Sewak, M., Sahay, S.K., Rathore, H. (2022). Policy-Approximation Based Deep Reinforcement Learning Techniques: An Overview. In: Joshi, A., Mahmud, M., Ragel, R.G., Thakur, N.V. (eds) Information and Communication Technology for Competitive Strategies (ICTCS 2020). Lecture Notes in Networks and Systems, vol 191. Springer, Singapore. https://doi.org/10.1007/978-981-16-0739-4_47

DOI: https://doi.org/10.1007/978-981-16-0739-4_47

Published:

Publisher Name: Springer, Singapore

Print ISBN: 978-981-16-0738-7

Online ISBN: 978-981-16-0739-4

eBook Packages: Intelligent Technologies and Robotics (R0)