Abstract

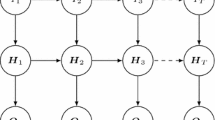

Dynamic Bayesian networks (DBNs) are a class of probabilistic graphical models that has become a standard tool for modeling stochastic time-varying phenomena. Among the representations of DBNs, 2-time-slice Bayesian networks (2T-BNs) are the most widely used. Because adding the temporal dimension increases complexity, DBN structure learning is a very difficult task. Existing algorithms are adaptations of score-based BN structure learning algorithms, but they are often limited when the number of variables is high. In this paper we focus on DBN structure learning with another family of structure learning algorithms, local search methods, which are known for their scalability. We propose Dynamic MMHC, an adaptation of the "static" MMHC algorithm, and illustrate the value of this method with experimental results.
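To make the 2T-BN representation mentioned in the abstract concrete, the following is a minimal sketch of how such a structure could be stored and checked. It assumes the usual 2T-BN convention: an initial network over the first slice, plus a transition network whose edges either stay inside slice t or go from slice t-1 to slice t. The names `TwoSliceBN`, `is_acyclic`, `is_valid`, and `unroll` are hypothetical and chosen for illustration only; this is not the authors' implementation, and it does not perform structure learning.

```python
from dataclasses import dataclass


@dataclass
class TwoSliceBN:
    """Hypothetical container for a 2T-BN structure (illustration only)."""
    variables: list   # variable names, shared by every time slice
    initial_edges: set  # (parent, child) edges inside slice 0
    intra_edges: set    # (parent, child) edges inside slice t, for t >= 1
    inter_edges: set    # (parent, child) edges from slice t-1 to slice t


def is_acyclic(nodes, edges):
    """Kahn's algorithm: True if the directed graph has no cycle."""
    indegree = {n: 0 for n in nodes}
    children = {n: [] for n in nodes}
    for parent, child in edges:
        children[parent].append(child)
        indegree[child] += 1
    queue = [n for n in nodes if indegree[n] == 0]
    visited = 0
    while queue:
        node = queue.pop()
        visited += 1
        for child in children[node]:
            indegree[child] -= 1
            if indegree[child] == 0:
                queue.append(child)
    return visited == len(nodes)


def is_valid(model):
    """Check variable names and acyclicity within each slice.

    Inter-slice edges always point forward in time, so they can never
    close a cycle; only the within-slice graphs need the acyclicity test.
    """
    names = set(model.variables)
    all_edges = model.initial_edges | model.intra_edges | model.inter_edges
    if any(p not in names or c not in names for p, c in all_edges):
        return False
    return (is_acyclic(model.variables, model.initial_edges)
            and is_acyclic(model.variables, model.intra_edges))


def unroll(model, n_slices):
    """Expand the 2T-BN into edges over (variable, time) nodes."""
    edges = [((p, 0), (c, 0)) for p, c in model.initial_edges]
    for t in range(1, n_slices):
        edges += [((p, t), (c, t)) for p, c in model.intra_edges]
        edges += [((p, t - 1), (c, t)) for p, c in model.inter_edges]
    return edges


model = TwoSliceBN(
    variables=["A", "B"],
    initial_edges={("A", "B")},
    intra_edges={("A", "B")},
    inter_edges={("A", "A"), ("B", "B"), ("B", "A")},
)
print(is_valid(model))
print(len(unroll(model, 3)))
```

The sketch also hints at why the temporal dimension enlarges the search space: a learner has to choose edges for the initial graph, the within-slice graph, and the between-slice graph, rather than for a single static graph.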

Copyright information

© 2013 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Trabelsi, G., Leray, P., Ben Ayed, M., Alimi, A.M. (2013). Dynamic MMHC: A Local Search Algorithm for Dynamic Bayesian Network Structure Learning. In: Tucker, A., Höppner, F., Siebes, A., Swift, S. (eds) Advances in Intelligent Data Analysis XII. IDA 2013. Lecture Notes in Computer Science, vol 8207. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-642-41398-8_34

DOI: https://doi.org/10.1007/978-3-642-41398-8_34

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-642-41397-1

Online ISBN: 978-3-642-41398-8

eBook Packages: Computer Science, Computer Science (R0)