Abstract

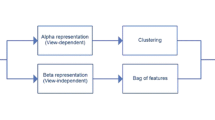

This paper concerns recognition of human actions under view changes. We explore self-similarities of action sequences over time and observe the striking stability of such measures across views. Building upon this key observation, we develop an action descriptor that captures the structure of temporal similarities and dissimilarities within an action sequence. Although this descriptor is not strictly view-invariant, we provide intuition and experimental validation demonstrating the high stability of self-similarities under view changes. Self-similarity descriptors are also shown to be stable under action variations within a class and discriminative for action recognition. Interestingly, self-similarities computed from different image features possess similar properties and can be used in a complementary fashion. Our method is simple and requires neither structure recovery nor multi-view correspondence estimation. Instead, it relies on weak geometric properties and combines them with machine learning for efficient cross-view action recognition. The method is validated on three public datasets. It performs similarly to or better than related methods, and it performs well even in extreme conditions, such as recognizing actions from top views when only side views are used for training.
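To make the notion of a temporal self-similarity concrete, the following is a minimal sketch, not the authors' implementation. It assumes each frame is summarized by a feature vector and compares every pair of frames by Euclidean distance. The function names, the toy 3D trajectory, and the orthographic "views" are hypothetical choices made only for illustration; the abstract does not specify the features or the distance used.

```python
import numpy as np


def self_similarity_matrix(features):
    """Pairwise Euclidean distances between per-frame feature vectors.

    features: array-like of shape (T, D), one D-dimensional vector per frame.
    Returns a (T, T) symmetric matrix with a zero diagonal.
    """
    features = np.asarray(features, dtype=float)
    # Broadcasting yields a (T, T, D) array of frame-to-frame differences.
    diff = features[:, None, :] - features[None, :, :]
    return np.linalg.norm(diff, axis=-1)


def orthographic_view(points, yaw):
    """Hypothetical camera: rotate 3D points about the vertical (y) axis
    by `yaw` radians, then drop the depth coordinate."""
    c, s = np.cos(yaw), np.sin(yaw)
    rotation = np.array([[c, 0.0, s],
                         [0.0, 1.0, 0.0],
                         [-s, 0.0, c]])
    return (points @ rotation.T)[:, :2]


# Toy "action": one 3D point moving along a helix (an assumption, not real data).
t = np.linspace(0.0, 4.0 * np.pi, 60)
trajectory = np.stack([np.cos(t), np.sin(t), 0.1 * t], axis=1)

# The same motion observed from two different viewing directions.
ssm_front = self_similarity_matrix(orthographic_view(trajectory, 0.0))
ssm_side = self_similarity_matrix(orthographic_view(trajectory, np.pi / 3))

# The two matrices are not identical, but their structure can be compared,
# for example through the correlation of their entries.
correlation = np.corrcoef(ssm_front.ravel(), ssm_side.ravel())[0, 1]
print(f"correlation between the two self-similarity matrices: {correlation:.3f}")
```

The matrix depends only on distances between frames of the same sequence, so it can be computed per view without recovering 3D structure or matching points across views. How stable it is under a given view change depends on the features and the motion, which is the question the paper examines experimentally.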

Copyright information

© 2008 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Junejo, I.N., Dexter, E., Laptev, I., Pérez, P. (2008). Cross-View Action Recognition from Temporal Self-similarities. In: Forsyth, D., Torr, P., Zisserman, A. (eds) Computer Vision – ECCV 2008. ECCV 2008. Lecture Notes in Computer Science, vol 5303. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-88688-4_22

DOI: https://doi.org/10.1007/978-3-540-88688-4_22

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-88685-3

Online ISBN: 978-3-540-88688-4

eBook Packages: Computer Science (R0)