Abstract

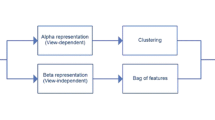

Appearance features are good at discriminating activities in a fixed view, but perform poorly when the aspect changes. We describe a method to build features that are highly stable under change of aspect. Extracting our features does not require multiple views. Our features make it possible to learn a discriminative model of an activity in one view and to spot that activity in another view, for which one might possess no labeled examples at all. Our construction uses labeled examples to build activity models, and unlabeled but corresponding examples to build an implicit model of how appearance changes with aspect. We demonstrate our method on challenging sequences of real human motion, where discriminative methods built on appearance alone fail badly.

Copyright information

© 2008 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Farhadi, A., Tabrizi, M.K. (2008). Learning to Recognize Activities from the Wrong View Point. In: Forsyth, D., Torr, P., Zisserman, A. (eds) Computer Vision – ECCV 2008. ECCV 2008. Lecture Notes in Computer Science, vol 5302. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-88682-2_13

DOI: https://doi.org/10.1007/978-3-540-88682-2_13

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-88681-5

Online ISBN: 978-3-540-88682-2

eBook Packages: Computer Science, Computer Science (R0)