Abstract

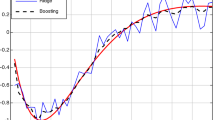

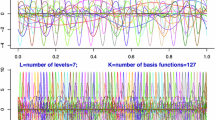

Boosting is a simple yet powerful modeling technique used in many machine learning and data mining applications. In this paper, we propose a novel scale-space based boosting framework that applies scale-space theory to choose the optimal regressors during the iterations of the boosting algorithm. In other words, the data is considered at a different resolution in each boosting iteration. Our framework chooses the weak regressors that best fit the data at the current resolution, and the resolution is increased as the iterations progress. The amount by which the resolution increases follows from wavelet decomposition methods. For regression modeling, we use logitboost update equations based on the first derivative of the loss function. We demonstrate the advantages of this scale-space based framework for regression problems and show results on several real-world regression datasets.
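The coarse-to-fine idea described above can be illustrated with a minimal sketch. It is not the authors' algorithm: it assumes one-dimensional inputs, a squared-error loss (so the first derivative of the loss reduces to the residual), a Gaussian kernel smoother as the weak regressor, and a bandwidth that is halved at every iteration as a stand-in for the dyadic scales of a wavelet decomposition. The names `gaussian_smoother`, `scale_space_boost`, `sigma0`, and `shrinkage` are hypothetical and introduced only for illustration.

```python
import numpy as np


def gaussian_smoother(x_train, r_train, sigma):
    """Weak regressor (assumed form): Gaussian-kernel weighted average of residuals."""
    def predict(x):
        x = np.asarray(x, dtype=float)
        # Squared distances between every query point and every training point.
        d2 = (x[:, None] - x_train[None, :]) ** 2
        w = np.exp(-d2 / (2.0 * sigma ** 2))
        # Normalize the weights per query point; guard against division by zero.
        return (w @ r_train) / np.maximum(w.sum(axis=1), 1e-12)
    return predict


def scale_space_boost(x, y, n_iter=6, sigma0=None, shrinkage=0.5):
    """Boost kernel smoothers from a coarse scale (large sigma) to a fine one."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    if sigma0 is None:
        # Assumption: start with a bandwidth of half the input range.
        sigma0 = (x.max() - x.min()) / 2.0
    f0 = y.mean()                      # constant initial model
    pred = np.full_like(y, f0)
    learners = []
    sigma = sigma0
    for _ in range(n_iter):
        # Negative first derivative of 0.5 * (y - F)^2 with respect to F.
        residual = y - pred
        h = gaussian_smoother(x, residual, sigma)
        learners.append(h)
        pred = pred + shrinkage * h(x)
        sigma /= 2.0                   # halve the scale: higher resolution next round

    def model(x_new):
        x_new = np.asarray(x_new, dtype=float)
        out = np.full(x_new.shape, f0)
        for h in learners:
            out = out + shrinkage * h(x_new)
        return out

    return model
```

Early iterations in this sketch capture the broad trend of the data, and later iterations, with smaller bandwidths, fit progressively finer detail in what remains. Calling `scale_space_boost(x, y)` returns a function that can be evaluated at new one-dimensional inputs.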

Copyright information

© 2007 Springer-Verlag Berlin Heidelberg

About this paper

Cite this paper

Park, JH., Reddy, C.K. (2007). Scale-Space Based Weak Regressors for Boosting. In: Kok, J.N., Koronacki, J., Mantaras, R.L.d., Matwin, S., Mladenič, D., Skowron, A. (eds) Machine Learning: ECML 2007. ECML 2007. Lecture Notes in Computer Science, vol 4701. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-74958-5_66

DOI: https://doi.org/10.1007/978-3-540-74958-5_66

Publisher Name: Springer, Berlin, Heidelberg

Print ISBN: 978-3-540-74957-8

Online ISBN: 978-3-540-74958-5

eBook Packages: Computer Science, Computer Science (R0)