Abstract

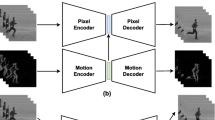

Videos exhibit a wide variety of motion patterns, which can be modeled through the dynamics between adjacent frames. Previous methods based on linear dynamic systems can model dynamic textures but have limited capacity to represent sophisticated nonlinear dynamics. Inspired by the nonlinear expressive power of deep autoencoders, we propose a novel model named the dynencoder, which has an autoencoder at the bottom and a variant of it, named the dynpredictor, at the top. It generates hidden states from raw pixel inputs via the autoencoder and then encodes the dynamics of state transitions over time via the dynpredictor. A deep dynencoder can be constructed with a proper stacking strategy and trained by layer-wise pre-training followed by joint fine-tuning. Experiments verify that our model can describe sophisticated video dynamics and synthesize endless video texture sequences with high visual quality. We also design classification and clustering methods based on our model and demonstrate their efficacy on traffic scene classification and motion segmentation.
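To make the data flow described in the abstract concrete, the following is a minimal sketch of a single-layer dynencoder forward pass: an encoder maps a frame to a hidden state, a dynpredictor maps that state to the next state, and a decoder maps the predicted state back to pixels. Everything specific here is an assumption for illustration and is not taken from the paper: the class name `TinyDynencoder`, the sigmoid activations, the linear decoder, the layer sizes, and the random (untrained) weights. Training by layer-wise pre-training and joint fine-tuning is not shown.

```python
import math
import random


def make_matrix(rows, cols, rng, scale=0.1):
    """Small random weight matrix stored as a list of rows."""
    return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]


def affine(W, b, v):
    """Compute W v + b for a list-of-rows matrix W and vector v."""
    return [sum(w * x for w, x in zip(row, v)) + bi for row, bi in zip(W, b)]


def sigmoid_layer(W, b, v):
    """Affine map followed by an element-wise sigmoid (assumed nonlinearity)."""
    return [1.0 / (1.0 + math.exp(-z)) for z in affine(W, b, v)]


class TinyDynencoder:
    """Hypothetical illustration: encoder -> dynpredictor -> decoder."""

    def __init__(self, n_pixels, n_hidden, seed=0):
        rng = random.Random(seed)
        # Autoencoder part: pixels -> hidden state, hidden state -> pixels.
        self.W_enc = make_matrix(n_hidden, n_pixels, rng)
        self.b_enc = [0.0] * n_hidden
        self.W_dec = make_matrix(n_pixels, n_hidden, rng)
        self.b_dec = [0.0] * n_pixels
        # Dynpredictor part: hidden state at time t -> hidden state at t + 1.
        self.W_dyn = make_matrix(n_hidden, n_hidden, rng)
        self.b_dyn = [0.0] * n_hidden

    def encode(self, frame):
        return sigmoid_layer(self.W_enc, self.b_enc, frame)

    def predict_state(self, hidden):
        return sigmoid_layer(self.W_dyn, self.b_dyn, hidden)

    def decode(self, hidden):
        # Linear decoder, an assumption made to keep the sketch short.
        return affine(self.W_dec, self.b_dec, hidden)

    def synthesize(self, first_frame, n_frames):
        """Roll the state forward n_frames times, decoding each predicted state."""
        hidden = self.encode(first_frame)
        frames = []
        for _ in range(n_frames):
            hidden = self.predict_state(hidden)
            frames.append(self.decode(hidden))
        return frames


model = TinyDynencoder(n_pixels=16, n_hidden=4)
frames = model.synthesize([0.5] * 16, n_frames=5)
print(len(frames), len(frames[0]))
```

Because the predicted state is fed back into the dynpredictor, the loop in `synthesize` can in principle run for any number of steps, which is the mechanism behind synthesizing sequences of arbitrary length. With untrained weights the output is meaningless; the sketch only shows how the three maps compose.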

Copyright information

© 2014 Springer International Publishing Switzerland

About this paper

Cite this paper

Yan, X., Chang, H., Shan, S., Chen, X. (2014). Modeling Video Dynamics with Deep Dynencoder. In: Fleet, D., Pajdla, T., Schiele, B., Tuytelaars, T. (eds) Computer Vision – ECCV 2014. ECCV 2014. Lecture Notes in Computer Science, vol 8692. Springer, Cham. https://doi.org/10.1007/978-3-319-10593-2_15

DOI: https://doi.org/10.1007/978-3-319-10593-2_15

Publisher Name: Springer, Cham

Print ISBN: 978-3-319-10592-5

Online ISBN: 978-3-319-10593-2

eBook Packages: Computer Science, Computer Science (R0)