Abstract

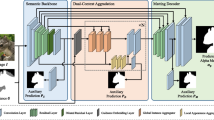

Automatic human matting is in high demand for many real-world applications. We investigate recent human matting methods and show that their common failure cases occur when semantic human segmentation fails, which indicates that semantic understanding is crucial for robust human matting. Based on this observation, we develop a fast yet accurate human matting framework named Semantic Guided Human Matting (SGHM). It builds on a semantic human segmentation network and adds a lightweight matting module at only marginal computational cost. Unlike previous work, our framework is data efficient: it requires only a small amount of matting ground truth to learn to estimate high-quality object mattes. Our experiments show that, trained with merely 200 matting images, our method generalizes well to real-world datasets and outperforms recent methods on multiple benchmarks while remaining efficient. Given the prohibitive labeling cost of matting data and the wide availability of segmentation data, our method is a practical and effective solution for human matting. Source code is available at https://github.com/cxgincsu/SemanticGuidedHumanMatting.
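
For readers new to the distinction the abstract draws between segmentation and matting, the following minimal sketch may help. It is not taken from the SGHM code. It only illustrates the standard compositing relation I = alpha * F + (1 - alpha) * B, where a matte assigns each pixel a fractional alpha while a segmentation mask assigns a hard 0 or 1. The function names and colour values are hypothetical, chosen for illustration.

```python
def composite_pixel(alpha, fg, bg):
    """Blend one RGB pixel with the standard relation I = alpha*F + (1-alpha)*B."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    return tuple(alpha * f + (1.0 - alpha) * b for f, b in zip(fg, bg))


def binarize(alpha, threshold=0.5):
    """Collapse a soft matte value to a hard segmentation label (0 or 1)."""
    return 1.0 if alpha >= threshold else 0.0


# Hypothetical colours for a thin strand of hair seen against the sky.
hair = (0.2, 0.1, 0.0)
sky = (0.4, 0.6, 1.0)

# A matte keeps the partial coverage of the strand (alpha = 0.25).
soft = composite_pixel(0.25, hair, sky)

# A binary mask rounds the same pixel to pure background and loses the strand.
hard = composite_pixel(binarize(0.25), hair, sky)
```

In this toy case the soft composite retains a quarter of the hair colour, whereas the binarized version returns the background unchanged. This is why fine structures call for a matte on top of a segmentation result.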

X. Chen and Y. Zhu—These authors contributed equally to this work.

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Chen, X. et al. (2023). Robust Human Matting via Semantic Guidance. In: Wang, L., Gall, J., Chin, TJ., Sato, I., Chellappa, R. (eds) Computer Vision – ACCV 2022. ACCV 2022. Lecture Notes in Computer Science, vol 13842. Springer, Cham. https://doi.org/10.1007/978-3-031-26284-5_37

DOI: https://doi.org/10.1007/978-3-031-26284-5_37

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-26283-8

Online ISBN: 978-3-031-26284-5

eBook Packages: Computer Science (R0)