Abstract

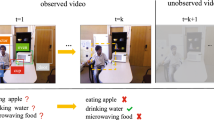

Events in natural videos typically arise from spatio-temporal interactions between actors and objects and involve multiple co-occurring activities and object classes. To capture this rich visual and semantic context, we propose using two graphs: (1) an attributed spatio-temporal visual graph whose nodes correspond to actors and objects and whose edges encode different types of interactions, and (2) a symbolic graph that models semantic relationships. We further propose a graph neural network for refining the representations of actors, objects and their interactions on the resulting hybrid graph. Our model goes beyond current approaches that assume nodes and edges are of the same type, operate on graphs with fixed edge weights and do not use a symbolic graph. In particular, our framework: a) has specialized attention-based message functions for different node and edge types; b) uses visual edge features; c) integrates visual evidence with label relationships; and d) performs global reasoning in the semantic space. Experiments on challenging video understanding tasks, such as temporal action localization on the Charades dataset, show that the proposed method leads to state-of-the-art performance.
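
The abstract describes type-specialized, attention-based message functions that also use visual edge features. Below is a minimal pure-Python sketch of one way such a layer could work. It rests on several assumptions. There is one weight matrix and one attention vector per (source type, edge type, target type) triple. Each message is computed from the source feature concatenated with an edge feature. Incoming attention logits are normalized with a per-node softmax, and nodes are updated with a residual ReLU. These choices are illustrative, not the paper's exact formulation. All names are hypothetical, including `typed_attention_layer`, `params`, the `"spatial"`/`"temporal"` edge types and the toy features. The symbolic graph and global semantic reasoning are not shown.

```python
import math
import random
from collections import defaultdict

# Hypothetical sketch of heterogeneous attention message passing;
# not the authors' implementation.

def matvec(W, x):
    # W is a list of rows; returns W @ x as a list.
    return [sum(w * v for w, v in zip(row, x)) for row in W]

def softmax(scores):
    m = max(scores)  # subtract max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [e / z for e in exps]

def typed_attention_layer(nodes, edges, params):
    """One round of typed, attention-weighted message passing.

    nodes:  {node_id: (node_type, feature list of length d)}
    edges:  [(src, dst, edge_type, edge feature list of length d_e)]
    params: {(src_type, edge_type, dst_type): {"W": d x (d + d_e), "a": length d}}
    """
    incoming = defaultdict(list)  # dst -> [(attention logit, message)]
    for src, dst, etype, efeat in edges:
        src_type, h_src = nodes[src]
        dst_type, h_dst = nodes[dst]
        p = params[(src_type, etype, dst_type)]  # type-specific parameters
        # Message from source features concatenated with the visual edge feature.
        msg = matvec(p["W"], h_src + efeat)
        # Attention logit: weighted compatibility of message and receiver.
        score = sum(a * m * x for a, m, x in zip(p["a"], msg, h_dst))
        incoming[dst].append((score, msg))
    out = {}
    for nid, (_, h) in nodes.items():
        if not incoming[nid]:
            out[nid] = list(h)  # no neighbors: keep the feature unchanged
            continue
        weights = softmax([s for s, _ in incoming[nid]])
        agg = [0.0] * len(h)
        for w, (_, msg) in zip(weights, incoming[nid]):
            agg = [acc + w * m for acc, m in zip(agg, msg)]
        # Residual update with a ReLU on the aggregated message.
        out[nid] = [x + max(0.0, g) for x, g in zip(h, agg)]
    return out

# Toy usage: one actor seen in two frames and one object (all values made up).
d, d_e = 2, 1

def make_params(rng):
    return {"W": [[rng.uniform(-1, 1) for _ in range(d + d_e)] for _ in range(d)],
            "a": [rng.uniform(-1, 1) for _ in range(d)]}

rng = random.Random(0)
params = {
    ("actor", "spatial", "object"): make_params(rng),
    ("object", "spatial", "actor"): make_params(rng),
    ("actor", "temporal", "actor"): make_params(rng),
}
nodes = {
    "person_t0": ("actor", [1.0, 0.0]),
    "person_t1": ("actor", [0.9, 0.1]),
    "cup_t0": ("object", [0.0, 1.0]),
}
edges = [
    ("person_t0", "cup_t0", "spatial", [0.5]),    # e.g. a box-overlap score
    ("cup_t0", "person_t0", "spatial", [0.5]),
    ("person_t0", "person_t1", "temporal", [1.0]),
]
updated = typed_attention_layer(nodes, edges, params)
```

Each parameter set is keyed by the full type triple, so actor-to-object and actor-to-actor messages are computed with different weights. A model that assumes homogeneous nodes and edges would instead share a single `W` and `a` across every edge.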

Acknowledgements

The authors thank Carolina Pacheco Oñate, Paris Giampouras and the anonymous reviewers for their valuable comments. This research was supported by the IARPA DIVA program via contract number D17PC00345.

Copyright information

© 2020 Springer Nature Switzerland AG

About this paper

Cite this paper

Mavroudi, E., Haro, B.B., Vidal, R. (2020). Representation Learning on Visual-Symbolic Graphs for Video Understanding. In: Vedaldi, A., Bischof, H., Brox, T., Frahm, JM. (eds) Computer Vision – ECCV 2020. ECCV 2020. Lecture Notes in Computer Science, vol 12374. Springer, Cham. https://doi.org/10.1007/978-3-030-58526-6_5

DOI: https://doi.org/10.1007/978-3-030-58526-6_5

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-58525-9

Online ISBN: 978-3-030-58526-6

eBook Packages: Computer Science, Computer Science (R0)